Huiqun Yu

A Hybrid Knowledge-Grounded Framework for Safety and Traceability in Prescription Verification

Mar 11, 2026Abstract:Medication errors pose a significant threat to patient safety, making pharmacist verification (PV) a critical, yet heavily burdened, final safeguard. The direct application of Large Language Models (LLMs) to this zero-tolerance domain is untenable due to their inherent factual unreliability, lack of traceability, and weakness in complex reasoning. To address these challenges, we introduce PharmGraph-Auditor, a novel system designed for safe and evidence-grounded prescription auditing. The core of our system is a trustworthy Hybrid Pharmaceutical Knowledge Base (HPKB), implemented under the Virtual Knowledge Graph (VKG) paradigm. This architecture strategically unifies a relational component for set constraint satisfaction and a graph component for topological reasoning via a rigorous mapping layer. To construct this HPKB, we propose the Iterative Schema Refinement (ISR) algorithm, a framework that enables the co-evolution of both graph and relational schemas from medical texts. For auditing, we introduce the KB-grounded Chain of Verification (CoV), a new reasoning paradigm that transforms the LLM from an unreliable generator into a transparent reasoning engine. CoV decomposes the audit task into a sequence of verifiable queries against the HPKB, generating hybrid query plans to retrieve evidence from the most appropriate data store. Experimental results demonstrate robust knowledge extraction capabilities and show promises of using PharmGraph-Auditor to enable pharmacists to achieve safer and faster prescription verification.

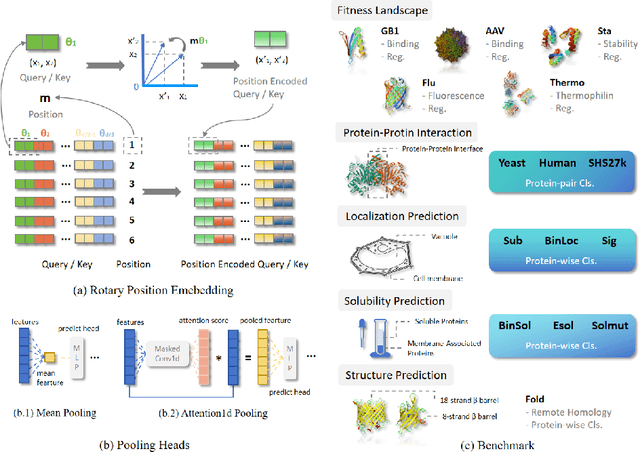

VenusX: Unlocking Fine-Grained Functional Understanding of Proteins

May 17, 2025

Abstract:Deep learning models have driven significant progress in predicting protein function and interactions at the protein level. While these advancements have been invaluable for many biological applications such as enzyme engineering and function annotation, a more detailed perspective is essential for understanding protein functional mechanisms and evaluating the biological knowledge captured by models. To address this demand, we introduce VenusX, the first large-scale benchmark for fine-grained functional annotation and function-based protein pairing at the residue, fragment, and domain levels. VenusX comprises three major task categories across six types of annotations, including residue-level binary classification, fragment-level multi-class classification, and pairwise functional similarity scoring for identifying critical active sites, binding sites, conserved sites, motifs, domains, and epitopes. The benchmark features over 878,000 samples curated from major open-source databases such as InterPro, BioLiP, and SAbDab. By providing mixed-family and cross-family splits at three sequence identity thresholds, our benchmark enables a comprehensive assessment of model performance on both in-distribution and out-of-distribution scenarios. For baseline evaluation, we assess a diverse set of popular and open-source models, including pre-trained protein language models, sequence-structure hybrids, structure-based methods, and alignment-based techniques. Their performance is reported across all benchmark datasets and evaluation settings using multiple metrics, offering a thorough comparison and a strong foundation for future research. Code and data are publicly available at https://github.com/ai4protein/VenusX.

Residual Learning Inspired Crossover Operator and Strategy Enhancements for Evolutionary Multitasking

Mar 27, 2025

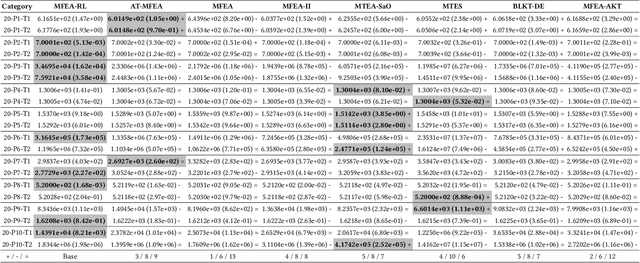

Abstract:In evolutionary multitasking, strategies such as crossover operators and skill factor assignment are critical for effective knowledge transfer. Existing improvements to crossover operators primarily focus on low-dimensional variable combinations, such as arithmetic crossover or partially mapped crossover, which are insufficient for modeling complex high-dimensional interactions.Moreover, static or semi-dynamic crossover strategies fail to adapt to the dynamic dependencies among tasks. In addition, current Multifactorial Evolutionary Algorithm frameworks often rely on fixed skill factor assignment strategies, lacking flexibility. To address these limitations, this paper proposes the Multifactorial Evolutionary Algorithm-Residual Learning (MFEA-RL) method based on residual learning. The method employs a Very Deep Super-Resolution (VDSR) model to generate high-dimensional residual representations of individuals, enhancing the modeling of complex relationships within dimensions. A ResNet-based mechanism dynamically assigns skill factors to improve task adaptability, while a random mapping mechanism efficiently performs crossover operations and mitigates the risk of negative transfer. Theoretical analysis and experimental results show that MFEA-RL outperforms state-of-the-art multitasking algorithms. It excels in both convergence and adaptability on standard evolutionary multitasking benchmarks, including CEC2017-MTSO and WCCI2020-MTSO. Additionally, its effectiveness is validated through a real-world application scenario.

VenusFactory: A Unified Platform for Protein Engineering Data Retrieval and Language Model Fine-Tuning

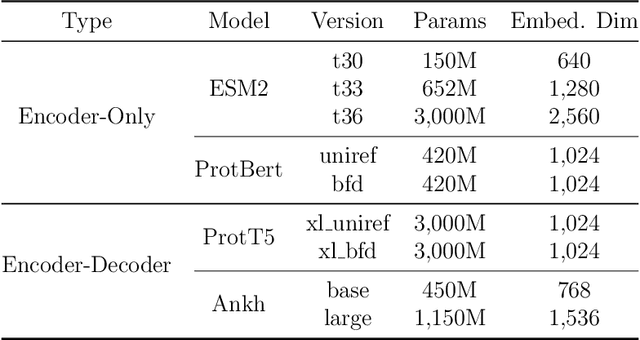

Mar 19, 2025Abstract:Natural language processing (NLP) has significantly influenced scientific domains beyond human language, including protein engineering, where pre-trained protein language models (PLMs) have demonstrated remarkable success. However, interdisciplinary adoption remains limited due to challenges in data collection, task benchmarking, and application. This work presents VenusFactory, a versatile engine that integrates biological data retrieval, standardized task benchmarking, and modular fine-tuning of PLMs. VenusFactory supports both computer science and biology communities with choices of both a command-line execution and a Gradio-based no-code interface, integrating $40+$ protein-related datasets and $40+$ popular PLMs. All implementations are open-sourced on https://github.com/tyang816/VenusFactory.

CPE-Pro: A Structure-Sensitive Deep Learning Model for Protein Representation and Origin Evaluation

Oct 21, 2024

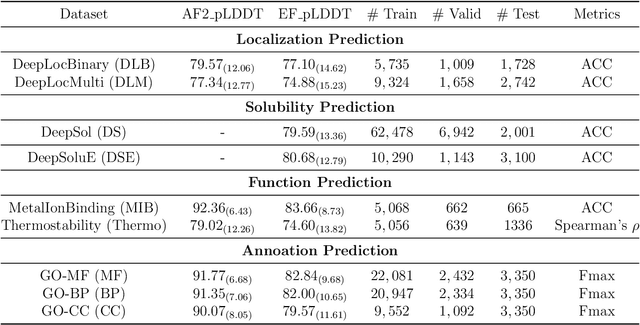

Abstract:Protein structures are important for understanding their functions and interactions. Currently, many protein structure prediction methods are enriching the structure database. Discriminating the origin of structures is crucial for distinguishing between experimentally resolved and computationally predicted structures, evaluating the reliability of prediction methods, and guiding downstream biological studies. Building on works in structure prediction, We developed a structure-sensitive supervised deep learning model, Crystal vs Predicted Evaluator for Protein Structure (CPE-Pro), to represent and discriminate the origin of protein structures. CPE-Pro learns the structural information of proteins and captures inter-structural differences to achieve accurate traceability on four data classes, and is expected to be extended to more. Simultaneously, we utilized Foldseek to encode protein structures into "structure-sequence" and trained a protein Structural Sequence Language Model, SSLM. Preliminary experiments demonstrated that, compared to large-scale protein language models pre-trained on vast amounts of amino acid sequences, the "structure-sequences" enable the language model to learn more informative protein features, enhancing and optimizing structural representations. We have provided the code, model weights, and all related materials on https://github.com/GouWenrui/CPE-Pro-main.git.

A PLMs based protein retrieval framework

Jul 16, 2024

Abstract:Protein retrieval, which targets the deconstruction of the relationship between sequences, structures and functions, empowers the advancing of biology. Basic Local Alignment Search Tool (BLAST), a sequence-similarity-based algorithm, has proved the efficiency of this field. Despite the existing tools for protein retrieval, they prioritize sequence similarity and probably overlook proteins that are dissimilar but share homology or functionality. In order to tackle this problem, we propose a novel protein retrieval framework that mitigates the bias towards sequence similarity. Our framework initiatively harnesses protein language models (PLMs) to embed protein sequences within a high-dimensional feature space, thereby enhancing the representation capacity for subsequent analysis. Subsequently, an accelerated indexed vector database is constructed to facilitate expedited access and retrieval of dense vectors. Extensive experiments demonstrate that our framework can equally retrieve both similar and dissimilar proteins. Moreover, this approach enables the identification of proteins that conventional methods fail to uncover. This framework will effectively assist in protein mining and empower the development of biology.

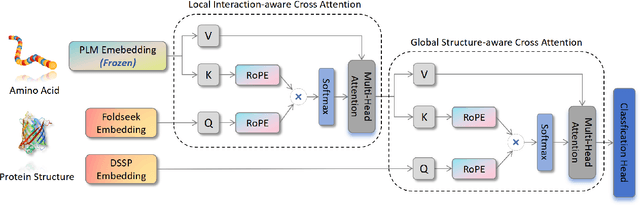

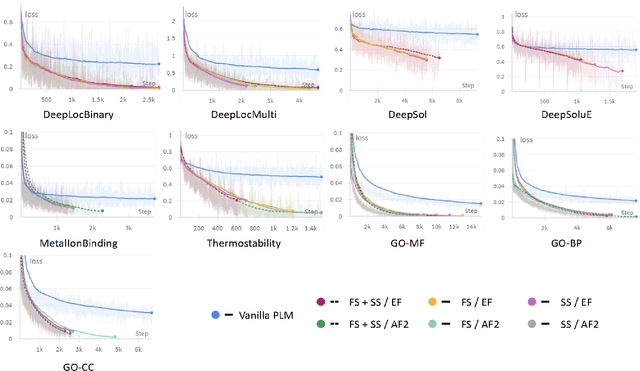

Simple, Efficient and Scalable Structure-aware Adapter Boosts Protein Language Models

Apr 23, 2024

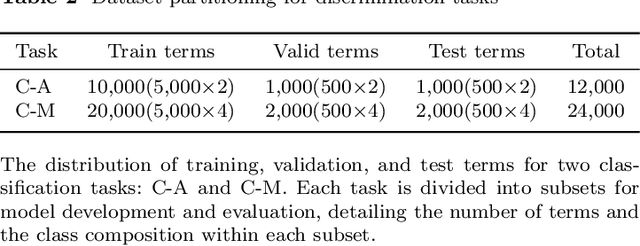

Abstract:Fine-tuning Pre-trained protein language models (PLMs) has emerged as a prominent strategy for enhancing downstream prediction tasks, often outperforming traditional supervised learning approaches. As a widely applied powerful technique in natural language processing, employing Parameter-Efficient Fine-Tuning techniques could potentially enhance the performance of PLMs. However, the direct transfer to life science tasks is non-trivial due to the different training strategies and data forms. To address this gap, we introduce SES-Adapter, a simple, efficient, and scalable adapter method for enhancing the representation learning of PLMs. SES-Adapter incorporates PLM embeddings with structural sequence embeddings to create structure-aware representations. We show that the proposed method is compatible with different PLM architectures and across diverse tasks. Extensive evaluations are conducted on 2 types of folding structures with notable quality differences, 9 state-of-the-art baselines, and 9 benchmark datasets across distinct downstream tasks. Results show that compared to vanilla PLMs, SES-Adapter improves downstream task performance by a maximum of 11% and an average of 3%, with significantly accelerated training speed by a maximum of 1034% and an average of 362%, the convergence rate is also improved by approximately 2 times. Moreover, positive optimization is observed even with low-quality predicted structures. The source code for SES-Adapter is available at https://github.com/tyang816/SES-Adapter.

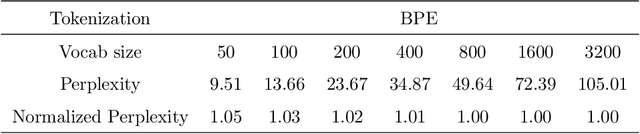

PETA: Evaluating the Impact of Protein Transfer Learning with Sub-word Tokenization on Downstream Applications

Oct 26, 2023

Abstract:Large protein language models are adept at capturing the underlying evolutionary information in primary structures, offering significant practical value for protein engineering. Compared to natural language models, protein amino acid sequences have a smaller data volume and a limited combinatorial space. Choosing an appropriate vocabulary size to optimize the pre-trained model is a pivotal issue. Moreover, despite the wealth of benchmarks and studies in the natural language community, there remains a lack of a comprehensive benchmark for systematically evaluating protein language model quality. Given these challenges, PETA trained language models with 14 different vocabulary sizes under three tokenization methods. It conducted thousands of tests on 33 diverse downstream datasets to assess the models' transfer learning capabilities, incorporating two classification heads and three random seeds to mitigate potential biases. Extensive experiments indicate that vocabulary sizes between 50 and 200 optimize the model, whereas sizes exceeding 800 detrimentally affect the model's representational performance. Our code, model weights and datasets are available at https://github.com/ginnm/ProteinPretraining.

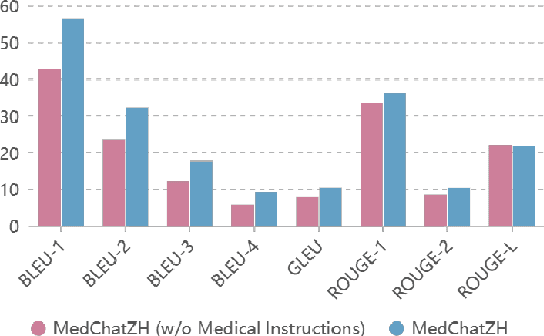

MedChatZH: a Better Medical Adviser Learns from Better Instructions

Sep 03, 2023

Abstract:Generative large language models (LLMs) have shown great success in various applications, including question-answering (QA) and dialogue systems. However, in specialized domains like traditional Chinese medical QA, these models may perform unsatisfactorily without fine-tuning on domain-specific datasets. To address this, we introduce MedChatZH, a dialogue model designed specifically for traditional Chinese medical QA. Our model is pre-trained on Chinese traditional medical books and fine-tuned with a carefully curated medical instruction dataset. It outperforms several solid baselines on a real-world medical dialogue dataset. We release our model, code, and dataset on https://github.com/tyang816/MedChatZH to facilitate further research in the domain of traditional Chinese medicine and LLMs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge