Haiou Zhang

Fully-analog array signal processor using 3D aperture engineering

Mar 01, 2026

Abstract: The rapid progress in radar and communication places increasing demands on low-latency and energy-efficient array signal processing methods. An emerging direction is to construct analog computing processors that directly process electromagnetic (EM) waves. However, existing methods are constrained by the 2D physical aperture, an imprecise design process, and an inefficient computing architecture, resulting in limited sensing resolution and a limited number of separable sources. Here, we present a fully-analog array signal processor (FASP) that uses a 3D aperture engineering framework to perform super-resolution direction-of-arrival estimation, source number estimation, and multi-channel source separation in parallel for both coherent and incoherent sources. 3D aperture engineering is realized by constructing deep cascaded metasurface layers, so that the diffractive propagation of obliquely incident fields can be modulated layer by layer and piecewise encoded, allowing EM fields to be perceived far beyond the physical aperture limit. Multi-dimensional synthetic aperture (MSA) training is developed to characterize the metasurface modulation and optimize the neuro-augmented physical model, extending the system aperture and generating a high-order nonlinear angular response. FASP orthogonalizes the array response vectors of communication channels and maps them onto antenna detectors in the analog domain. An $N$-layer FASP can achieve ~$N$ times higher angular resolution than the Rayleigh diffraction limit. Experiments further validate source number estimation and independent channel separation for 10 targets, suppressing radar jamming signals by ~20 dB and enhancing channel communication capacity by 13.5 times at 36-41 GHz. FASP heralds a paradigm shift in signal processing for super-resolution optics, advanced radar, and 6G communications.
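The resolution claim can be made concrete with the textbook small-angle diffraction limit, $\theta \approx k\lambda/D$. The sketch below is illustrative only: the aperture size `D`, the layer count, and the assumption that an $N$-layer FASP improves resolution by exactly a factor of $N$ are hypothetical, and the helper names (`wavelength`, `rayleigh_limit_rad`, `n_layer_resolution_rad`) are not from the paper.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def wavelength(freq_hz: float) -> float:
    """Free-space wavelength in metres for a frequency in Hz."""
    return C / freq_hz


def rayleigh_limit_rad(freq_hz: float, aperture_m: float, k: float = 1.0) -> float:
    """Small-angle diffraction-limited angular resolution, theta ~ k * lambda / D.

    k = 1.0 matches the first null of a uniformly illuminated 1D aperture;
    k = 1.22 is the familiar Rayleigh factor for a circular aperture.
    """
    return k * wavelength(freq_hz) / aperture_m


def n_layer_resolution_rad(freq_hz: float, aperture_m: float, n_layers: int,
                           k: float = 1.0) -> float:
    """Idealised ~N-fold improvement (assumption: exact 1/N scaling)."""
    return rayleigh_limit_rad(freq_hz, aperture_m, k) / n_layers


if __name__ == "__main__":
    D = 0.1  # hypothetical 10 cm physical aperture
    for f in (36e9, 41e9):  # band edges quoted in the abstract
        base = math.degrees(rayleigh_limit_rad(f, D))
        ideal = math.degrees(n_layer_resolution_rad(f, D, n_layers=5))
        print(f"{f / 1e9:.0f} GHz: lambda = {wavelength(f) * 1e3:.2f} mm, "
              f"diffraction limit ~ {base:.2f} deg, 5-layer ideal ~ {ideal:.2f} deg")
```

Because $\lambda$ shrinks as frequency rises, the baseline limit is slightly finer at 41 GHz than at 36 GHz for the same aperture.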

Super-resolution imaging using super-oscillatory diffractive neural networks

Jun 27, 2024

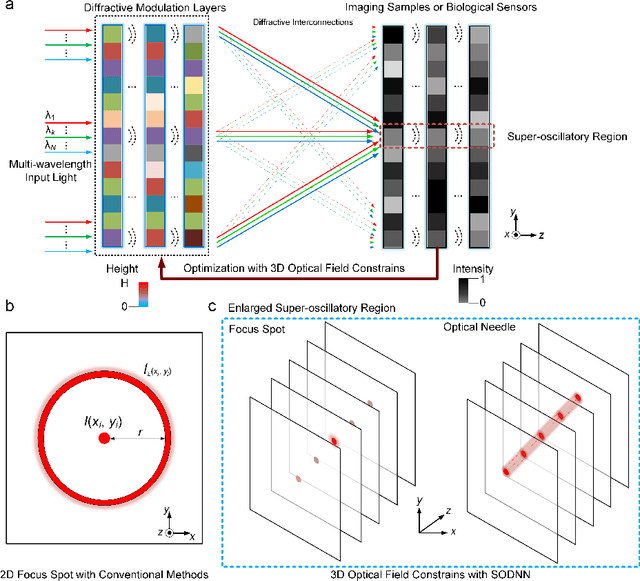

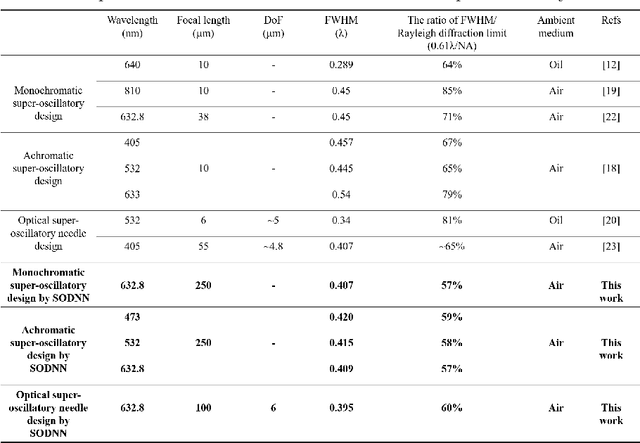

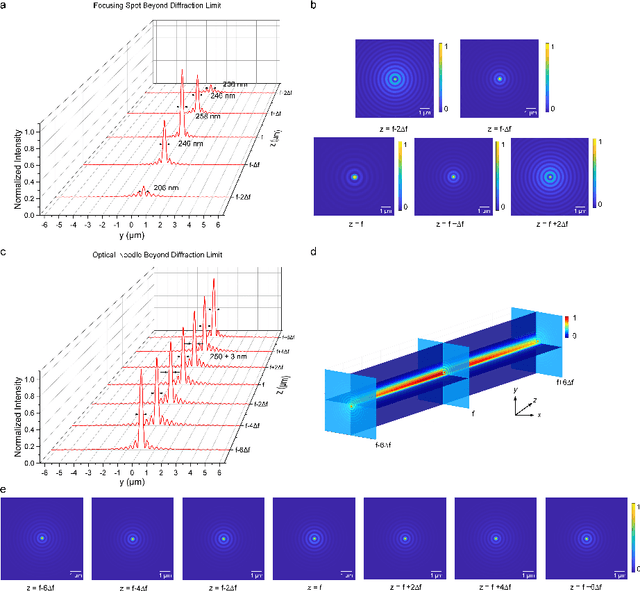

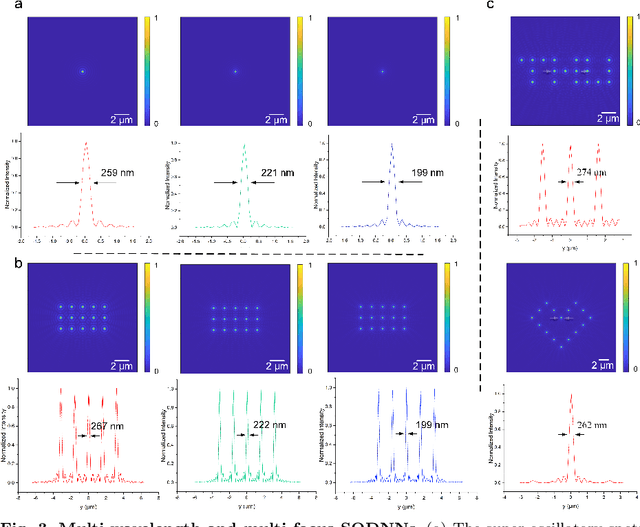

Abstract: Optical super-oscillation enables far-field super-resolution imaging beyond the diffraction limit. However, existing super-oscillatory lenses for spatial super-resolution imaging systems still face critical performance limitations due to the lack of advanced design methods and the limited design degrees of freedom. Here, we propose an optical super-oscillatory diffractive neural network (SODNN) that achieves super-resolved spatial resolution for imaging beyond the diffraction limit, with superior performance over existing methods. SODNN is constructed using diffractive layers to implement optical interconnections and imaging samples or biological sensors to implement nonlinearity; it modulates the incident optical field to create optical super-oscillation effects in 3D space and generate super-resolved focal spots. By optimizing the diffractive layers with 3D optical field constraints at an incident wavelength of $\lambda$, we achieved a super-oscillatory spot with a full width at half maximum (FWHM) of 0.407$\lambda$ at a far-field distance of over 400$\lambda$, without side lobes over the field of view and with a long depth of field of over 10$\lambda$. Furthermore, SODNN implements a multi-wavelength, multi-focus spot array that effectively avoids chromatic aberrations. Our work will inspire the development of intelligent optical instruments for applications in imaging, sensing, perception, and beyond.
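The 0.407$\lambda$ figure is a full width at half maximum. As a minimal sketch, assuming a sampled single-peaked intensity profile with positions expressed in units of $\lambda$, the FWHM can be measured by linearly interpolating the half-maximum crossings on either side of the peak. The function name `fwhm` and the Gaussian test profile are illustrative, not the authors' evaluation code.

```python
import numpy as np


def fwhm(x: np.ndarray, intensity: np.ndarray) -> float:
    """Full width at half maximum of a single-peaked 1D intensity profile.

    The two half-maximum crossings are located by linear interpolation
    between the samples that straddle the half-maximum level.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(intensity, dtype=float)
    peak = int(np.argmax(y))
    half = y[peak] / 2.0

    # Walk outward from the peak to the first sample below half maximum.
    left = peak
    while left > 0 and y[left] >= half:
        left -= 1
    right = peak
    while right < len(y) - 1 and y[right] >= half:
        right += 1
    if y[left] >= half or y[right] >= half:
        raise ValueError("profile does not drop below half maximum on both sides")

    # np.interp needs increasing sample values, so order each pair low -> high.
    x_left = np.interp(half, [y[left], y[left + 1]], [x[left], x[left + 1]])
    x_right = np.interp(half, [y[right], y[right - 1]], [x[right], x[right - 1]])
    return float(x_right - x_left)


if __name__ == "__main__":
    x = np.linspace(-2.0, 2.0, 4001)  # hypothetical grid, units of wavelength
    sigma = 0.2
    profile = np.exp(-x**2 / (2 * sigma**2))
    # Should be close to the analytic Gaussian FWHM 2*sqrt(2 ln 2)*sigma.
    print(fwhm(x, profile), 2 * np.sqrt(2 * np.log(2)) * sigma)
```

For a measured or simulated focal plane, the same function applies to a line cut through the spot center; side lobes do not affect it as long as they stay below half maximum.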

Dual adaptive training of photonic neural networks

Dec 09, 2022

Abstract: The photonic neural network (PNN) is a remarkable analog artificial intelligence (AI) accelerator that computes with photons instead of electrons, featuring low latency, high energy efficiency, and high parallelism. However, existing training approaches cannot address the extensive accumulation of systematic errors in large-scale PNNs, resulting in a significant decrease in model performance in physical systems. Here, we propose dual adaptive training (DAT), which allows the PNN model to adapt to substantial systematic errors and preserves its performance during deployment. By introducing systematic error prediction networks with task-similarity joint optimization, DAT achieves high-similarity mapping between the PNN numerical models and the physical systems, as well as highly accurate gradient calculations during dual backpropagation training. We validated the effectiveness of DAT using diffractive PNNs and interference-based PNNs on image classification tasks. DAT successfully trained large-scale PNNs under major systematic errors and preserved classification accuracies comparable to those of error-free systems. The results further demonstrated its superior performance over state-of-the-art in situ training approaches. DAT provides critical support for constructing large-scale PNNs with advanced architectures and can be generalized to other types of AI systems with analog computing errors.
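The idea of making a numerical PNN model track a physical system with systematic errors can be illustrated with a deliberately simplified toy. Assumptions: the photonic layer is a linear transfer matrix, the systematic error is an unknown additive matrix, and the error prediction network is replaced by a linear least-squares predictor fitted from measured input/output pairs. This is not DAT itself, which uses prediction networks, task-similarity joint optimization, and dual backpropagation; it only shows why a learned error model can close the gap between model and hardware.

```python
import numpy as np

rng = np.random.default_rng(0)
n_in, n_out, n_samples = 16, 8, 500

# Hypothetical numerical model of one photonic layer: a linear transfer matrix W.
W = rng.normal(size=(n_out, n_in))
# Hypothetical systematic error E that the numerical model does not know about.
E = 0.3 * rng.normal(size=(n_out, n_in))


def physical(x):
    """Stand-in for the hardware: the true transfer matrix is W + E."""
    return x @ (W + E).T


def model(x):
    """Error-free numerical model used for training."""
    return x @ W.T


# Measure input/output pairs on the "hardware", with small detector noise.
X = rng.normal(size=(n_samples, n_in))
Y_meas = physical(X) + 0.01 * rng.normal(size=(n_samples, n_out))

# Fit a linear error predictor G_T so that model(x) + x @ G_T approximates the hardware.
G_T, *_ = np.linalg.lstsq(X, Y_meas - model(X), rcond=None)


def corrected_model(x):
    """Numerical model plus the learned systematic-error prediction."""
    return model(x) + x @ G_T


X_test = rng.normal(size=(100, n_in))
err_before = np.mean((physical(X_test) - model(X_test)) ** 2)
err_after = np.mean((physical(X_test) - corrected_model(X_test)) ** 2)
print(f"model/hardware mismatch: before {err_before:.3e}, after {err_after:.3e}")
```

Gradients taken through `corrected_model` reflect the hardware's effective transfer matrix `W + E` rather than the nominal `W`, which is the property that matters when training through a physical system.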

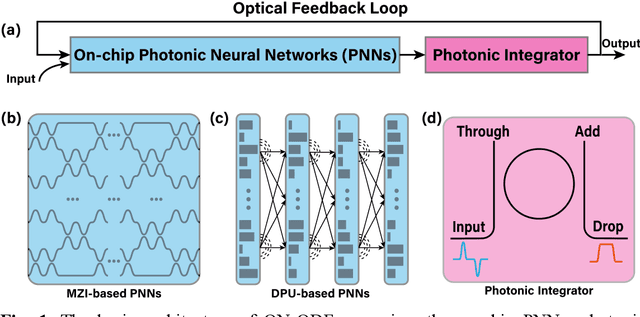

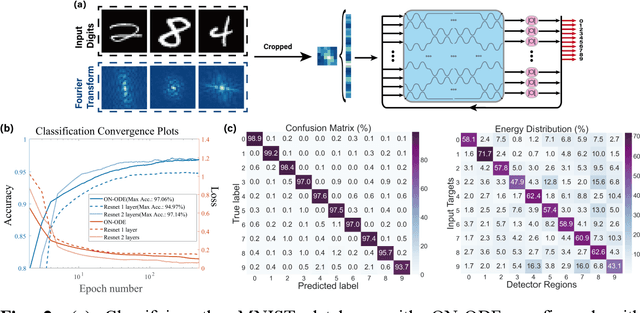

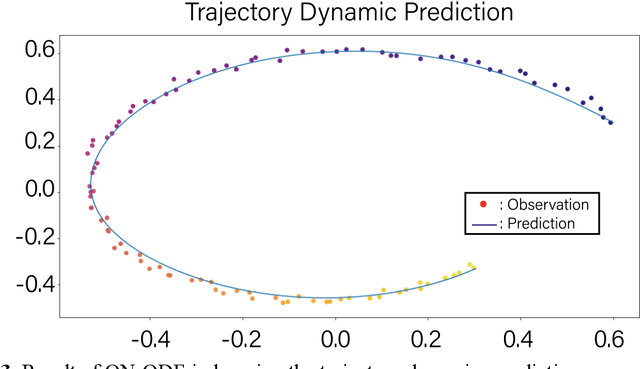

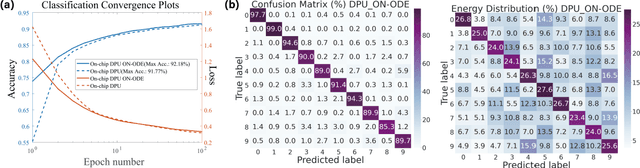

Optical Neural Ordinary Differential Equations

Sep 26, 2022

Abstract: Increasing the number of layers in on-chip photonic neural networks (PNNs) is essential to improving their model performance. However, successively cascading hidden layers results in larger integrated photonic chip areas. To address this issue, we propose the optical neural ordinary differential equations (ON-ODE) architecture, which parameterizes the continuous dynamics of hidden layers with optical ODE solvers. The ON-ODE comprises PNNs followed by a photonic integrator and an optical feedback loop, and can be configured to represent residual neural networks (ResNets) and recurrent neural networks with effectively reduced chip area occupancy. For the interference-based optoelectronic nonlinear hidden layer, numerical experiments demonstrate that a single-hidden-layer ON-ODE achieves approximately the same accuracy as a two-layer optical ResNet on image classification tasks. In addition, ON-ODE improves the model classification accuracy for the diffraction-based all-optical linear hidden layer. The time-dependent dynamics of ON-ODE are further applied to trajectory prediction with high accuracy.
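The link between ON-ODE and residual networks follows from discretizing $dh/dt = f(h)$: a forward-Euler step $h_{t+\Delta t} = h_t + \Delta t\, f(h_t)$ with $\Delta t = 1$ is exactly a ResNet block whose weights are shared across steps. The sketch below assumes forward Euler as the solver and `tanh` as a stand-in nonlinearity; in the ON-ODE the photonic integrator and optical feedback loop take the place of the software loop, and the function names here are hypothetical.

```python
import numpy as np


def hidden_dynamics(h, W, b):
    """f(h) for one shared hidden layer: a linear map plus a nonlinearity.
    tanh is a stand-in (assumption) for the optoelectronic nonlinearity."""
    return np.tanh(W @ h + b)


def residual_block(h, W, b):
    """One ResNet block with an identity shortcut: h + f(h)."""
    return h + hidden_dynamics(h, W, b)


def euler_odeint(h0, W, b, t_end=1.0, steps=10):
    """Forward-Euler solution of dh/dt = f(h) from t = 0 to t_end.
    The same layer (W, b) is reused at every step, as a feedback loop would."""
    h = np.array(h0, dtype=float)
    dt = t_end / steps
    for _ in range(steps):
        h = h + dt * hidden_dynamics(h, W, b)
    return h


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    d = 4
    W = 0.5 * rng.normal(size=(d, d))
    b = rng.normal(size=d)
    h0 = rng.normal(size=d)
    # Two Euler steps with dt = 1 reproduce a two-block ResNet with shared weights.
    print(euler_odeint(h0, W, b, t_end=2.0, steps=2))
    print(residual_block(residual_block(h0, W, b), W, b))
    # Smaller steps approximate the continuous-depth solution on the same interval.
    print(euler_odeint(h0, W, b, t_end=2.0, steps=200))
```

The chip-area saving comes from the fact that depth becomes integration time: one physical layer is evaluated repeatedly instead of being duplicated.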

Multi-Perspective Fusion Network for Commonsense Reading Comprehension

Jan 08, 2019

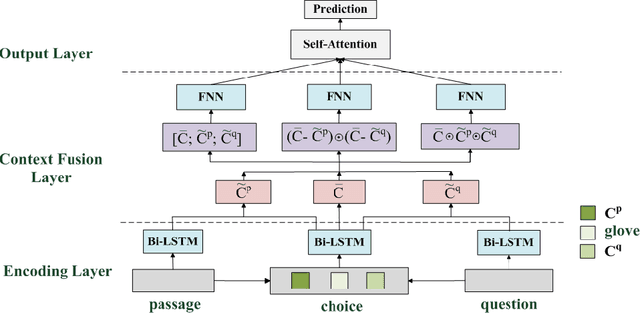

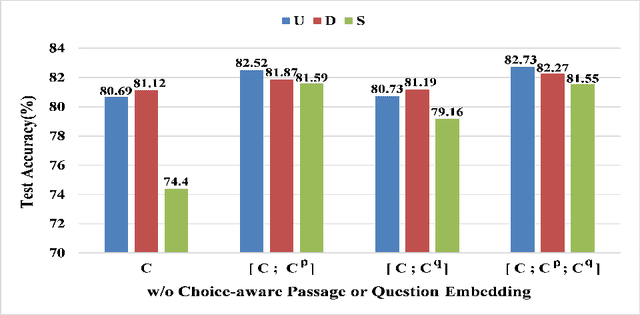

Abstract: Commonsense Reading Comprehension (CRC) is a highly challenging task that aims to choose the correct answer to a question about a narrative passage, which may require commonsense knowledge inference. Most existing approaches fuse the interaction information of the choice, passage, and question only through simple combination from a *union* perspective, which lacks deeper comparison information. Instead, we propose a Multi-Perspective Fusion Network (MPFN), which extends the single fusion method with multiple perspectives by introducing *difference* and *similarity* fusion. More comprehensive and accurate information can be captured through the three types of fusion. We design several groups of experiments on the MCScript dataset \cite{Ostermann:LREC18:MCScript} to evaluate the effectiveness of each of the three types of fusion. The experimental results show that difference fusion is comparable to union fusion and that similarity fusion needs to be activated by union fusion. The results also show that our MPFN model achieves state-of-the-art accuracy of 83.52% on the official test set.
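The abstract does not define the fusion operators. A minimal sketch, assuming the common choices of concatenation for *union*, element-wise subtraction for *difference*, and element-wise product for *similarity*, applied to two already-aligned representations (for example, a choice encoding and an attention-aligned passage encoding; both inputs and the function names are hypothetical):

```python
import numpy as np


def fuse(a: np.ndarray, b: np.ndarray) -> dict:
    """Three fusion perspectives between two aligned representations.

    Operators are assumptions, not confirmed by the abstract:
      union      -> concatenation [a; b]
      difference -> element-wise subtraction a - b
      similarity -> element-wise product a * b
    """
    if a.shape != b.shape:
        raise ValueError("a and b must have the same shape")
    return {
        "union": np.concatenate([a, b], axis=-1),
        "difference": a - b,
        "similarity": a * b,
    }


def multi_perspective(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Concatenate all three perspectives into one feature vector per token."""
    f = fuse(a, b)
    return np.concatenate([f["union"], f["difference"], f["similarity"]], axis=-1)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    choice = rng.normal(size=(5, 8))           # hypothetical: 5 tokens, 8-dim encodings
    aligned_passage = rng.normal(size=(5, 8))  # hypothetical attention-aligned passage
    print(multi_perspective(choice, aligned_passage).shape)  # (5, 32)
```

Keeping the three perspectives as separate entries makes it easy to ablate them one at a time, which mirrors the per-fusion experiments described in the abstract.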

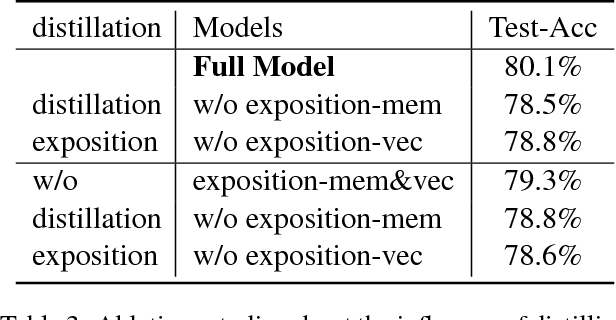

DEMN: Distilled-Exposition Enhanced Matching Network for Story Comprehension

Jan 08, 2019

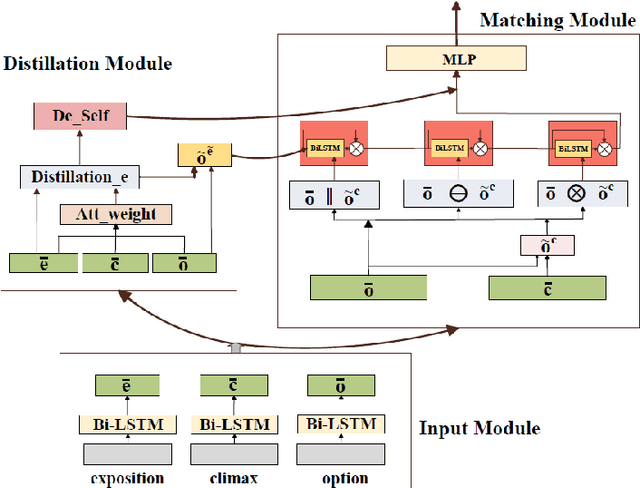

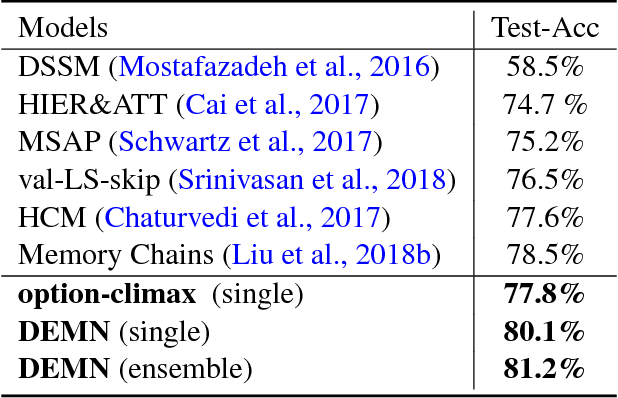

Abstract: This paper proposes a Distilled-Exposition Enhanced Matching Network (DEMN) for the story cloze test, which remains a challenging task in story comprehension. We divide a complete story into three narrative segments: an *exposition*, a *climax*, and an *ending*. The model consists of three modules: an input module, a matching module, and a distillation module. The input module provides semantic representations for the three segments and feeds them into the other two modules. The matching module collects interaction features between the ending and the climax. The distillation module distills the crucial semantic information in the exposition and infuses it into the matching module in two different ways. We evaluate our single and ensemble models on the ROCStories Corpus \cite{Mostafazadeh2016ACA}, achieving accuracies of 80.1% and 81.2% on the test set, respectively. The experimental results demonstrate that our DEMN model achieves state-of-the-art performance.
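The module layout can be sketched with dot-product attention. Everything below is a hypothetical illustration rather than the DEMN architecture: the distillation module is modeled as attention pooling of the exposition with the mean climax vector as query, the distilled vector is infused by adding it to every ending token (only one possible scheme; the paper uses two ways not detailed here), and `w` stands in for learned output weights.

```python
import numpy as np


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def distill(exposition, query):
    """Attention-pool exposition tokens (len_x, d) into one vector using query (d,)."""
    weights = softmax(exposition @ query)  # (len_x,), sums to 1
    return weights @ exposition            # (d,)


def match(ending, climax, context):
    """Align ending tokens to climax tokens after adding the distilled context."""
    e = ending + context                   # broadcast (len_e, d) + (d,)
    attn = softmax(e @ climax.T, axis=1)   # (len_e, len_c), each row sums to 1
    aligned = attn @ climax                # (len_e, d)
    feats = np.concatenate([e, aligned, e * aligned], axis=1)
    return feats.max(axis=0)               # max-pool over tokens -> (3d,)


def score(exposition, climax, ending, w):
    """Scalar plausibility score of one candidate ending; w has length 3d."""
    context = distill(exposition, climax.mean(axis=0))
    return float(match(ending, climax, context) @ w)


def pick_ending(exposition, climax, endings, w):
    """Story cloze decision: index of the highest-scoring candidate ending."""
    return int(np.argmax([score(exposition, climax, e, w) for e in endings]))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    d = 6
    exposition = rng.normal(size=(7, d))  # hypothetical token encodings
    climax = rng.normal(size=(5, d))
    endings = [rng.normal(size=(4, d)), rng.normal(size=(3, d))]
    w = rng.normal(size=3 * d)            # hypothetical learned weights
    print(pick_ending(exposition, climax, endings, w))
```

An ensemble, as in the abstract's second result, could average the candidate scores of several independently trained models before taking the argmax.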
