Guozheng Wu

Mem-PAL: Towards Memory-based Personalized Dialogue Assistants for Long-term User-Agent Interaction

Nov 17, 2025Abstract:With the rise of smart personal devices, service-oriented human-agent interactions have become increasingly prevalent. This trend highlights the need for personalized dialogue assistants that can understand user-specific traits to accurately interpret requirements and tailor responses to individual preferences. However, existing approaches often overlook the complexities of long-term interactions and fail to capture users' subjective characteristics. To address these gaps, we present PAL-Bench, a new benchmark designed to evaluate the personalization capabilities of service-oriented assistants in long-term user-agent interactions. In the absence of available real-world data, we develop a multi-step LLM-based synthesis pipeline, which is further verified and refined by human annotators. This process yields PAL-Set, the first Chinese dataset comprising multi-session user logs and dialogue histories, which serves as the foundation for PAL-Bench. Furthermore, to improve personalized service-oriented interactions, we propose H$^2$Memory, a hierarchical and heterogeneous memory framework that incorporates retrieval-augmented generation to improve personalized response generation. Comprehensive experiments on both our PAL-Bench and an external dataset demonstrate the effectiveness of the proposed memory framework.

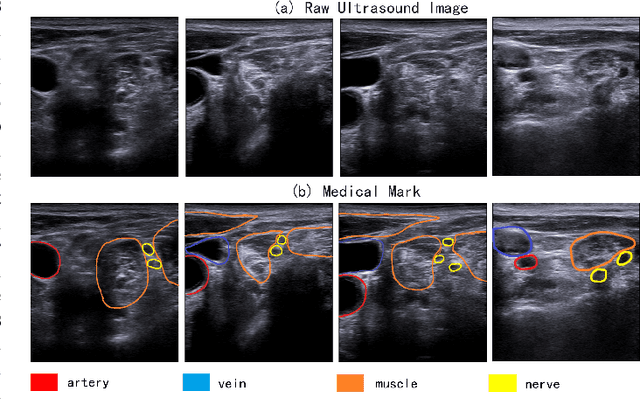

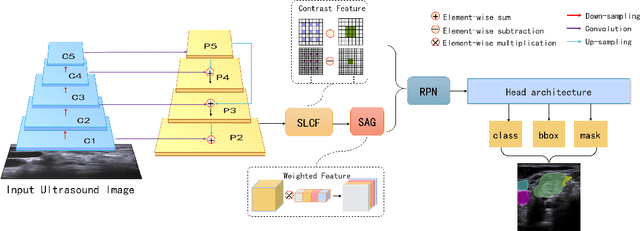

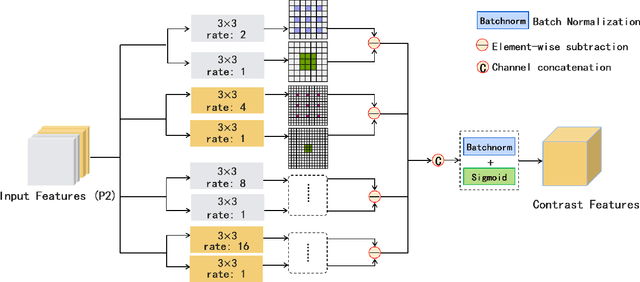

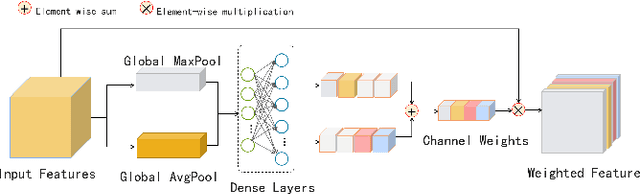

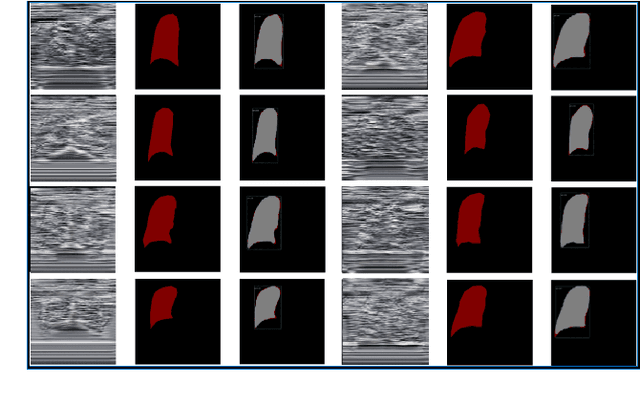

Multiple Instance Segmentation in Brachial Plexus Ultrasound Image Using BPMSegNet

Dec 22, 2020

Abstract:The identification of nerve is difficult as structures of nerves are challenging to image and to detect in ultrasound images. Nevertheless, the nerve identification in ultrasound images is a crucial step to improve performance of regional anesthesia. In this paper, a network called Brachial Plexus Multi-instance Segmentation Network (BPMSegNet) is proposed to identify different tissues (nerves, arteries, veins, muscles) in ultrasound images. The BPMSegNet has three novel modules. The first is the spatial local contrast feature, which computes contrast features at different scales. The second one is the self-attention gate, which reweighs the channels in feature maps by their importance. The third is the addition of a skip concatenation with transposed convolution within a feature pyramid network. The proposed BPMSegNet is evaluated by conducting experiments on our constructed Ultrasound Brachial Plexus Dataset (UBPD). Quantitative experimental results show the proposed network can segment multiple tissues from the ultrasound images with a good performance.

A Multi-View Dynamic Fusion Framework: How to Improve the Multimodal Brain Tumor Segmentation from Multi-Views?

Dec 21, 2020

Abstract:When diagnosing the brain tumor, doctors usually make a diagnosis by observing multimodal brain images from the axial view, the coronal view and the sagittal view, respectively. And then they make a comprehensive decision to confirm the brain tumor based on the information obtained from multi-views. Inspired by this diagnosing process and in order to further utilize the 3D information hidden in the dataset, this paper proposes a multi-view dynamic fusion framework to improve the performance of brain tumor segmentation. The proposed framework consists of 1) a multi-view deep neural network architecture, which represents multi learning networks for segmenting the brain tumor from different views and each deep neural network corresponds to multi-modal brain images from one single view and 2) the dynamic decision fusion method, which is mainly used to fuse segmentation results from multi-views as an integrate one and two different fusion methods, the voting method and the weighted averaging method, have been adopted to evaluate the fusing process. Moreover, the multi-view fusion loss, which consists of the segmentation loss, the transition loss and the decision loss, is proposed to facilitate the training process of multi-view learning networks so as to keep the consistency of appearance and space, not only in the process of fusing segmentation results, but also in the process of training the learning network. \par By evaluating the proposed framework on BRATS 2015 and BRATS 2018, it can be found that the fusion results from multi-views achieve a better performance than the segmentation result from the single view and the effectiveness of proposed multi-view fusion loss has also been proved. Moreover, the proposed framework achieves a better segmentation performance and a higher efficiency compared to other counterpart methods.

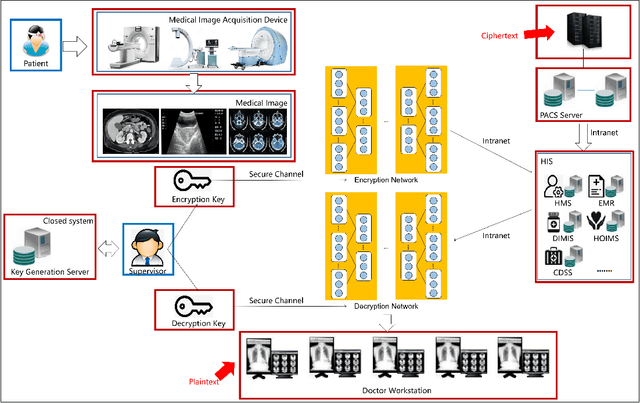

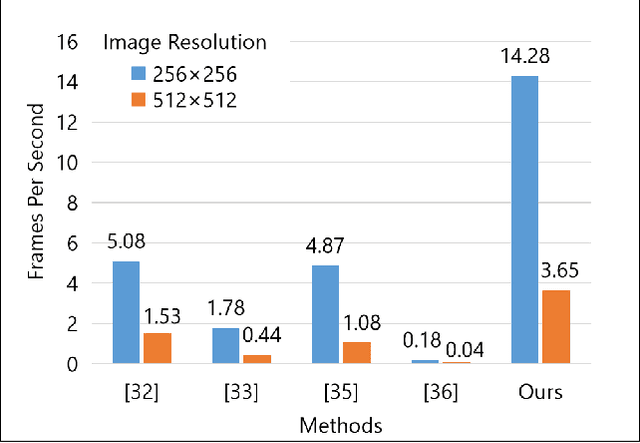

DeepEDN: A Deep Learning-based Image Encryption and Decryption Network for Internet of Medical Things

May 05, 2020

Abstract:Internet of Medical Things (IoMT) can connect many medical imaging equipments to the medical information network to facilitate the process of diagnosing and treating for doctors. As medical image contains sensitive information, it is of importance yet very challenging to safeguard the privacy or security of the patient. In this work, a deep learning based encryption and decryption network (DeepEDN) is proposed to fulfill the process of encrypting and decrypting the medical image. Specifically, in DeepEDN, the Cycle-Generative Adversarial Network (Cycle-GAN) is employed as the main learning network to transfer the medical image from its original domain into the target domain. Target domain is regarded as a "Hidden Factors" to guide the learning model for realizing the encryption. The encrypted image is restored to the original (plaintext) image through a reconstruction network to achieve an image decryption. In order to facilitate the data mining directly from the privacy-protected environment, a region of interest(ROI)-mining-network is proposed to extract the interested object from the encrypted image. The proposed DeepEDN is evaluated on the chest X-ray dataset. Extensive experimental results and security analysis show that the proposed method can achieve a high level of security with a good performance in efficiency.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge