Gangjian Zhang

MultiGO++: Monocular 3D Clothed Human Reconstruction via Geometry-Texture Collaboration

Mar 05, 2026

Abstract:Monocular 3D clothed human reconstruction aims to generate a complete and realistic textured 3D avatar from a single image. Existing methods are commonly trained under multi-view supervision with annotated geometric priors, and during inference, these priors are estimated by a pre-trained network from the monocular input. These methods are constrained by three key limitations: texturally by the unavailability of training data, geometrically by inaccurate external priors, and systematically by biased single-modality supervision, all leading to suboptimal reconstruction. To address these issues, we propose a novel reconstruction framework, named MultiGO++, which achieves effective systematic geometry-texture collaboration. It consists of three core parts: (1) A multi-source texture synthesis strategy that constructs 15,000+ 3D textured human scans to improve texture quality estimation in challenging scenarios; (2) A region-aware shape extraction module that extracts features of each body region and lets them interact to obtain geometry information, together with a Fourier geometry encoder that mitigates the modality gap to achieve effective geometry learning; (3) A dual reconstruction U-Net that leverages geometry-texture collaborative features to refine and generate high-fidelity textured 3D human meshes. Extensive experiments on two benchmarks and many in-the-wild cases show the superiority of our method over state-of-the-art approaches.

SAT: Supervisor Regularization and Animation Augmentation for Two-process Monocular Texture 3D Human Reconstruction

Aug 27, 2025

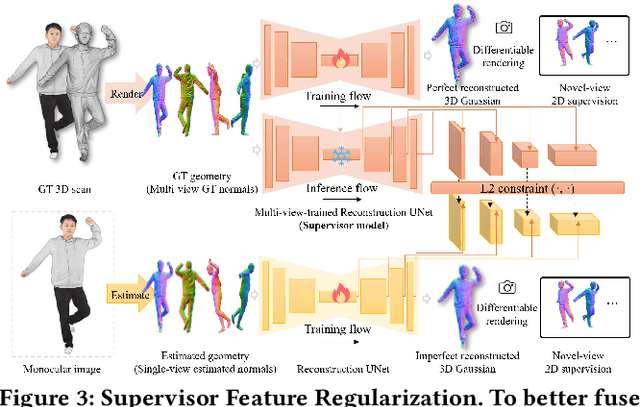

Abstract:Monocular texture 3D human reconstruction aims to create a complete 3D digital avatar from just a single front-view human RGB image. However, the geometric ambiguity inherent in a single 2D image and the scarcity of 3D human training data are the main obstacles limiting progress in this field. To address these issues, current methods employ prior geometric estimation networks to derive various human geometric forms, such as the SMPL model and normal maps. However, they struggle to integrate these modalities effectively, leading to view inconsistencies, such as facial distortions. To this end, we propose a two-process 3D human reconstruction framework, SAT, which seamlessly learns various prior geometries in a unified manner and reconstructs high-quality textured 3D avatars as the final output. To further facilitate geometry learning, we introduce a Supervisor Feature Regularization module. By employing a multi-view network with the same structure to provide intermediate features as training supervision, these varied geometric priors can be better fused. To tackle data scarcity and further improve reconstruction quality, we also propose an Online Animation Augmentation module. By building a one-feed-forward animation network, we augment a massive number of samples from the original 3D human data online for model training. Extensive experiments on two benchmarks show the superiority of our approach compared to state-of-the-art methods.

SMPL Normal Map Is All You Need for Single-view Textured Human Reconstruction

Jun 15, 2025

Abstract:Single-view textured human reconstruction aims to reconstruct a clothed 3D digital human from a monocular 2D image. Existing approaches include feed-forward methods, limited by scarce 3D human data, and diffusion-based methods, prone to erroneous 2D hallucinations. To address these issues, we propose a novel SMPL normal map Equipped 3D Human Reconstruction (SEHR) framework, integrating a pretrained large 3D reconstruction model with a human geometry prior. SEHR performs single-view human reconstruction in one forward propagation, without using a preset diffusion model. Concretely, SEHR consists of two key components: SMPL Normal Map Guidance (SNMG) and SMPL Normal Map Constraint (SNMC). SNMG incorporates SMPL normal maps into an auxiliary network to provide improved body shape guidance. SNMC enhances invisible body parts by constraining the model to predict extra SMPL normal Gaussians. Extensive experiments on two benchmark datasets demonstrate that SEHR outperforms existing state-of-the-art methods.

Exploiting Inter-Sample Correlation and Intra-Sample Redundancy for Partially Relevant Video Retrieval

Apr 28, 2025

Abstract:Partially Relevant Video Retrieval (PRVR) aims to retrieve the target video that is partially relevant to the text query. The primary challenge in PRVR arises from the semantic asymmetry between textual and visual modalities, as videos often contain substantial content irrelevant to the query. Existing methods coarsely align paired videos and text queries to construct the semantic space, neglecting the critical cross-modal dual nature inherent in this task: inter-sample correlation and intra-sample redundancy. To this end, we propose a novel PRVR framework to systematically exploit these two characteristics. Our framework consists of three core modules. First, the Inter Correlation Enhancement (ICE) module captures inter-sample correlation by identifying semantically similar yet unpaired text queries and video moments, combining them to form pseudo-positive pairs for more robust semantic space construction. Second, the Intra Redundancy Mining (IRM) module mitigates intra-sample redundancy by mining redundant video moment features and treating them as hard negative samples, thereby encouraging the model to learn more discriminative representations. Finally, to reinforce these modules, we introduce the Temporal Coherence Prediction (TCP) module, which enhances feature discrimination by training the model to predict the original temporal order of randomly shuffled video frames and moments. Extensive experiments on three datasets demonstrate the superiority of our approach compared to previous methods, achieving state-of-the-art results.
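The temporal-order pretext task described for the TCP module can be illustrated with a minimal sketch. It only shows how shuffled inputs and their order labels might be generated; the function names `shuffle_with_order_labels` and `restore_order` are hypothetical, and the abstract does not specify how the actual module builds its targets or loss.

```python
import random


def shuffle_with_order_labels(clip_features, seed=None):
    """Shuffle a sequence of clip features and return the permutation used.

    order[pos] is the original index of the item now at position pos;
    a model could be trained to predict this list (hypothetical setup).
    """
    rng = random.Random(seed)
    order = list(range(len(clip_features)))
    rng.shuffle(order)
    shuffled = [clip_features[i] for i in order]
    return shuffled, order


def restore_order(shuffled, order):
    """Invert the shuffle given the (predicted or true) order labels."""
    restored = [None] * len(shuffled)
    for pos, original_index in enumerate(order):
        restored[original_index] = shuffled[pos]
    return restored


if __name__ == "__main__":
    clips = ["c0", "c1", "c2", "c3", "c4"]
    shuffled, order = shuffle_with_order_labels(clips, seed=0)
    # Restoring with the true order labels recovers the original sequence.
    print(restore_order(shuffled, order) == clips)
```

A model that predicts `order` correctly can recover the original sequence, which is the property the pretext task rewards.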

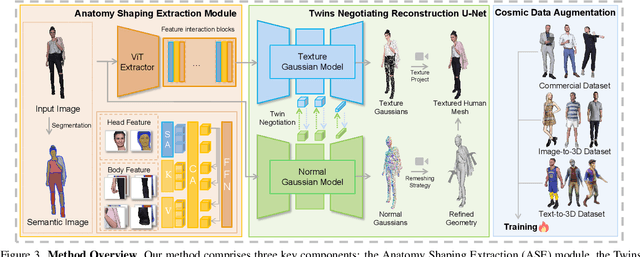

Unify3D: An Augmented Holistic End-to-end Monocular 3D Human Reconstruction via Anatomy Shaping and Twins Negotiating

Apr 25, 2025

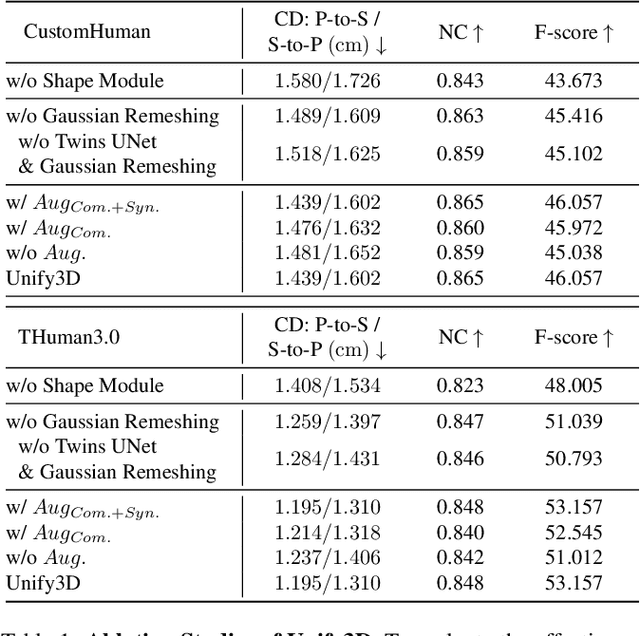

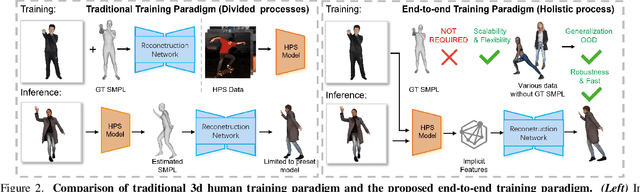

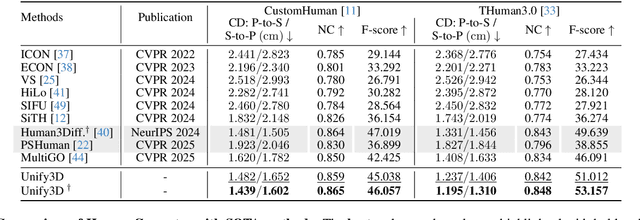

Abstract:Monocular 3D clothed human reconstruction aims to create a complete 3D avatar from a single image. To compensate for the human geometry missing from a single RGB image, current methods typically resort to a preceding model for an explicit geometric representation. The reconstruction itself then focuses on modeling both this representation and the input image. This routine is constrained by the preceding model and overlooks the integrity of the reconstruction task. To address this, this paper introduces a novel paradigm that treats human reconstruction as a holistic process, utilizing an end-to-end network for direct prediction from 2D image to 3D avatar, eliminating any explicit intermediate geometry representation. Based on this, we further propose a novel reconstruction framework consisting of two core components: the Anatomy Shaping Extraction module, which captures implicit shape features while taking into account the specifics of human anatomy, and the Twins Negotiating Reconstruction U-Net, which enhances reconstruction through feature interaction between two U-Nets of different modalities. Moreover, we propose a Comic Data Augmentation strategy and construct 15k+ 3D human scans to bolster model performance on more complex inputs. Extensive experiments on two test sets and many in-the-wild cases show the superiority of our method over SOTA methods. Our demos can be found at: https://e2e3dgsrecon.github.io/e2e3dgsrecon/.

Graph-Guided Scene Reconstruction from Images with 3D Gaussian Splatting

Feb 24, 2025

Abstract:This paper investigates the open research challenge of reconstructing high-quality, large 3D open scenes from images. We observe that existing methods have various limitations, such as requiring precise camera poses as input and dense viewpoints for supervision. To perform effective and efficient 3D scene reconstruction, we propose a novel graph-guided 3D scene reconstruction framework, GraphGS. Specifically, given a set of images of a scene captured by RGB cameras, we first design a spatial prior-based scene structure estimation method. This is then used to create a camera graph that includes information about the camera topology. Further, we propose to apply a graph-guided multi-view consistency constraint and an adaptive sampling strategy to the 3D Gaussian Splatting optimization process. This greatly alleviates the issue of Gaussian points overfitting to specific sparse viewpoints and expedites the 3D reconstruction process. We demonstrate that GraphGS achieves high-fidelity 3D reconstruction from images, with state-of-the-art performance in quantitative and qualitative evaluation across multiple datasets. Project Page: https://3dagentworld.github.io/graphgs.
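To make the idea of a camera graph concrete, here is a minimal sketch that links each camera to its nearest neighbors in space. The abstract does not say how GraphGS constructs its graph, so the k-nearest-neighbor rule, the function name `build_camera_graph`, and the parameter `k` are all assumptions made for illustration.

```python
import math


def build_camera_graph(positions, k=2):
    """Connect each camera to its k nearest cameras (undirected edges).

    positions: list of (x, y, z) camera centers, assumed already estimated.
    Returns a dict mapping camera index -> set of neighbor indices.
    """
    graph = {i: set() for i in range(len(positions))}
    for i, p in enumerate(positions):
        # Sort all other cameras by Euclidean distance to camera i.
        by_distance = sorted(
            (math.dist(p, q), j) for j, q in enumerate(positions) if j != i
        )
        for _, j in by_distance[:k]:
            graph[i].add(j)
            graph[j].add(i)
    return graph


if __name__ == "__main__":
    cams = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (10, 0, 0)]
    print(build_camera_graph(cams, k=1))
```

Edges in such a graph could then indicate which view pairs to check for multi-view consistency, though the constraint itself is outside this sketch.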

Diversified Augmentation with Domain Adaptation for Debiased Video Temporal Grounding

Jan 14, 2025

Abstract:Temporal sentence grounding in videos (TSGV) faces challenges due to public TSGV datasets containing significant temporal biases, which are attributed to the uneven temporal distributions of target moments. Existing methods generate augmented videos, where target moments are forced to have varying temporal locations. However, since the video lengths of the given datasets have small variations, only changing the temporal locations results in poor generalization ability in videos with varying lengths. In this paper, we propose a novel training framework complemented by diversified data augmentation and a domain discriminator. The data augmentation generates videos with various lengths and target moment locations to diversify temporal distributions. However, augmented videos inevitably exhibit distinct feature distributions which may introduce noise. To address this, we design a domain adaptation auxiliary task to diminish feature discrepancies between original and augmented videos. We also encourage the model to produce distinct predictions for videos with the same text queries but different moment locations to promote debiased training. Experiments on Charades-CD and ActivityNet-CD datasets demonstrate the effectiveness and generalization abilities of our method in multiple grounding structures, achieving state-of-the-art results.
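The augmentation idea, varying both video length and target moment location, can be sketched as follows. This is a simplified illustration rather than the paper's procedure: the function `augment_video` is hypothetical, and filling the new video with background clips sampled with replacement is an assumption.

```python
import random


def augment_video(clips, moment, new_length, new_start, seed=None):
    """Build a video of new_length clips with the target moment at new_start.

    clips: list of clip features; moment: (start, end) with end exclusive.
    Background slots are filled by sampling non-target clips with
    replacement (an assumed, simplified filling rule).
    """
    start, end = moment
    target = clips[start:end]
    background = clips[:start] + clips[end:]
    n_background = new_length - len(target)
    if new_start < 0 or new_start > n_background:
        raise ValueError("target moment does not fit at new_start")
    if n_background > 0 and not background:
        raise ValueError("no background clips available for filling")
    rng = random.Random(seed)
    filler = [rng.choice(background) for _ in range(n_background)]
    new_clips = filler[:new_start] + target + filler[new_start:]
    return new_clips, (new_start, new_start + len(target))


if __name__ == "__main__":
    video = ["b0", "b1", "t0", "t1", "b2"]
    new_video, new_moment = augment_video(video, (2, 4), 8, 5, seed=1)
    print(len(new_video), new_moment)
```

Calling it with different `new_length` and `new_start` values yields samples whose moment distribution differs from the original dataset's.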

MultiGO: Towards Multi-level Geometry Learning for Monocular 3D Textured Human Reconstruction

Dec 04, 2024

Abstract:This paper investigates the research task of reconstructing the 3D clothed human body from a monocular image. Due to the inherent ambiguity of single-view input, existing approaches leverage pre-trained SMPL(-X) estimation models or generative models to provide auxiliary information for human reconstruction. However, these methods capture only the general human body geometry and overlook specific geometric details, leading to inaccurate skeleton reconstruction, incorrect joint positions, and unclear cloth wrinkles. In response to these issues, we propose a multi-level geometry learning framework. Technically, we design three key components: skeleton-level enhancement, joint-level augmentation, and wrinkle-level refinement modules. Specifically, we effectively integrate the projected 3D Fourier features into a Gaussian reconstruction model, introduce perturbations during training to improve joint depth estimation, and refine coarse wrinkles with a procedure that resembles the denoising process of a diffusion model. Extensive quantitative and qualitative experiments on two out-of-distribution test sets show the superior performance of our approach compared to state-of-the-art (SOTA) methods.
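As background for the Fourier features mentioned above, the sketch below shows one common way to encode a 3D point with sines and cosines at doubling frequencies. It is a generic positional-encoding example under assumed choices (the function name `fourier_features`, the `num_bands` parameter, and the frequency schedule); the projection step and the exact encoding used in MultiGO are not described in the abstract and are not reproduced here.

```python
import math


def fourier_features(point, num_bands=4):
    """Encode each coordinate with sin/cos at frequencies pi * 2**band.

    point: iterable of coordinates, e.g. (x, y, z).
    Returns a flat list of length len(point) * num_bands * 2.
    """
    features = []
    for coord in point:
        for band in range(num_bands):
            freq = (2 ** band) * math.pi
            features.append(math.sin(freq * coord))
            features.append(math.cos(freq * coord))
    return features


if __name__ == "__main__":
    encoded = fourier_features((0.1, -0.3, 0.7), num_bands=4)
    print(len(encoded))
```

Higher bands respond to finer spatial variation, which is why encodings of this kind are often used to help networks represent detailed geometry.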
