Francesco Ragusa

ENIGMA-360: An Ego-Exo Dataset for Human Behavior Understanding in Industrial Scenarios

Mar 11, 2026

Abstract:Understanding human behavior from complementary egocentric (ego) and exocentric (exo) points of view enables the development of systems that can support workers in industrial environments and enhance their safety. However, progress in this area is hindered by the lack of datasets capturing both views in realistic industrial scenarios. To address this gap, we propose ENIGMA-360, a new ego-exo dataset acquired in a real industrial scenario. The dataset is composed of 180 egocentric and 180 exocentric temporally synchronized procedural videos, offering complementary information about the same scene. The 360 videos have been labeled with temporal and spatial annotations, enabling the study of different aspects of human behavior in the industrial domain. We provide baseline experiments for 3 foundational tasks for human behavior understanding: 1) Temporal Action Segmentation, 2) Keystep Recognition and 3) Egocentric Human-Object Interaction Detection, showing the limits of state-of-the-art approaches in this challenging scenario. These results highlight the need for new models capable of robust ego-exo understanding in real-world environments. We publicly release the dataset and its annotations at https://fpv-iplab.github.io/ENIGMA-360/.

GlovEgo-HOI: Bridging the Synthetic-to-Real Gap for Industrial Egocentric Human-Object Interaction Detection

Jan 14, 2026

Abstract:Egocentric Human-Object Interaction (EHOI) analysis is crucial for industrial safety, yet the development of robust models is hindered by the scarcity of annotated domain-specific data. We address this challenge by introducing a data generation framework that combines synthetic data with a diffusion-based process to augment real-world images with realistic Personal Protective Equipment (PPE). We present GlovEgo-HOI, a new benchmark dataset for industrial EHOI, and GlovEgo-Net, a model integrating Glove-Head and Keypoint-Head modules to leverage hand pose information for enhanced interaction detection. Extensive experiments demonstrate the effectiveness of the proposed data generation framework and GlovEgo-Net. To foster further research, we release the GlovEgo-HOI dataset, augmentation pipeline, and pre-trained models at: GitHub project.

SignIT: A Comprehensive Dataset and Multimodal Analysis for Italian Sign Language Recognition

Dec 16, 2025

Abstract:In this work we present SignIT, a new dataset to study the task of Italian Sign Language (LIS) recognition. The dataset is composed of 644 videos covering 3.33 hours. We manually annotated videos considering a taxonomy of 94 distinct sign classes belonging to 5 macro-categories: Animals, Food, Colors, Emotions and Family. We also extracted 2D keypoints related to the hands, face and body of the users. With the dataset, we propose a benchmark for the sign recognition task, adopting several state-of-the-art models and showing how temporal information, 2D keypoints and RGB frames influence the performance of these models. Results show the limitations of these models on this challenging LIS dataset. We release data and annotations at the following link: https://fpv-iplab.github.io/SignIT/.

Ego-EXTRA: video-language Egocentric Dataset for EXpert-TRAinee assistance

Dec 15, 2025

Abstract:We present Ego-EXTRA, a video-language Egocentric Dataset for EXpert-TRAinee assistance. Ego-EXTRA features 50 hours of unscripted egocentric videos of subjects performing procedural activities (the trainees) while guided by real-world experts who provide guidance and answer specific questions using natural language. Following a "Wizard of Oz" data collection paradigm, the expert enacts a wearable intelligent assistant, looking at the activities performed by the trainee exclusively from their egocentric point of view, answering questions when asked by the trainee, or proactively interacting with suggestions during the procedures. This unique data collection protocol enables Ego-EXTRA to capture a high-quality dialogue in which expert-level feedback is provided to the trainee. Two-way dialogues between experts and trainees are recorded, transcribed, and used to create a novel benchmark comprising more than 15k high-quality Visual Question Answer sets, which we use to evaluate Multimodal Large Language Models. The results show that Ego-EXTRA is challenging and highlight the limitations of current models when used to provide expert-level assistance to the user. The Ego-EXTRA dataset is publicly available to support the benchmarking of egocentric video-language assistants: https://fpv-iplab.github.io/Ego-EXTRA/.

Are Synthetic Data Useful for Egocentric Hand-Object Interaction Detection? An Investigation and the HOI-Synth Domain Adaptation Benchmark

Dec 05, 2023

Abstract:In this study, we investigate the effectiveness of synthetic data in enhancing hand-object interaction detection within the egocentric vision domain. We introduce a simulator able to generate synthetic images of hand-object interactions automatically labeled with hand-object contact states, bounding boxes, and pixel-wise segmentation masks. Through comprehensive experiments and comparative analyses on three egocentric datasets, VISOR, EgoHOS, and ENIGMA-51, we demonstrate that the use of synthetic data and domain adaptation techniques allows for comparable performance to conventional supervised methods while requiring annotations on only a fraction of the real data. When tested with in-domain synthetic data generated from 3D models of real target environments and objects, our best models show consistent performance improvements with respect to standard fully supervised approaches based on labeled real data only. Our study also sets a new benchmark of domain adaptation for egocentric hand-object interaction detection (HOI-Synth) and provides baseline results to encourage the community to engage in this challenging task. We release the generated data, code, and the simulator at the following link: https://iplab.dmi.unict.it/HOI-Synth/.

Ego-Exo4D: Understanding Skilled Human Activity from First- and Third-Person Perspectives

Nov 30, 2023

Abstract:We present Ego-Exo4D, a diverse, large-scale multimodal multiview video dataset and benchmark challenge. Ego-Exo4D centers around simultaneously-captured egocentric and exocentric video of skilled human activities (e.g., sports, music, dance, bike repair). More than 800 participants from 13 cities worldwide performed these activities in 131 different natural scene contexts, yielding long-form captures from 1 to 42 minutes each and 1,422 hours of video combined. The multimodal nature of the dataset is unprecedented: the video is accompanied by multichannel audio, eye gaze, 3D point clouds, camera poses, IMU, and multiple paired language descriptions -- including a novel "expert commentary" done by coaches and teachers and tailored to the skilled-activity domain. To push the frontier of first-person video understanding of skilled human activity, we also present a suite of benchmark tasks and their annotations, including fine-grained activity understanding, proficiency estimation, cross-view translation, and 3D hand/body pose. All resources will be open sourced to fuel new research in the community.

ENIGMA-51: Towards a Fine-Grained Understanding of Human-Object Interactions in Industrial Scenarios

Sep 26, 2023

Abstract:ENIGMA-51 is a new egocentric dataset acquired in a real industrial domain by 19 subjects who followed instructions to complete the repair of electrical boards using industrial tools (e.g., electric screwdriver) and electronic instruments (e.g., oscilloscope). The 51 sequences are densely annotated with a rich set of labels that enable the systematic study of human-object interactions in the industrial domain. We provide benchmarks on four tasks related to human-object interactions: 1) untrimmed action detection, 2) egocentric human-object interaction detection, 3) short-term object interaction anticipation and 4) natural language understanding of intents and entities. Baseline results show that the ENIGMA-51 dataset poses a challenging benchmark to study human-object interactions in industrial scenarios. We publicly release the dataset at: https://iplab.dmi.unict.it/ENIGMA-51/.

An Outlook into the Future of Egocentric Vision

Aug 14, 2023

Abstract:What will the future be? We wonder! In this survey, we explore the gap between current research in egocentric vision and the ever-anticipated future, where wearable computing, with outward-facing cameras and digital overlays, is expected to be integrated into our everyday lives. To understand this gap, the article starts by envisaging the future through character-based stories, showcasing through examples the limitations of current technology. We then provide a mapping between this future and previously defined research tasks. For each task, we survey its seminal works, current state-of-the-art methodologies and available datasets, then reflect on shortcomings that limit its applicability to future research. Note that this survey focuses on software models for egocentric vision, independent of any specific hardware. The paper concludes with recommendations for areas of immediate exploration so as to unlock our path to the future always-on, personalised and life-enhancing egocentric vision.

Exploiting Multimodal Synthetic Data for Egocentric Human-Object Interaction Detection in an Industrial Scenario

Jun 21, 2023

Abstract:In this paper, we tackle the problem of Egocentric Human-Object Interaction (EHOI) detection in an industrial setting. To overcome the lack of public datasets in this context, we propose a pipeline and a tool for generating synthetic images of EHOIs paired with several annotations and data signals (e.g., depth maps or instance segmentation masks). Using the proposed pipeline, we present EgoISM-HOI, a new multimodal dataset composed of synthetic EHOI images in an industrial environment with rich annotations of hands and objects. To demonstrate the utility and effectiveness of synthetic EHOI data produced by the proposed tool, we designed a new method that predicts and combines different multimodal signals to detect EHOIs in RGB images. Our study shows that exploiting synthetic data to pre-train the proposed method significantly improves performance when tested on real-world data. Moreover, the proposed approach outperforms state-of-the-art class-agnostic methods. To support research in this field, we publicly release the datasets, source code, and pre-trained models at https://iplab.dmi.unict.it/egoism-hoi.

StillFast: An End-to-End Approach for Short-Term Object Interaction Anticipation

Apr 08, 2023

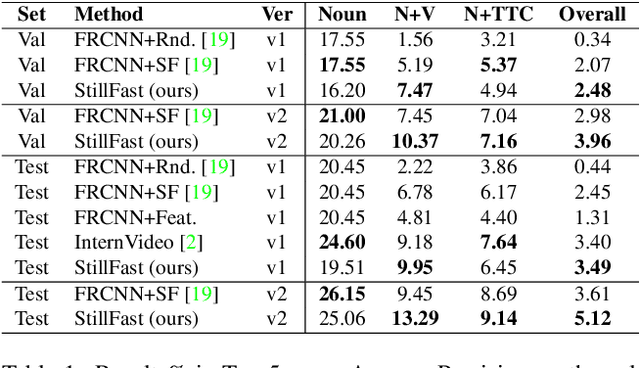

Abstract:The anticipation problem has been studied from different angles, such as predicting humans' locations, predicting hand and object trajectories, and forecasting actions and human-object interactions. In this paper, we study the short-term object interaction anticipation problem from the egocentric point of view, proposing a new end-to-end architecture named StillFast. Our approach simultaneously processes a still image and a video, detecting and localizing next-active objects, predicting the verb that describes the future interaction, and determining when the interaction will start. Experiments on the large-scale egocentric dataset EGO4D show that our method outperforms state-of-the-art approaches on the considered task. Our method is ranked first in the public leaderboard of the EGO4D short term object interaction anticipation challenge 2022. Please see the project web page for code and additional details: https://iplab.dmi.unict.it/stillfast/.
