Emmanuel Blazquez

Asteroid Mining: ACT&Friends' Results for the GTOC 12 Problem

Oct 28, 2024

Abstract:In 2023, the 12th edition of Global Trajectory Competition was organised around the problem referred to as "Sustainable Asteroid Mining". This paper reports the developments that led to the solution proposed by ESA's Advanced Concepts Team. Beyond the fact that the proposed approach failed to rank higher than fourth in the final competition leader-board, several innovative fundamental methodologies were developed which have a broader application. In particular, new methods based on machine learning as well as on manipulating the fundamental laws of astrodynamics were developed and able to fill with remarkable accuracy the gap between full low-thrust trajectories and their representation as impulsive Lambert transfers. A novel technique was devised to formulate the challenge of optimal subset selection from a repository of pre-existing optimal mining trajectories as an integer linear programming problem. Finally, the fundamental problem of searching for single optimal mining trajectories (mining and collecting all resources), albeit ignoring the possibility of having intra-ship collaboration and thus sub-optimal in the case of the GTOC12 problem, was efficiently solved by means of a novel search based on a look-ahead score and thus making sure to select asteroids that had chances to be re-visited later on.

Small Celestial Body Exploration with CubeSat Swarms

Aug 25, 2023

Abstract:This work presents a large-scale simulation study investigating the deployment and operation of distributed swarms of CubeSats for interplanetary missions to small celestial bodies. Utilizing Taylor numerical integration and advanced collision detection techniques, we explore the potential of large CubeSat swarms in capturing gravity signals and reconstructing the internal mass distribution of a small celestial body while minimizing risks and Delta V budget. Our results offer insight into the applicability of this approach for future deep space exploration missions.

On the Generation of a Synthetic Event-Based Vision Dataset for Navigation and Landing

Aug 01, 2023

Abstract:An event-based camera outputs an event whenever a change in scene brightness of a preset magnitude is detected at a particular pixel location in the sensor plane. The resulting sparse and asynchronous output coupled with the high dynamic range and temporal resolution of this novel camera motivate the study of event-based cameras for navigation and landing applications. However, the lack of real-world and synthetic datasets to support this line of research has limited its consideration for onboard use. This paper presents a methodology and a software pipeline for generating event-based vision datasets from optimal landing trajectories during the approach of a target body. We construct sequences of photorealistic images of the lunar surface with the Planet and Asteroid Natural Scene Generation Utility at different viewpoints along a set of optimal descent trajectories obtained by varying the boundary conditions. The generated image sequences are then converted into event streams by means of an event-based camera emulator. We demonstrate that the pipeline can generate realistic event-based representations of surface features by constructing a dataset of 500 trajectories, complete with event streams and motion field ground truth data. We anticipate that novel event-based vision datasets can be generated using this pipeline to support various spacecraft pose reconstruction problems given events as input, and we hope that the proposed methodology would attract the attention of researchers working at the intersection of neuromorphic vision and guidance navigation and control.
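As a rough illustration of the event-generation principle stated in the abstract (an event fires when the brightness change at a pixel reaches a preset magnitude), the sketch below compares two frames pixel by pixel. It is not the emulator used in the paper: the function name `events_between`, the use of log-brightness, the `threshold` value, and the toy frames are all assumptions made for this example, and a realistic emulator would also track per-pixel reference levels, timestamps, and noise.

```python
import math

def events_between(prev_frame, next_frame, threshold=0.2, eps=1e-6):
    """Return (row, col, polarity) for every pixel whose log-brightness
    change between the two frames reaches the threshold.

    Assumption: brightness change is measured in log space; eps avoids log(0).
    """
    events = []
    for r, (prev_row, next_row) in enumerate(zip(prev_frame, next_frame)):
        for c, (p, n) in enumerate(zip(prev_row, next_row)):
            delta = math.log(n + eps) - math.log(p + eps)
            if abs(delta) >= threshold:
                # Polarity +1 for a brightness increase, -1 for a decrease.
                events.append((r, c, 1 if delta > 0 else -1))
    return events

# Hypothetical 2x2 frames: one pixel brightens, one darkens, two are unchanged.
prev_frame = [[0.5, 0.5], [0.5, 0.5]]
next_frame = [[0.5, 1.0], [0.25, 0.5]]
print(events_between(prev_frame, next_frame))
```

Only the two pixels that changed produce events, which is the sparsity property the abstract refers to.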

Deep Learning of Dynamical System Parameters from Return Maps as Images

Jun 20, 2023

Abstract:We present a novel approach to system identification (SI) using deep learning techniques. Focusing on parametric system identification (PSI), we use a supervised learning approach for estimating the parameters of discrete and continuous-time dynamical systems, irrespective of chaos. To accomplish this, we transform collections of state-space trajectory observations into image-like data to retain the state-space topology of trajectories from dynamical systems and train convolutional neural networks to estimate the parameters of dynamical systems from these images. We demonstrate that our approach can learn parameter estimation functions for various dynamical systems, and by using training-time data augmentation, we are able to learn estimation functions whose parameter estimates are robust to changes in the sample fidelity of their inputs. Once trained, these estimation models return parameter estimations for new systems with negligible time and computation costs.
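One way to picture the transformation of trajectory observations into image-like data is a return map rasterised as a 2D histogram of consecutive states. The sketch below is only an assumed illustration: the logistic map, the helper names `logistic_trajectory` and `return_map_image`, and the 8x8 resolution are choices made here, not details taken from the paper.

```python
def logistic_trajectory(r, x0=0.2, n=200):
    """Iterate the logistic map x -> r * x * (1 - x) and return n states."""
    xs = [x0]
    for _ in range(n - 1):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

def return_map_image(xs, bins=8):
    """Rasterise the return map (x_n, x_{n+1}) on [0, 1]^2 into a bins x bins
    grid of counts. Rows index x_{n+1}, columns index x_n."""
    image = [[0] * bins for _ in range(bins)]
    for a, b in zip(xs, xs[1:]):
        # Clamp so that values on the boundary fall into the last bin.
        col = min(max(int(a * bins), 0), bins - 1)
        row = min(max(int(b * bins), 0), bins - 1)
        image[row][col] += 1
    return image

# Hypothetical example: a chaotic parameter value of the logistic map.
image = return_map_image(logistic_trajectory(3.9), bins=8)
for row in reversed(image):  # print with x_{n+1} increasing upwards
    print(" ".join(f"{count:3d}" for count in row))
```

A grid like this could then be fed to a convolutional network whose regression target is the parameter `r`; that training step is outside the scope of this sketch.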

Optimality Principles in Spacecraft Neural Guidance and Control

May 22, 2023

Abstract:Spacecraft and drones aimed at exploring our solar system are designed to operate in conditions where the smart use of onboard resources is vital to the success or failure of the mission. Sensorimotor actions are thus often derived from high-level, quantifiable, optimality principles assigned to each task, utilizing consolidated tools in optimal control theory. The planned actions are derived on the ground and transferred onboard where controllers have the task of tracking the uploaded guidance profile. Here we argue that end-to-end neural guidance and control architectures (here called G&CNets) allow transferring onboard the burden of acting upon these optimality principles. In this way, the sensor information is transformed in real time into optimal plans thus increasing the mission autonomy and robustness. We discuss the main results obtained in training such neural architectures in simulation for interplanetary transfers, landings and close proximity operations, highlighting the successful learning of optimality principles by the neural model. We then suggest drone racing as an ideal gym environment to test these architectures on real robotic platforms, thus increasing confidence in their utilization on future space exploration missions. Drone racing shares with spacecraft missions both limited onboard computational capabilities and similar control structures induced from the optimality principle sought, but it also entails different levels of uncertainties and unmodelled effects. Furthermore, the success of G&CNets on extremely resource-restricted drones illustrates their potential to bring real-time optimal control within reach of a wider variety of robotic systems, both in space and on Earth.

Neuromorphic Computing and Sensing in Space

Dec 17, 2022

Abstract:The term "neuromorphic" refers to systems that are closely resembling the architecture and/or the dynamics of biological neural networks. Typical examples are novel computer chips designed to mimic the architecture of a biological brain, or sensors that get inspiration from, e.g., the visual or olfactory systems in insects and mammals to acquire information about the environment. This approach is not without ambition as it promises to enable engineered devices able to reproduce the level of performance observed in biological organisms -- the main immediate advantage being the efficient use of scarce resources, which translates into low power requirements. The emphasis on low power and energy efficiency of neuromorphic devices is a perfect match for space applications. Spacecraft -- especially miniaturized ones -- have strict energy constraints as they need to operate in an environment which is scarce with resources and extremely hostile. In this work we present an overview of early attempts made to study a neuromorphic approach in a space context at the European Space Agency's (ESA) Advanced Concepts Team (ACT).

The Fellowship of the Dyson Ring: ACT&Friends' Results and Methods for GTOC 11

May 23, 2022

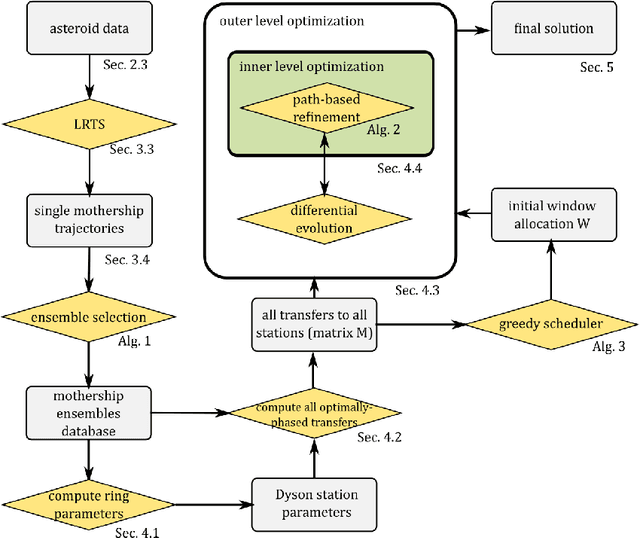

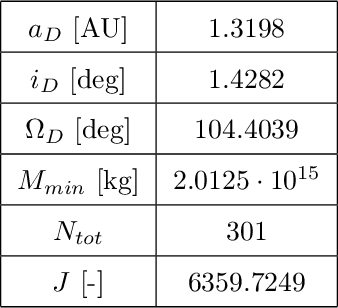

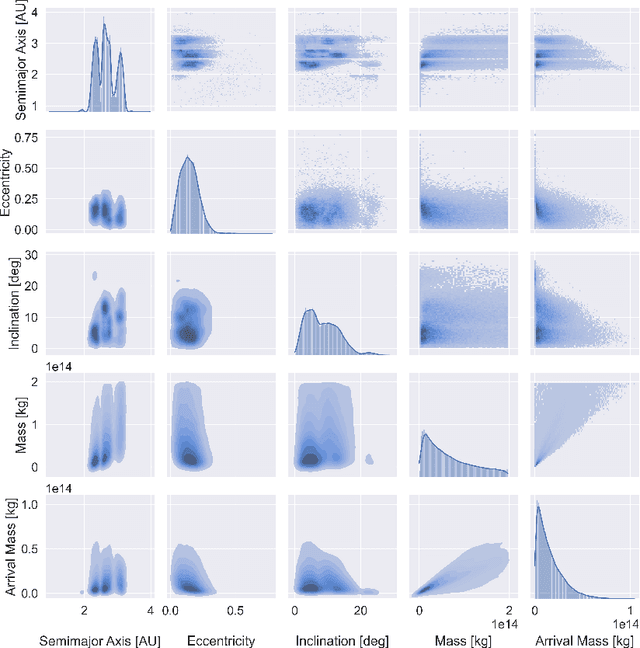

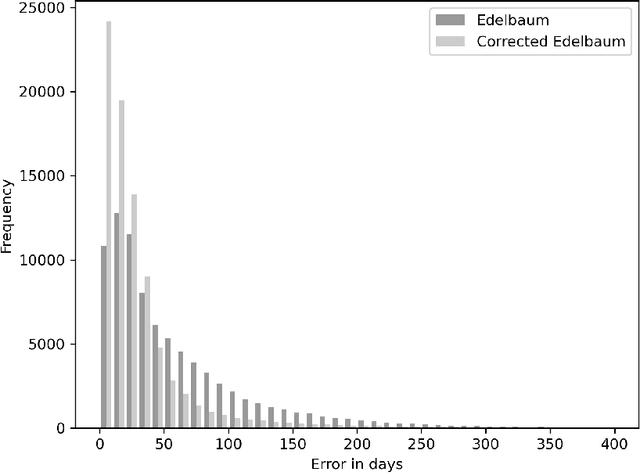

Abstract:Dyson spheres are hypothetical megastructures encircling stars in order to harvest most of their energy output. During the 11th edition of the GTOC challenge, participants were tasked with a complex trajectory planning related to the construction of a precursor Dyson structure, a heliocentric ring made of twelve stations. To this purpose, we developed several new approaches that synthesize techniques from machine learning, combinatorial optimization, planning and scheduling, and evolutionary optimization effectively integrated into a fully automated pipeline. These include a machine learned transfer time estimator, improving the established Edelbaum approximation and thus better informing a Lazy Race Tree Search to identify and collect asteroids with high arrival mass for the stations; a series of optimally-phased low-thrust transfers to all stations computed by indirect optimization techniques, exploiting the synodic periodicity of the system; and a modified Hungarian scheduling algorithm, which utilizes evolutionary techniques to arrange a mass-balanced arrival schedule out of all transfer possibilities. We describe the steps of our pipeline in detail with a special focus on how our approaches mutually benefit from each other. Lastly, we outline and analyze the final solution of our team, ACT&Friends, which ranked second at the GTOC 11 challenge.
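For context on the baseline that the abstract says the learned estimator improves upon, the classical Edelbaum approximation gives the velocity increment of a continuous low-thrust transfer between two circular orbits with an inclination change. The sketch below implements only that textbook formula and a constant-acceleration time estimate; the function names, units, and sample values are assumptions for illustration, and nothing here reproduces the team's learned estimator or how they applied the approximation.

```python
import math

def edelbaum_delta_v(v0, v1, delta_i_rad=0.0):
    """Edelbaum delta-v between circular orbits with speeds v0 and v1 and a
    total inclination change delta_i_rad (radians):
        dv = sqrt(v0^2 + v1^2 - 2 * v0 * v1 * cos(pi / 2 * delta_i))
    """
    return math.sqrt(
        v0 ** 2 + v1 ** 2 - 2.0 * v0 * v1 * math.cos(0.5 * math.pi * delta_i_rad)
    )

def edelbaum_transfer_time(v0, v1, delta_i_rad, accel):
    """Transfer time assuming a constant thrust acceleration `accel`
    (same length and time units as the velocities)."""
    return edelbaum_delta_v(v0, v1, delta_i_rad) / accel

# Hypothetical values: circular speeds in km/s, acceleration in km/s^2.
dv = edelbaum_delta_v(30.0, 25.0, math.radians(5.0))
days = edelbaum_transfer_time(30.0, 25.0, math.radians(5.0), 1e-7) / 86400.0
print(f"delta-v: {dv:.3f} km/s, transfer time: {days:.1f} days")
```

In the coplanar case the formula reduces to the difference of the two circular speeds, which makes a convenient sanity check.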
