Emma Robinson

Robust and Generalisable Segmentation of Subtle Epilepsy-causing Lesions: a Graph Convolutional Approach

Jun 05, 2023

Abstract:Focal cortical dysplasia (FCD) is a leading cause of drug-resistant focal epilepsy, which can be cured by surgery. These lesions are extremely subtle and often missed even by expert neuroradiologists. "Ground truth" manual lesion masks are therefore expensive, limited and have large inter-rater variability. Existing FCD detection methods are limited by high numbers of false positive predictions, primarily due to vertex- or patch-based approaches that lack whole-brain context. Here, we propose to approach the problem as semantic segmentation using graph convolutional networks (GCN), which allows our model to learn spatial relationships between brain regions. To address the specific challenges of FCD identification, our proposed model includes an auxiliary loss to predict distance from the lesion to reduce false positives and a weak supervision classification loss to facilitate learning from uncertain lesion masks. On a multi-centre dataset of 1015 participants with surface-based features and manual lesion masks from structural MRI data, the proposed GCN achieved an AUC of 0.74, a significant improvement against a previously used vertex-wise multi-layer perceptron (MLP) classifier (AUC 0.64). With sensitivity thresholded at 67%, the GCN had a specificity of 71% in comparison to 49% when using the MLP. This improvement in specificity is vital for clinical integration of lesion-detection tools into the radiological workflow, through increasing clinical confidence in the use of AI radiological adjuncts and reducing the number of areas requiring expert review.

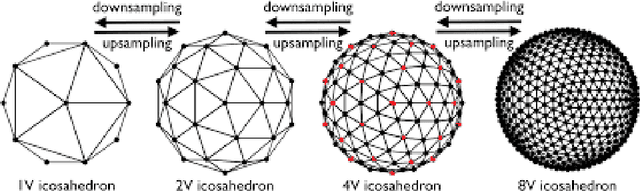

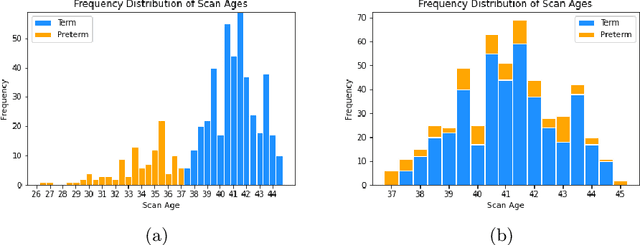

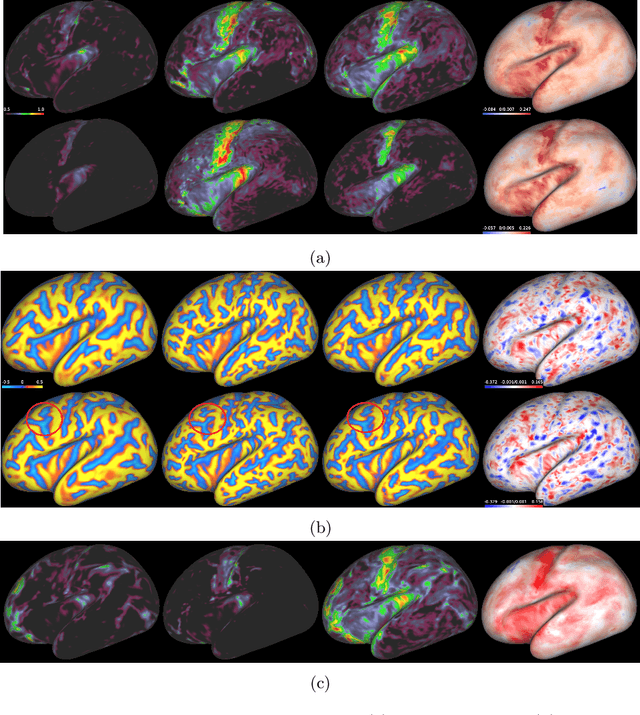

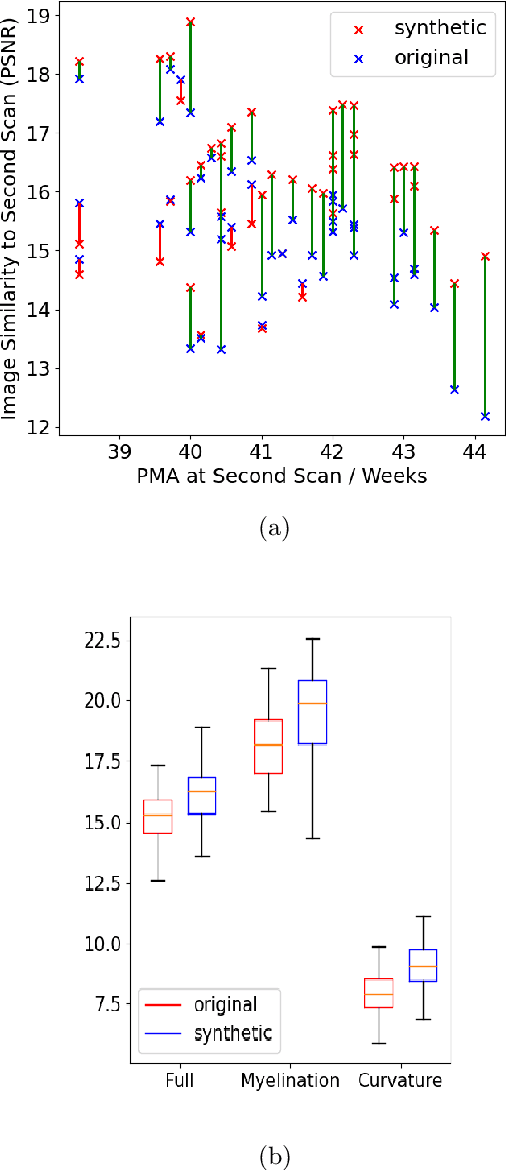

A Deep Generative Model of Neonatal Cortical Surface Development

Jun 22, 2022

Abstract:The neonatal cortical surface is known to be affected by preterm birth, and the subsequent changes to cortical organisation have been associated with poorer neurodevelopmental outcomes. Deep Generative models have the potential to lead to clinically interpretable models of disease, but developing these on the cortical surface is challenging since established techniques for learning convolutional filters are inappropriate on non-flat topologies. To close this gap, we implement a surface-based CycleGAN using mixture model CNNs (MoNet) to translate sphericalised neonatal cortical surface features (curvature and T1w/T2w cortical myelin) between different stages of cortical maturity. Results show our method is able to reliably predict changes in individual patterns of cortical organisation at later stages of gestation, validated by comparison to longitudinal data; and translate appearance between preterm and term gestation (> 37 weeks gestation), validated through comparison with a trained term/preterm classifier. Simulated differences in cortical maturation are consistent with observations in the literature.

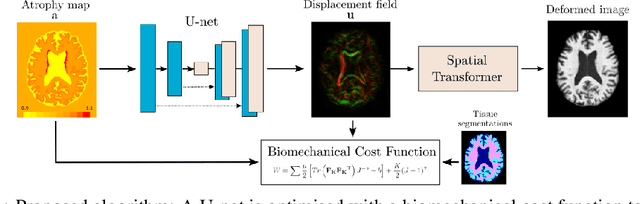

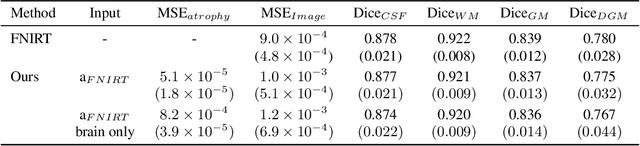

Biomechanical modelling of brain atrophy through deep learning

Dec 14, 2020

Abstract:We present a proof-of-concept, deep learning (DL) based, differentiable biomechanical model of realistic brain deformations. Using prescribed maps of local atrophy and growth as input, the network learns to deform images according to a Neo-Hookean model of tissue deformation. The tool is validated using longitudinal brain atrophy data from the Alzheimer's Disease Neuroimaging Initiative (ADNI) dataset, and we demonstrate that the trained model is capable of rapidly simulating new brain deformations with minimal residuals. This method has the potential to be used in data augmentation or for the exploration of different causal hypotheses reflecting brain growth and atrophy.

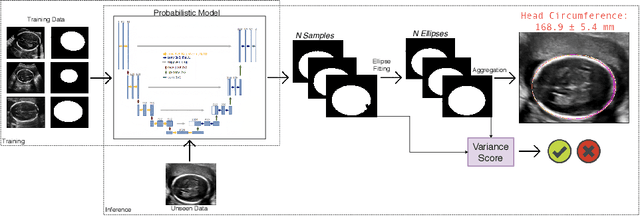

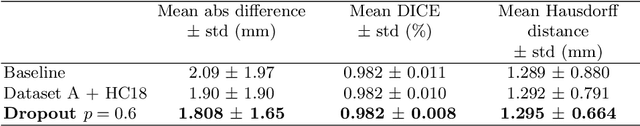

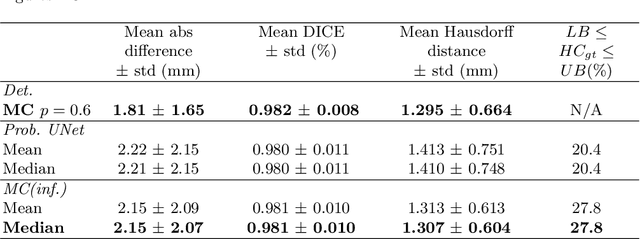

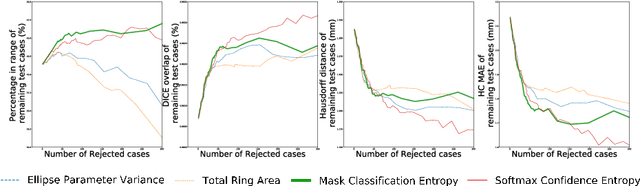

Confident Head Circumference Measurement from Ultrasound with Real-time Feedback for Sonographers

Aug 07, 2019

Abstract:Manual estimation of fetal Head Circumference (HC) from Ultrasound (US) is a key biometric for monitoring the healthy development of fetuses. Unfortunately, such measurements are subject to large inter-observer variability, resulting in low early-detection rates of fetal abnormalities. To address this issue, we propose a novel probabilistic Deep Learning approach for real-time automated estimation of fetal HC. This system feeds back statistics on measurement robustness to inform users how confident a deep neural network is in evaluating suitable views acquired during free-hand ultrasound examination. In real-time scenarios, this approach may be exploited to guide operators to scan planes that are as close as possible to the underlying distribution of training images, for the purpose of improving inter-operator consistency. We train on free-hand ultrasound data from over 2000 subjects (2848 training/540 test) and show that our method is able to predict HC measurements within 1.81$\pm$1.65mm deviation from the ground truth, with 50% of the test images fully contained within the predicted confidence margins, and an average of 1.82$\pm$1.78mm deviation from the margin for the remaining cases that are not fully contained.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge