Ding

NeRF-Based defect detection

Mar 31, 2025Abstract:The rapid growth of industrial automation has highlighted the need for precise and efficient defect detection in large-scale machinery. Traditional inspection techniques, involving manual procedures such as scaling tall structures for visual evaluation, are labor-intensive, subjective, and often hazardous. To overcome these challenges, this paper introduces an automated defect detection framework built on Neural Radiance Fields (NeRF) and the concept of digital twins. The system utilizes UAVs to capture images and reconstruct 3D models of machinery, producing both a standard reference model and a current-state model for comparison. Alignment of the models is achieved through the Iterative Closest Point (ICP) algorithm, enabling precise point cloud analysis to detect deviations that signify potential defects. By eliminating manual inspection, this method improves accuracy, enhances operational safety, and offers a scalable solution for defect detection. The proposed approach demonstrates great promise for reliable and efficient industrial applications.

On Statistical Estimation of Edge-Reinforced Random Walks

Mar 08, 2025Abstract:Reinforced random walks (RRWs), including vertex-reinforced random walks (VRRWs) and edge-reinforced random walks (ERRWs), model random walks where the transition probabilities evolve based on prior visitation history~\cite{mgr, fmk, tarres, volkov}. These models have found applications in various areas, such as network representation learning~\cite{xzzs}, reinforced PageRank~\cite{gly}, and modeling animal behaviors~\cite{smouse}, among others. However, statistical estimation of the parameters governing RRWs remains underexplored. This work focuses on estimating the initial edge weights of ERRWs using observed trajectory data. Leveraging the connections between an ERRW and a random walk in a random environment (RWRE)~\cite{mr, mr2}, as given by the so-called "magic formula", we propose an estimator based on the generalized method of moments. To analyze the sample complexity of our estimator, we exploit the hyperbolic Gaussian structure embedded in the random environment to bound the fluctuations of the underlying random edge conductances.

Semantic Product Search

Jul 01, 2019

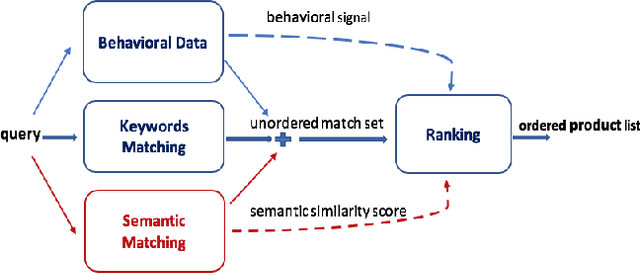

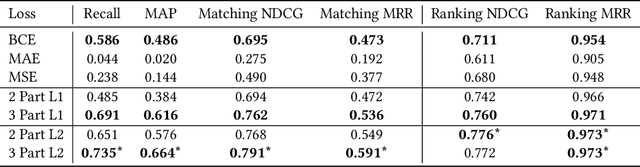

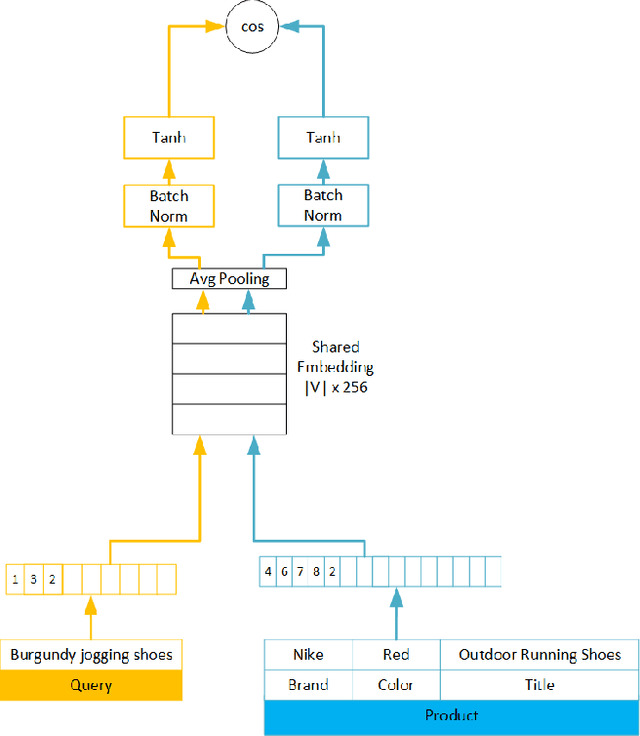

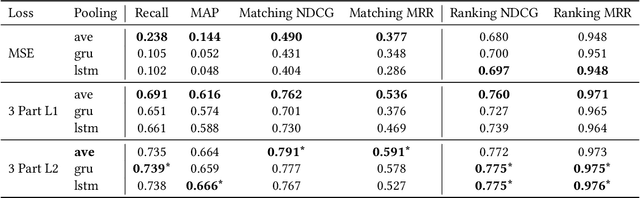

Abstract:We study the problem of semantic matching in product search, that is, given a customer query, retrieve all semantically related products from the catalog. Pure lexical matching via an inverted index falls short in this respect due to several factors: a) lack of understanding of hypernyms, synonyms, and antonyms, b) fragility to morphological variants (e.g. "woman" vs. "women"), and c) sensitivity to spelling errors. To address these issues, we train a deep learning model for semantic matching using customer behavior data. Much of the recent work on large-scale semantic search using deep learning focuses on ranking for web search. In contrast, semantic matching for product search presents several novel challenges, which we elucidate in this paper. We address these challenges by a) developing a new loss function that has an inbuilt threshold to differentiate between random negative examples, impressed but not purchased examples, and positive examples (purchased items), b) using average pooling in conjunction with n-grams to capture short-range linguistic patterns, c) using hashing to handle out of vocabulary tokens, and d) using a model parallel training architecture to scale across 8 GPUs. We present compelling offline results that demonstrate at least 4.7% improvement in Recall@100 and 14.5% improvement in mean average precision (MAP) over baseline state-of-the-art semantic search methods using the same tokenization method. Moreover, we present results and discuss learnings from online A/B tests which demonstrate the efficacy of our method.

RenderMap: Exploiting the Link Between Perception and Rendering for Dense Mapping

Feb 21, 2017

Abstract:We introduce an approach for the real-time (2Hz) creation of a dense map and alignment of a moving robotic agent within that map by rendering using a Graphics Processing Unit (GPU). This is done by recasting the scan alignment part of the dense mapping process as a rendering task. Alignment errors are computed from rendering the scene, comparing with range data from the sensors, and minimized by an optimizer. The proposed approach takes advantage of the advances in rendering techniques for computer graphics and GPU hardware to accelerate the algorithm. Moreover, it allows one to exploit information not used in classic dense mapping algorithms such as Iterative Closest Point (ICP) by rendering interfaces between the free space, occupied space and the unknown. The proposed approach leverages directly the rendering capabilities of the GPU, in contrast to other GPU-based approaches that deploy the GPU as a general purpose parallel computation platform. We argue that the proposed concept is a general consequence of treating perception problems as inverse problems of rendering. Many perception problems can be recast into a form where much of the computation is replaced by render operations. This is not only efficient since rendering is fast, but also simpler to implement and will naturally benefit from future advancements in GPU speed and rendering techniques. Furthermore, this general concept can go beyond addressing perception problems and can be used for other problem domains such as path planning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge