David Schubert

Meta-Learning for Automated Selection of Anomaly Detectors for Semi-Supervised Datasets

Nov 24, 2022

Abstract: In anomaly detection, a prominent task is to induce a model that identifies anomalies after being trained solely on normal data. Generally, one is interested in finding an anomaly detector that correctly identifies anomalies, i.e., data points that do not belong to the normal class, without raising too many false alarms. Which anomaly detector is best suited depends on the dataset at hand, so the choice needs to be tailored to it. The quality of an anomaly detector may be assessed via confusion-based metrics such as the Matthews correlation coefficient (MCC). However, since only normal data is available during training in a semi-supervised setting, such metrics are not accessible. To facilitate automated machine learning for anomaly detectors, we propose to employ meta-learning to predict MCC scores based on metrics that can be computed with normal data only. First promising results are obtained using the hypervolume and the false positive rate as meta-features.
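
For readers unfamiliar with the two quantities named in the abstract, the following is a minimal sketch of how the MCC and the false positive rate are computed from confusion-matrix counts. The function names and the convention of returning 0.0 when a metric is undefined are assumptions made for illustration; they are not taken from the paper.

```python
import math

def matthews_corrcoef(tp, fp, tn, fn):
    """MCC from confusion-matrix counts (needs labelled anomalies AND normals)."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        # Assumed convention: report 0.0 when the MCC is undefined.
        return 0.0
    return (tp * tn - fp * fn) / denom

def false_positive_rate(fp, tn):
    """FPR uses only normal samples, so it is available without anomalies."""
    total_normal = fp + tn
    return fp / total_normal if total_normal else 0.0

perfect = matthews_corrcoef(tp=50, fp=0, tn=50, fn=0)
inverted = matthews_corrcoef(tp=0, fp=50, tn=0, fn=50)
fpr = false_positive_rate(fp=5, tn=95)
```

The contrast is the point of the sketch: `false_positive_rate` needs only counts over normal data, whereas `matthews_corrcoef` also needs true positives and false negatives, which require labelled anomalies.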

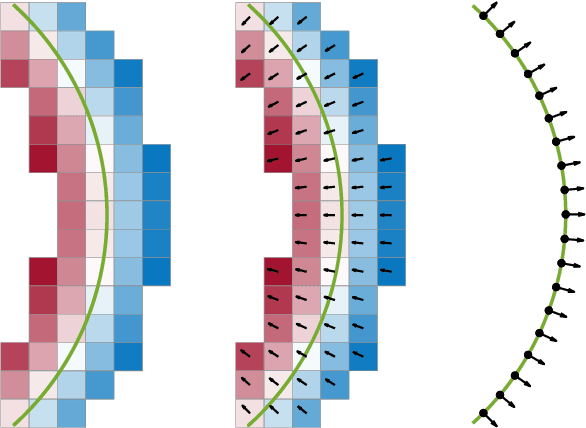

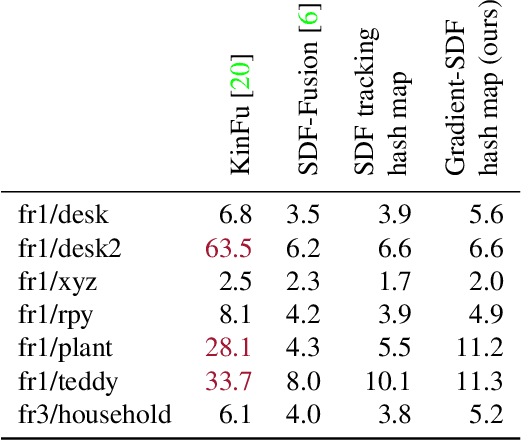

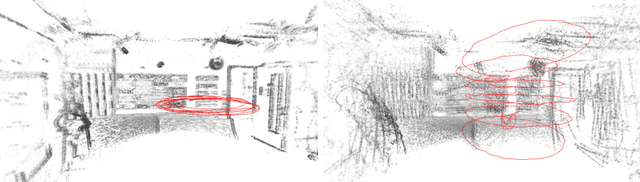

Gradient-SDF: A Semi-Implicit Surface Representation for 3D Reconstruction

Nov 26, 2021

Abstract: We present Gradient-SDF, a novel representation for 3D geometry that combines the advantages of implicit and explicit representations. By storing at every voxel both the signed distance field and its gradient vector field, we enhance the capability of implicit representations with approaches originally formulated for explicit surfaces. As concrete examples, we show that (1) the Gradient-SDF allows us to perform direct SDF tracking from depth images, using efficient storage schemes like hash maps, and that (2) the Gradient-SDF representation enables us to perform photometric bundle adjustment directly in a voxel representation (without transforming into a point cloud or mesh), which naturally yields a fully implicit optimization of geometry and camera poses and easy geometry upsampling. Experimental results confirm that this leads to significantly sharper reconstructions. Since the overall SDF voxel structure is still respected, the proposed Gradient-SDF is equally suited for (GPU) parallelization as related approaches.
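
As a rough illustration of the storage idea, the sketch below keeps a signed distance and a unit gradient per voxel in a hash map (a Python `dict`) and recovers an explicit surface point by stepping from the voxel center along the gradient by the stored distance. The class name `Voxel`, the helper names, the voxel size, and the toy plane scene are all hypothetical; this is a sketch under those assumptions, not the authors' implementation.

```python
import math
from dataclasses import dataclass

@dataclass
class Voxel:
    dist: float   # signed distance from the voxel center to the surface
    grad: tuple   # unit gradient of the distance field at the voxel center

def voxel_key(point, voxel_size):
    """Integer grid index of the voxel containing `point` (the hash-map key)."""
    return tuple(math.floor(c / voxel_size) for c in point)

def voxel_center(key, voxel_size):
    return tuple((k + 0.5) * voxel_size for k in key)

def surface_point(key, voxel, voxel_size):
    """Step from the voxel center along the gradient by the stored distance."""
    center = voxel_center(key, voxel_size)
    return tuple(c - voxel.dist * g for c, g in zip(center, voxel.grad))

# Toy scene (assumed): the plane z = 1, with SDF d(p) = p_z - 1 and gradient (0, 0, 1).
VOXEL_SIZE = 0.1
grid = {}
for point in [(0.21, 0.33, 0.97), (0.58, 0.12, 1.04)]:
    key = voxel_key(point, VOXEL_SIZE)
    center = voxel_center(key, VOXEL_SIZE)
    grid[key] = Voxel(dist=center[2] - 1.0, grad=(0.0, 0.0, 1.0))

surface = [surface_point(k, v, VOXEL_SIZE) for k, v in grid.items()]
```

Only voxels near observed geometry are allocated in `grid`, and every stored voxel yields a point on the plane, which is what lets point-based techniques operate without an intermediate mesh or point cloud.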

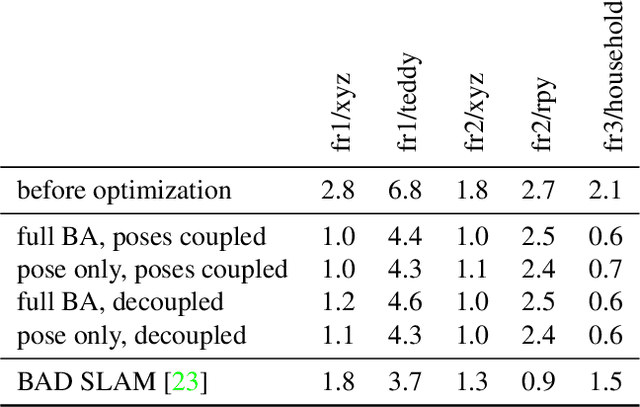

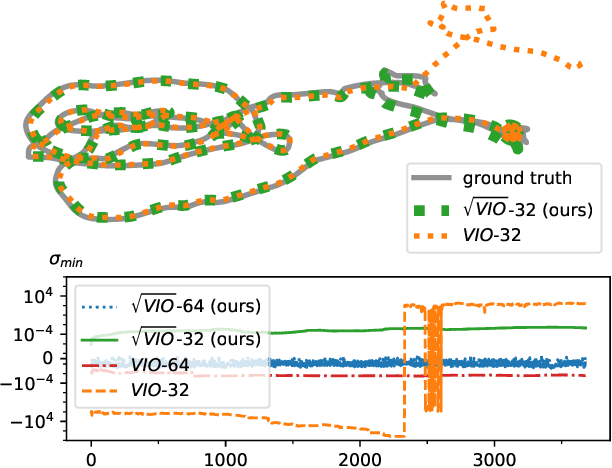

Square Root Marginalization for Sliding-Window Bundle Adjustment

Sep 05, 2021

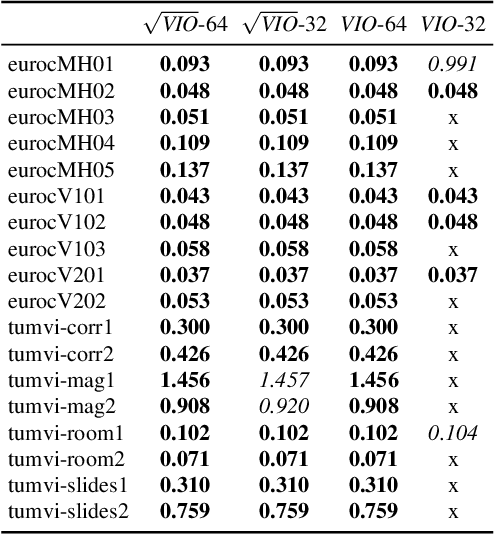

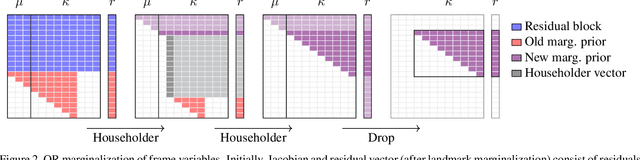

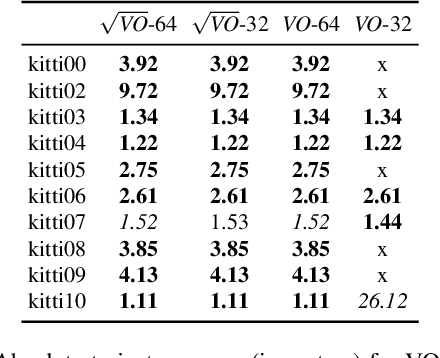

Abstract: In this paper we propose a novel square root sliding-window bundle adjustment suitable for real-time odometry applications. The square root formulation pervades three major aspects of our optimization-based sliding-window estimator: for bundle adjustment we eliminate landmark variables with nullspace projection; to store the marginalization prior we employ a matrix square root of the Hessian; and when marginalizing old poses we avoid forming normal equations and update the square root prior directly with a specialized QR decomposition. We show that the proposed square root marginalization is algebraically equivalent to the conventional use of Schur complement (SC) on the Hessian. Moreover, it elegantly deals with rank-deficient Jacobians producing a prior equivalent to SC with Moore-Penrose inverse. Our evaluation of visual and visual-inertial odometry on real-world datasets demonstrates that the proposed estimator is 36% faster than the baseline. It furthermore shows that in single precision, conventional Hessian-based marginalization leads to numeric failures and reduced accuracy. We analyse numeric properties of the marginalization prior to explain why our square root form does not suffer from the same effect and therefore entails superior performance.
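
The claimed equivalence with the Schur complement can be sketched in a few lines for the simplest case. The notation here is an assumption for illustration: $J = [\,J_m \;\; J_k\,]$ is the linearized Jacobian split into columns for the marginalized variables ($m$) and the kept variables ($k$), $H = J^\top J$, and $J_m$ is taken to have full column rank. With the QR decomposition of $J_m$,

$$
J_m = \begin{bmatrix} Q_1 & Q_2 \end{bmatrix} \begin{bmatrix} R_1 \\ 0 \end{bmatrix},
\qquad
I - J_m \left(J_m^\top J_m\right)^{-1} J_m^\top = Q_2 Q_2^\top ,
$$

the Schur complement on the Hessian becomes

$$
H_{kk} - H_{km} H_{mm}^{-1} H_{mk}
= J_k^\top \left( I - J_m \left(J_m^\top J_m\right)^{-1} J_m^\top \right) J_k
= \left(Q_2^\top J_k\right)^\top \left(Q_2^\top J_k\right).
$$

Under these assumptions $Q_2^\top J_k$ is a matrix square root of the marginalization prior, and it is obtained without ever forming $H$. The rank-deficient case mentioned in the abstract is not covered by this sketch.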

Efficient Derivative Computation for Cumulative B-Splines on Lie Groups

Nov 20, 2019

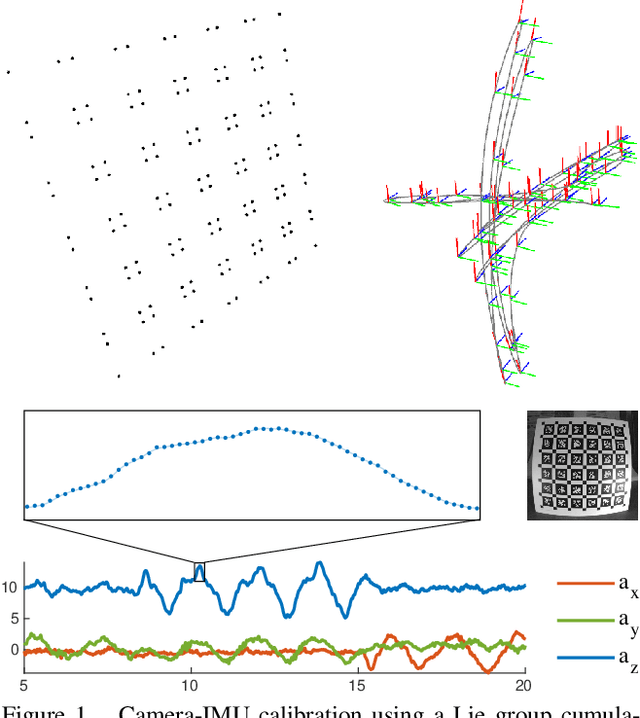

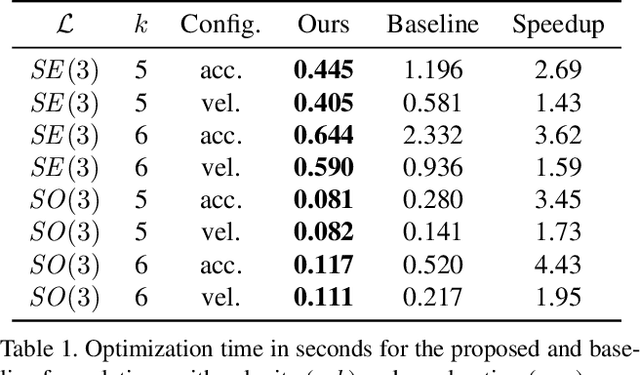

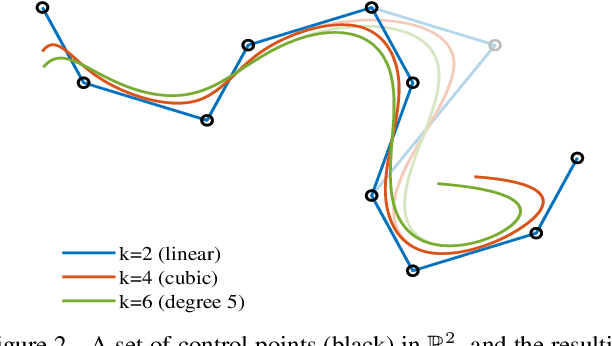

Abstract: Continuous-time trajectory representation has recently gained popularity for tasks where the fusion of high-frame-rate sensors and multiple unsynchronized devices is required. Lie group cumulative B-splines are a popular way of representing continuous trajectories without singularities. They have been used in near real-time SLAM and odometry systems with IMU, LiDAR, regular, RGB-D and event cameras, as well as for offline calibration. These applications require efficient computation of time derivatives (velocity, acceleration), but all prior works rely on a computationally suboptimal formulation. In this work we present an alternative derivation of time derivatives based on recurrence relations that needs $\mathcal{O}(k)$ instead of $\mathcal{O}(k^2)$ matrix operations (for a spline of order $k$) and results in simple and elegant expressions. While producing the same result, the proposed approach significantly speeds up the trajectory optimization and allows for computing simple analytic derivatives with respect to spline knots. The results presented in this paper pave the way for incorporating continuous-time trajectory representations into more applications where real-time performance is required.
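
To make the term "cumulative B-spline" concrete, here is a minimal sketch for the Euclidean (scalar) case with a uniform cubic spline, i.e. order $k = 4$. It checks that the cumulative form, which sums weighted differences of consecutive control points, evaluates to the same value as the standard basis form. On a Lie group the differences become group increments and the sum becomes a product; that generalization, and the paper's recurrence for time derivatives, are not shown. All function names are hypothetical.

```python
def uniform_cubic_basis(u):
    """Standard uniform cubic B-spline basis B_0..B_3 for u in [0, 1)."""
    return [
        (1 - u) ** 3 / 6,
        (3 * u**3 - 6 * u**2 + 4) / 6,
        (-3 * u**3 + 3 * u**2 + 3 * u + 1) / 6,
        u**3 / 6,
    ]

def cumulative_basis(u):
    """Cumulative basis: lam[j] = B_j + ... + B_3, so lam[0] == 1."""
    b = uniform_cubic_basis(u)
    return [sum(b[j:]) for j in range(4)]

def eval_standard(p, u):
    """p(u) = sum_j B_j(u) * p_j."""
    return sum(bj * pj for bj, pj in zip(uniform_cubic_basis(u), p))

def eval_cumulative(p, u):
    """p(u) = p_0 + sum_{j>=1} lam_j(u) * (p_j - p_{j-1})."""
    lam = cumulative_basis(u)
    value = p[0]
    for j in range(1, 4):
        value += lam[j] * (p[j] - p[j - 1])
    return value

control_points = [0.0, 1.5, -2.0, 4.0]
samples = [0.0, 0.25, 0.5, 0.9]
differences = [
    abs(eval_standard(control_points, u) - eval_cumulative(control_points, u))
    for u in samples
]
```

The cumulative form matters because it only ever uses differences between neighbouring control points, which have a well-defined counterpart on Lie groups where control points cannot simply be added.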

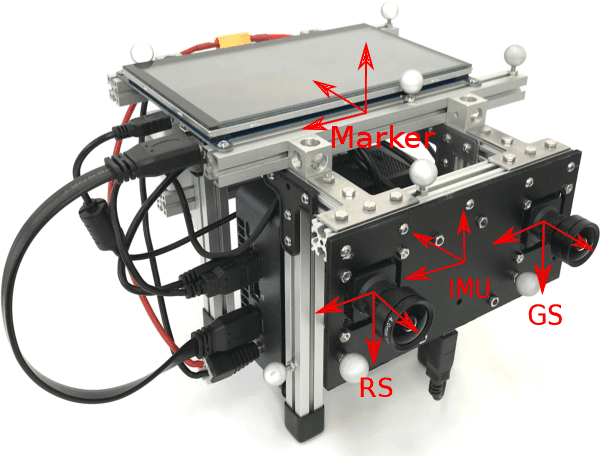

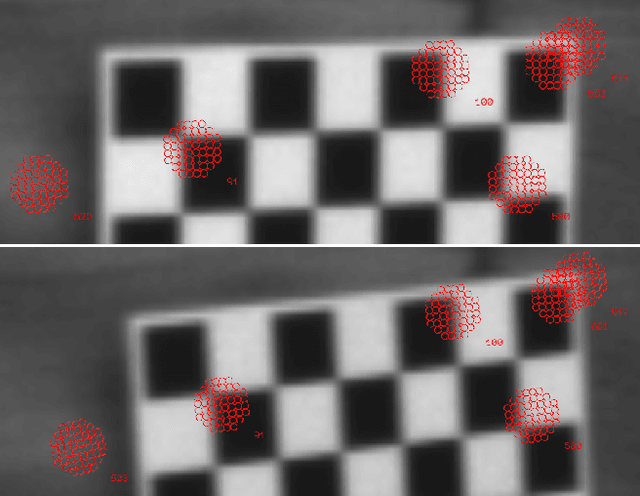

Rolling-Shutter Modelling for Direct Visual-Inertial Odometry

Nov 04, 2019

Abstract: We present a direct visual-inertial odometry (VIO) method which estimates the motion of the sensor setup and sparse 3D geometry of the environment based on measurements from a rolling-shutter camera and an inertial measurement unit (IMU). The visual part of the system performs a photometric bundle adjustment on a sparse set of points. This direct approach does not extract feature points and is able to track not only corners, but any pixels with sufficient gradient magnitude. Neglecting rolling-shutter effects in the visual part severely degrades accuracy and robustness of the system. In this paper, we incorporate a rolling-shutter model into the photometric bundle adjustment that estimates a set of recent keyframe poses and the inverse depth of a sparse set of points. IMU information is accumulated between several frames using measurement preintegration, and is inserted into the optimization as an additional constraint between selected keyframes. For every keyframe we estimate not only the pose but also velocity and biases to correct the IMU measurements. Unlike systems with global-shutter cameras, we use both IMU measurements and rolling-shutter effects of the camera to estimate velocity and biases for every state. Last, we evaluate our system on a novel dataset that contains global-shutter and rolling-shutter images, IMU data and ground-truth poses for ten different sequences, which we make publicly available. Evaluation shows that the proposed method outperforms a system where rolling shutter is not modelled and achieves similar accuracy to the global-shutter method on global-shutter data.

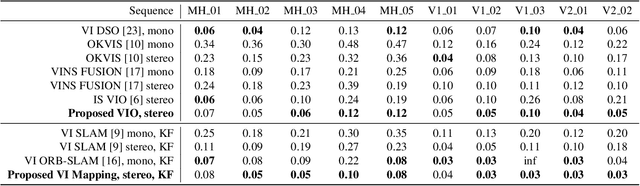

Visual-Inertial Mapping with Non-Linear Factor Recovery

Apr 29, 2019

Abstract: Cameras and inertial measurement units are complementary sensors for ego-motion estimation and environment mapping. Their combination makes visual-inertial odometry (VIO) systems more accurate and robust. For globally consistent mapping, however, combining visual and inertial information is not straightforward. To estimate the motion and geometry with a set of images, large baselines are required. Because of that, most systems operate on keyframes that have large time intervals between each other. Inertial data, on the other hand, quickly degrades with the duration of the intervals, and after several seconds of integration it typically contains only little useful information. In this paper, we propose to extract relevant information for visual-inertial mapping from visual-inertial odometry using non-linear factor recovery. We reconstruct a set of non-linear factors that make an optimal approximation of the information on the trajectory accumulated by VIO. To obtain a globally consistent map we combine these factors with loop-closing constraints using bundle adjustment. The VIO factors make the roll and pitch angles of the global map observable, and improve the robustness and the accuracy of the mapping. In experiments on a public benchmark, we demonstrate superior performance of our method over the state-of-the-art approaches.

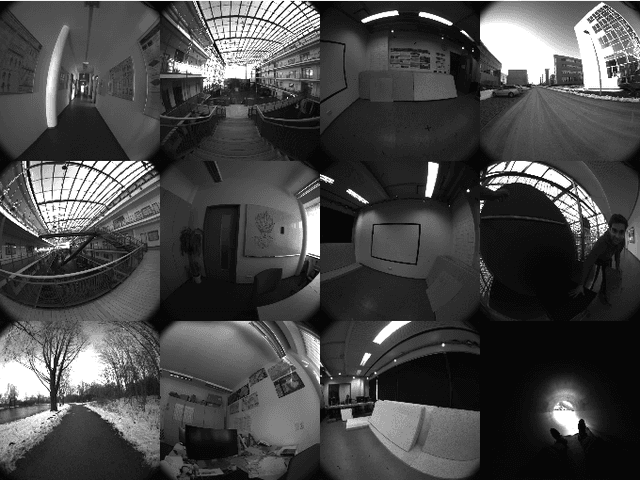

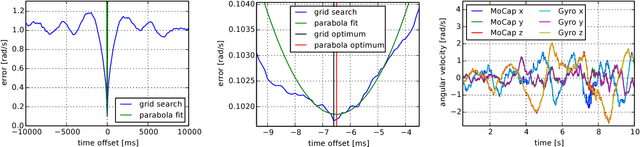

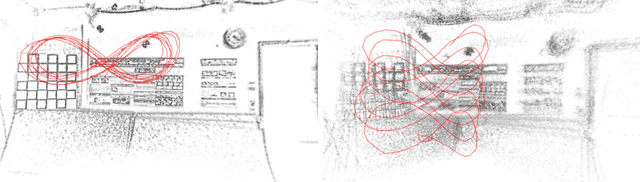

The TUM VI Benchmark for Evaluating Visual-Inertial Odometry

Sep 20, 2018

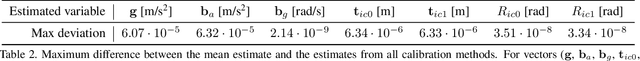

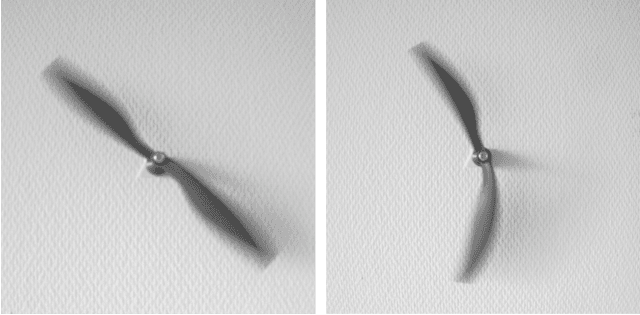

Abstract: Visual odometry and SLAM methods have a large variety of applications in domains such as augmented reality or robotics. Complementing vision sensors with inertial measurements tremendously improves tracking accuracy and robustness, and thus has spawned large interest in the development of visual-inertial (VI) odometry approaches. In this paper, we propose the TUM VI benchmark, a novel dataset with a diverse set of sequences in different scenes for evaluating VI odometry. It provides camera images with 1024x1024 resolution at 20 Hz, high dynamic range and photometric calibration. An IMU measures accelerations and angular velocities on 3 axes at 200 Hz, while the cameras and IMU sensors are time-synchronized in hardware. For trajectory evaluation, we also provide accurate pose ground truth from a motion capture system at high frequency (120 Hz) at the start and end of the sequences, which we accurately aligned with the camera and IMU measurements. The full dataset with raw and calibrated data is publicly available. We also evaluate state-of-the-art VI odometry approaches on our dataset.

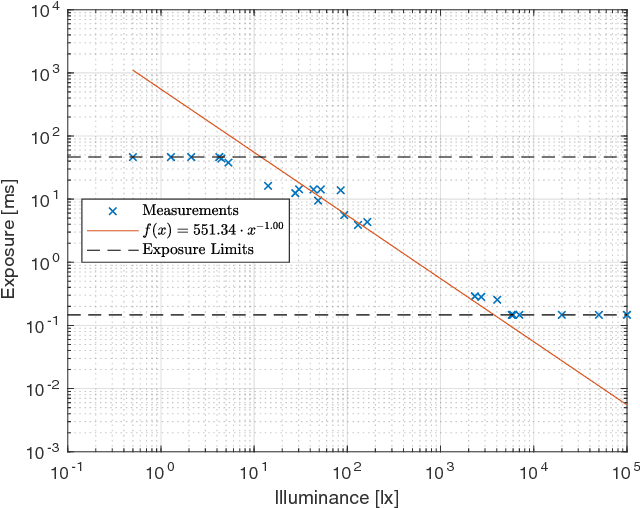

Direct Sparse Odometry with Rolling Shutter

Aug 01, 2018

Abstract: Neglecting the effects of rolling-shutter cameras for visual odometry (VO) severely degrades accuracy and robustness. In this paper, we propose a novel direct monocular VO method that incorporates a rolling-shutter model. Our approach extends direct sparse odometry, which performs direct bundle adjustment of a set of recent keyframe poses and the depths of a sparse set of image points. We estimate the velocity at each keyframe and impose a constant-velocity prior for the optimization. In this way, we obtain a near real-time, accurate direct VO method. Our approach achieves improved results on challenging rolling-shutter sequences over state-of-the-art global-shutter VO.
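
The basic idea behind a rolling-shutter model can be sketched as follows: each image row is exposed at a slightly different time, so the camera pose differs per row. The sketch below assumes a fixed per-row delay and a translation-only, constant-velocity motion within one frame. The function names, the `line_delay` parameter, and the numeric values are hypothetical, and the paper's actual model, which also covers rotation, is not reproduced here.

```python
def row_capture_time(t_first_row, row, line_delay):
    """Capture time of an image row, assuming rows are read out top to bottom
    with a fixed delay between consecutive rows."""
    return t_first_row + row * line_delay

def position_at_row(pos_ref, velocity, row, ref_row, line_delay):
    """Camera position when `row` was captured, extrapolated from the position
    at `ref_row` under a constant-velocity assumption (translation only)."""
    dt = (row - ref_row) * line_delay
    return tuple(p + v * dt for p, v in zip(pos_ref, velocity))

# Assumed values: 100 microseconds per row, camera moving at 1 m/s along x.
LINE_DELAY = 1e-4
t_row_480 = row_capture_time(t_first_row=0.0, row=480, line_delay=LINE_DELAY)
pos_row_480 = position_at_row(
    pos_ref=(0.0, 0.0, 0.0), velocity=(1.0, 0.0, 0.0),
    row=480, ref_row=0, line_delay=LINE_DELAY,
)
```

With these assumed numbers the camera has moved almost 5 cm between the first row and row 480, which gives a sense of why treating the whole image as captured at one instant degrades accuracy.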
