Daniel Lenton

Waypoint Planning Networks

May 01, 2021

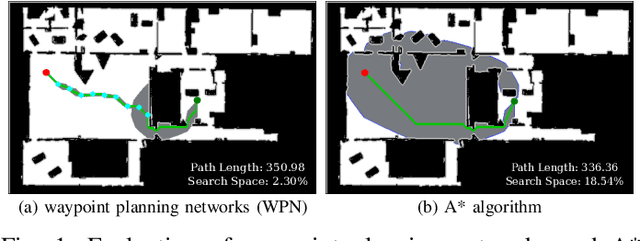

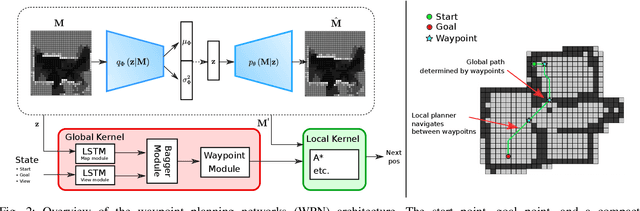

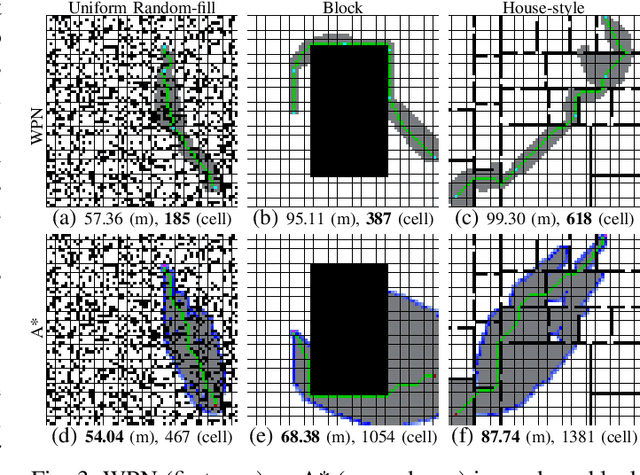

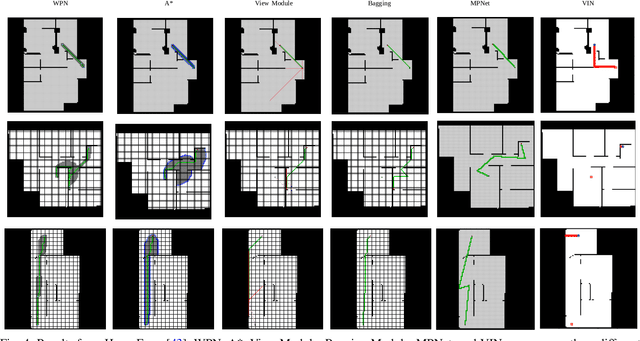

Abstract: With recent advances in machine learning, path planning algorithms are also evolving; however, learned path planning algorithms often have difficulty matching the success rates of classic algorithms. We propose waypoint planning networks (WPN), a hybrid algorithm based on LSTMs with a local kernel (a classic algorithm such as A*) and a global kernel (a learned algorithm). WPN produces a more computationally efficient and robust solution. We compare WPN against A*, as well as related works including motion planning networks (MPNet) and value iteration networks (VIN). In this paper, the design and experiments have been conducted for 2D environments. Experimental results outline the benefits of WPN, both in efficiency and generalization. It is shown that WPN's search space is considerably smaller than that of A*, while still generating near-optimal results. Additionally, WPN works on partial maps, unlike A*, which needs the full map in advance. The code is available online.
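For readers unfamiliar with the classic baseline, the following is a minimal sketch of A* on a 4-connected 2D occupancy grid. It is not the WPN code; the function name `astar`, the grid encoding (1 marks an obstacle), and the returned count of expanded cells are assumptions made for illustration. The expanded-cell count is one simple way to measure the "search space" that the abstract compares.

```python
import heapq


def astar(grid, start, goal):
    """Return (path, expanded) on a 4-connected grid; 1 marks an obstacle.

    `path` is a list of (row, col) cells from start to goal, or None if the
    goal is unreachable. `expanded` is the number of cells popped and closed.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f, g, cell)
    g_cost = {start: 0}
    parent = {start: None}
    closed = set()

    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell in closed:
            continue  # stale heap entry for an already expanded cell
        closed.add(cell)
        if cell == goal:
            # Walk parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1], len(closed)
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if not (0 <= nr < rows and 0 <= nc < cols) or grid[nr][nc] == 1:
                continue
            new_g = g + 1
            if new_g < g_cost.get(nxt, float("inf")):
                g_cost[nxt] = new_g
                parent[nxt] = cell
                heapq.heappush(open_heap, (new_g + h(nxt), new_g, nxt))
    return None, len(closed)
```

Note that this sketch needs the full grid up front, which is the limitation of A* that the abstract contrasts with WPN's ability to work on partial maps.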

End-to-End Egospheric Spatial Memory

Feb 17, 2021

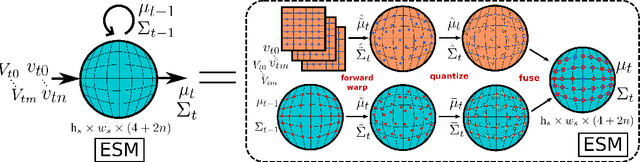

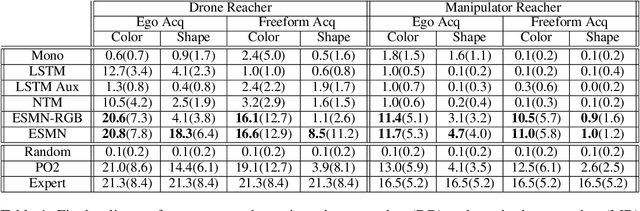

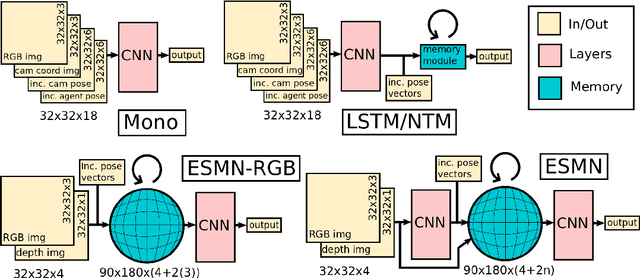

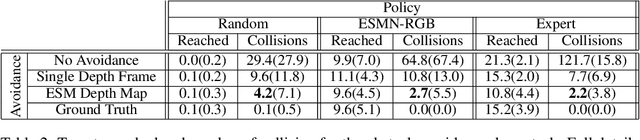

Abstract: Spatial memory, or the ability to remember and recall specific locations and objects, is central to autonomous agents' ability to carry out tasks in real environments. However, most existing artificial memory modules are not very adept at storing spatial information. We propose a parameter-free module, Egospheric Spatial Memory (ESM), which encodes the memory in an ego-sphere around the agent, enabling expressive 3D representations. ESM can be trained end-to-end via either imitation or reinforcement learning, and improves both training efficiency and final performance against other memory baselines on both drone and manipulator visuomotor control tasks. The explicit egocentric geometry also enables us to seamlessly combine the learned controller with other non-learned modalities, such as local obstacle avoidance. We further show applications to semantic segmentation on the ScanNet dataset, where ESM naturally combines image-level and map-level inference modalities. Through our broad set of experiments, we show that ESM provides a general computation graph for embodied spatial reasoning, and the module forms a bridge between real-time mapping systems and differentiable memory architectures. Implementation at: https://github.com/ivy-dl/memory.

Ivy: Templated Deep Learning for Inter-Framework Portability

Feb 15, 2021

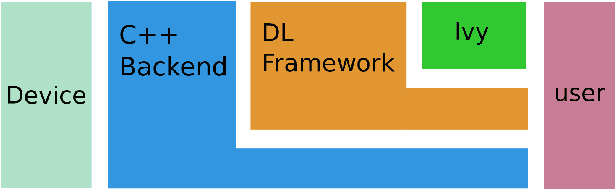

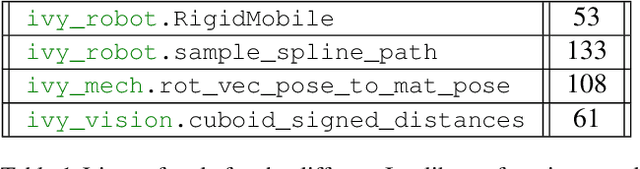

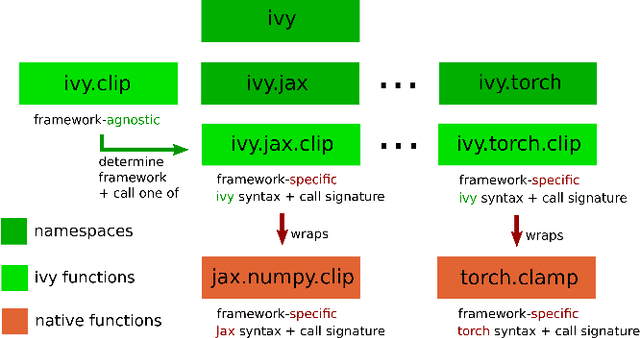

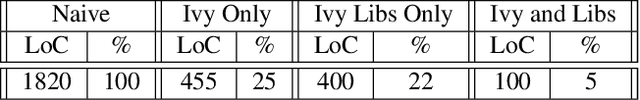

Abstract: We introduce Ivy, a templated Deep Learning (DL) framework which abstracts existing DL frameworks such that their core functions all exhibit consistent call signatures, syntax and input-output behaviour. Ivy allows high-level framework-agnostic functions to be implemented through the use of framework templates. The framework templates act as placeholders for the specific framework at development time, which is then determined at runtime. The portability of Ivy functions enables their use in projects of any supported framework. Ivy currently supports TensorFlow, PyTorch, MXNet, JAX and NumPy. Alongside Ivy, we release four pure-Ivy libraries for mechanics, 3D vision, robotics, and differentiable environments. Through our evaluations, we show that Ivy can significantly reduce lines of code with a runtime overhead of less than 1% in most cases. We welcome developers to join the Ivy community by writing their own functions, layers and libraries in Ivy, maximizing their audience and helping to accelerate DL research through the creation of lifelong inter-framework codebases. More information can be found at https://ivy-dl.org.
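The idea of a placeholder that is bound to a concrete framework at runtime can be illustrated with a small sketch. This is not Ivy's API: the function `euclidean_norm`, the parameter name `f`, and the `ListBackend` class are hypothetical names chosen for illustration. The only assumption is that each backend exposes `multiply`, `sum` and `sqrt` with matching call signatures, which NumPy does.

```python
import math

import numpy as np


class ListBackend:
    """Hypothetical stand-in backend that operates on plain Python lists."""

    @staticmethod
    def multiply(a, b):
        return [p * q for p, q in zip(a, b)]

    @staticmethod
    def sum(a):
        return math.fsum(a)

    @staticmethod
    def sqrt(a):
        return math.sqrt(a)


def euclidean_norm(x, f):
    """Framework-agnostic function: `f` is the backend chosen at call time."""
    return f.sqrt(f.sum(f.multiply(x, x)))


# The same function body runs against either backend.
norm_np = euclidean_norm(np.array([3.0, 4.0]), np)
norm_list = euclidean_norm([3.0, 4.0], ListBackend)
```

Because `euclidean_norm` only touches the backend through `f`, nothing in its body names a specific framework; the caller decides which one is used.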

Learning To Find Shortest Collision-Free Paths From Images

Nov 30, 2020

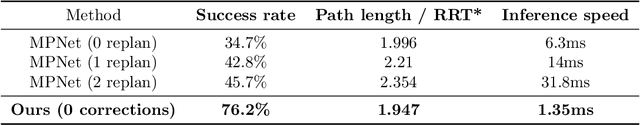

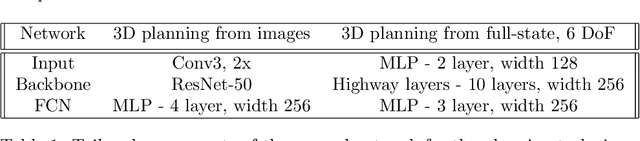

Abstract: Motion planning is a fundamental problem in robotics and machine perception. Sampling-based planners find accurate solutions by exhaustively exploring the space, but are inefficient and tend to produce jerky motions. Optimization and learning-based planners are more efficient and produce smooth trajectories. However, a significant hurdle that these approaches face is constructing a differentiable cost function that simultaneously minimizes path length and avoids collisions. These two objectives are conflicting by nature -- path length is continuous and well-behaved, but collisions are discrete non-differentiable events. Reconciling these terms has been a significant challenge in optimization-based motion planning. The main contribution of this paper is a novel cost function that guarantees collision-free shortest paths are found at its minimum. We show that our approach works seamlessly with RGBD input and predicts high-quality paths in 2D, 3D, and 6 DoF robotic manipulator settings. Our method also reduces training and inference time compared to existing approaches, in some cases by orders of magnitude.
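To make the tension between the two objectives concrete, here is a minimal sketch of a generic soft-penalty cost for a 2D path and a single circular obstacle. It is not the cost function proposed in the paper; the hinge penalty, the `weight` parameter, and all function names are assumptions for illustration. It checks waypoints only, not the segments between them, so it is deliberately simplistic.

```python
import math


def path_length(waypoints):
    """Sum of Euclidean segment lengths: continuous in the waypoints."""
    return sum(math.dist(a, b) for a, b in zip(waypoints, waypoints[1:]))


def collision_penalty(waypoints, center, radius):
    """Hinge penalty: positive only for waypoints inside the obstacle disc."""
    return sum(max(0.0, radius - math.dist(p, center)) for p in waypoints)


def total_cost(waypoints, center, radius, weight=10.0):
    """Weighted sum of the two terms; `weight` is a hand-picked assumption."""
    penalty = collision_penalty(waypoints, center, radius)
    return path_length(waypoints) + weight * penalty


obstacle_center, obstacle_radius = (1.0, 0.0), 0.5
straight = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]  # shortest, but collides
detour = [(0.0, 0.0), (1.0, 1.0), (2.0, 0.0)]  # longer, collision-free

cost_straight = total_cost(straight, obstacle_center, obstacle_radius)
cost_detour = total_cost(detour, obstacle_center, obstacle_radius)
```

With this weighting the detour scores lower than the straight line, but the outcome depends on the hand-tuned `weight`, which is the kind of fragility that motivates a cost with guarantees at its minimum.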

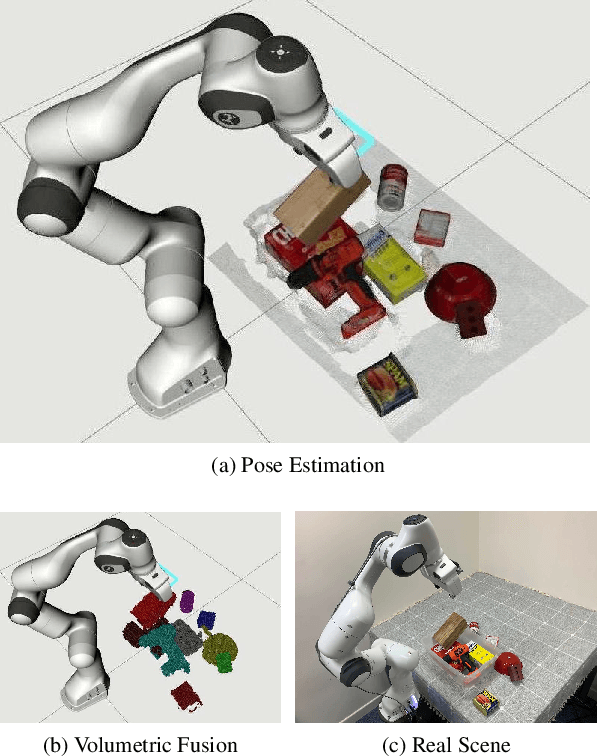

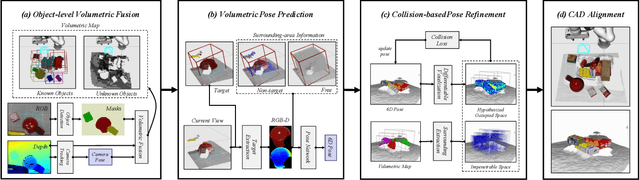

MoreFusion: Multi-object Reasoning for 6D Pose Estimation from Volumetric Fusion

Apr 09, 2020

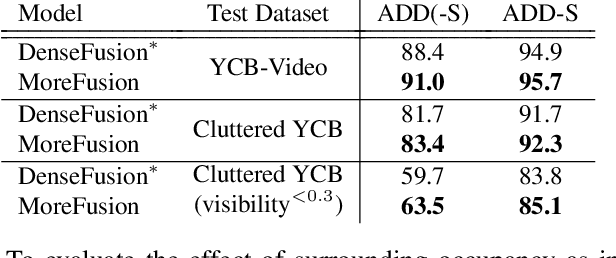

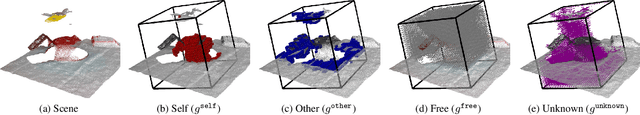

Abstract: Robots and other smart devices need efficient object-based scene representations from their on-board vision systems to reason about contact, physics and occlusion. Recognized precise object models will play an important role alongside non-parametric reconstructions of unrecognized structures. We present a system which can estimate the accurate poses of multiple known objects in contact and occlusion from real-time, embodied multi-view vision. Our approach makes 3D object pose proposals from single RGB-D views, accumulates pose estimates and non-parametric occupancy information from multiple views as the camera moves, and performs joint optimization to estimate consistent, non-intersecting poses for multiple objects in contact. We verify the accuracy and robustness of our approach experimentally on two object datasets: YCB-Video, and our own challenging Cluttered YCB-Video. We demonstrate a real-time robotics application where a robot arm disassembles complicated piles of objects precisely and in an orderly manner, using only on-board RGB-D vision.
