Chris Ford

AnyRotate: Gravity-Invariant In-Hand Object Rotation with Sim-to-Real Touch

May 12, 2024

Abstract: In-hand manipulation is an integral component of human dexterity. Our hands rely on tactile feedback for stable and reactive motions to ensure objects do not slip away unintentionally during manipulation. For a robot hand, this level of dexterity requires extracting and utilizing rich contact information for precise motor control. In this paper, we present AnyRotate, a system for gravity-invariant multi-axis in-hand object rotation using dense featured sim-to-real touch. We construct a continuous contact feature representation to provide tactile feedback for training a policy in simulation and introduce an approach to perform zero-shot policy transfer by training an observation model to bridge the sim-to-real gap. Our experiments highlight the benefit of detailed contact information when handling objects with varying properties. In the real world, we demonstrate successful sim-to-real transfer of the dense tactile policy, generalizing to a diverse range of objects for various rotation axes and hand directions and outperforming other forms of low-dimensional touch. Interestingly, despite not having explicit slip detection, rich multi-fingered tactile sensing can implicitly detect object movement within grasp and provide a reactive behavior that improves the robustness of the policy, highlighting the importance of information-rich tactile sensing for in-hand manipulation.

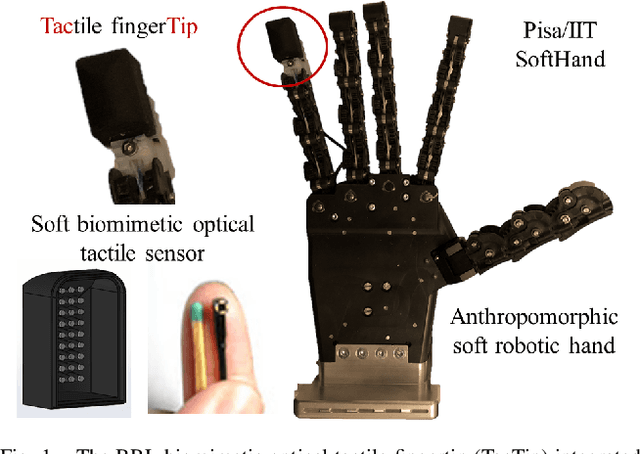

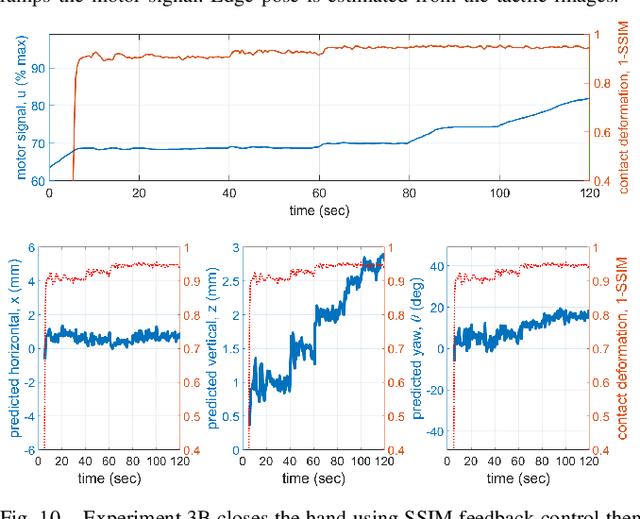

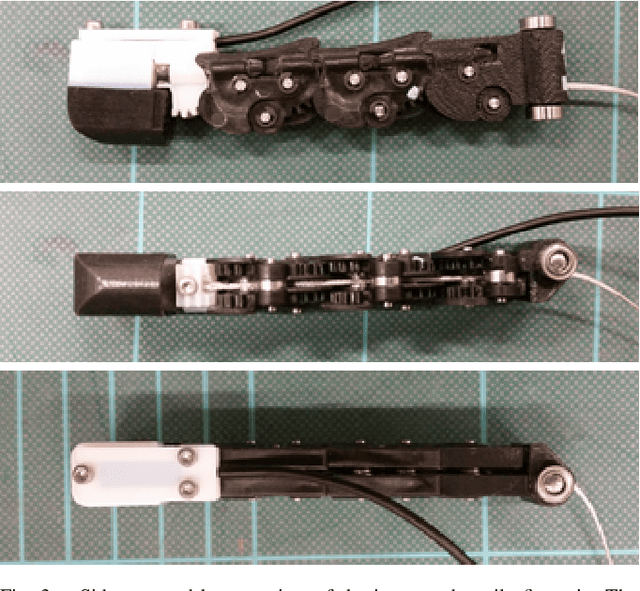

Towards integrated tactile sensorimotor control in anthropomorphic soft robotic hands

Feb 05, 2021

Abstract: In this work, we report on the integrated sensorimotor control of the Pisa/IIT SoftHand, an anthropomorphic soft robot hand designed around the principle of adaptive synergies, with the BRL tactile fingertip (TacTip), a soft biomimetic optical tactile sensor based on the human sense of touch. Our focus is how a sense of touch can be used to control an anthropomorphic hand with one degree of actuation, based on an integration that respects the hand's mechanical functionality. We consider: (i) closed-loop tactile control to establish a light contact on an unknown held object, based on the structural similarity with an undeformed tactile image; and (ii) controlling the estimated pose of an edge feature of a held object, using a convolutional neural network approach developed for controlling other sensors in the TacTip family. Overall, this gives a foundation to endow soft robotic hands with human-like touch, with implications for autonomous grasping, manipulation, human-robot interaction and prosthetics. Supplemental video: https://youtu.be/ndsxj659bkQ
