Asad Ali Shahid

Robot Drummer: Learning Rhythmic Skills for Humanoid Drumming

Jul 16, 2025

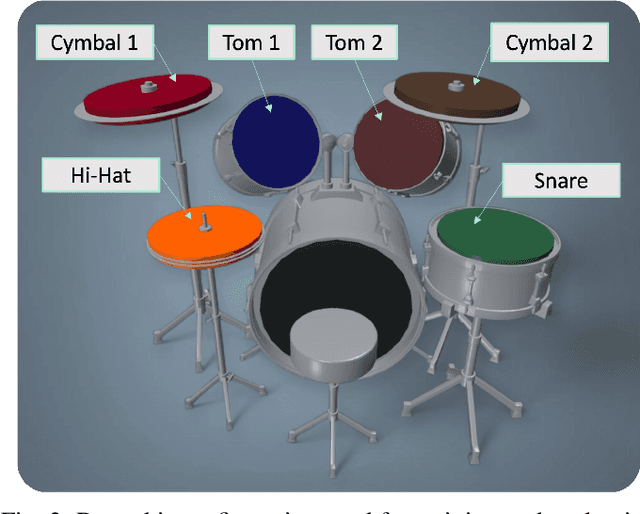

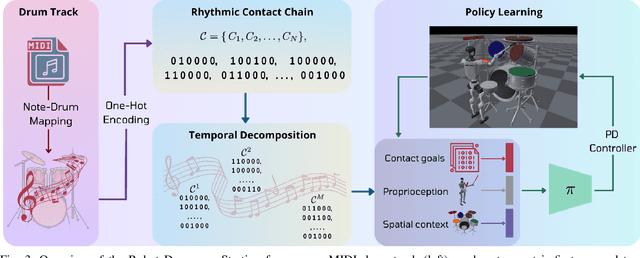

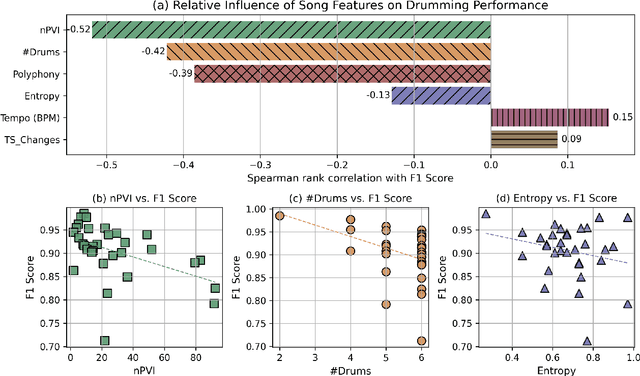

Abstract:Humanoid robots have seen remarkable advances in dexterity, balance, and locomotion, yet their role in expressive domains such as music performance remains largely unexplored. Musical tasks, like drumming, present unique challenges, including split-second timing, rapid contacts, and multi-limb coordination over performances lasting minutes. In this paper, we introduce Robot Drummer, a humanoid capable of expressive, high-precision drumming across a diverse repertoire of songs. We formulate humanoid drumming as sequential fulfillment of timed contacts and transform drum scores into a Rhythmic Contact Chain. To handle the long-horizon nature of musical performance, we decompose each piece into fixed-length segments and train a single policy across all segments in parallel using reinforcement learning. Through extensive experiments on over thirty popular rock, metal, and jazz tracks, our results demonstrate that Robot Drummer consistently achieves high F1 scores. The learned behaviors exhibit emergent human-like drumming strategies, such as cross-arm strikes, and adaptive stick assignments, demonstrating the potential of reinforcement learning to bring humanoid robots into the domain of creative musical performance. Project page: robotdrummer.github.io

Integrating Reinforcement Learning with Foundation Models for Autonomous Robotics: Methods and Perspectives

Oct 21, 2024

Abstract:Foundation models (FMs), large deep learning models pre-trained on vast, unlabeled datasets, exhibit powerful capabilities in understanding complex patterns and generating sophisticated outputs. However, they often struggle to adapt to specific tasks. Reinforcement learning (RL), which allows agents to learn through interaction and feedback, offers a compelling solution. Integrating RL with FMs enables these models to achieve desired outcomes and excel at particular tasks. Additionally, RL can be enhanced by leveraging the reasoning and generalization capabilities of FMs. This synergy is revolutionizing various fields, including robotics. FMs, rich in knowledge and generalization, provide robots with valuable information, while RL facilitates learning and adaptation through real-world interactions. This survey paper comprehensively explores this exciting intersection, examining how these paradigms can be integrated to advance robotic intelligence. We analyze the use of foundation models as action planners, the development of robotics-specific foundation models, and the mutual benefits of combining FMs with RL. Furthermore, we present a taxonomy of integration approaches, including large language models, vision-language models, diffusion models, and transformer-based RL models. We also explore how RL can utilize world representations learned from FMs to enhance robotic task execution. Our survey aims to synthesize current research and highlight key challenges in robotic reasoning and control, particularly in the context of integrating FMs and RL--two rapidly evolving technologies. By doing so, we seek to spark future research and emphasize critical areas that require further investigation to enhance robotics. We provide an updated collection of papers based on our taxonomy, accessible on our open-source project website at: https://github.com/clmoro/Robotics-RL-FMs-Integration.

RoboMorph: In-Context Meta-Learning for Robot Dynamics Modeling

Sep 18, 2024

Abstract:The landscape of Deep Learning has experienced a major shift with the pervasive adoption of Transformer-based architectures, particularly in Natural Language Processing (NLP). Novel avenues for physical applications, such as solving Partial Differential Equations and Image Vision, have been explored. However, in challenging domains like robotics, where high non-linearity poses significant challenges, Transformer-based applications are scarce. While Transformers have been used to provide robots with knowledge about high-level tasks, few efforts have been made to perform system identification. This paper proposes a novel methodology to learn a meta-dynamical model of a high-dimensional physical system, such as the Franka robotic arm, using a Transformer-based architecture without prior knowledge of the system's physical parameters. The objective is to predict quantities of interest (end-effector pose and joint positions) given the torque signals for each joint. This prediction can be useful as a component for Deep Model Predictive Control frameworks in robotics. The meta-model establishes the correlation between torques and positions and predicts the output for the complete trajectory. This work provides empirical evidence of the efficacy of the in-context learning paradigm, suggesting future improvements in learning the dynamics of robotic systems without explicit knowledge of physical parameters. Code, videos, and supplementary materials can be found at project website. See https://sites.google.com/view/robomorph/

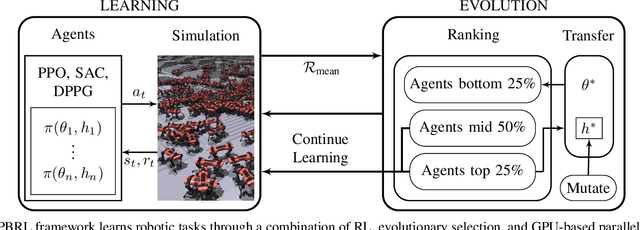

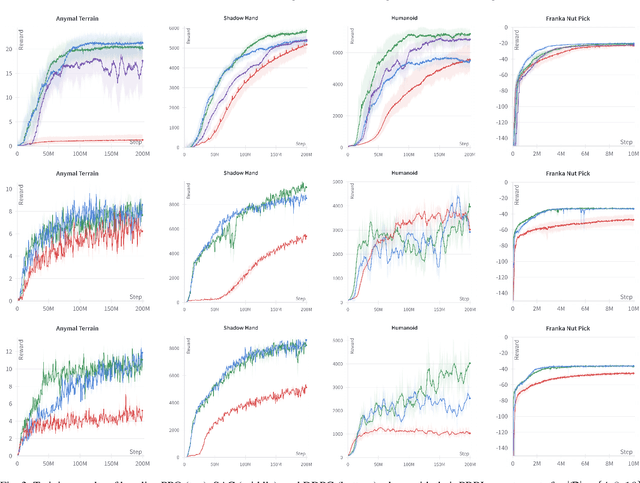

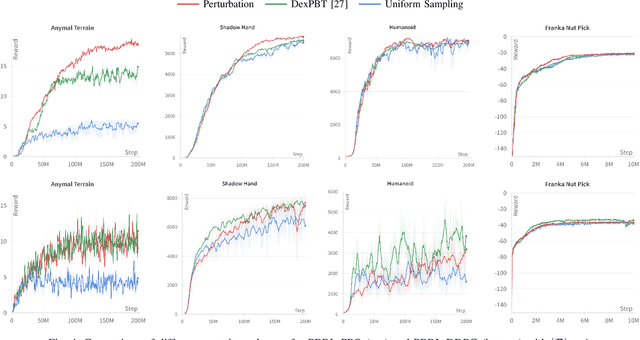

Scaling Population-Based Reinforcement Learning with GPU Accelerated Simulation

Apr 08, 2024

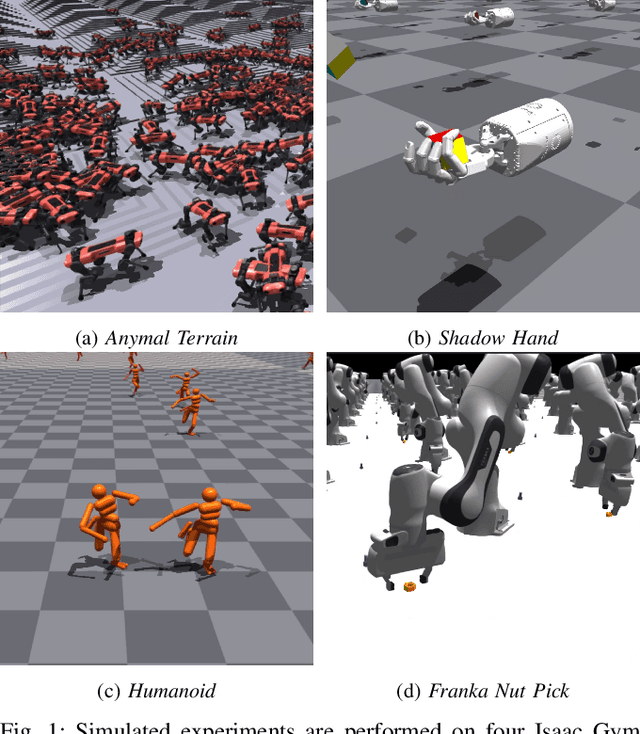

Abstract:In recent years, deep reinforcement learning (RL) has shown its effectiveness in solving complex continuous control tasks like locomotion and dexterous manipulation. However, this comes at the cost of an enormous amount of experience required for training, exacerbated by the sensitivity of learning efficiency and the policy performance to hyperparameter selection, which often requires numerous trials of time-consuming experiments. This work introduces a Population-Based Reinforcement Learning (PBRL) approach that exploits a GPU-accelerated physics simulator to enhance the exploration capabilities of RL by concurrently training multiple policies in parallel. The PBRL framework is applied to three state-of-the-art RL algorithms -- PPO, SAC, and DDPG -- dynamically adjusting hyperparameters based on the performance of learning agents. The experiments are performed on four challenging tasks in Isaac Gym -- Anymal Terrain, Shadow Hand, Humanoid, Franka Nut Pick -- by analyzing the effect of population size and mutation mechanisms for hyperparameters. The results show that PBRL agents achieve superior performance, in terms of cumulative reward, compared to non-evolutionary baseline agents. The trained agents are finally deployed in the real world for a Franka Nut Pick task, demonstrating successful sim-to-real transfer. Code and videos of the learned policies are available on our project website.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge