Anthony D. Joseph

Using Multitask Learning to Improve 12-Lead Electrocardiogram Classification

Dec 04, 2018

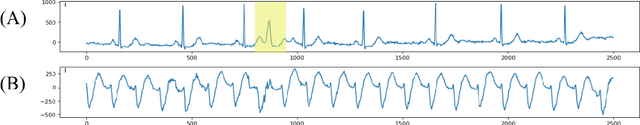

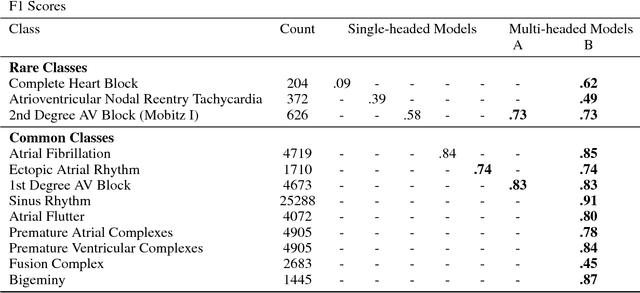

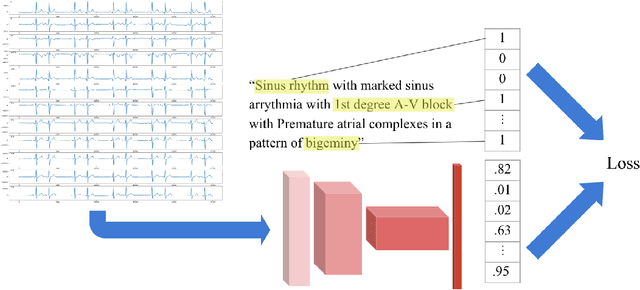

Abstract:We develop a multi-task convolutional neural network (CNN) to classify multiple diagnoses from 12-lead electrocardiograms (ECGs) using a dataset comprised of over 40,000 ECGs, with labels derived from cardiologist clinical interpretations. Since many clinically important classes can occur in low frequencies, approaches are needed to improve performance on rare classes. We compare the performance of several single-class classifiers on rare classes to a multi-headed classifier across all available classes. We demonstrate that the addition of common classes can significantly improve CNN performance on rarer classes when compared to a model trained on the rarer class in isolation. Using this method, we develop a model with high performance as measured by F1 score on multiple clinically relevant classes compared against the gold-standard cardiologist interpretation.

A Berkeley View of Systems Challenges for AI

Dec 15, 2017

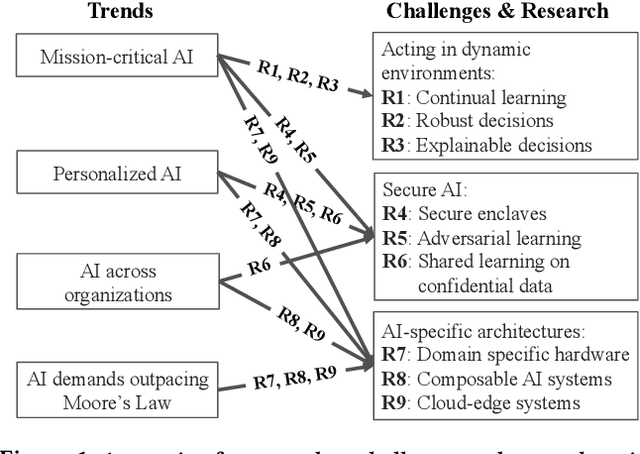

Abstract:With the increasing commoditization of computer vision, speech recognition and machine translation systems and the widespread deployment of learning-based back-end technologies such as digital advertising and intelligent infrastructures, AI (Artificial Intelligence) has moved from research labs to production. These changes have been made possible by unprecedented levels of data and computation, by methodological advances in machine learning, by innovations in systems software and architectures, and by the broad accessibility of these technologies. The next generation of AI systems promises to accelerate these developments and increasingly impact our lives via frequent interactions and making (often mission-critical) decisions on our behalf, often in highly personalized contexts. Realizing this promise, however, raises daunting challenges. In particular, we need AI systems that make timely and safe decisions in unpredictable environments, that are robust against sophisticated adversaries, and that can process ever increasing amounts of data across organizations and individuals without compromising confidentiality. These challenges will be exacerbated by the end of the Moore's Law, which will constrain the amount of data these technologies can store and process. In this paper, we propose several open research directions in systems, architectures, and security that can address these challenges and help unlock AI's potential to improve lives and society.

Evasion and Hardening of Tree Ensemble Classifiers

May 27, 2016

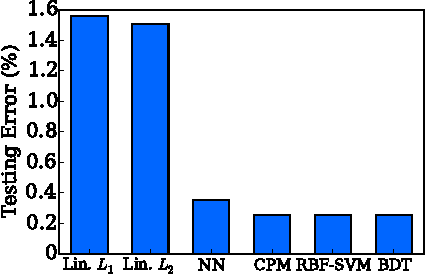

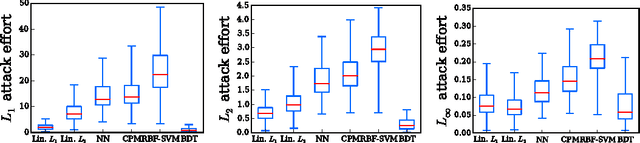

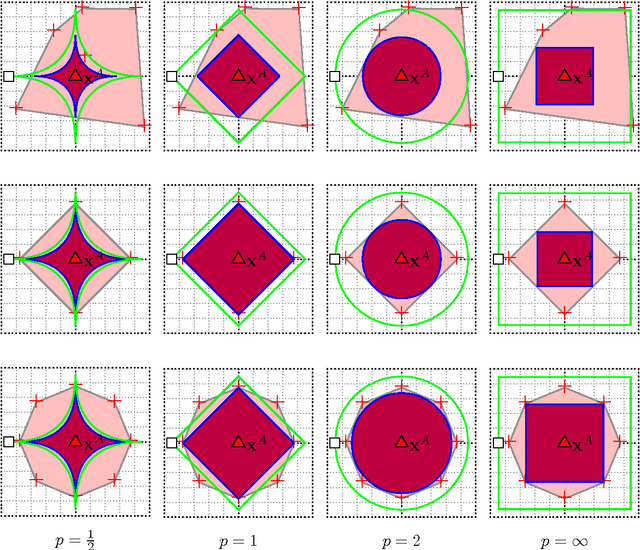

Abstract:Classifier evasion consists in finding for a given instance $x$ the nearest instance $x'$ such that the classifier predictions of $x$ and $x'$ are different. We present two novel algorithms for systematically computing evasions for tree ensembles such as boosted trees and random forests. Our first algorithm uses a Mixed Integer Linear Program solver and finds the optimal evading instance under an expressive set of constraints. Our second algorithm trades off optimality for speed by using symbolic prediction, a novel algorithm for fast finite differences on tree ensembles. On a digit recognition task, we demonstrate that both gradient boosted trees and random forests are extremely susceptible to evasions. Finally, we harden a boosted tree model without loss of predictive accuracy by augmenting the training set of each boosting round with evading instances, a technique we call adversarial boosting.

Query Strategies for Evading Convex-Inducing Classifiers

Jul 03, 2010

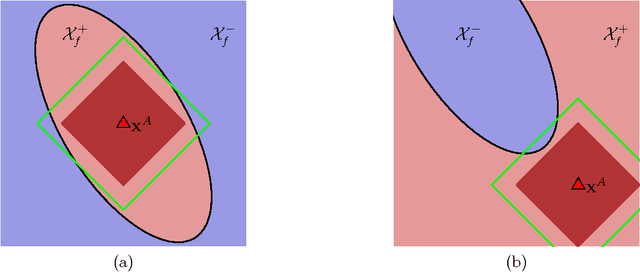

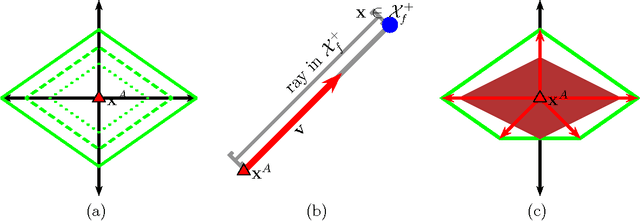

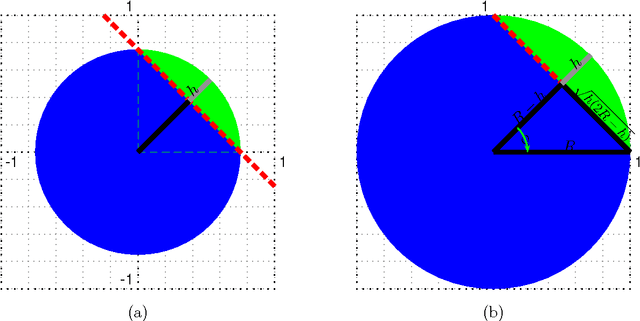

Abstract:Classifiers are often used to detect miscreant activities. We study how an adversary can systematically query a classifier to elicit information that allows the adversary to evade detection while incurring a near-minimal cost of modifying their intended malfeasance. We generalize the theory of Lowd and Meek (2005) to the family of convex-inducing classifiers that partition input space into two sets one of which is convex. We present query algorithms for this family that construct undetected instances of approximately minimal cost using only polynomially-many queries in the dimension of the space and in the level of approximation. Our results demonstrate that near-optimal evasion can be accomplished without reverse-engineering the classifier's decision boundary. We also consider general lp costs and show that near-optimal evasion on the family of convex-inducing classifiers is generally efficient for both positive and negative convexity for all levels of approximation if p=1.

Near-Optimal Evasion of Convex-Inducing Classifiers

Mar 14, 2010Abstract:Classifiers are often used to detect miscreant activities. We study how an adversary can efficiently query a classifier to elicit information that allows the adversary to evade detection at near-minimal cost. We generalize results of Lowd and Meek (2005) to convex-inducing classifiers. We present algorithms that construct undetected instances of near-minimal cost using only polynomially many queries in the dimension of the space and without reverse engineering the decision boundary.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge