Anna-Lisa Vollmer

Interprofessional and Agile Development of Mobirobot: A Socially Assistive Robot for Pediatric Therapy Across Clinical and Therapeutic Settings

Jan 14, 2026

Abstract:Introduction: Socially assistive robots hold promise for enhancing therapeutic engagement in paediatric clinical settings. However, their successful implementation requires not only technical robustness but also context-sensitive, co-designed solutions. This paper presents Mobirobot, a socially assistive robot developed to support mobilisation in children recovering from trauma, fractures, or depressive disorders through personalised exercise programmes. Methods: An agile, human-centred development approach guided the iterative design of Mobirobot. Multidisciplinary clinical teams and end users were involved throughout the co-development process, which focused on early integration into real-world paediatric surgical and psychiatric settings. The robot, based on the NAO platform, features a simple setup, adaptable exercise routines with interactive guidance, motivational dialogue, and a graphical user interface (GUI) for monitoring and no-code system feedback. Results: Deployment in hospital environments enabled the identification of key design requirements and usability constraints. Stakeholder feedback led to refinements in interaction design, movement capabilities, and technical configuration. A feasibility study is currently underway to assess acceptance, usability, and perceived therapeutic benefit, with data collection including questionnaires, behavioural observations, and staff-patient interviews. Discussion: Mobirobot demonstrates how multiprofessional, stakeholder-led development can yield a socially assistive system suited for dynamic inpatient settings. Early-stage findings underscore the importance of contextual integration, robustness, and minimal-intrusion design. While challenges such as sensor limitations and patient recruitment remain, the platform offers a promising foundation for further research and clinical application.

Investigating Co-Constructive Behavior of Large Language Models in Explanation Dialogues

Apr 25, 2025

Abstract:The ability to generate explanations that are understood by explainees is the quintessence of explainable artificial intelligence. Since understanding depends on the explainee's background and needs, recent research has focused on co-constructive explanation dialogues, where the explainer continuously monitors the explainee's understanding and adapts explanations dynamically. We investigate the ability of large language models (LLMs) to engage as explainers in co-constructive explanation dialogues. In particular, we present a user study in which explainees interact with LLMs, of which some have been instructed to explain a predefined topic co-constructively. We evaluate the explainees' understanding before and after the dialogue, as well as their perception of the LLMs' co-constructive behavior. Our results indicate that current LLMs show some co-constructive behaviors, such as asking verification questions, that foster the explainees' engagement and can improve understanding of a topic. However, their ability to effectively monitor the current understanding and scaffold the explanations accordingly remains limited.

Improving Human-Robot Teaching by Quantifying and Reducing Mental Model Mismatch

Jan 08, 2025

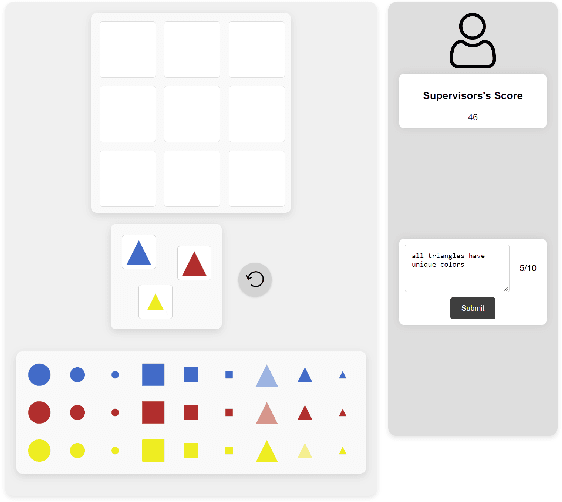

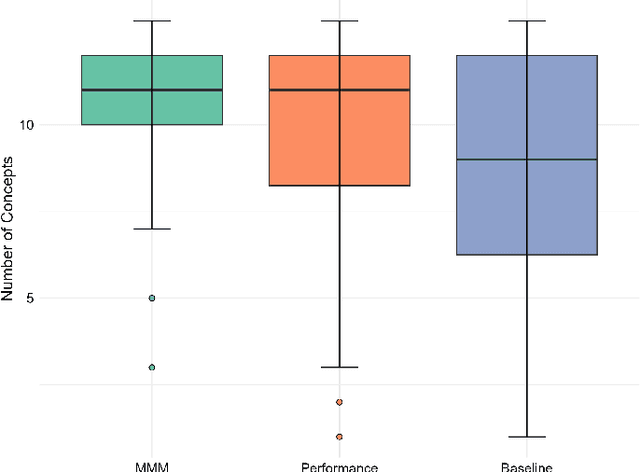

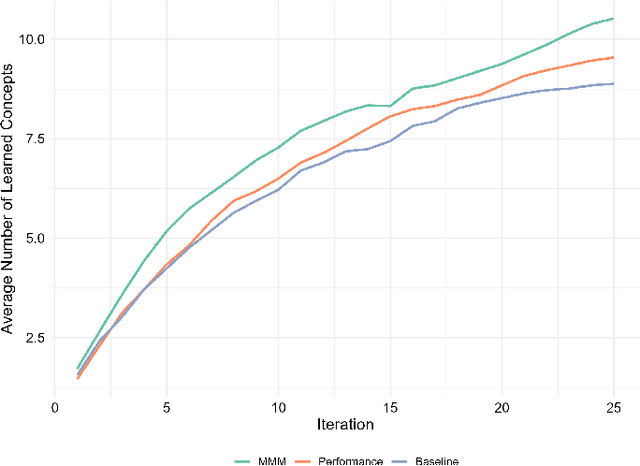

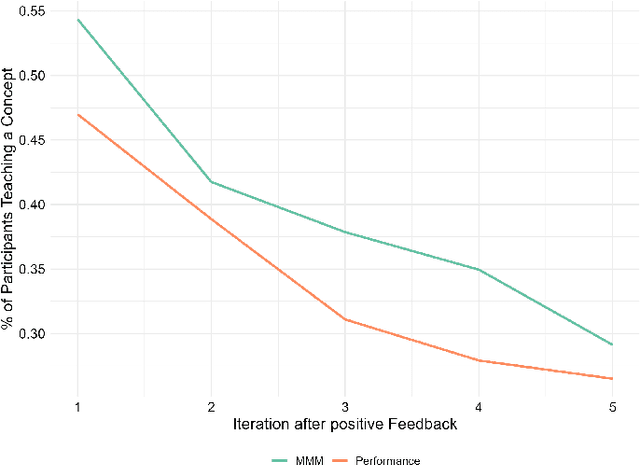

Abstract:The rapid development of artificial intelligence and robotics has had a significant impact on our lives, with intelligent systems increasingly performing tasks traditionally performed by humans. Efficient knowledge transfer requires matching the mental model of the human teacher with the capabilities of the robot learner. This paper introduces the Mental Model Mismatch (MMM) Score, a feedback mechanism designed to quantify and reduce mismatches by aligning human teaching behavior with robot learning behavior. Using Large Language Models (LLMs), we analyze teacher intentions in natural language to generate adaptive feedback. A study with 150 participants teaching a virtual robot to solve a puzzle game shows that intention-based feedback significantly outperforms traditional performance-based feedback or no feedback. The results suggest that intention-based feedback improves instructional outcomes, improves understanding of the robot's learning process and reduces misconceptions. This research addresses a critical gap in human-robot interaction (HRI) by providing a method to quantify and mitigate discrepancies between human mental models and robot capabilities, with the goal of improving robot learning and human teaching effectiveness.

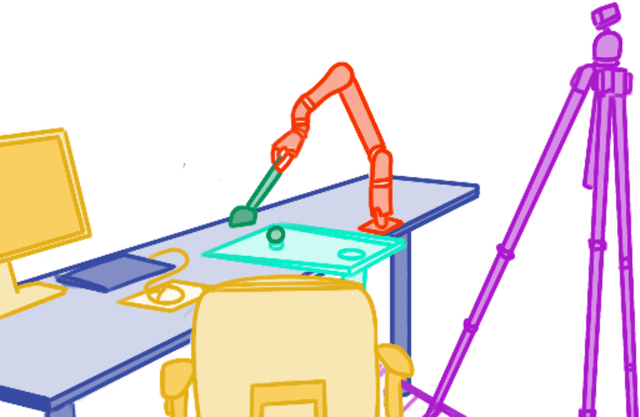

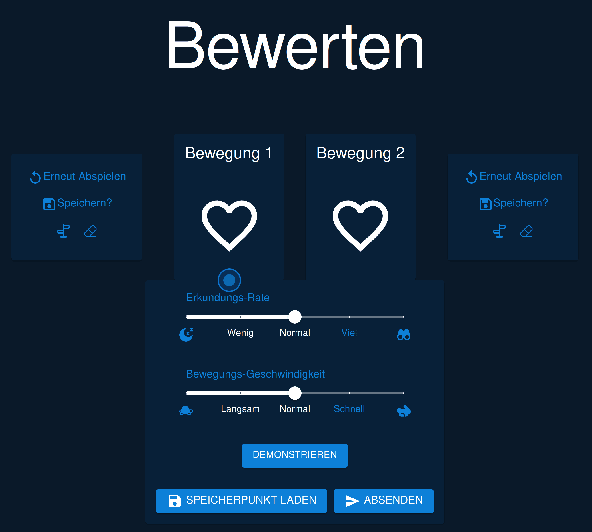

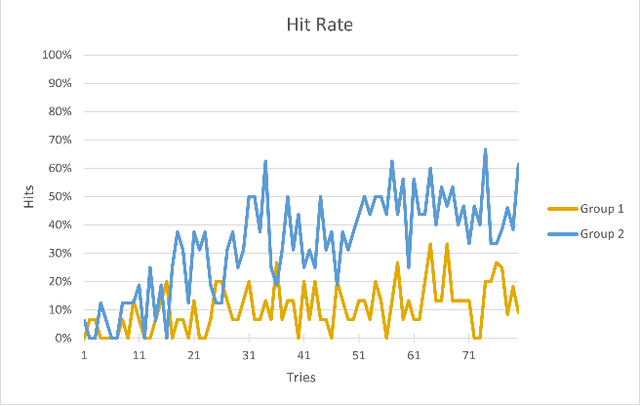

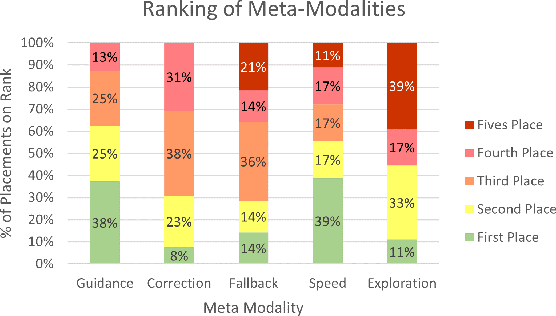

The Power of Combined Modalities in Interactive Robot Learning

May 13, 2024

Abstract:This study contributes to the evolving field of robot learning in interaction with humans, examining the impact of diverse input modalities on learning outcomes. It introduces the concept of "meta-modalities" which encapsulate additional forms of feedback beyond the traditional preference and scalar feedback mechanisms. Unlike prior research that focused on individual meta-modalities, this work evaluates their combined effect on learning outcomes. Through a study with human participants, we explore user preferences for these modalities and their impact on robot learning performance. Our findings reveal that while individual modalities are perceived differently, their combination significantly improves learning behavior and usability. This research not only provides valuable insights into the optimization of human-robot interactive task learning but also opens new avenues for enhancing the interactive freedom and scaffolding capabilities provided to users in such settings.

What you need to know about a learning robot: Identifying the enabling architecture of complex systems

Nov 24, 2023

Abstract:Nowadays, we are dealing more and more with robots and AI in everyday life. However, their behavior is not always apparent to most lay users, especially in error situations. As a result, there can be misconceptions about the behavior of the technologies in use. This, in turn, can lead to misuse and rejection by users. Explanation, for example, through transparency, can address these misconceptions. However, it would be confusing and overwhelming for users if the entire software or hardware was explained. Therefore, this paper looks at the 'enabling' architecture. It describes those aspects of a robotic system that might need to be explained to enable someone to use the technology effectively. Furthermore, this paper is concerned with the 'explanandum', which is the corresponding misunderstanding or missing concepts of the enabling architecture that needs to be clarified. We have thus developed and present an approach for determining this 'enabling' architecture and the resulting 'explanandum' of complex technologies.

Forms of Understanding of XAI-Explanations

Nov 15, 2023

Abstract:Explainability has become an important topic in computer science and artificial intelligence, leading to a subfield called Explainable Artificial Intelligence (XAI). The goal of providing or seeking explanations is to achieve (better) 'understanding' on the part of the explainee. However, what it means to 'understand' is still not clearly defined, and the concept itself is rarely the subject of scientific investigation. This conceptual article aims to present a model of forms of understanding in the context of XAI and beyond. From an interdisciplinary perspective bringing together computer science, linguistics, sociology, and psychology, a definition of understanding and its forms, assessment, and dynamics during the process of giving everyday explanations are explored. Two types of understanding are considered as possible outcomes of explanations, namely enabledness, 'knowing how' to do or decide something, and comprehension, 'knowing that' -- both in different degrees (from shallow to deep). Explanations regularly start with shallow understanding in a specific domain and can lead to deep comprehension and enabledness of the explanandum, which we see as a prerequisite for human users to gain agency. In this process, the increase of comprehension and enabledness are highly interdependent. Against the background of this systematization, special challenges of understanding in XAI are discussed.

From Interactive to Co-Constructive Task Learning

May 24, 2023

Abstract:Humans have developed the capability to teach relevant aspects of new or adapted tasks to a social peer with very few task demonstrations by making use of scaffolding strategies that leverage prior knowledge and, importantly, prior joint experience to yield a joint understanding and a joint execution of the required steps to solve the task. This process has been discovered and analyzed in parent-infant interaction and constitutes a "co-construction" as it allows both the teacher and the learner to jointly contribute to the task. We propose to focus research in robot interactive learning on this co-construction process to enable robots to learn from non-expert users in everyday situations. In the following, we will review current proposals for interactive task learning and discuss their main contributions with respect to the entailing interaction. We then discuss our notion of co-construction and summarize research insights from adult-child and human-robot interactions to elucidate its nature in more detail. From this overview we finally derive research desiderata that entail the dimensions architecture, representation, interaction and explainability.

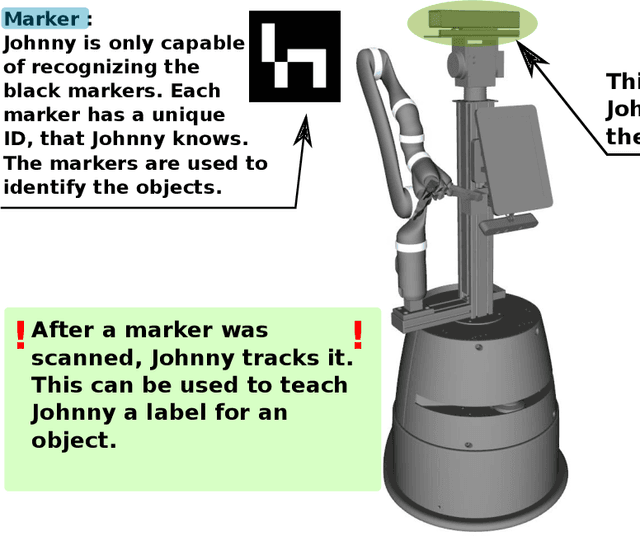

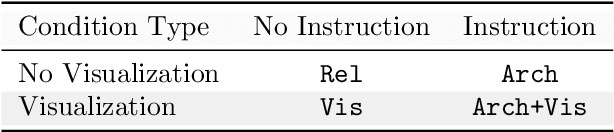

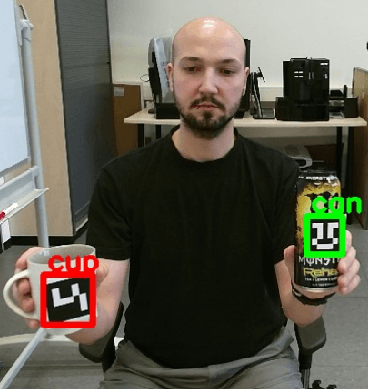

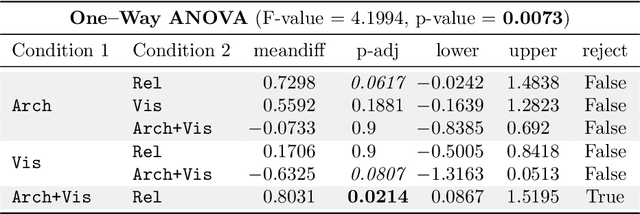

Improving HRI through robot architecture transparency

Aug 26, 2021

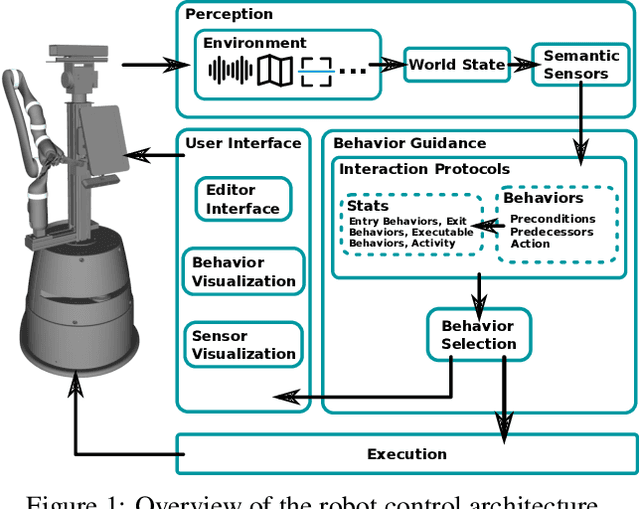

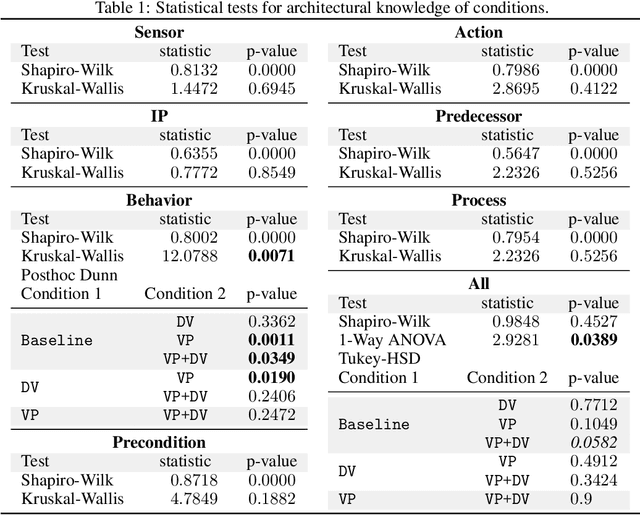

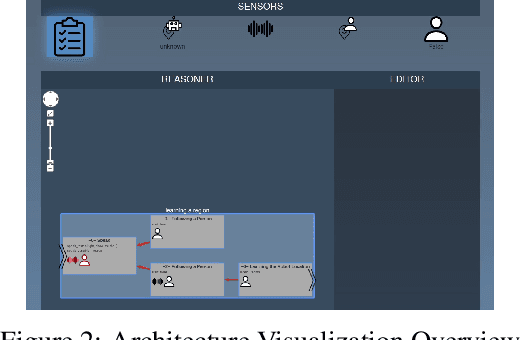

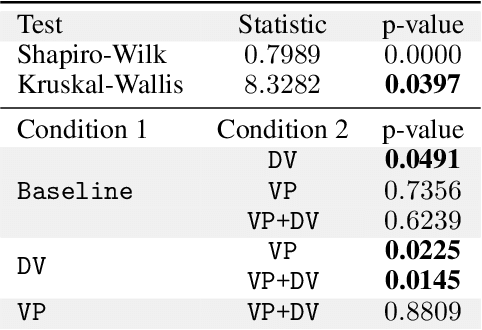

Abstract:In recent years, an increased effort has been invested to improve the capabilities of robots. Nevertheless, human-robot interaction remains a complex field of application where errors occur frequently. The reasons for these errors can primarily be divided into two classes. Foremost, the recent increase in capabilities also widened possible sources of errors on the robot's side. This entails problems in the perception of the world, but also faulty behavior, based on errors in the system. Apart from that, non-expert users frequently have incorrect assumptions about the functionality and limitations of a robotic system. This leads to incompatibilities between the user's behavior and the functioning of the robot's system, causing problems on the robot's side and in the human-robot interaction. While engineers constantly improve the reliability of robots, the user's understanding of robots and their limitations has to be addressed as well. In this work, we investigate ways to improve the understanding of robots. For this, we employ FAMILIAR - FunctionAl user Mental model by Increased LegIbility ARchitecture, a transparent robot architecture with regard to the robot behavior and decision-making process. We conducted an online simulation user study to evaluate two complementary approaches to convey and increase the knowledge about this architecture to non-expert users: a dynamic visualization of the system's processes as well as a visual programming interface. The results of this study reveal that visual programming improves knowledge about the architecture. Furthermore, we show that with increased knowledge about the control architecture of the robot, users were significantly better in reaching the interaction goal. Finally, we show that anthropomorphism may reduce interaction success.

Why robots should be technical: Correcting mental models through technical architecture concepts

Nov 05, 2020

Abstract:Research in social robotics is commonly focused on designing robots that imitate human behavior. While this might increase a user's satisfaction and acceptance of robots at first glance, it does not automatically aid a non-expert user in naturally interacting with robots, and might actually hurt their ability to correctly anticipate a robot's capabilities. We argue that a faulty mental model, that the user has of the robot, is one of the main sources of confusion. In this work we investigate how communicating technical concepts of robotic systems to users affects their mental models, and how this can increase the quality of human-robot interaction. We conducted an online study and investigated possible ways of improving users' mental models. Our results underline that communicating technical concepts can form an improved mental model. Consequently, we show the importance of consciously designing robots that express their capabilities and limitations.
