Alessio Lomuscio

IoUCert: Robustness Verification for Anchor-based Object Detectors

Mar 04, 2026

Abstract:While formal robustness verification has seen significant success in image classification, scaling these guarantees to object detection remains notoriously difficult due to complex non-linear coordinate transformations and Intersection-over-Union (IoU) metrics. We introduce IoUCert, a novel formal verification framework designed specifically to overcome these bottlenecks in foundational anchor-based object detection architectures. Focusing on the object localisation component in single-object settings, we propose a coordinate transformation that enables our algorithm to circumvent precision-degrading relaxations of non-linear box prediction functions. This allows us to optimise bounds directly with respect to the anchor box offsets which enables a novel Interval Bound Propagation method that derives optimal IoU bounds. We demonstrate that our method enables, for the first time, the robustness verification of realistic, anchor-based models including SSD, YOLOv2, and YOLOv3 variants against various input perturbations.
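
To make the idea of bounding IoU under uncertain box coordinates concrete, here is a minimal sketch. It is not the IoUCert algorithm: it assumes each predicted coordinate is only known to lie in an interval, and it derives naive bounds that are sound but generally loose, whereas the abstract describes optimal ones. The function names `overlap`, `iou`, and `naive_iou_bounds` are hypothetical.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) with x1 <= x2, y1 <= y2


def overlap(lo_a: float, hi_a: float, lo_b: float, hi_b: float) -> float:
    """Length of the overlap of two 1-D segments (zero if they are disjoint)."""
    return max(0.0, min(hi_a, hi_b) - max(lo_a, lo_b))


def iou(pred: Box, gt: Box) -> float:
    """Exact IoU of two axis-aligned boxes (gt must have positive area)."""
    inter = overlap(pred[0], pred[2], gt[0], gt[2]) * overlap(pred[1], pred[3], gt[1], gt[3])
    area_p = (pred[2] - pred[0]) * (pred[3] - pred[1])
    area_g = (gt[2] - gt[0]) * (gt[3] - gt[1])
    return inter / (area_p + area_g - inter)


def naive_iou_bounds(lower: Box, upper: Box, gt: Box) -> Tuple[float, float]:
    """Sound (but not tight) IoU bounds when each coordinate lies in [lower, upper]."""
    area_g = (gt[2] - gt[0]) * (gt[3] - gt[1])

    # Smallest possible box: x1, y1 as large as allowed and x2, y2 as small as allowed.
    inter_min = overlap(upper[0], lower[2], gt[0], gt[2]) * overlap(upper[1], lower[3], gt[1], gt[3])
    area_min = max(0.0, lower[2] - upper[0]) * max(0.0, lower[3] - upper[1])

    # Largest possible box: x1, y1 as small as allowed and x2, y2 as large as allowed.
    inter_max = overlap(lower[0], upper[2], gt[0], gt[2]) * overlap(lower[1], upper[3], gt[1], gt[3])
    area_max = (upper[2] - lower[0]) * (upper[3] - lower[1])

    # IoU = I / (A_pred + A_gt - I) increases with I and decreases with A_pred,
    # so the extreme values of I and A_pred can be combined independently.
    lower_bound = inter_min / (area_max + area_g - inter_min)
    denom = area_min + area_g - inter_max
    upper_bound = 1.0 if denom <= 0.0 else min(1.0, inter_max / denom)
    return lower_bound, upper_bound
```

Because the intersection and the predicted area are bounded independently here, the upper bound can collapse to the trivial value 1 even for modest intervals, which illustrates why tighter, coupled bounds matter.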

LTL Verification of Memoryful Neural Agents

Mar 04, 2025

Abstract:We present a framework for verifying Memoryful Neural Multi-Agent Systems (MN-MAS) against full Linear Temporal Logic (LTL) specifications. In MN-MAS, agents interact with a non-deterministic, partially observable environment. Examples of MN-MAS include multi-agent systems based on feed-forward and recurrent neural networks or state-space models. Different from previous approaches, we support the verification of both bounded and unbounded LTL specifications. We leverage well-established bounded model checking techniques, including lasso search and invariant synthesis, to reduce the verification problem to that of constraint solving. To solve these constraints, we develop efficient methods based on bound propagation, mixed-integer linear programming, and adaptive splitting. We evaluate the effectiveness of our algorithms in single and multi-agent environments from the Gymnasium and PettingZoo libraries, verifying unbounded specifications for the first time and improving the verification time for bounded specifications by an order of magnitude compared to the SoA.

A Scalable Approach to Probabilistic Neuro-Symbolic Verification

Feb 05, 2025

Abstract:Neuro-Symbolic Artificial Intelligence (NeSy AI) has emerged as a promising direction for integrating neural learning with symbolic reasoning. In the probabilistic variant of such systems, a neural network first extracts a set of symbols from sub-symbolic input, which are then used by a symbolic component to reason in a probabilistic manner towards answering a query. In this work, we address the problem of formally verifying the robustness of such NeSy probabilistic reasoning systems, therefore paving the way for their safe deployment in critical domains. We analyze the complexity of solving this problem exactly, and show that it is $\mathrm{NP}^{\# \mathrm{P}}$-hard. To overcome this issue, we propose the first approach for approximate, relaxation-based verification of probabilistic NeSy systems. We demonstrate experimentally that the proposed method scales exponentially better than solver-based solutions and apply our technique to a real-world autonomous driving dataset, where we verify a safety property under large input dimensionalities and network sizes.

Verification of Neural Networks against Convolutional Perturbations via Parameterised Kernels

Nov 07, 2024

Abstract:We develop a method for the efficient verification of neural networks against convolutional perturbations such as blurring or sharpening. To define input perturbations we use well-known camera shake, box blur and sharpen kernels. We demonstrate that these kernels can be linearly parameterised in a way that allows for a variation of the perturbation strength while preserving desired kernel properties. To facilitate their use in neural network verification, we develop an efficient way of convolving a given input with these parameterised kernels. The result of this convolution can be used to encode the perturbation in a verification setting by prepending a linear layer to a given network. This leads to tight bounds and a high effectiveness in the resulting verification step. We add further precision by employing input splitting as a branch and bound strategy. We demonstrate that we are able to verify robustness on a number of standard benchmarks where the baseline is unable to provide any safety certificates. To the best of our knowledge, this is the first solution for verifying robustness against specific convolutional perturbations such as camera shake.
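
As an illustration of what a linearly parameterised kernel can look like, the sketch below interpolates between the identity kernel and a box blur with a single strength parameter. The specific parameterisation, and the names `box_blur_kernel`, `convolve`, and `blur_as_affine_map`, are assumptions made for this example; the paper's own construction may differ. The kernel always sums to 1, and the convolved output is affine in the strength, which is what allows the perturbation to be expressed as a linear layer in front of a network.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def box_blur_kernel(strength: float, size: int = 3) -> Matrix:
    """Kernel (1 - strength) * identity + strength * box blur; entries sum to 1."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must lie in [0, 1]")
    if size % 2 == 0:
        raise ValueError("size must be odd")
    uniform = 1.0 / (size * size)
    kernel = [[strength * uniform for _ in range(size)] for _ in range(size)]
    kernel[size // 2][size // 2] += 1.0 - strength
    return kernel


def convolve(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2-D cross-correlation (identical to convolution for symmetric kernels)."""
    k = len(kernel)
    rows, cols = len(image) - k + 1, len(image[0]) - k + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j] for i in range(k) for j in range(k))
            for c in range(cols)
        ]
        for r in range(rows)
    ]


def blur_as_affine_map(image: Matrix, size: int = 3) -> Tuple[Matrix, Matrix]:
    """Return (offset, slope) such that the blurred image equals offset + strength * slope."""
    offset = convolve(image, box_blur_kernel(0.0, size))    # unperturbed output
    endpoint = convolve(image, box_blur_kernel(1.0, size))  # fully blurred output
    slope = [[e - o for e, o in zip(e_row, o_row)] for e_row, o_row in zip(endpoint, offset)]
    return offset, slope
```

With `offset` and `slope` precomputed for a given input, a verifier only has to reason about the scalar strength ranging over an interval, rather than about every pixel independently.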

Verification of Geometric Robustness of Neural Networks via Piecewise Linear Approximation and Lipschitz Optimisation

Aug 23, 2024

Abstract:We address the problem of verifying neural networks against geometric transformations of the input image, including rotation, scaling, shearing, and translation. The proposed method computes provably sound piecewise linear constraints for the pixel values by using sampling and linear approximations in combination with branch-and-bound Lipschitz optimisation. A feature of the method is that it obtains tighter over-approximations of the perturbation region than the present state-of-the-art. We report results from experiments on a comprehensive set of benchmarks. We show that our proposed implementation resolves more verification cases than present approaches while being more computationally efficient.

Tightening the Evaluation of PAC Bounds Using Formal Verification Results

Jul 29, 2024

Abstract:Probably Approximately Correct (PAC) bounds are widely used to derive probabilistic guarantees for the generalisation of machine learning models. They highlight the components of the model which contribute to its generalisation capacity. However, current state-of-the-art results are loose in approximating the generalisation capacity of deployed machine learning models. Consequently, while PAC bounds are theoretically useful, their applicability for evaluating a model's generalisation property in a given operational design domain is limited. The underlying classical theory is supported by the idea that bounds can be tightened when the number of test points available to the user to evaluate the model increases. Yet, in the case of neural networks, the number of test points required to obtain bounds of interest is often impractical even for small problems. In this paper, we take the novel approach of using the formal verification of neural systems to inform the evaluation of PAC bounds. Rather than using pointwise information obtained from repeated tests, we use verification results on regions around test points. We show that conditioning existing bounds on verification results leads to a tightening proportional to the underlying probability mass of the verified region.

Tight Verification of Probabilistic Robustness in Bayesian Neural Networks

Jan 21, 2024

Abstract:We introduce two algorithms for computing tight guarantees on the probabilistic robustness of Bayesian Neural Networks (BNNs). Computing robustness guarantees for BNNs is a significantly more challenging task than verifying the robustness of standard Neural Networks (NNs) because it requires searching the parameters' space for safe weights. Moreover, tight and complete approaches for the verification of standard NNs, such as those based on Mixed-Integer Linear Programming (MILP), cannot be directly used for the verification of BNNs because of the polynomial terms resulting from the consecutive multiplication of variables encoding the weights. Our algorithms efficiently and effectively search the parameters' space for safe weights by using iterative expansion and the network's gradient and can be used with any verification algorithm of choice for BNNs. In addition to proving that our algorithms compute tighter bounds than the SoA, we also evaluate our algorithms against the SoA on standard benchmarks, such as MNIST and CIFAR10, showing that our algorithms compute bounds up to 40% tighter than the SoA.

Expressive Losses for Verified Robustness via Convex Combinations

May 23, 2023

Abstract:In order to train networks for verified adversarial robustness, previous work typically over-approximates the worst-case loss over (subsets of) perturbation regions or induces verifiability on top of adversarial training. The key to state-of-the-art performance lies in the expressivity of the employed loss function, which should be able to match the tightness of the verifiers to be employed post-training. We formalize a definition of expressivity, and show that it can be satisfied via simple convex combinations between adversarial attacks and IBP bounds. We then show that the resulting algorithms, named CC-IBP and MTL-IBP, yield state-of-the-art results across a variety of settings in spite of their conceptual simplicity. In particular, for $\ell_\infty$ perturbations of radius $\frac{1}{255}$ on TinyImageNet and downscaled ImageNet, MTL-IBP improves on the best standard and verified accuracies from the literature by between $1.98$ and $3.92$ percentage points while only relying on single-step adversarial attacks.
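
The phrase "convex combinations between adversarial attacks and IBP bounds" admits at least two simple readings, sketched below: applying the loss to a convex combination of the two logit vectors, or taking a convex combination of the two losses. This is only an illustration under assumptions. The abstract does not say which variant corresponds to CC-IBP or MTL-IBP, and the inputs `adv_logits` (logits at an adversarial example) and `ibp_logits` (worst-case logits derived from IBP bounds), like all function names here, are hypothetical.

```python
import math
from typing import Sequence


def cross_entropy(logits: Sequence[float], label: int) -> float:
    """Numerically stable cross-entropy of a single logit vector."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum_exp - logits[label]


def loss_of_combination(
    adv_logits: Sequence[float], ibp_logits: Sequence[float], label: int, alpha: float
) -> float:
    """Loss evaluated on a convex combination of the two logit vectors."""
    mixed = [(1.0 - alpha) * a + alpha * b for a, b in zip(adv_logits, ibp_logits)]
    return cross_entropy(mixed, label)


def combination_of_losses(
    adv_logits: Sequence[float], ibp_logits: Sequence[float], label: int, alpha: float
) -> float:
    """Convex combination of the adversarial loss and the IBP loss."""
    return (1.0 - alpha) * cross_entropy(adv_logits, label) + alpha * cross_entropy(
        ibp_logits, label
    )
```

In both variants `alpha = 0` recovers the purely adversarial loss and `alpha = 1` the purely IBP-based loss, so a single coefficient sweeps between the two regimes. Since cross-entropy is convex in the logits, the first variant never exceeds the second for the same `alpha`.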

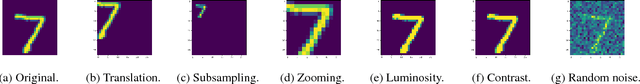

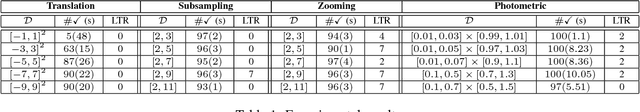

Formal Verification of CNN-based Perception Systems

Nov 28, 2018

Abstract:We address the problem of verifying neural-based perception systems implemented by convolutional neural networks. We define a notion of local robustness based on affine and photometric transformations. We show that this notion cannot be captured by previously employed notions of robustness. The method proposed is based on reachability analysis for feed-forward neural networks and relies on MILP encodings of both the CNNs and the transformations in question. We present an implementation and discuss the experimental results obtained for a CNN trained on the MNIST data set.

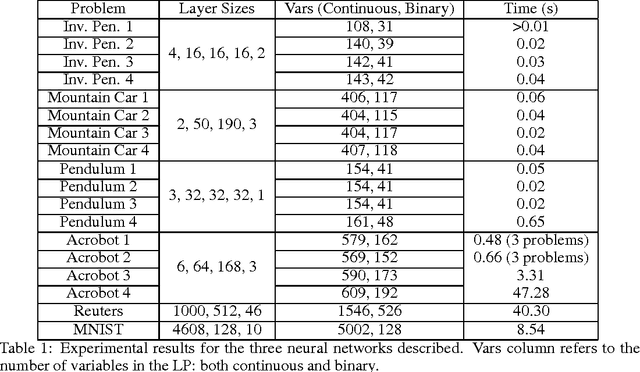

An approach to reachability analysis for feed-forward ReLU neural networks

Jun 22, 2017

Abstract:We study the reachability problem for systems implemented as feed-forward neural networks whose activation function is implemented via ReLU functions. We draw a correspondence between establishing whether some arbitrary output can ever be produced by a neural system and linear problems characterising a neural system of interest. We present a methodology to solve cases of practical interest by means of a state-of-the-art linear programming solver. We evaluate the technique presented by discussing the experimental results obtained by analysing reachability properties for a number of benchmarks in the literature.
