Aditi Misra

FragmentNet: Adaptive Graph Fragmentation for Graph-to-Sequence Molecular Representation Learning

Feb 03, 2025

Abstract: Molecular property prediction uses molecular structure to infer chemical properties. Chemically interpretable representations that capture meaningful intramolecular interactions enhance the usability and effectiveness of these predictions. However, existing methods often rely on atom-based or rule-based fragment tokenization, which can be chemically suboptimal and lack scalability. We introduce FragmentNet, a graph-to-sequence foundation model with an adaptive, learned tokenizer that decomposes molecular graphs into chemically valid fragments while preserving structural connectivity. FragmentNet integrates a VQVAE-GCN for hierarchical fragment embeddings, spatial positional encodings for graph serialization, global molecular descriptors, and a transformer. Pre-trained with Masked Fragment Modeling and fine-tuned on MoleculeNet tasks, FragmentNet outperforms models with similarly scaled architectures and datasets while rivaling larger state-of-the-art models that require significantly more resources. This novel framework enables adaptive decomposition, serialization, and reconstruction of molecular graphs, facilitating fragment-based editing and visualization of property trends in learned embeddings, which makes it a powerful tool for molecular design and optimization.

The Adversarial Implications of Variable-Time Inference

Sep 05, 2023

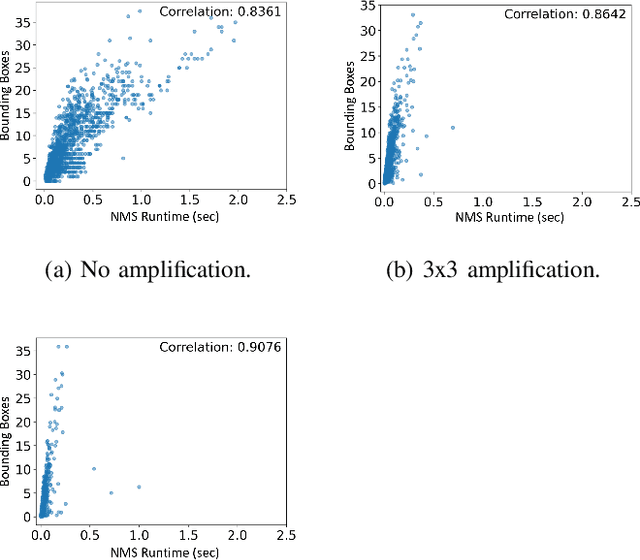

Abstract: Machine learning (ML) models are known to be vulnerable to a number of attacks that target the integrity of their predictions or the privacy of their training data. To carry out these attacks, a black-box adversary must typically possess the ability to query the model and observe its outputs (e.g., labels). In this work, we demonstrate, for the first time, the ability to enhance such decision-based attacks. To accomplish this, we present an approach that exploits a novel side channel in which the adversary simply measures the execution time of the algorithm used to post-process the predictions of the ML model under attack. The leakage of inference-state elements into algorithmic timing side channels has never been studied before, and we have found that it can contain rich information that facilitates superior timing attacks that significantly outperform attacks based solely on label outputs. In a case study, we investigate leakage from the non-maximum suppression (NMS) algorithm, which plays a crucial role in the operation of object detectors. In our examination of the timing side-channel vulnerabilities associated with this algorithm, we identified the potential to enhance decision-based attacks. We demonstrate attacks against the YOLOv3 detector, leveraging the timing leakage to successfully evade object detection using adversarial examples, and perform dataset inference. Our experiments show that our adversarial examples exhibit superior perturbation quality compared to a decision-based attack. In addition, we present a new threat model in which dataset inference based solely on timing leakage is performed. To address the timing leakage vulnerability inherent in the NMS algorithm, we explore the potential and limitations of implementing constant-time inference passes as a mitigation strategy.
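
To make the source of the leakage concrete, the following is a minimal sketch of a textbook greedy NMS loop, not the YOLOv3 implementation or the authors' attack code. The function names, the thresholds, and the idea of counting IoU comparisons as a stand-in for execution time are all assumptions made for illustration. It shows that the amount of post-processing work depends on how many candidate boxes the model emits above the score threshold, which is the kind of inference-state information a timing measurement could expose.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), hypothetical layout


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(
    boxes: List[Box],
    scores: List[float],
    score_thresh: float = 0.5,  # assumed value, not taken from the paper
    iou_thresh: float = 0.5,    # assumed value, not taken from the paper
) -> Tuple[List[int], int]:
    """Greedy NMS. Returns (kept indices, number of IoU comparisons).

    The comparison count is a deterministic proxy for running time:
    it grows with the number of boxes that pass the score threshold.
    """
    # Keep only confident candidates, highest score first.
    candidates = sorted(
        (i for i, s in enumerate(scores) if s >= score_thresh),
        key=lambda i: scores[i],
        reverse=True,
    )
    kept: List[int] = []
    comparisons = 0
    while candidates:
        best = candidates.pop(0)
        kept.append(best)
        survivors = []
        for i in candidates:
            comparisons += 1
            # Drop boxes that overlap the selected box too strongly.
            if iou(boxes[best], boxes[i]) < iou_thresh:
                survivors.append(i)
        candidates = survivors
    return kept, comparisons
```

With three boxes of which two overlap heavily, confident scores lead to two IoU comparisons, while lowering two of the scores below the threshold leads to none. The input boxes are identical in both cases; only the model's confidence changes the work done, so an observer who can only time the post-processing step still learns something about the detector's internal state.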

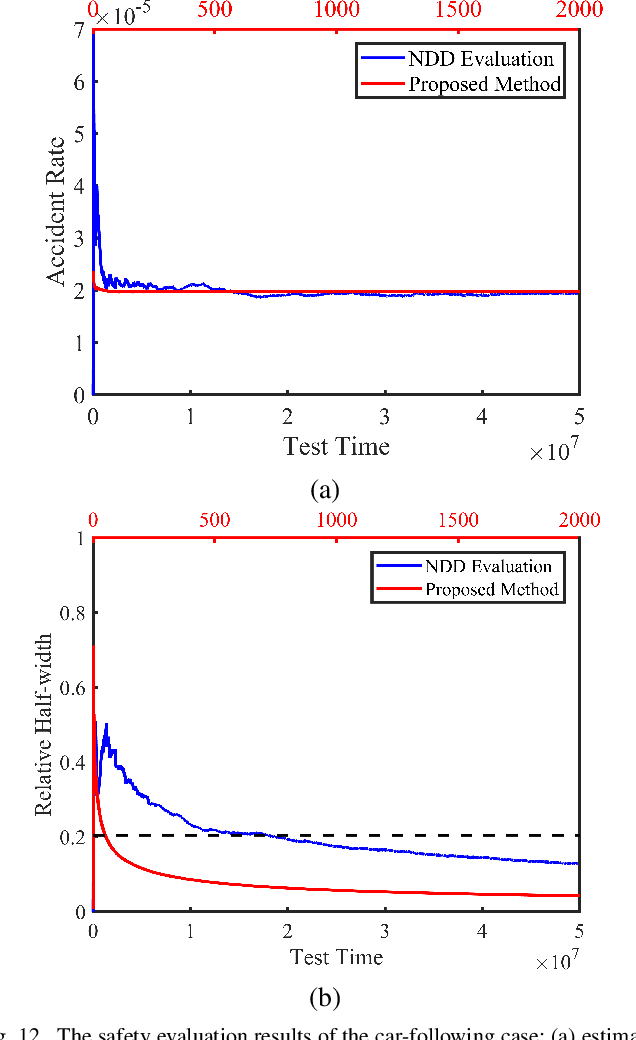

Testing Scenario Library Generation for Connected and Automated Vehicles, Part II: Case Studies

May 09, 2019

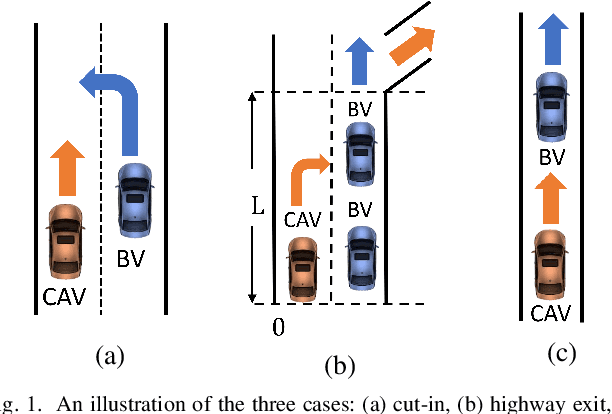

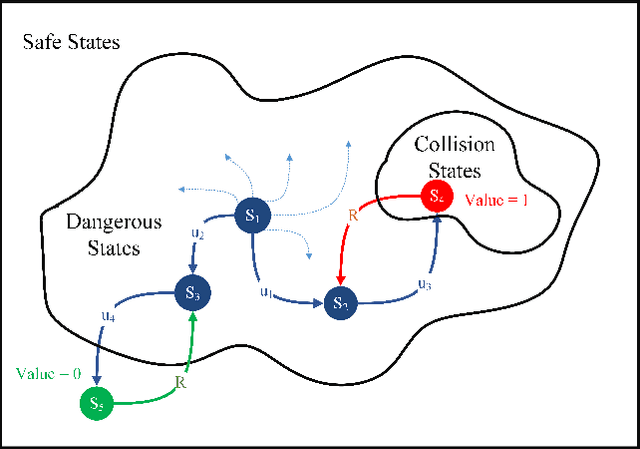

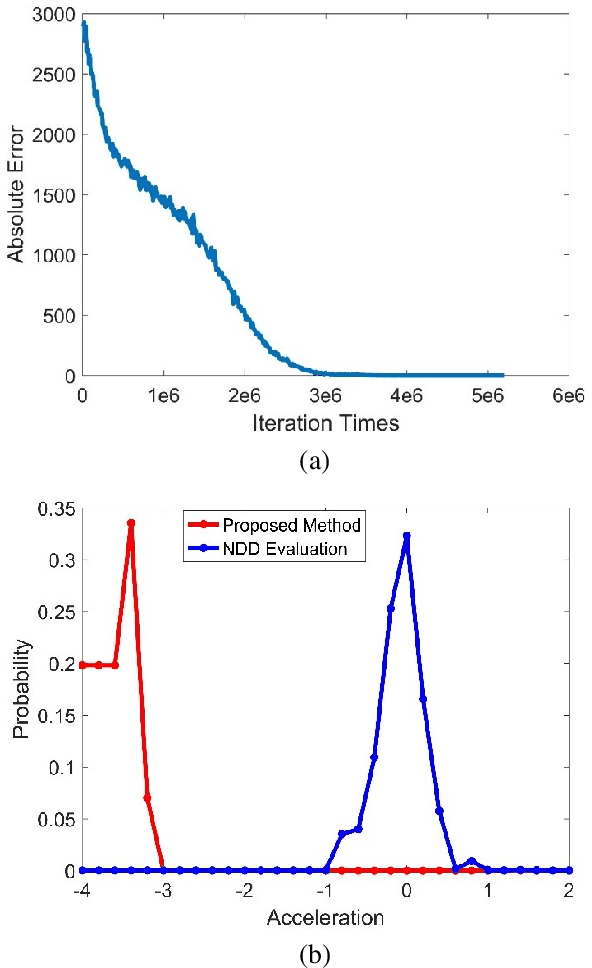

Abstract: Testing and evaluation is a critical step in the development and deployment of connected and automated vehicles (CAVs), and yet there is no systematic framework to generate a testing scenario library. In Part I of the paper, a general framework is proposed to solve the testing scenario library generation (TSLG) problem with four associated research questions. The methodologies for solving each research question have been proposed and analyzed theoretically. In Part II of the paper, three case studies are designed and implemented to demonstrate the proposed methodologies. First, a cut-in case is designed for safety evaluation and to provide answers to three particular questions in the framework, i.e., auxiliary objective function design, naturalistic driving data (NDD) analysis, and surrogate model (SM) construction. Second, a highway exit case is designed for functionality evaluation. Third, a car-following case is designed to show the ability of the proposed methods to handle high-dimensional scenarios. To address the challenges brought by higher dimensions, the proposed methods are enhanced by reinforcement learning (RL) techniques. Typical CAV models are chosen and evaluated by simulations. Results show that the proposed methods can accelerate the CAV evaluation process by $255$ to $3.75\times10^5$ times compared with the public road test method, with the same accuracy of indices.
