Shao Bao

Testing Scenario Library Generation for Connected and Automated Vehicles, Part II: Case Studies

May 09, 2019

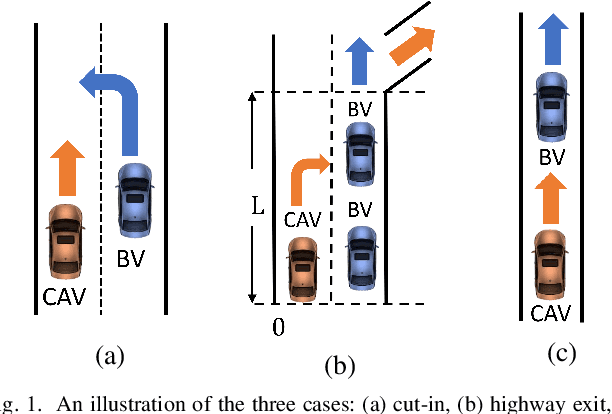

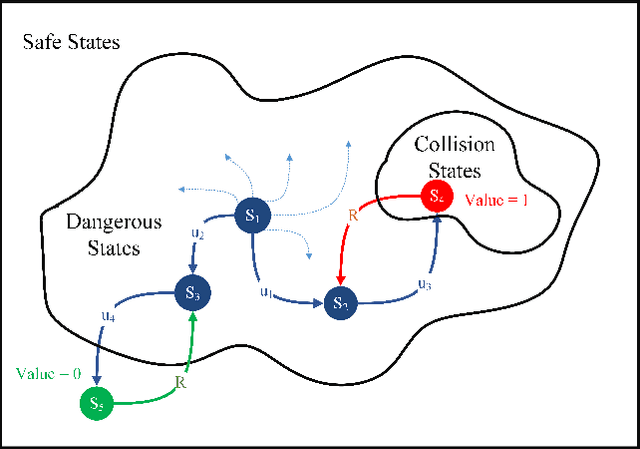

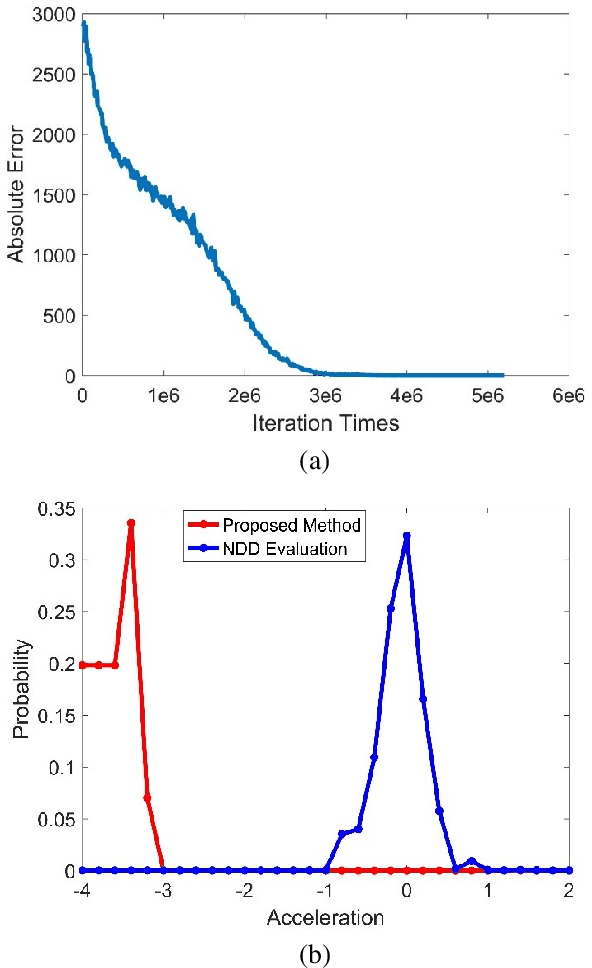

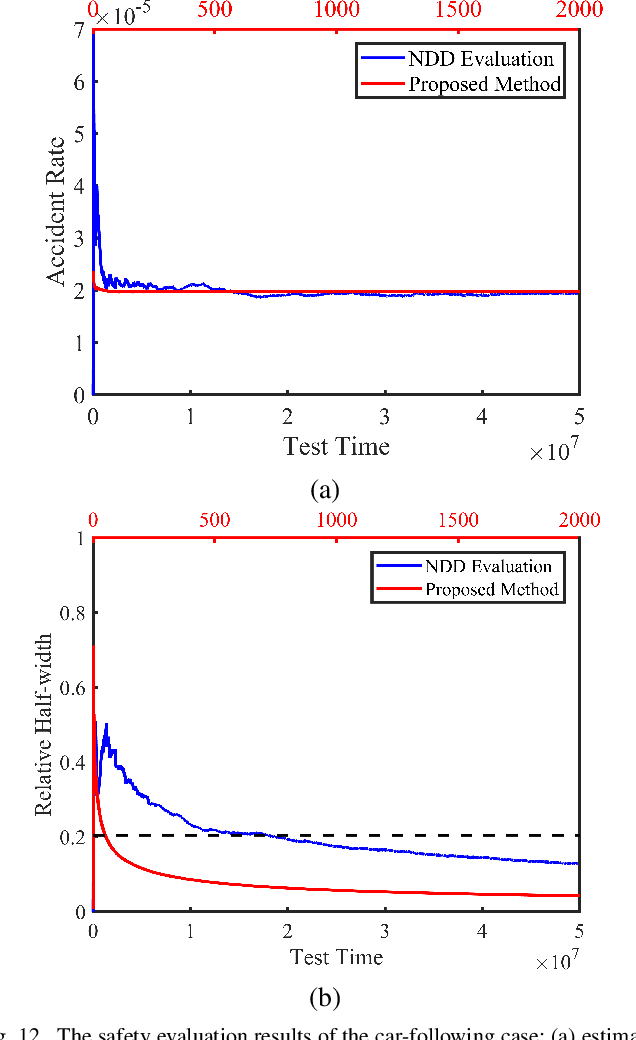

Abstract: Testing and evaluation is a critical step in the development and deployment of connected and automated vehicles (CAVs), yet there is no systematic framework for generating a testing scenario library. In Part I of the paper, a general framework is proposed to solve the testing scenario library generation (TSLG) problem with four associated research questions, and the methodologies for solving each research question are proposed and analyzed theoretically. In Part II of the paper, three case studies are designed and implemented to demonstrate the proposed methodologies. First, a cut-in case is designed for safety evaluation and to provide answers to three particular questions in the framework, i.e., auxiliary objective function design, naturalistic driving data (NDD) analysis, and surrogate model (SM) construction. Second, a highway exit case is designed for functionality evaluation. Third, a car-following case is designed to show the ability of the proposed methods to handle high-dimensional scenarios. To address the challenges brought by higher dimensions, the proposed methods are enhanced by reinforcement learning (RL) techniques. Typical CAV models are chosen and evaluated by simulations. Results show that the proposed methods can accelerate the CAV evaluation process by $255$ to $3.75\times10^5$ times compared with the public road test method, with the same accuracy of indices.
