Zhaonan Sun

Advanced Tool for Traffic Crash Analysis: An AI-Driven Multi-Agent Approach to Pre-Crash Reconstruction

Nov 13, 2025

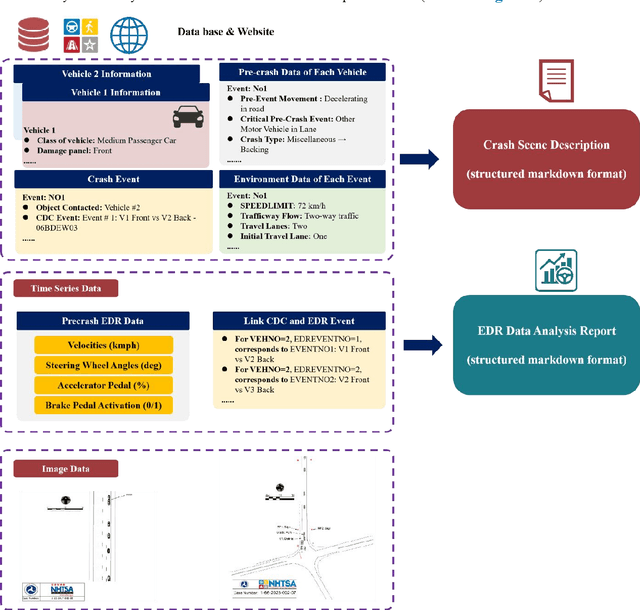

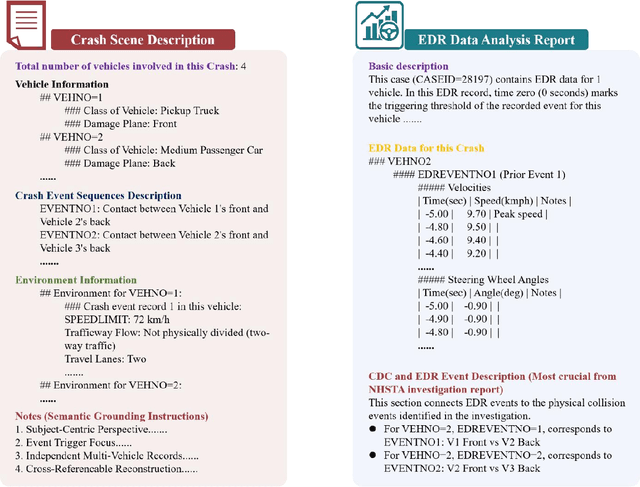

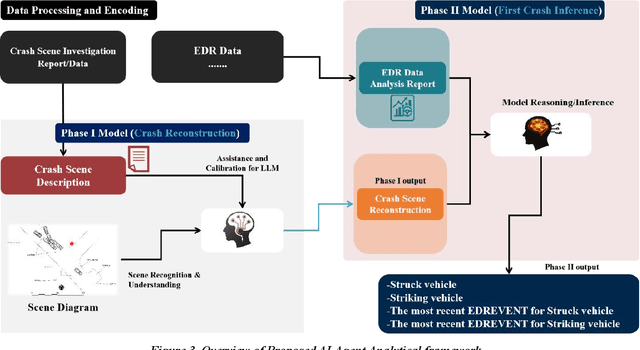

Abstract: Traffic collision reconstruction traditionally relies on human expertise, often yielding inconsistent results when analyzing incomplete multimodal data. This study develops a multi-agent AI framework that reconstructs pre-crash scenarios and infers vehicle behaviors from fragmented collision data. The framework operates in two collaborative phases: reconstruction and reasoning. The system processes 277 rear-end lead vehicle deceleration (LVD) collisions from the Crash Investigation Sampling System, integrating textual crash reports, structured tabular data, and visual scene diagrams. Phase I generates natural-language crash reconstructions from multimodal inputs. Phase II performs in-depth crash reasoning by combining these reconstructions with temporal Event Data Recorder (EDR) data. For validation, we applied the framework to all LVD cases, focusing on a subset of 39 complex crashes where multiple EDR records per collision introduced ambiguity (e.g., due to missing or conflicting data). On these 39 LVD crash cases, the framework achieved perfect accuracy, correctly identifying the most relevant EDR event and distinguishing striking from struck vehicles in every case, surpassing the 92% accuracy achieved by human researchers on the same challenging dataset. The system maintained robust performance even when processing incomplete data, including missing or erroneous EDR records and ambiguous scene diagrams. This study demonstrates superior AI capabilities in processing heterogeneous collision data, providing unprecedented precision in reconstructing impact dynamics and characterizing pre-crash behaviors.

DPVis: Visual Exploration of Disease Progression Pathways

Apr 26, 2019

Abstract: Clinical researchers use disease progression modeling algorithms to predict future patient status and characterize progression patterns. One approach to disease progression modeling is to describe patient status using a small number of states that represent distinctive distributions over a set of observed measures. Hidden Markov models (HMMs) and their variants are a class of models that both discover these states and make predictions concerning future states for new patients. HMMs can be trained using longitudinal observations of subjects from large-scale cohort studies, clinical trials, and electronic health records. Despite the advantages of these algorithms for discovering interesting patterns, it remains challenging for medical experts to interpret model outputs and complex modeling parameters and to make clinical sense of the patterns. To tackle this problem, we conducted a design study with physician scientists, statisticians, and visualization experts, with the goal of investigating disease progression pathways of certain chronic diseases, namely type 1 diabetes (T1D), Huntington's disease, Parkinson's disease, and chronic obstructive pulmonary disease (COPD). As a result, we introduce DPVis, which seamlessly integrates model parameters and outcomes of HMMs into interpretable and interactive visualizations. In this study, we demonstrate that DPVis is successful in evaluating disease progression models, visually summarizing disease states, interactively exploring disease progression patterns, and designing and comparing clinically relevant subgroup cohorts, through a case study on observation data from clinical studies of T1D.
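The HMM mechanics this abstract relies on (inferring a patient's current disease state from longitudinal observations, then projecting it forward) can be illustrated with the standard forward algorithm. This is a minimal sketch, not DPVis itself: the three states, the discretized observation codes, and every probability in `PI`, `A`, and `B` are hypothetical values chosen for illustration, and real progression models typically use continuous or multivariate emissions.

```python
import numpy as np

# Hypothetical 3-state progression HMM with discrete observation codes.
# All numbers are illustrative assumptions, not parameters from DPVis.
PI = np.array([0.8, 0.15, 0.05])            # initial state distribution
A = np.array([[0.85, 0.12, 0.03],           # A[i, j] = P(state j at t+1 | state i at t)
              [0.00, 0.80, 0.20],
              [0.00, 0.00, 1.00]])          # last state is absorbing
B = np.array([[0.70, 0.25, 0.05],           # B[i, k] = P(observation k | state i)
              [0.20, 0.60, 0.20],
              [0.05, 0.25, 0.70]])


def filter_states(obs, pi=PI, a=A, b=B):
    """Forward algorithm: P(state at last visit | all observations so far)."""
    alpha = pi * b[:, obs[0]]
    alpha /= alpha.sum()                    # normalize each step to avoid underflow
    for o in obs[1:]:
        alpha = (alpha @ a) * b[:, o]       # predict one step, then weight by emission
        alpha /= alpha.sum()
    return alpha


def predict_future(belief, steps, a=A):
    """Propagate the current state belief `steps` transitions into the future."""
    return belief @ np.linalg.matrix_power(a, steps)


patient_obs = [0, 1, 1, 2]                  # hypothetical coded measurements per visit
current = filter_states(patient_obs)
future = predict_future(current, steps=2)
print(current.round(3), future.round(3))
```

Normalizing `alpha` at every visit keeps the recursion numerically stable for long records; the unnormalized version would also yield the sequence likelihood, which this sketch does not need.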

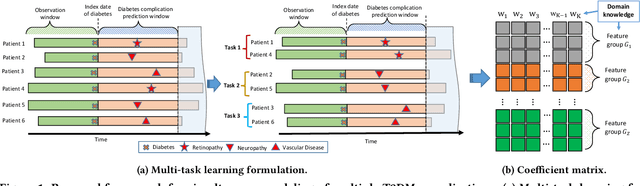

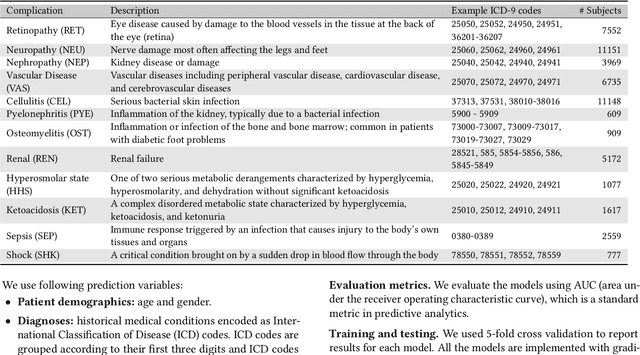

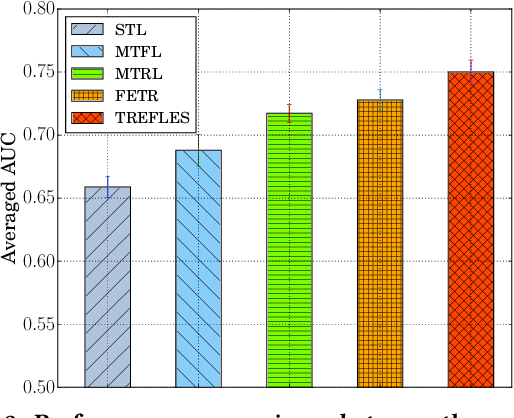

Simultaneous Modeling of Multiple Complications for Risk Profiling in Diabetes Care

Feb 19, 2018

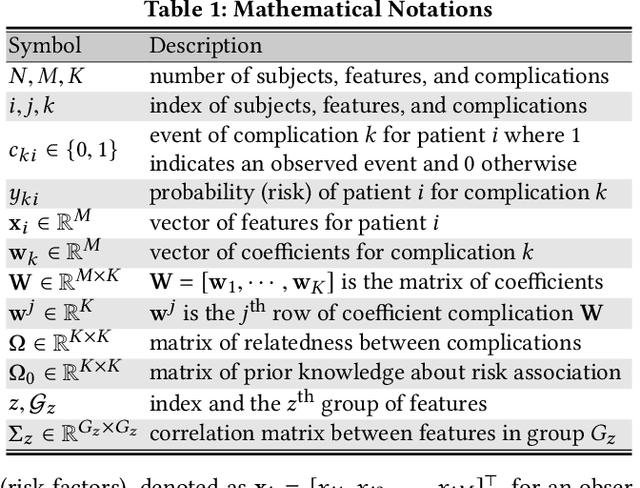

Abstract: Type 2 diabetes mellitus (T2DM) is a chronic disease that often results in multiple complications. Risk prediction and profiling of T2DM complications are critical for healthcare professionals to design personalized treatment plans that improve outcomes for patients in diabetes care. In this paper, we study the risk of developing complications after the initial T2DM diagnosis from longitudinal patient records. We propose a novel multi-task learning approach to simultaneously model multiple complications, where each task corresponds to the risk modeling of one complication. Specifically, the proposed method strategically captures the relationships (1) between the risks of multiple T2DM complications, (2) between the different risk factors, and (3) between the risk factor selection patterns. The method uses coefficient shrinkage to identify an informative subset of risk factors from high-dimensional data, and uses a hierarchical Bayesian framework to allow domain knowledge to be incorporated as priors. The proposed method is favorable for healthcare applications because, in addition to improving prediction performance, it identifies relationships among the different risks and risk factors. Extensive experimental results on a large electronic medical claims database show that the proposed method outperforms state-of-the-art models by a significant margin. Furthermore, we show that the learned risk associations and the identified risk factors lead to meaningful clinical insights.
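As a rough illustration of how coefficient shrinkage can share risk-factor selection across complications, the sketch below fits one logistic risk model per complication with a row-wise L2,1 (multi-task group lasso) penalty, solved by proximal gradient descent. This is a swapped-in, simpler technique, not the paper's hierarchical Bayesian method: it does not encode task or factor relationships through priors, it omits an intercept, and the synthetic data, `lam`, learning rate, and iteration count are all hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def multitask_group_lasso(X, Y, lam=0.05, lr=0.5, iters=2000):
    """Jointly fit one logistic risk model per complication with an L2,1 penalty.

    X: (n, d) risk factors shared by all tasks.
    Y: (n, T) binary outcomes, one column per complication (task).
    Row j of the returned W holds factor j's coefficients for every task.
    No intercept is fitted (a simplifying assumption for this sketch).
    """
    n, d = X.shape
    W = np.zeros((d, Y.shape[1]))
    for _ in range(iters):
        grad = X.T @ (sigmoid(X @ W) - Y) / n       # gradient of mean logistic loss
        V = W - lr * grad                            # plain gradient step
        norms = np.linalg.norm(V, axis=1, keepdims=True)
        # Proximal step for lam * sum_j ||W_j||_2: shrink each row toward zero,
        # setting it exactly to zero when its norm falls below lr * lam.
        W = np.maximum(0.0, 1.0 - lr * lam / np.maximum(norms, 1e-12)) * V
    return W


# Hypothetical synthetic data: 10 risk factors, 3 complications,
# and only the first 3 factors actually affect any complication.
rng = np.random.default_rng(0)
n, d, T = 500, 10, 3
X = rng.standard_normal((n, d))
W_true = np.zeros((d, T))
W_true[:3] = rng.uniform(1.0, 2.0, size=(3, T))
Y = (rng.random((n, T)) < sigmoid(X @ W_true)).astype(float)

W_hat = multitask_group_lasso(X, Y)
row_norms = np.linalg.norm(W_hat, axis=1)           # one norm per risk factor
print(row_norms.round(2))
```

The row norms for the seven irrelevant factors should come out at or near zero while the first three stay large. Because the penalty acts on whole rows of `W`, each factor is kept or dropped jointly across all complications, which is one simple way to express a shared selection pattern.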

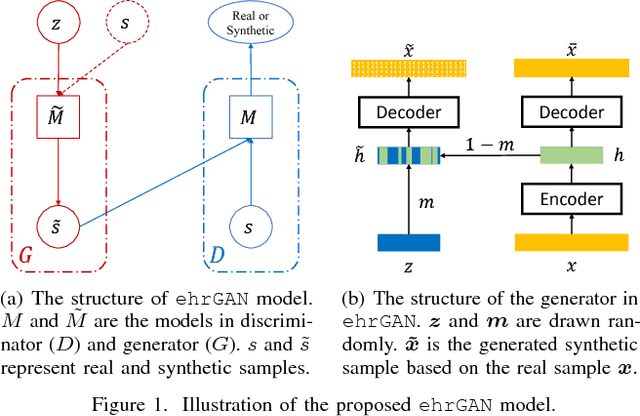

Boosting Deep Learning Risk Prediction with Generative Adversarial Networks for Electronic Health Records

Sep 06, 2017

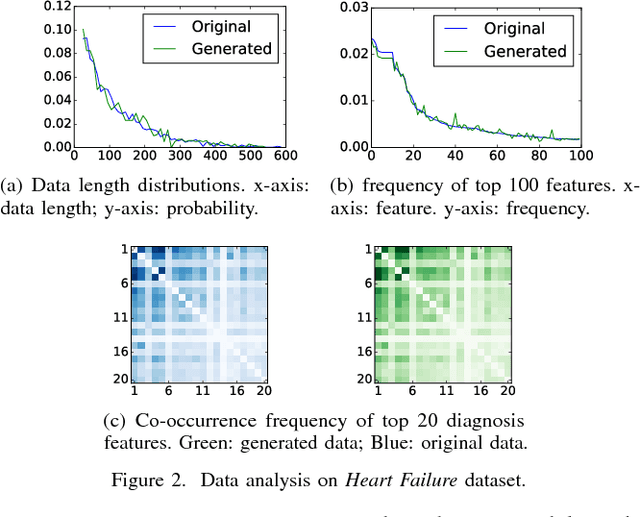

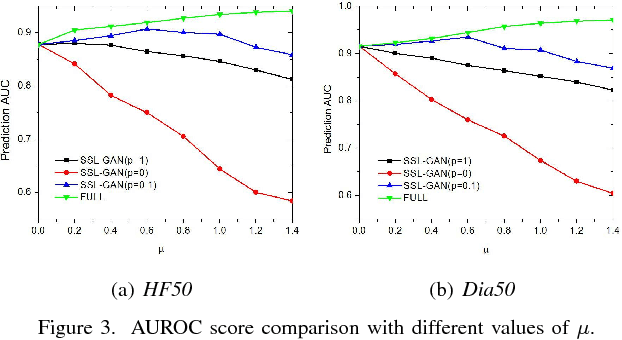

Abstract: The rapid growth of Electronic Health Records (EHRs), as well as the accompanying opportunities in Data-Driven Healthcare (DDH), has attracted widespread interest and attention. Recent progress in the design and applications of deep learning methods has shown promising results and is forcing massive changes in healthcare academia and industry, but most of these methods rely on large amounts of labeled data. In this work, we propose a general deep learning framework that is able to boost risk prediction performance with limited EHR data. Our model uses a modified generative adversarial network, named ehrGAN, which can provide plausible labeled EHR data by mimicking real patient records, to augment the training dataset in a semi-supervised learning manner. We use this generative model together with a convolutional neural network (CNN) based prediction model to improve onset prediction performance. Experiments on two real healthcare datasets demonstrate that our proposed framework produces realistic data samples and achieves significant improvements on classification tasks with the generated data over several state-of-the-art baselines.

Exploiting Convolutional Neural Network for Risk Prediction with Medical Feature Embedding

Jan 25, 2017

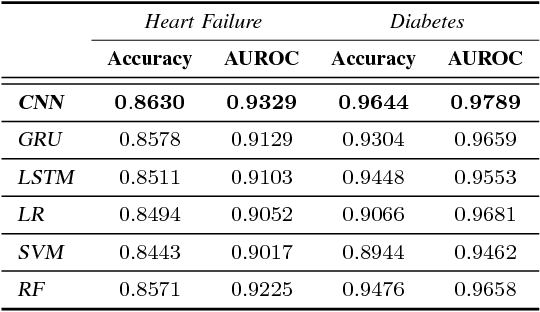

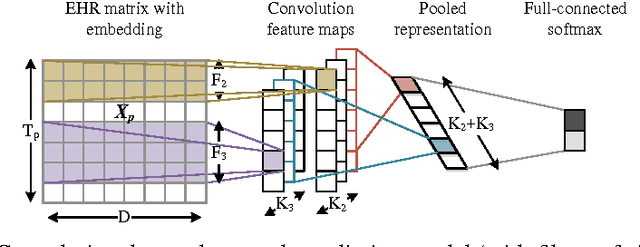

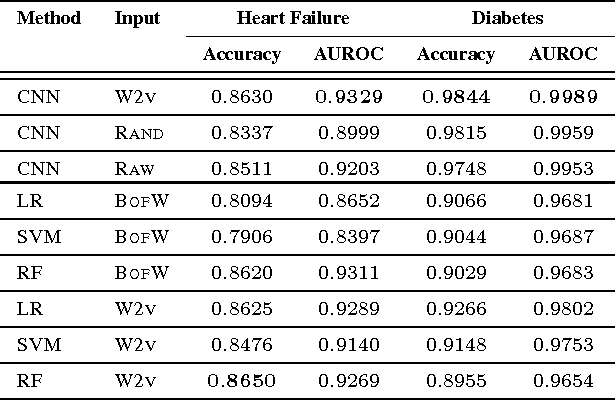

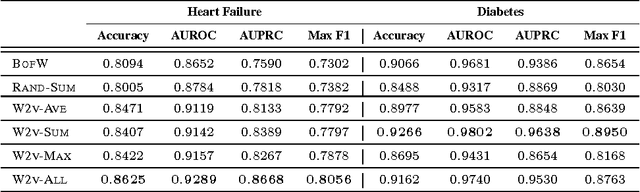

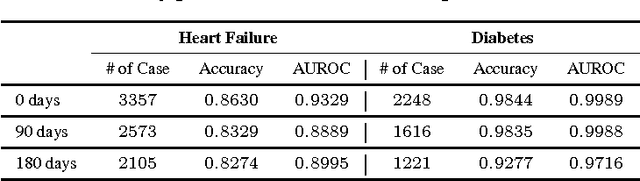

Abstract: The widespread availability of electronic health records (EHRs) promises to usher in the era of personalized medicine. However, extracting useful clinical representations from longitudinal EHR data remains challenging. In this paper, we explore deep neural network models with learned medical feature embedding to deal with the problems of high dimensionality and temporality. Specifically, we use a multi-layer convolutional neural network (CNN) to parameterize the model, which is thus able to capture the complex non-linear longitudinal evolution of EHRs. Our model can effectively capture local, short-term temporal dependencies in EHRs, which is beneficial for risk prediction. To account for high dimensionality, we use embedded medical features, which preserve natural medical concepts, in the CNN model. Our initial experiments produce promising results and demonstrate the effectiveness of both the medical feature embedding and the proposed convolutional neural network in risk prediction on cohorts of congestive heart failure and diabetes patients, compared with several strong baselines.
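The combination of medical feature embedding and temporal convolution described in this abstract can be sketched as a NumPy forward pass. Assumptions, all hypothetical: each visit is a set of integer medical-code ids, a visit is represented by the sum of its code embeddings, a single convolutional layer with ReLU and max-pooling over time feeds a logistic output, and all weights are random rather than trained. The paper's actual architecture (number of layers, pooling, how embeddings are learned) may differ.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical sizes: code vocabulary, embedding dim, conv filters, kernel width (in visits).
VOCAB, EMB_DIM, N_FILTERS, KERNEL = 50, 8, 4, 3

E = rng.normal(scale=0.1, size=(VOCAB, EMB_DIM))               # medical-code embedding table
K = rng.normal(scale=0.1, size=(N_FILTERS, KERNEL, EMB_DIM))   # temporal conv filters
b = np.zeros(N_FILTERS)
w_out, b_out = rng.normal(scale=0.1, size=N_FILTERS), 0.0      # logistic output layer


def embed_visits(visits):
    """Represent each visit (a list of code ids) as the sum of its code embeddings."""
    return np.stack([E[codes].sum(axis=0) for codes in visits])   # (T, EMB_DIM)


def conv1d_over_time(H):
    """'Valid' 1-D convolution (cross-correlation, as in most DL libraries) over visits, then ReLU.

    The kernel spans only KERNEL consecutive visits, which is what restricts
    each filter to local, short-term temporal patterns.
    """
    T = H.shape[0]
    out = np.empty((T - KERNEL + 1, N_FILTERS))
    for t in range(T - KERNEL + 1):
        window = H[t:t + KERNEL]                                      # (KERNEL, EMB_DIM)
        out[t] = np.tensordot(K, window, axes=([1, 2], [0, 1])) + b   # (N_FILTERS,)
    return np.maximum(out, 0.0)


def risk_score(visits):
    """Embed visits, convolve over time, max-pool, and map to a probability."""
    H = embed_visits(visits)
    feats = conv1d_over_time(H).max(axis=0)                          # max-pool over time
    return 1.0 / (1.0 + np.exp(-(feats @ w_out + b_out)))


patient = [[3, 17], [3], [8, 21, 40], [17, 8], [5]]                  # hypothetical code ids per visit
print(risk_score(patient))
```

Summing code embeddings turns a sparse, high-dimensional visit vector into a dense `EMB_DIM`-sized one, which is one simple way to address the dimensionality problem; the sketch assumes every patient has at least `KERNEL` visits.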
