Yunqiu Lv

Contrastive Conditional Latent Diffusion for Audio-visual Segmentation

Jul 31, 2023

Abstract: We propose a latent diffusion model with contrastive learning for audio-visual segmentation (AVS) to extensively explore the contribution of audio. We interpret AVS as a conditional generation task, where audio is defined as the conditional variable for segmenting the sound producer(s). Under this interpretation, it is especially necessary to model the correlation between audio and the final segmentation map to ensure that audio contributes. We introduce a latent diffusion model to our framework to achieve semantic-correlated representation learning. Specifically, our diffusion model learns the conditional generation process of the ground-truth segmentation map, leading to ground-truth aware inference when we perform the denoising process at the test stage. As a conditional diffusion model, we argue it is essential to ensure that the conditional variable contributes to the model output. We then introduce contrastive learning to our framework to learn audio-visual correspondence, which is proven consistent with maximizing the mutual information between the model prediction and the audio data. In this way, our latent diffusion model via contrastive learning explicitly maximizes the contribution of audio for AVS. Experimental results on the benchmark dataset verify the effectiveness of our solution. Code and results are online via our project page: https://github.com/OpenNLPLab/DiffusionAVS.
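The link between contrastive learning and mutual information mentioned in the abstract can be illustrated with the generic InfoNCE objective, which is a known lower bound on mutual information. The sketch below is not the paper's loss: the function name `info_nce`, the `temperature` value, and the use of paired audio and visual embeddings as inputs are all assumptions made for illustration.

```python
import numpy as np


def info_nce(audio_emb, visual_emb, temperature=0.1):
    """Generic InfoNCE loss over a batch of paired embeddings.

    Row i of audio_emb and row i of visual_emb are treated as a positive
    pair; every other row in the batch serves as a negative.
    (Hypothetical helper, not taken from the paper's code.)
    """
    # L2-normalise so the dot product is a cosine similarity.
    a = audio_emb / np.linalg.norm(audio_emb, axis=1, keepdims=True)
    v = visual_emb / np.linalg.norm(visual_emb, axis=1, keepdims=True)

    logits = a @ v.T / temperature
    # Subtract the row maximum for a numerically stable log-softmax.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))

    # Positive pairs sit on the diagonal of the similarity matrix.
    return float(-np.mean(np.diag(log_prob)))


# Usage: aligned pairs should score a lower loss than misaligned ones.
aligned = np.eye(4)
loss_matched = info_nce(aligned, aligned)
loss_shuffled = info_nce(aligned, np.roll(aligned, 1, axis=0))
```

Minimising this loss pulls each audio embedding toward its paired visual embedding and away from the others in the batch, which is one common way to make a conditioning signal matter to the output.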

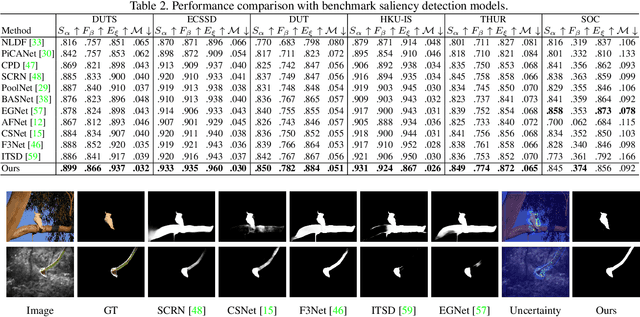

Joint Salient Object Detection and Camouflaged Object Detection via Uncertainty-aware Learning

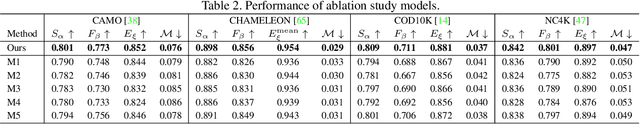

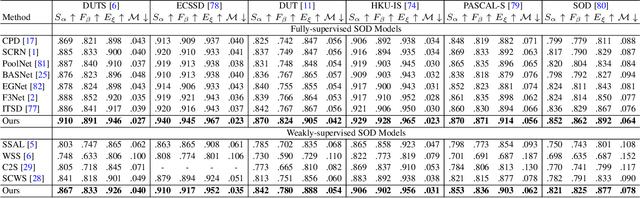

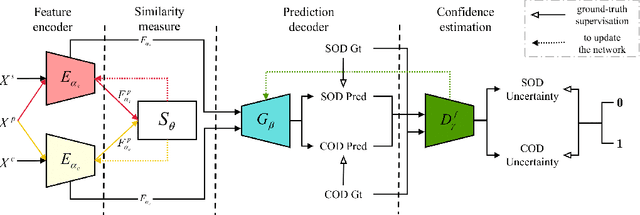

Jul 10, 2023

Abstract: Salient objects attract human attention and usually stand out clearly from their surroundings. In contrast, camouflaged objects share similar colors or textures with the environment. Consequently, salient objects are typically non-camouflaged, and camouflaged objects are usually not salient. Due to this inherently contradictory attribute, we introduce an uncertainty-aware learning pipeline to extensively explore the contradictory information of salient object detection (SOD) and camouflaged object detection (COD) via data-level and task-wise contradiction modeling. We first exploit the dataset correlation of these two tasks and claim that the easy samples in the COD dataset can serve as hard samples for SOD to improve the robustness of the SOD model. Based on the assumption that these two models should lead to activation maps highlighting different regions of the same input image, we further introduce a contrastive module with a joint-task contrastive learning framework to explicitly model the contradictory attributes of these two tasks. Different from conventional intra-task contrastive learning for unsupervised representation learning, our contrastive module is designed to model the task-wise correlation, leading to cross-task representation learning. To better understand the two tasks from the perspective of uncertainty, we extensively investigate uncertainty estimation techniques for modeling the main uncertainties of the two tasks, namely task uncertainty (for SOD) and data uncertainty (for COD), aiming to effectively estimate the challenging regions for each task to achieve difficulty-aware learning. Experimental results on benchmark datasets demonstrate that our solution leads to both state-of-the-art performance and informative uncertainty estimation.

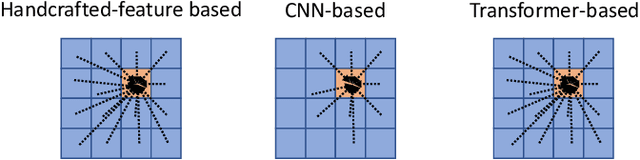

Weakly-supervised Contrastive Learning for Unsupervised Object Discovery

Jul 07, 2023

Abstract: Unsupervised object discovery (UOD) refers to the task of discriminating the whole region of objects from the background within a scene without relying on labeled datasets, which benefits the tasks of bounding-box-level localization and pixel-level segmentation. This task is promising due to its ability to discover objects in a generic manner. We roughly categorise existing techniques into two main directions, namely generative solutions based on image resynthesis, and clustering methods based on self-supervised models. We have observed that the former heavily relies on the quality of image reconstruction, while the latter shows limitations in effectively modeling semantic correlations. To directly target object discovery, we focus on the latter approach and propose a novel solution by incorporating weakly-supervised contrastive learning (WCL) to enhance semantic information exploration. We design a semantic-guided self-supervised learning model to extract high-level semantic features from images, which is achieved by fine-tuning the feature encoder of a self-supervised model, namely DINO, via WCL. Subsequently, we introduce Principal Component Analysis (PCA) to localize object regions. The principal projection direction, corresponding to the maximal eigenvalue, serves as an indicator of the object region(s). Extensive experiments on benchmark unsupervised object discovery datasets demonstrate the effectiveness of our proposed solution. The source code and experimental results are publicly available via our project page at https://github.com/npucvr/WSCUOD.git.
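The PCA localization step described in the abstract can be sketched as follows, assuming the encoder yields one feature vector per image patch. The function name `pca_object_mask`, the zero threshold on the projection, and the rule that resolves the sign ambiguity of the eigenvector (treating the smaller group of patches as the object) are illustrative assumptions, not details confirmed by the abstract.

```python
import numpy as np


def pca_object_mask(features, grid_hw):
    """Project patch features onto the first principal direction.

    features: (N, D) array, one row per image patch (hypothetical input).
    grid_hw:  (H, W) patch grid with H * W == N.
    Returns a boolean (H, W) mask of patches assigned to the object.
    """
    h, w = grid_hw
    centered = features - features.mean(axis=0, keepdims=True)
    cov = centered.T @ centered / max(len(features) - 1, 1)

    # eigh returns eigenvalues in ascending order, so the last column
    # is the direction associated with the maximal eigenvalue.
    _, eigvecs = np.linalg.eigh(cov)
    principal = eigvecs[:, -1]

    mask = (centered @ principal) > 0
    # Assumption: eigenvector sign is arbitrary, so we take the minority
    # side of the split to be the object.
    if mask.sum() > mask.size / 2:
        mask = ~mask
    return mask.reshape(h, w)


# Usage on synthetic features: a 2x2 "object" inside a 4x4 patch grid.
rng = np.random.default_rng(0)
feats = rng.normal(0.0, 0.01, size=(16, 8))
for idx in (5, 6, 9, 10):
    feats[idx, 0] += 1.0
mask = pca_object_mask(feats, (4, 4))
```

On real features the minority-side rule can fail for objects that fill most of the frame, so it should be read as a placeholder for whatever disambiguation the actual method uses.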

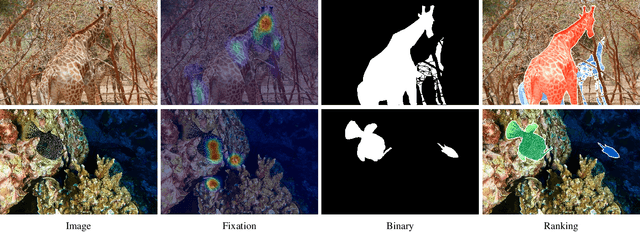

Towards Deeper Understanding of Camouflaged Object Detection

May 23, 2022

Abstract: Prey in the wild evolve to be camouflaged to avoid being recognized by predators. In this way, camouflage acts as a key defence mechanism across species that is critical to survival. To detect and segment the whole scope of a camouflaged object, camouflaged object detection (COD) is introduced as a binary segmentation task, with the binary ground truth camouflage map indicating the exact regions of the camouflaged objects. In this paper, we revisit this task and argue that the binary segmentation setting fails to fully capture the concept of camouflage. We find that explicitly modeling the conspicuousness of camouflaged objects against their particular backgrounds can not only lead to a better understanding of camouflage, but also provide guidance for designing more sophisticated camouflage techniques. Furthermore, we observe that it is some specific parts of camouflaged objects that make them detectable by predators. With the above understanding of camouflaged objects, we present the first triple-task learning framework to simultaneously localize, segment and rank camouflaged objects, indicating the conspicuousness level of camouflage. As no corresponding datasets exist for either the localization model or the ranking model, we generate localization maps with an eye tracker, which are then processed according to the instance-level labels to generate our ranking-based training and testing dataset. We also contribute the largest COD testing set to comprehensively analyse the performance of camouflaged object detection models. Experimental results show that our triple-task learning framework achieves new state-of-the-art performance, leading to a more explainable camouflaged object detection network. Our code, data and results are available at: https://github.com/JingZhang617/COD-Rank-Localize-and-Segment.

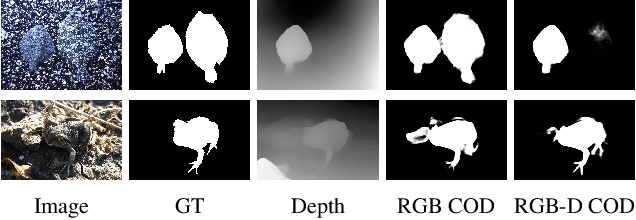

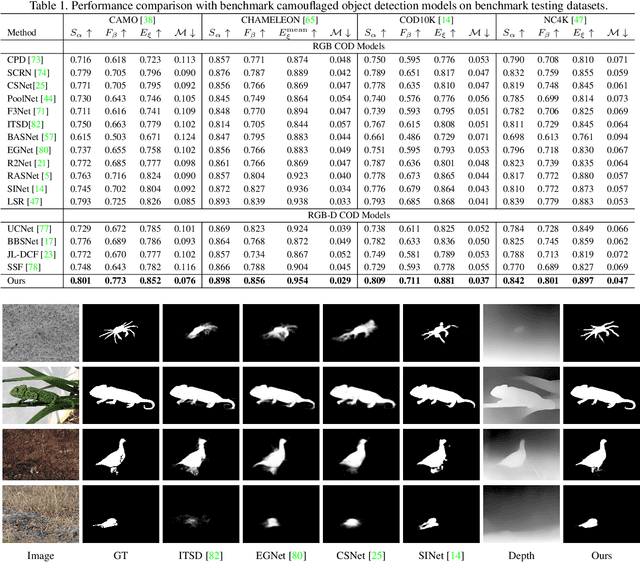

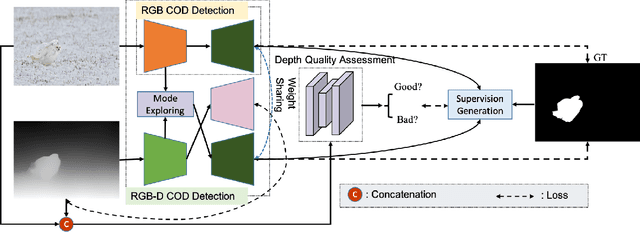

Depth-Guided Camouflaged Object Detection

Jun 26, 2021

Abstract: Camouflaged object detection (COD) aims to segment camouflaged objects hiding in the environment, which is challenging due to the similar appearance of camouflaged objects and their surroundings. Research in biology suggests that depth can provide useful object localization cues for camouflaged object discovery, as all animals have 3D perception ability. However, depth information has not been exploited for camouflaged object detection. To explore the contribution of depth for camouflage detection, we present a depth-guided camouflaged object detection network with pre-computed depth maps from existing monocular depth estimation methods. Due to the domain gap between the depth estimation dataset and our camouflaged object detection dataset, the generated depth may not be accurate enough to be directly used in our framework. We then introduce a depth quality assessment module to evaluate the quality of depth based on the model predictions from both the RGB COD branch and the RGB-D COD branch. During training, only high-quality depth is used to update the modal interaction module for multi-modal learning. During testing, our depth quality assessment module can effectively determine the contribution of depth and select the RGB branch or the RGB-D branch for camouflage prediction. Extensive experiments on various camouflaged object detection datasets prove the effectiveness of our solution in exploring depth information for camouflaged object detection. Our code and data are publicly available at: https://github.com/JingZhang617/RGBD-COD.
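One way to picture the training-time depth gating is to compare the two branches against the ground truth and keep the depth map only when the RGB-D branch does better. The abstract does not state the actual criterion, so the rule below, the binary cross-entropy measure, and the names `bce` and `depth_is_helpful` are all assumptions for illustration; the test-time selection, where no ground truth is available, is not covered by this sketch.

```python
import numpy as np


def bce(pred, target, eps=1e-7):
    """Mean binary cross-entropy between a probability map and a mask."""
    pred = np.clip(pred, eps, 1.0 - eps)
    return float(-np.mean(target * np.log(pred)
                          + (1.0 - target) * np.log(1.0 - pred)))


def depth_is_helpful(rgb_pred, rgbd_pred, gt):
    """Hypothetical gate: treat depth as high quality for this sample
    only if the RGB-D branch fits the ground truth better than RGB."""
    return bce(rgbd_pred, gt) < bce(rgb_pred, gt)


# Usage with toy 2x2 maps.
gt = np.array([[0.0, 1.0], [1.0, 0.0]])
rgb_pred = np.full((2, 2), 0.5)                  # uninformative RGB branch
good_rgbd = np.array([[0.1, 0.9], [0.9, 0.1]])   # depth helps
bad_rgbd = 1.0 - good_rgbd                       # depth misleads

use_good = depth_is_helpful(rgb_pred, good_rgbd, gt)
use_bad = depth_is_helpful(rgb_pred, bad_rgbd, gt)
```

In a training loop, a gate of this kind would decide per sample whether the multi-modal interaction module receives a gradient update.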

Transformer Transforms Salient Object Detection and Camouflaged Object Detection

Apr 20, 2021

Abstract: Transformer networks, which originate from machine translation, are particularly good at modeling long-range dependencies within a long sequence. Currently, transformer networks are making revolutionary progress in various vision tasks ranging from high-level classification tasks to low-level dense prediction tasks. In this paper, we conduct research on applying transformer networks to salient object detection (SOD). Specifically, we adopt the dense transformer backbone for fully supervised RGB image based SOD, RGB-D image pair based SOD, and weakly supervised SOD via scribble supervision. As an extension, we also apply our fully supervised model to the task of camouflaged object detection (COD) for camouflaged object segmentation. For the fully supervised models, we define the dense transformer backbone as the feature encoder, and design a very simple decoder to produce a one-channel saliency map (or camouflage map for the COD task). For the weakly supervised model, as there exists no structure information in the scribble annotation, we first adopt the recently proposed Gated-CRF loss to effectively model the pair-wise relationships for accurate model prediction. Then, we introduce a self-supervised learning strategy to push the model to produce scale-invariant predictions, which is proven effective for weakly supervised models and models trained on small training datasets. Extensive experimental results on various SOD and COD tasks (fully supervised RGB image based SOD, fully supervised RGB-D image pair based SOD, weakly supervised SOD via scribble supervision, and fully supervised RGB image based COD) illustrate that transformer networks can transform salient object detection and camouflaged object detection, leading to new benchmarks for each related task.
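The scale-invariance idea can be sketched as a consistency loss: the prediction for a downscaled image should match the downscaled prediction for the full image. The abstract does not give the exact formulation, so the 2x average pooling, the L1 distance, and the names `downscale2x` and `scale_consistency_loss` are assumptions; `model` stands in for any callable that maps a 2D array to a same-sized prediction map.

```python
import numpy as np


def downscale2x(x):
    """Halve resolution by 2x2 average pooling (odd edges are cropped)."""
    h, w = x.shape
    cropped = x[: h - h % 2, : w - w % 2]
    return cropped.reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))


def scale_consistency_loss(model, image):
    """Hypothetical self-supervised loss: L1 gap between the downscaled
    full-resolution prediction and the prediction on the downscaled input."""
    pred_full = model(image)
    pred_small = model(downscale2x(image))
    return float(np.mean(np.abs(downscale2x(pred_full) - pred_small)))


# Usage: a linear map commutes with average pooling, a squaring map does not.
image = np.arange(16, dtype=float).reshape(4, 4)
loss_linear = scale_consistency_loss(lambda x: 0.5 * x, image)
loss_square = scale_consistency_loss(lambda x: x ** 2, image)
```

Because the loss needs no labels, it can be applied to every training image, which is consistent with the abstract's remark that the strategy helps weakly supervised models and small datasets.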

Uncertainty-aware Joint Salient Object and Camouflaged Object Detection

Apr 06, 2021

Abstract: Visual salient object detection (SOD) aims at finding the salient object(s) that attract human attention, while camouflaged object detection (COD), on the contrary, intends to discover the camouflaged object(s) hidden in their surroundings. In this paper, we propose a paradigm of leveraging the contradictory information to enhance the detection ability of both salient object detection and camouflaged object detection. We start by exploiting the easy positive samples in the COD dataset to serve as hard positive samples in the SOD task to improve the robustness of the SOD model. Then, we introduce a similarity measure module to explicitly model the contradicting attributes of these two tasks. Furthermore, considering the uncertainty of labeling in both tasks' datasets, we propose an adversarial learning network to achieve both a higher-order similarity measure and network confidence estimation. Experimental results on benchmark datasets demonstrate that our solution leads to state-of-the-art (SOTA) performance for both tasks.
