Yi-Chang James Tsai

Y-net: Multi-scale feature aggregation network with wavelet structure similarity loss function for single image dehazing

Mar 31, 2020

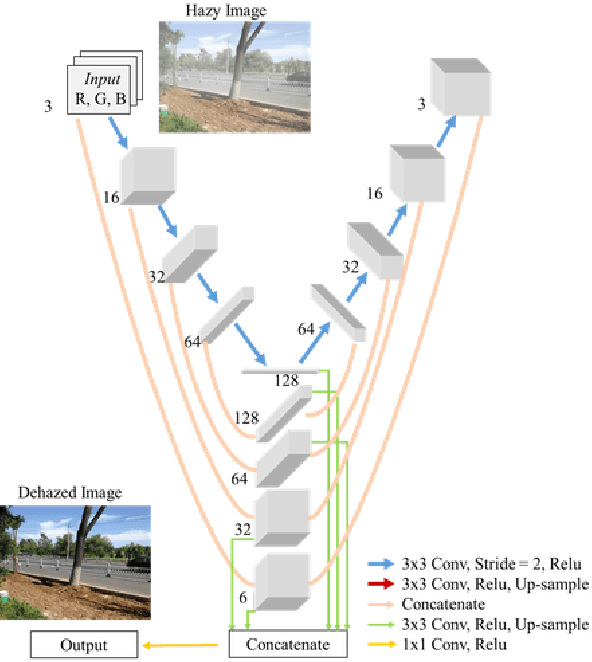

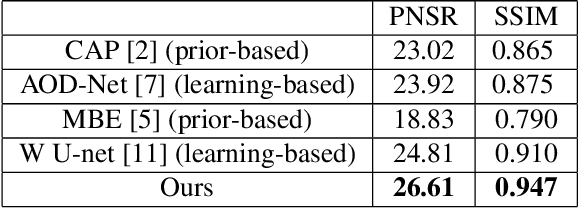

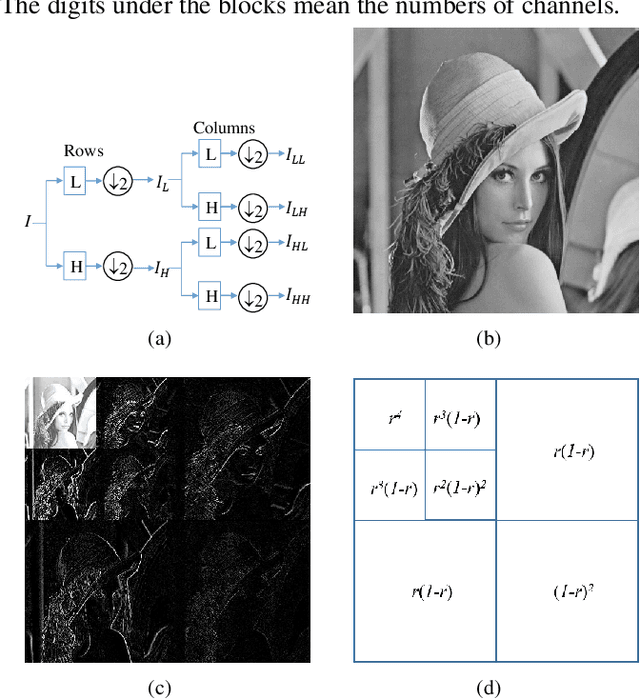

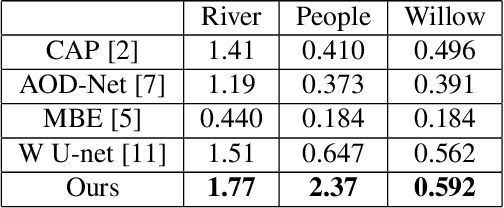

Abstract:Single image dehazing is the ill-posed two-dimensional signal reconstruction problem. Recently, deep convolutional neural networks (CNN) have been successfully used in many computer vision problems. In this paper, we propose a Y-net that is named for its structure. This network reconstructs clear images by aggregating multi-scale features maps. Additionally, we propose a Wavelet Structure SIMilarity (W-SSIM) loss function in the training step. In the proposed loss function, discrete wavelet transforms are applied repeatedly to divide the image into differently sized patches with different frequencies and scales. The proposed loss function is the accumulation of SSIM loss of various patches with respective ratios. Extensive experimental results demonstrate that the proposed Y-net with the W-SSIM loss function restores high-quality clear images and outperforms state-of-the-art algorithms. Code and models are available at https://github.com/dectrfov/Y-net.

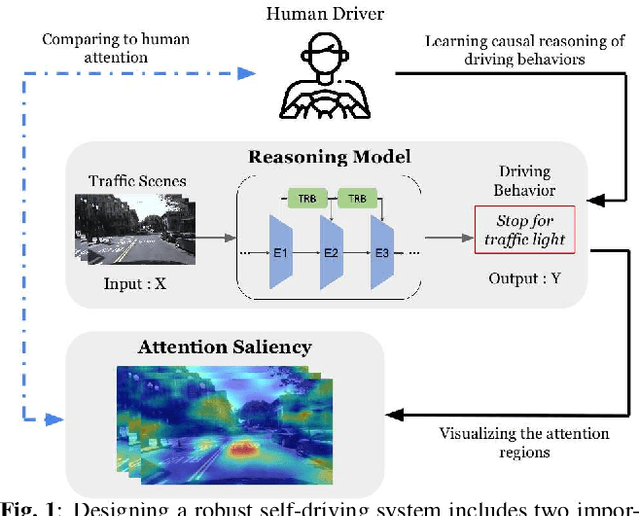

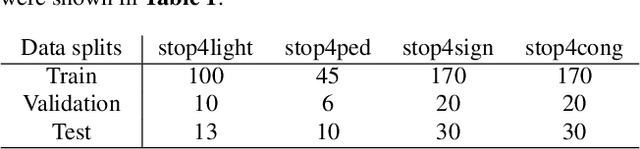

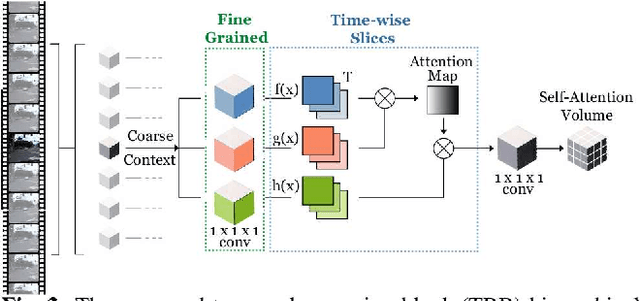

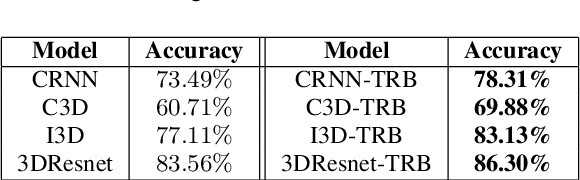

Interpretable Self-Attention Temporal Reasoning for Driving Behavior Understanding

Nov 06, 2019

Abstract:Performing driving behaviors based on causal reasoning is essential to ensure driving safety. In this work, we investigated how state-of-the-art 3D Convolutional Neural Networks (CNNs) perform on classifying driving behaviors based on causal reasoning. We proposed a perturbation-based visual explanation method to inspect the models' performance visually. By examining the video attention saliency, we found that existing models could not precisely capture the causes (e.g., traffic light) of the specific action (e.g., stopping). Therefore, the Temporal Reasoning Block (TRB) was proposed and introduced to the models. With the TRB models, we achieved the accuracy of $\mathbf{86.3\%}$, which outperform the state-of-the-art 3D CNNs from previous works. The attention saliency also demonstrated that TRB helped models focus on the causes more precisely. With both numerical and visual evaluations, we concluded that our proposed TRB models were able to provide accurate driving behavior prediction by learning the causal reasoning of the behaviors.

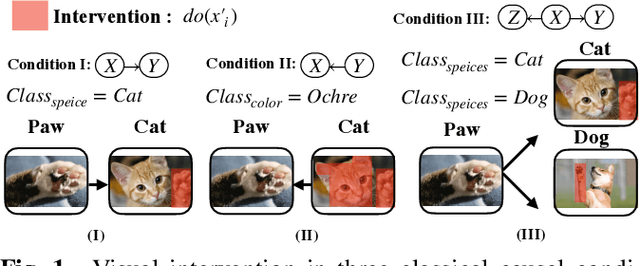

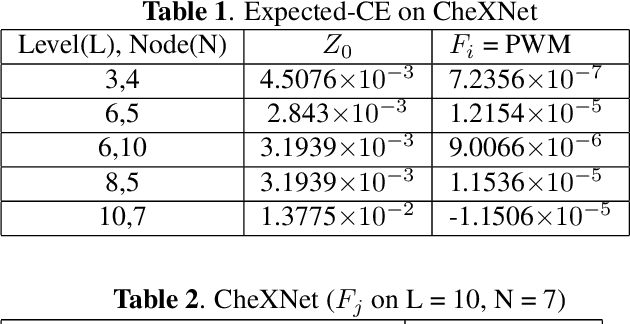

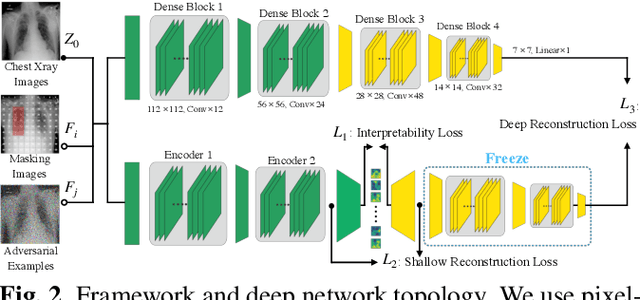

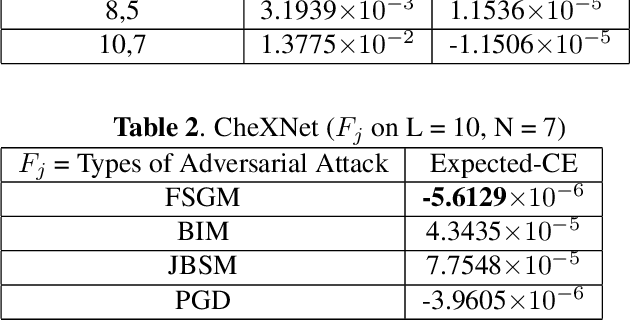

When Causal Intervention Meets Image Masking and Adversarial Perturbation for Deep Neural Networks

Feb 13, 2019

Abstract:Discovering and exploiting the causality in deep neural networks (DNNs) are crucial challenges for understanding and reasoning causal effects (CE) on an explainable visual model. "Intervention" has been widely used for recognizing a causal relation ontologically. In this paper, we propose a causal inference framework for visual reasoning via do-calculus. To study the intervention effects on pixel-level feature(s) for causal reasoning, we introduce pixel-wise masking and adversarial perturbation. In our framework, CE is calculated using features in a latent space and perturbed prediction from a DNN-based model. We further provide a first look into the characteristics of discovered CE of adversarially perturbed images generated by gradient-based methods. Experimental results show that CE is a competitive and robust index for understanding DNNs when compared with conventional methods such as class-activation mappings (CAMs) on the ChestX-ray 14 dataset for human-interpretable feature(s) (e.g., symptom) reasoning. Moreover, CE holds promises for detecting adversarial examples as it possesses distinct characteristics in the presence of adversarial perturbations.

Synthesizing New Retinal Symptom Images by Multiple Generative Models

Feb 11, 2019

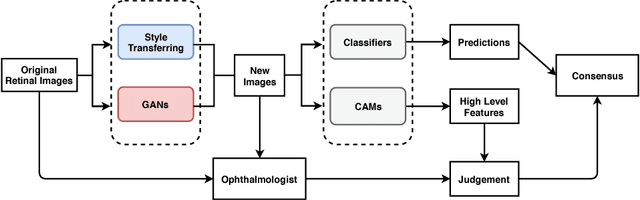

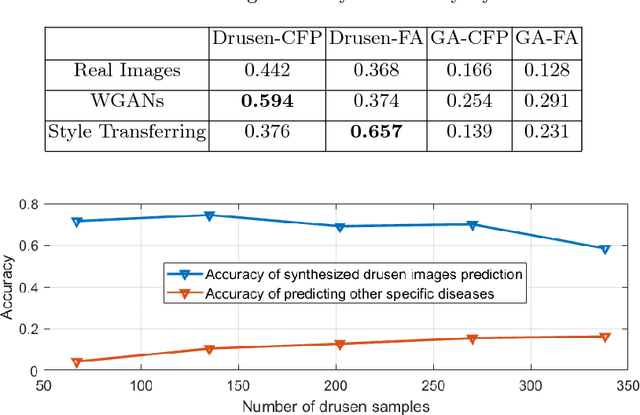

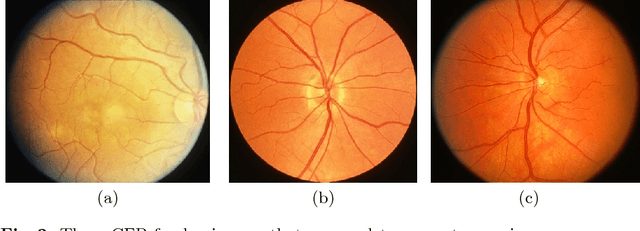

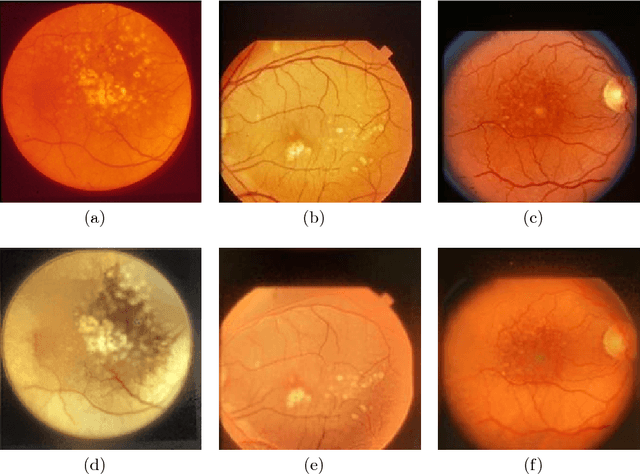

Abstract:Age-Related Macular Degeneration (AMD) is an asymptomatic retinal disease which may result in loss of vision. There is limited access to high-quality relevant retinal images and poor understanding of the features defining sub-classes of this disease. Motivated by recent advances in machine learning we specifically explore the potential of generative modeling, using Generative Adversarial Networks (GANs) and style transferring, to facilitate clinical diagnosis and disease understanding by feature extraction. We design an analytic pipeline which first generates synthetic retinal images from clinical images; a subsequent verification step is applied. In the synthesizing step we merge GANs (DCGANs and WGANs architectures) and style transferring for the image generation, whereas the verified step controls the accuracy of the generated images. We find that the generated images contain sufficient pathological details to facilitate ophthalmologists' task of disease classification and in discovery of disease relevant features. In particular, our system predicts the drusen and geographic atrophy sub-classes of AMD. Furthermore, the performance using CFP images for GANs outperforms the classification based on using only the original clinical dataset. Our results are evaluated using existing classifier of retinal diseases and class activated maps, supporting the predictive power of the synthetic images and their utility for feature extraction. Our code examples are available online.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge