Yi Hua

Watch Out Your Album! On the Inadvertent Privacy Memorization in Multi-Modal Large Language Models

Mar 03, 2025

Abstract: Multi-Modal Large Language Models (MLLMs) have exhibited remarkable performance on various vision-language tasks such as Visual Question Answering (VQA). Despite accumulating evidence of privacy concerns associated with task-relevant content, it remains unclear whether MLLMs inadvertently memorize private content that is entirely irrelevant to the training tasks. In this paper, we investigate how randomly generated task-irrelevant private content can become spuriously correlated with downstream objectives due to partial mini-batch training dynamics, thus causing inadvertent memorization. Concretely, we embed randomly generated task-irrelevant watermarks into VQA fine-tuning images at varying probabilities and propose a novel probing framework to determine whether MLLMs have inadvertently encoded such content. Our experiments reveal that MLLMs exhibit notably different training behaviors in partial mini-batch settings with task-irrelevant watermarks embedded. Furthermore, through layer-wise probing, we demonstrate that MLLMs trigger distinct representational patterns when encountering previously seen task-irrelevant knowledge, even if this knowledge does not influence their output during prompting. Our code is available at https://github.com/illusionhi/ProbingPrivacy.
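The watermarking step mentioned in the abstract (embedding task-irrelevant content into fine-tuning images at a chosen probability) can be illustrated with a minimal sketch. Everything here is an assumption for illustration: the function names `embed_watermark` and `watermark_dataset`, the top-left placement, and the use of nested lists as images are hypothetical and are not taken from the paper or its repository.

```python
import random


def embed_watermark(image, patch, rng, p):
    """Return (copy_of_image, embedded_flag).

    With probability p the patch overwrites the top-left corner of the copy.
    The placement and the patch format are illustrative assumptions.
    """
    out = [row[:] for row in image]  # copy so the input image is not mutated
    if rng.random() >= p:  # rng.random() is in [0, 1): p=0 never embeds, p=1 always does
        return out, False
    for r, patch_row in enumerate(patch):
        for c, value in enumerate(patch_row):
            out[r][c] = value
    return out, True


def watermark_dataset(images, patch, p, seed=0):
    """Apply embed_watermark to each image with a seeded, reproducible generator."""
    rng = random.Random(seed)
    return [embed_watermark(img, patch, rng, p) for img in images]


if __name__ == "__main__":
    images = [[[0] * 4 for _ in range(4)] for _ in range(8)]
    patch = [[255, 255], [255, 255]]
    marked = watermark_dataset(images, patch, p=0.5, seed=1)
    print(sum(flag for _, flag in marked), "of", len(marked), "images watermarked")
```

Keeping the per-image flag makes it possible to check later whether a probe separates watermarked from clean inputs; how the paper records this is not stated in the abstract.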

fNeRF: High Quality Radiance Fields from Practical Cameras

Jun 15, 2024

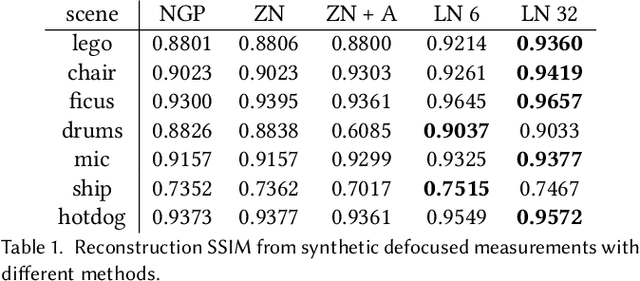

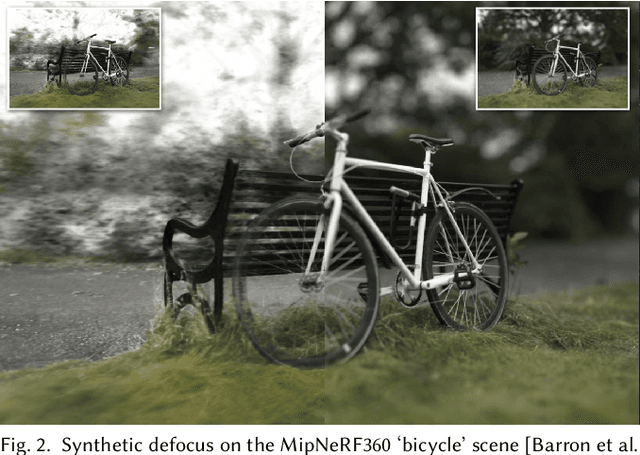

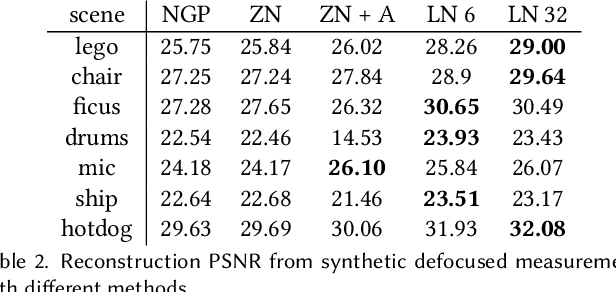

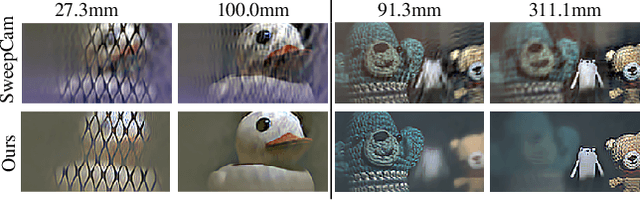

Abstract: In recent years, the development of Neural Radiance Fields has enabled a previously unseen level of photo-realistic 3D reconstruction of scenes and objects from multi-view camera data. However, previous methods use an oversimplified pinhole camera model, resulting in defocus blur being "baked" into the reconstructed radiance field. We propose a modification to the ray casting that leverages the optics of lenses to enhance scene reconstruction in the presence of defocus blur. This allows us to improve the quality of radiance field reconstructions from the measurements of a practical camera with finite aperture. We show that the proposed model matches the defocus blur behavior of practical cameras more closely than pinhole models and other approximations of defocus blur models, particularly in the presence of partial occlusions. This allows us to achieve sharper reconstructions, improving the PSNR on validation of all-in-focus images, on both synthetic and real datasets, by up to 3 dB.
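To make the contrast between a pinhole and a finite-aperture camera concrete, the sketch below evaluates the textbook thin-lens blur-circle diameter, c = A f |d - s| / (d (s - f)), for aperture diameter A, focal length f, focus distance s, and scene depth d. This is the standard thin-lens relation, not the ray-casting model proposed in the paper; the function name and the example values are assumptions for illustration.

```python
def circle_of_confusion(aperture, focal_length, focus_dist, depth):
    """Blur-circle diameter on the sensor under the thin-lens model.

    All arguments share one length unit (e.g. metres). A point at `depth`
    is imaged through a lens focused at `focus_dist`.
    """
    if focus_dist <= focal_length:
        raise ValueError("focus distance must exceed the focal length")
    if depth <= 0:
        raise ValueError("depth must be positive")
    return (aperture * focal_length * abs(depth - focus_dist)
            / (depth * (focus_dist - focal_length)))


if __name__ == "__main__":
    # Hypothetical 50 mm lens with a 25 mm aperture, focused at 2 m.
    for depth in (1.0, 2.0, 4.0):
        c = circle_of_confusion(0.025, 0.05, 2.0, depth)
        print(f"depth {depth} m -> blur diameter {c * 1e3:.3f} mm")
```

Setting the aperture to zero recovers the pinhole case, where every depth is rendered sharp; that is the simplification the abstract describes as baking blur into the radiance field when the real camera has a finite aperture.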

Angle Sensitive Pixels for Lensless Imaging on Spherical Sensors

Jun 28, 2023

Abstract: We propose OrbCam, a lensless architecture for imaging with spherical sensors. Prior work on lensless imaging techniques has focused largely on planar sensors; for such designs, it is important to use a modulation element, e.g. amplitude or phase masks, to construct an invertible imaging system. In contrast, we show that the diversity of pixel orientations on a curved surface is sufficient to improve the conditioning of the mapping between the scene and the sensor. Hence, when imaging on a spherical sensor, all pixels can have the same angular response function, such that the lensless imager is composed of pixels that are identical to each other and differ only in their orientations. We provide the computational tools for the design of the angular response of the pixels in a spherical sensor that leads to well-conditioned and noise-robust measurements. We validate our design in both simulation and a lab prototype. The implication of our design is that lensless imaging can be enabled easily for curved and flexible surfaces, thereby opening up a new set of application domains.

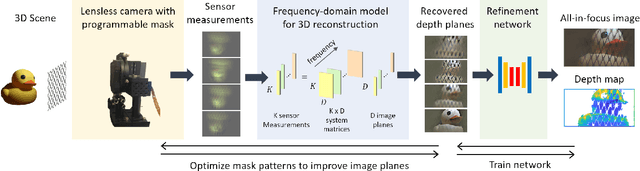

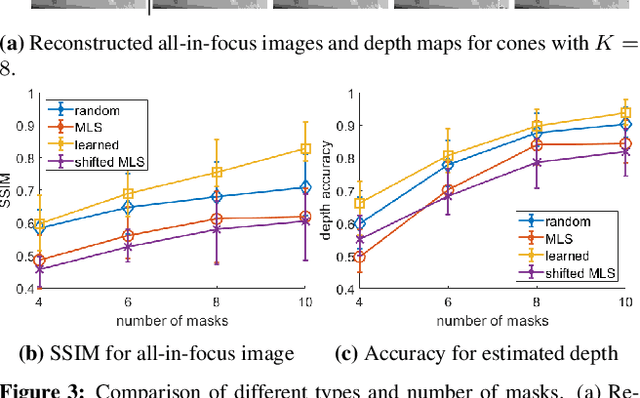

A Simple Framework for 3D Lensless Imaging with Programmable Masks

Aug 18, 2021

Abstract: Lensless cameras provide a framework to build thin imaging systems by replacing the lens in a conventional camera with an amplitude or phase mask near the sensor. Existing methods for lensless imaging can recover the depth and intensity of the scene, but they require solving computationally expensive inverse problems. Furthermore, existing methods struggle to recover dense scenes with large depth variations. In this paper, we propose a lensless imaging system that captures a small number of measurements using different patterns on a programmable mask. In this context, we make three contributions. First, we present a fast recovery algorithm to recover textures on a fixed number of depth planes in the scene. Second, we consider the mask design problem for programmable lensless cameras and provide a design template for optimizing the mask patterns with the goal of improving depth estimation. Third, we use a refinement network as a post-processing step to identify and remove artifacts in the reconstruction. These modifications are evaluated extensively with experimental results on a lensless camera prototype to showcase the performance benefits of the optimized masks and recovery algorithms over the state of the art.

* Supplementary material available at https://github.com/CSIPlab/Programmable3Dcam.git
