Xingyu Meng

OpenLS-DGF: An Adaptive Open-Source Dataset Generation Framework for Machine Learning Tasks in Logic Synthesis

Nov 16, 2024

Abstract:This paper introduces OpenLS-DGF, an adaptive logic synthesis dataset generation framework, to enhance machine learning~(ML) applications within the logic synthesis process. Previous dataset generation flows were tailored for specific tasks or lacked integrated machine learning capabilities. While OpenLS-DGF supports various machine learning tasks by encapsulating the three fundamental steps of logic synthesis: Boolean representation, logic optimization, and technology mapping. It preserves the original information in both Verilog and machine-learning-friendly GraphML formats. The verilog files offer semi-customizable capabilities, enabling researchers to insert additional steps and incrementally refine the generated dataset. Furthermore, OpenLS-DGF includes an adaptive circuit engine that facilitates the final dataset management and downstream tasks. The generated OpenLS-D-v1 dataset comprises 46 combinational designs from established benchmarks, totaling over 966,000 Boolean circuits. OpenLS-D-v1 supports integrating new data features, making it more versatile for new challenges. This paper demonstrates the versatility of OpenLS-D-v1 through four distinct downstream tasks: circuit classification, circuit ranking, quality of results (QoR) prediction, and probability prediction. Each task is chosen to represent essential steps of logic synthesis, and the experimental results show the generated dataset from OpenLS-DGF achieves prominent diversity and applicability. The source code and datasets are available at https://github.com/Logic-Factory/ACE/blob/master/OpenLS-DGF/readme.md.

An Adaptive Open-Source Dataset Generation Framework for Machine Learning Tasks in Logic Synthesis

Nov 14, 2024

Abstract:This paper introduces an adaptive logic synthesis dataset generation framework designed to enhance machine learning applications within the logic synthesis process. Unlike previous dataset generation flows that were tailored for specific tasks or lacked integrated machine learning capabilities, the proposed framework supports a comprehensive range of machine learning tasks by encapsulating the three fundamental steps of logic synthesis: Boolean representation, logic optimization, and technology mapping. It preserves the original information in the intermediate files that can be stored in both Verilog and Graphmal format. Verilog files enable semi-customizability, allowing researchers to add steps and incrementally refine the generated dataset. The framework also includes an adaptive circuit engine to facilitate the loading of GraphML files for final dataset packaging and sub-dataset extraction. The generated OpenLS-D dataset comprises 46 combinational designs from established benchmarks, totaling over 966,000 Boolean circuits, with each design containing 21,000 circuits generated from 1000 synthesis recipes, including 7000 Boolean networks, 7000 ASIC netlists, and 7000 FPGA netlists. Furthermore, OpenLS-D supports integrating newly desired data features, making it more versatile for new challenges. The utility of OpenLS-D is demonstrated through four distinct downstream tasks: circuit classification, circuit ranking, quality of results (QoR) prediction, and probability prediction. Each task highlights different internal steps of logic synthesis, with the datasets extracted and relabeled from the OpenLS-D dataset using the circuit engine. The experimental results confirm the dataset's diversity and extensive applicability. The source code and datasets are available at https://github.com/Logic-Factory/ACE/blob/master/OpenLS-D/readme.md.

Uni-ISP: Unifying the Learning of ISPs from Multiple Cameras

Jun 03, 2024

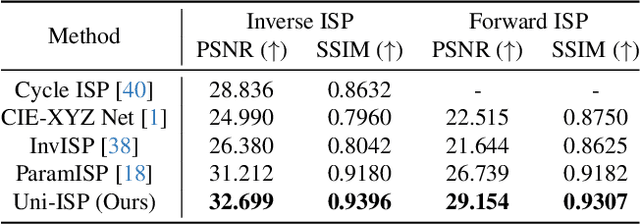

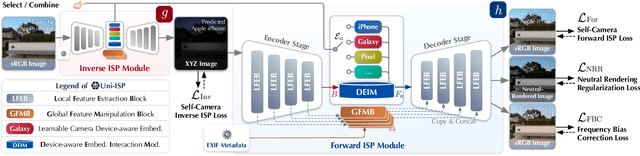

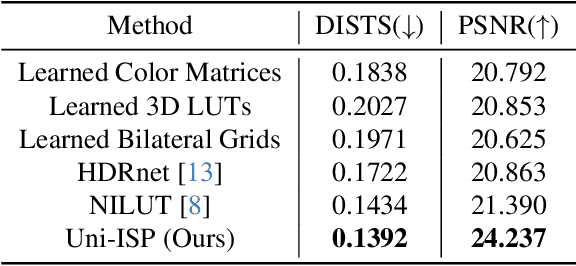

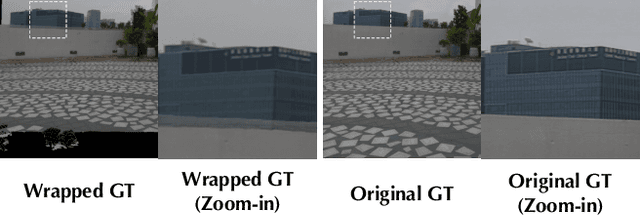

Abstract:Modern end-to-end image signal processors (ISPs) can learn complex mappings from RAW/XYZ data to sRGB (or inverse), opening new possibilities in image processing. However, as the diversity of camera models continues to expand, developing and maintaining individual ISPs is not sustainable in the long term, which inherently lacks versatility, hindering the adaptability to multiple camera models. In this paper, we propose a novel pipeline, Uni-ISP, which unifies the learning of ISPs from multiple cameras, offering an accurate and versatile processor to multiple camera models. The core of Uni-ISP is leveraging device-aware embeddings through learning inverse/forward ISPs and its special training scheme. By doing so, Uni-ISP not only improves the performance of inverse/forward ISPs but also unlocks a variety of new applications inaccessible to existing learned ISPs. Moreover, since there is no dataset synchronously captured by multiple cameras for training, we construct a real-world 4K dataset, FiveCam, comprising more than 2,400 pairs of sRGB-RAW images synchronously captured by five smartphones. We conducted extensive experiments demonstrating Uni-ISP's accuracy in inverse/forward ISPs (with improvements of +1.5dB/2.4dB PSNR), its versatility in enabling new applications, and its adaptability to new camera models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge