Tryphon Lambrou

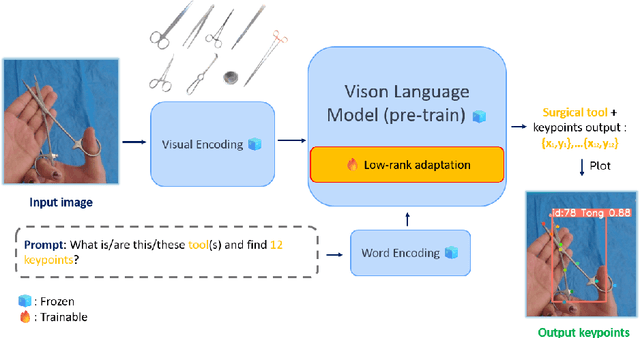

Estimating 2D Keypoints of Surgical Tools Using Vision-Language Models with Low-Rank Adaptation

Aug 28, 2025

Abstract:This paper presents a novel pipeline for 2D keypoint estima- tion of surgical tools by leveraging Vision Language Models (VLMs) fine- tuned using a low rank adjusting (LoRA) technique. Unlike traditional Convolutional Neural Network (CNN) or Transformer-based approaches, which often suffer from overfitting in small-scale medical datasets, our method harnesses the generalization capabilities of pre-trained VLMs. We carefully design prompts to create an instruction-tuning dataset and use them to align visual features with semantic keypoint descriptions. Experimental results show that with only two epochs of fine tuning, the adapted VLM outperforms the baseline models, demonstrating the ef- fectiveness of LoRA in low-resource scenarios. This approach not only improves keypoint detection performance, but also paves the way for future work in 3D surgical hands and tools pose estimation.

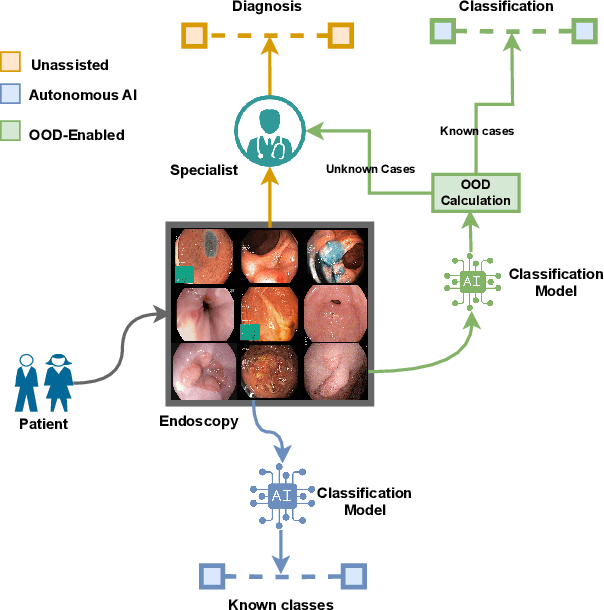

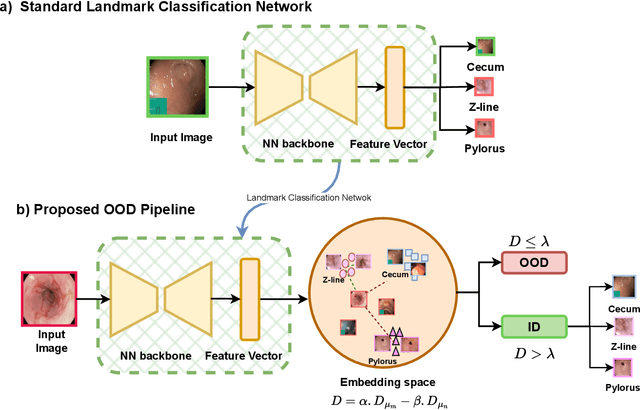

NCDD: Nearest Centroid Distance Deficit for Out-Of-Distribution Detection in Gastrointestinal Vision

Dec 02, 2024

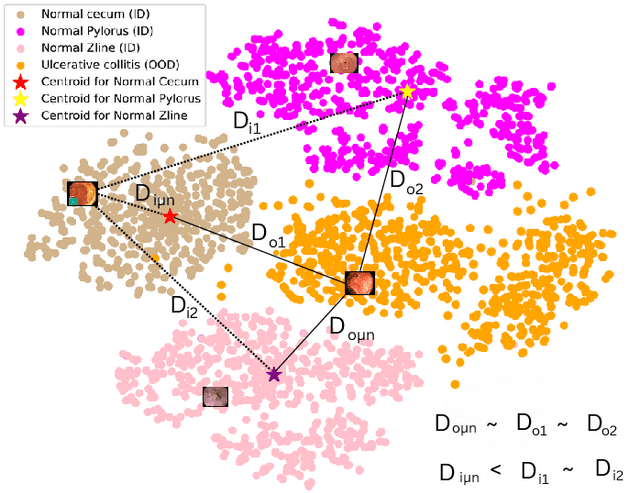

Abstract:The integration of deep learning tools in gastrointestinal vision holds the potential for significant advancements in diagnosis, treatment, and overall patient care. A major challenge, however, is these tools' tendency to make overconfident predictions, even when encountering unseen or newly emerging disease patterns, undermining their reliability. We address this critical issue of reliability by framing it as an out-of-distribution (OOD) detection problem, where previously unseen and emerging diseases are identified as OOD examples. However, gastrointestinal images pose a unique challenge due to the overlapping feature representations between in- Distribution (ID) and OOD examples. Existing approaches often overlook this characteristic, as they are primarily developed for natural image datasets, where feature distinctions are more apparent. Despite the overlap, we hypothesize that the features of an in-distribution example will cluster closer to the centroids of their ground truth class, resulting in a shorter distance to the nearest centroid. In contrast, OOD examples maintain an equal distance from all class centroids. Based on this observation, we propose a novel nearest-centroid distance deficit (NCCD) score in the feature space for gastrointestinal OOD detection. Evaluations across multiple deep learning architectures and two publicly available benchmarks, Kvasir2 and Gastrovision, demonstrate the effectiveness of our approach compared to several state-of-the-art methods. The code and implementation details are publicly available at: https://github.com/bhattarailab/NCDD

TTA-OOD: Test-time Augmentation for Improving Out-of-Distribution Detection in Gastrointestinal Vision

Jul 19, 2024

Abstract:Deep learning has significantly advanced the field of gastrointestinal vision, enhancing disease diagnosis capabilities. One major challenge in automating diagnosis within gastrointestinal settings is the detection of abnormal cases in endoscopic images. Due to the sparsity of data, this process of distinguishing normal from abnormal cases has faced significant challenges, particularly with rare and unseen conditions. To address this issue, we frame abnormality detection as an out-of-distribution (OOD) detection problem. In this setup, a model trained on In-Distribution (ID) data, which represents a healthy GI tract, can accurately identify healthy cases, while abnormalities are detected as OOD, regardless of their class. We introduce a test-time augmentation segment into the OOD detection pipeline, which enhances the distinction between ID and OOD examples, thereby improving the effectiveness of existing OOD methods with the same model. This augmentation shifts the pixel space, which translates into a more distinct semantic representation for OOD examples compared to ID examples. We evaluated our method against existing state-of-the-art OOD scores, showing improvements with test-time augmentation over the baseline approach.

MRI Brain Tumor Segmentation using Random Forests and Fully Convolutional Networks

Sep 13, 2019

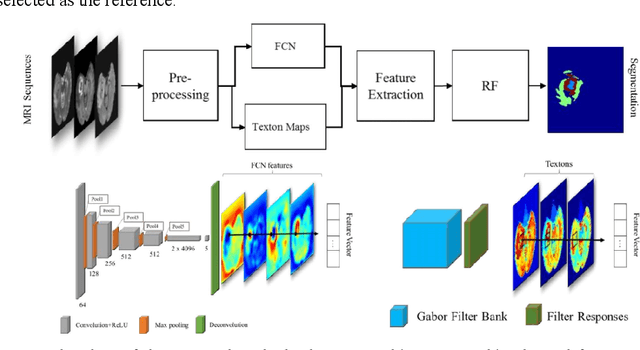

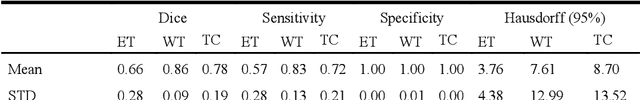

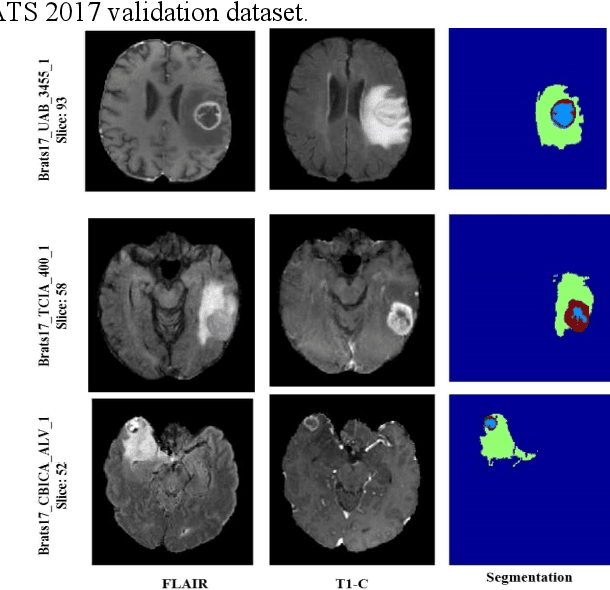

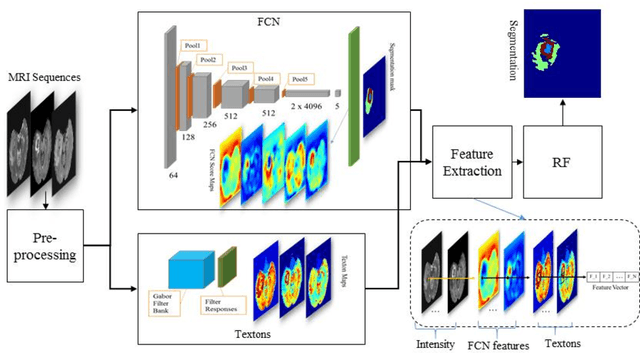

Abstract:In this paper, we propose a novel learning based method for automated segmentation of brain tumor in multimodal MRI images, which incorporates two sets of machine -learned and hand crafted features. Fully convolutional networks (FCN) forms the machine learned features and texton based features are considered as hand-crafted features. Random forest (RF) is used to classify the MRI image voxels into normal brain tissues and different parts of tumors, i.e. edema, necrosis and enhancing tumor. The method was evaluated on BRATS 2017 challenge dataset. The results show that the proposed method provides promising segmentations. The mean Dice overlap measure for automatic brain tumor segmentation against ground truth is 0.86, 0.78 and 0.66 for whole tumor, core and enhancing tumor, respectively.

* Published in the pre-conference proceeding of "2017 International MICCAI BraTS Challenge"

Multimodal MRI brain tumor segmentation using random forests with features learned from fully convolutional neural network

Apr 26, 2017

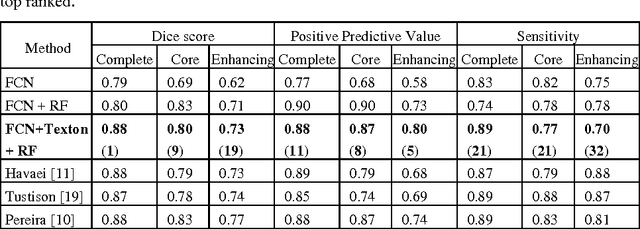

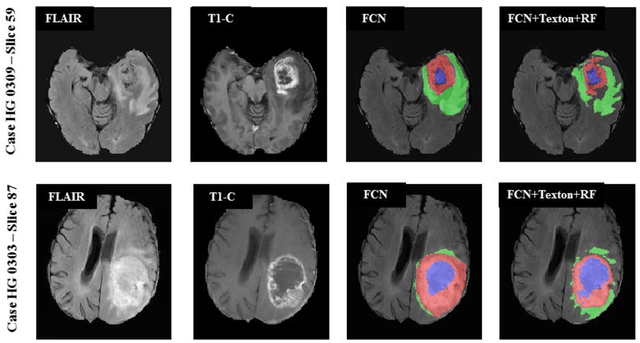

Abstract:In this paper, we propose a novel learning based method for automated segmenta-tion of brain tumor in multimodal MRI images. The machine learned features from fully convolutional neural network (FCN) and hand-designed texton fea-tures are used to classify the MRI image voxels. The score map with pixel-wise predictions is used as a feature map which is learned from multimodal MRI train-ing dataset using the FCN. The learned features are then applied to random for-ests to classify each MRI image voxel into normal brain tissues and different parts of tumor. The method was evaluated on BRATS 2013 challenge dataset. The results show that the application of the random forest classifier to multimodal MRI images using machine-learned features based on FCN and hand-designed features based on textons provides promising segmentations. The Dice overlap measure for automatic brain tumor segmentation against ground truth is 0.88, 080 and 0.73 for complete tumor, core and enhancing tumor, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge