Tryambak Gangopadhyay

A Deep Learning Approach to Detect Lean Blowout in Combustion Systems

Aug 03, 2022

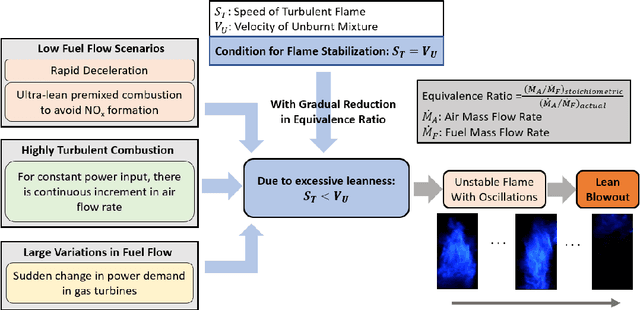

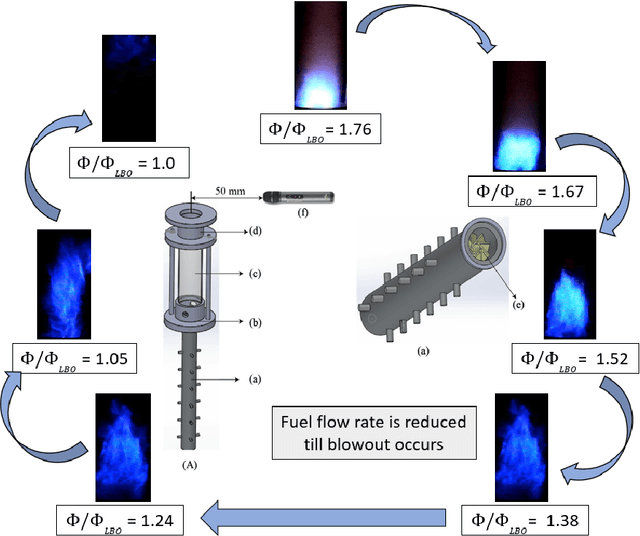

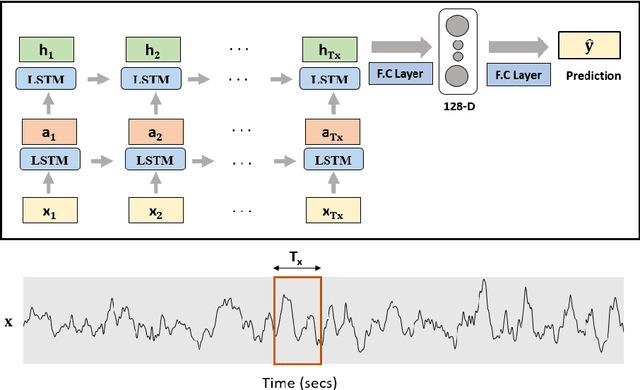

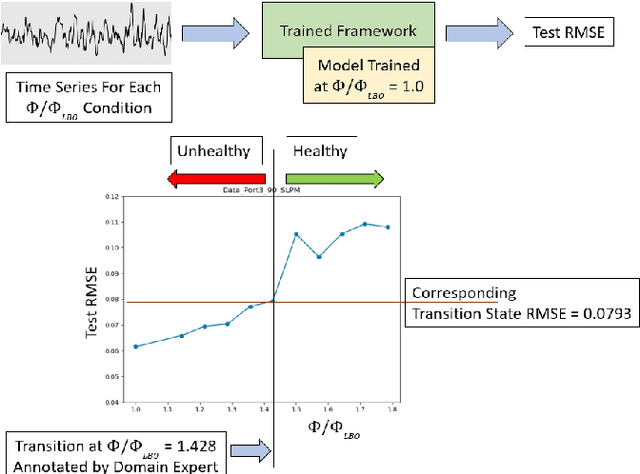

Abstract: Lean combustion is environmentally friendly, with low NOx emissions, and also provides better fuel efficiency in a combustion system. However, operating closer to lean conditions can make engines more susceptible to lean blowout. Lean blowout (LBO) is an undesirable phenomenon that can cause sudden flame extinction, leading to a sudden loss of power. During the design stage, it is quite challenging for scientists to accurately determine the optimal operating limits that avoid sudden LBO occurrence. Therefore, it is crucial to develop accurate and computationally tractable frameworks for online LBO detection in low-NOx emission engines. To the best of our knowledge, this is the first deep learning approach proposed to detect lean blowout in combustion systems. In this work, we utilize a laboratory-scale combustor to collect data for different protocols. For each protocol, we start far from LBO and gradually move towards the LBO regime, capturing a quasi-static time series dataset at each condition. Using one of the protocols in our dataset as the reference protocol, with conditions annotated by domain experts, we determine a transition state metric for our trained deep learning model to detect LBO in the other test protocols. We find that our proposed approach is more accurate and computationally faster than other baseline models at detecting transitions to LBO. Therefore, we recommend this method for real-time performance monitoring in lean combustion engines.

Cross-Modal Virtual Sensing for Combustion Instability Monitoring

Oct 06, 2021

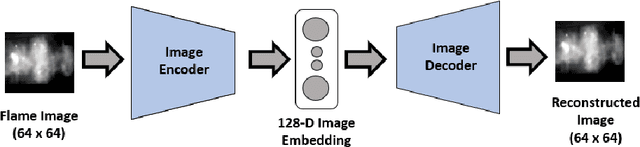

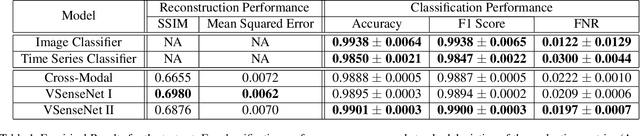

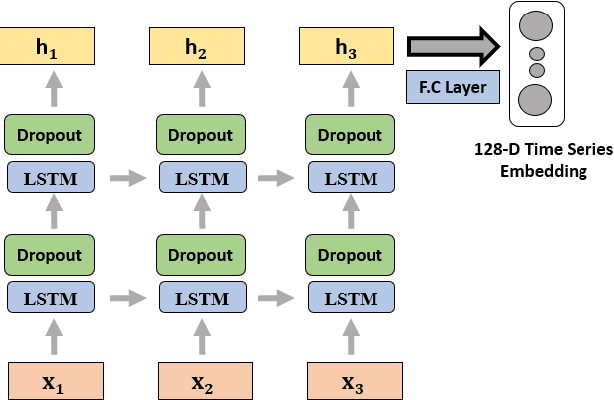

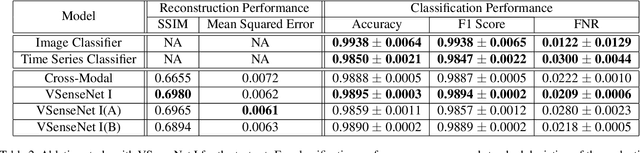

Abstract: In many cyber-physical systems, imaging can be an important but expensive or difficult-to-deploy sensing modality. One such example is detecting combustion instability using flame images, where deep learning frameworks have demonstrated state-of-the-art performance. These frameworks have also been shown to be trustworthy enough that domain experts can have sufficient confidence to use them in real systems to prevent unwanted incidents. However, flame imaging is not a common sensing modality in engine combustors today. The current roadblock therefore lies on the hardware side, in the acquisition and processing of high-volume flame images. On the other hand, the acoustic pressure time series is a more feasible modality for data collection in real combustors. To utilize acoustic time series as a sensing modality, we propose a novel cross-modal encoder-decoder architecture that can reconstruct cross-modal visual features from acoustic pressure time series in combustion systems. With the "distillation" of cross-modal features, the results demonstrate that detection accuracy can be enhanced using the virtual visual sensing modality. By providing the benefit of cross-modal reconstruction, our framework can prove useful in domains well beyond the power generation and transportation industries.

3D Convolutional Selective Autoencoder For Instability Detection in Combustion Systems

Jan 06, 2021

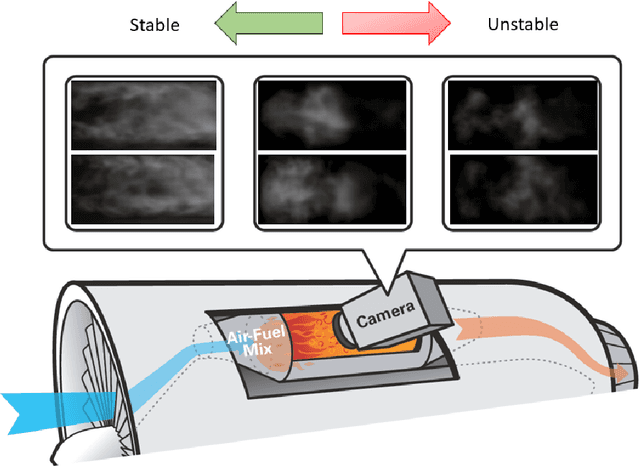

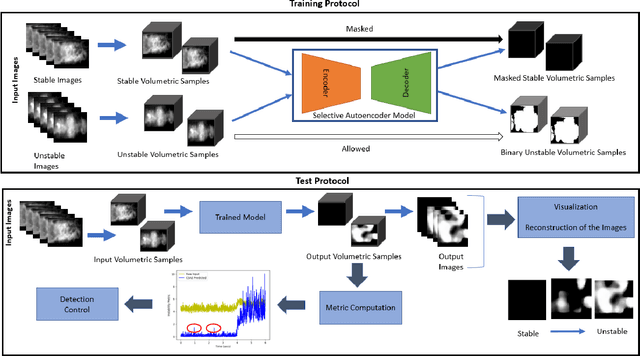

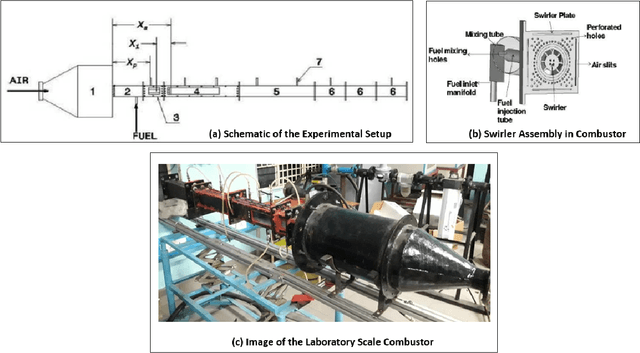

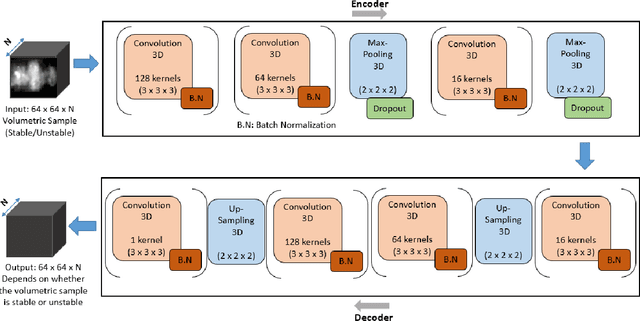

Abstract: While analytical solutions of critical (phase) transitions in physical systems are abundant for simple nonlinear systems, such analysis remains intractable for real-life dynamical systems. A key example of such a physical system is thermoacoustic instability in combustion, where prediction or early detection of the onset of instability is a hard technical challenge that must be addressed to build safer and more energy-efficient gas turbine engines powering the aerospace and energy industries. The instabilities arising in engine combustion chambers are mathematically too complex to model. To address this issue in a data-driven manner instead, we propose a novel deep learning architecture called the 3D convolutional selective autoencoder (3D-CSAE) to detect the evolution of self-excited oscillations using spatiotemporal data, i.e., high-speed videos taken from a swirl-stabilized combustor (a laboratory surrogate of a gas turbine engine combustor). 3D-CSAE consists of filters that learn, in a hierarchical fashion, the complex visual and dynamic features related to combustion instability. We train 3D-CSAE on video frames obtained from a limited set of operating conditions. We select the 3D-CSAE hyperparameters that are effective for characterizing hierarchical and multiscale instability structure evolution by utilizing the dynamic information available in the video. The proposed model clearly shows performance improvement in detecting the precursors of instability. The machine learning-driven results are verified with physics-based offline measures. Advanced active control mechanisms can directly leverage the proposed online detection capability of 3D-CSAE to mitigate the adverse effects of combustion instabilities on engines operating under various stringent requirements and conditions.
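
To illustrate what a 3D convolutional filter does on video data, here is a minimal NumPy sketch of a single-channel "valid" 3D convolution (implemented as cross-correlation, as most deep learning libraries do) over a clip shaped (frames, height, width). The kernel slides along time as well as space, so each output value mixes information from several consecutive frames. This is a generic illustration of the operation that 3D convolutional filters perform, not the 3D-CSAE architecture itself; the function name `conv3d_valid`, the temporal-difference kernel and the toy clips are hypothetical.

```python
import numpy as np

def conv3d_valid(video: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Single-channel 3D cross-correlation, 'valid' padding, stride 1.

    video:  (T, H, W) array, e.g. a grayscale high-speed flame clip (hypothetical input)
    kernel: (kt, kh, kw) array spanning time and space
    returns (T - kt + 1, H - kh + 1, W - kw + 1) array
    """
    T, H, W = video.shape
    kt, kh, kw = kernel.shape
    out = np.empty((T - kt + 1, H - kh + 1, W - kw + 1), dtype=float)
    for t in range(out.shape[0]):
        for i in range(out.shape[1]):
            for j in range(out.shape[2]):
                # Elementwise product of the kernel with one spatiotemporal patch.
                patch = video[t:t + kt, i:i + kh, j:j + kw]
                out[t, i, j] = np.sum(patch * kernel)
    return out

# Hypothetical temporal-difference kernel: responds to intensity changes
# between consecutive frames rather than to static spatial texture.
temporal_diff = np.zeros((2, 1, 1))
temporal_diff[0, 0, 0] = -1.0
temporal_diff[1, 0, 0] = 1.0

static = np.ones((4, 3, 3))  # intensity never changes over time
flicker = np.stack([np.full((3, 3), t % 2) for t in range(4)]).astype(float)  # frames alternate 0, 1, 0, 1

print(conv3d_valid(static, temporal_diff).shape)          # (3, 3, 3)
print(np.abs(conv3d_valid(static, temporal_diff)).max())  # 0.0
print(np.abs(conv3d_valid(flicker, temporal_diff)).max()) # 1.0
```

Because the kernel extends along the time axis, its response depends on how pixels change between frames, which a frame-by-frame 2D filter cannot capture on its own. Stacking many such learned kernels in layers is what lets a 3D convolutional network build up dynamic features hierarchically.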

Spatiotemporal Attention for Multivariate Time Series Prediction and Interpretation

Aug 11, 2020

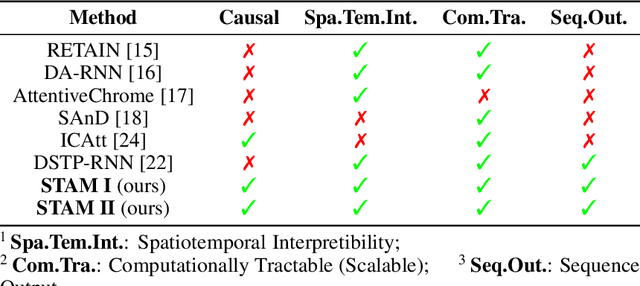

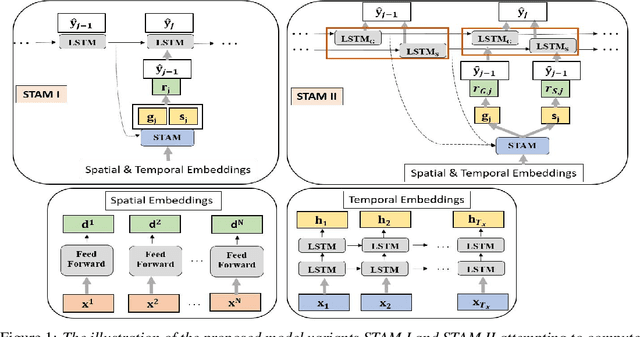

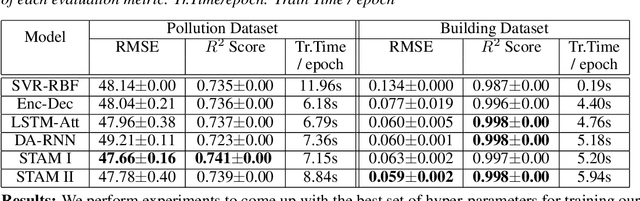

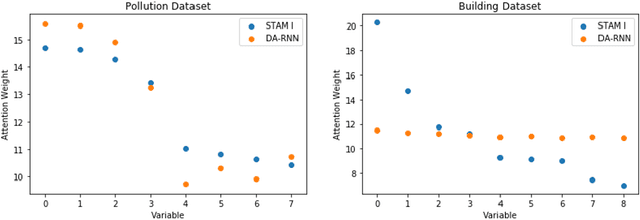

Abstract: Multivariate time series modeling and prediction problems are abundant in many machine learning application domains. Accurate interpretation of the prediction outcomes of a machine learning model that explicitly captures temporal correlations can be of major benefit to domain experts. In this context, temporal attention has been successfully applied to isolate the important time steps of the input time series. However, in multivariate time series problems, spatial interpretation is also critical to understand the contributions of different variables to the model outputs. We propose a novel deep learning architecture, called the spatiotemporal attention mechanism (STAM), for simultaneous learning of the most important time steps and variables. STAM is causal (i.e., it depends only on past inputs and does not use future inputs) and scalable (i.e., it scales well as the number of variables increases), and it is comparable to state-of-the-art models in terms of computational tractability. We demonstrate the performance of our models on both a popular public dataset and a domain-specific dataset. When compared with the baseline models, the results show that STAM maintains state-of-the-art prediction accuracy while offering the benefit of accurate spatiotemporal interpretability. We validate the learned attention weights from a domain knowledge perspective for the real-world datasets.
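
As a rough illustration of attending over both variables and time steps, the NumPy sketch below computes two sets of softmax weights for a multivariate window `x` of shape (T, N): spatial weights over the N variables and temporal weights over the T past steps, which are then used to build a context vector. The scoring vectors `w_spatial` and `w_temporal` are random placeholders standing in for learned parameters, and the function name is hypothetical; this is a generic spatial-then-temporal attention pattern, not the exact STAM formulation. Because the window contains only past observations, the computation is causal by construction.

```python
import numpy as np

def softmax(z: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the max for numerical stability before exponentiating.
    z = z - np.max(z, axis=axis, keepdims=True)
    e = np.exp(z)
    return e / np.sum(e, axis=axis, keepdims=True)

def spatiotemporal_attention(x, w_spatial, w_temporal):
    """Toy spatial + temporal attention over a window of past inputs.

    x:          (T, N) array, T past time steps of N variables
    w_spatial:  (T,) scoring vector for variables (placeholder for learned weights)
    w_temporal: (N,) scoring vector for time steps (placeholder for learned weights)
    """
    # Spatial attention: score each variable from its history in the window.
    beta = softmax(x.T @ w_spatial)           # (N,), sums to 1 over variables
    x_weighted = x * beta                     # reweight each variable's column
    # Temporal attention: score each time step from its reweighted variables.
    alpha = softmax(x_weighted @ w_temporal)  # (T,), sums to 1 over time steps
    context = alpha @ x_weighted              # (N,) weighted summary of the window
    return context, alpha, beta

rng = np.random.default_rng(0)
T, N = 8, 3  # hypothetical window length and number of variables
x = rng.normal(size=(T, N))
context, alpha, beta = spatiotemporal_attention(x, rng.normal(size=T), rng.normal(size=N))
print(context.shape, alpha.shape, beta.shape)                     # (3,) (8,) (3,)
print(np.isclose(alpha.sum(), 1.0), np.isclose(beta.sum(), 1.0))  # True True
```

In a trained model, inspecting `beta` indicates which variables the prediction relied on and `alpha` which time steps, which is the kind of spatiotemporal interpretation described above.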

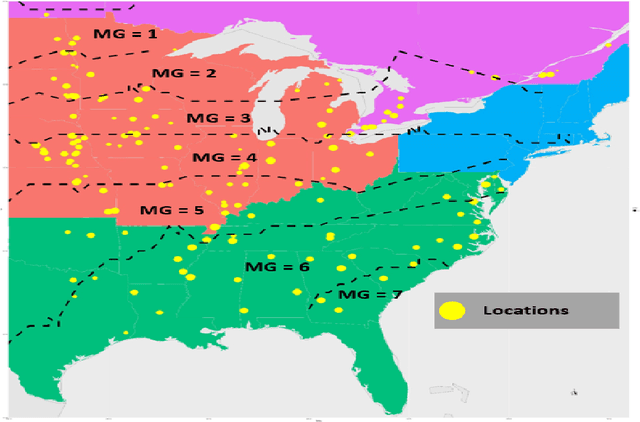

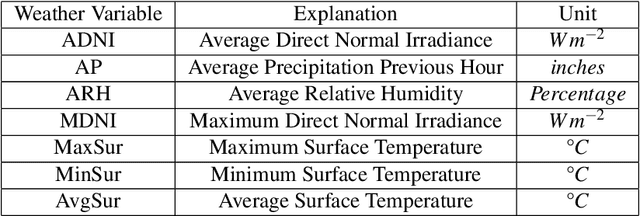

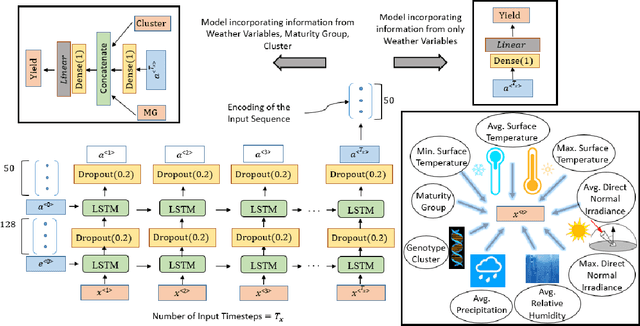

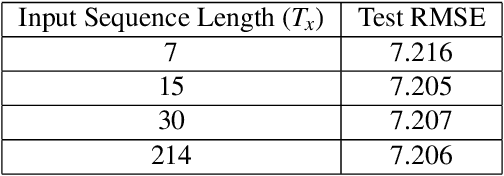

Crop Yield Prediction Integrating Genotype and Weather Variables Using Deep Learning

Jun 24, 2020

Abstract: Accurate prediction of crop yield, supported by scientific and domain-relevant insights, can help improve agricultural breeding, provide monitoring across diverse climatic conditions, and thereby protect against climatic challenges to crop production, including erratic rainfall and temperature variations. We used historical performance records from the Uniform Soybean Tests (UST) in North America, spanning 13 years of data, to build a Long Short-Term Memory (LSTM) recurrent neural network model that dissects and predicts genotype response in multiple environments by leveraging pedigree relatedness measures along with weekly weather parameters. Additionally, to provide explainability of the important time windows in the growing season, we developed a model based on a temporal attention mechanism. The combination of these two models outperformed random forest (RF), LASSO regression, and the data-driven USDA model for yield prediction. We deployed this deep learning framework as a "hypothesis generation tool" to unravel G×E×M relationships. Attention-based time series models provide a significant advancement in the interpretability of yield prediction models. The insights provided by explainable models are applicable to understanding how plant breeding programs can adapt their approaches to global climate change, for example in the identification of superior varieties for commercial release, intelligent sampling of testing environments in variety development, and integration of weather parameters for a targeted breeding approach. Using DL models as hypothesis generation tools will enable the development of varieties with a plastic response to variable climatic conditions. We envision broad applicability of this approach (via sensitivity analysis and "what-if" scenarios) for soybean and other crop species under different climatic conditions.
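
The combination of an LSTM over weekly weather inputs with temporal attention over the growing season can be sketched in NumPy as follows. This is a minimal forward pass with random, untrained weights, assuming a single-layer LSTM and a simple dot-product attention score per week; the pedigree relatedness inputs described above are omitted, and all names and sizes (30 weeks, 6 weather variables, 16 hidden units) are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_forward(x, W, U, b):
    """Run a single-layer LSTM over a sequence.

    x: (T, D) inputs, e.g. T weeks of D weather variables
    W: (4H, D), U: (4H, H), b: (4H,) stacked gate parameters in the
       order input, forget, candidate, output
    returns hidden states of shape (T, H)
    """
    T, H = x.shape[0], U.shape[1]
    h, c = np.zeros(H), np.zeros(H)
    hs = np.empty((T, H))
    for t in range(T):
        z = W @ x[t] + U @ h + b
        i = sigmoid(z[0:H])          # input gate
        f = sigmoid(z[H:2 * H])      # forget gate
        g = np.tanh(z[2 * H:3 * H])  # candidate cell state
        o = sigmoid(z[3 * H:4 * H])  # output gate
        c = f * c + i * g            # update the cell state
        h = o * np.tanh(c)           # expose a gated view of the cell state
        hs[t] = h
    return hs

def temporal_attention_pool(hs, v):
    # One score per week, normalized with a stable softmax, then a weighted sum.
    scores = hs @ v
    scores = scores - scores.max()
    alpha = np.exp(scores) / np.exp(scores).sum()
    return alpha @ hs, alpha

rng = np.random.default_rng(42)
T, D, H = 30, 6, 16  # hypothetical sizes
x = rng.normal(size=(T, D))
W = rng.normal(scale=0.1, size=(4 * H, D))
U = rng.normal(scale=0.1, size=(4 * H, H))
b = np.zeros(4 * H)
v = rng.normal(size=H)      # attention scoring vector (placeholder)
w_out = rng.normal(size=H)  # regression head (placeholder)

hs = lstm_forward(x, W, U, b)
summary, alpha = temporal_attention_pool(hs, v)
yield_pred = float(summary @ w_out)  # untrained, so the value itself is meaningless
print(hs.shape, alpha.shape)         # (30, 16) (30,)
```

After training, the attention weights `alpha` give one importance value per week, which is what allows the important time windows of the growing season to be read off the model.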