Spatiotemporal Attention for Multivariate Time Series Prediction and Interpretation


Aug 11, 2020

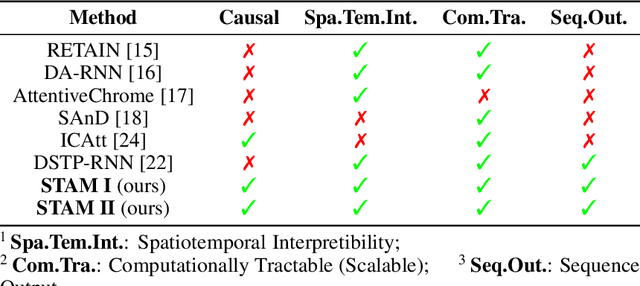

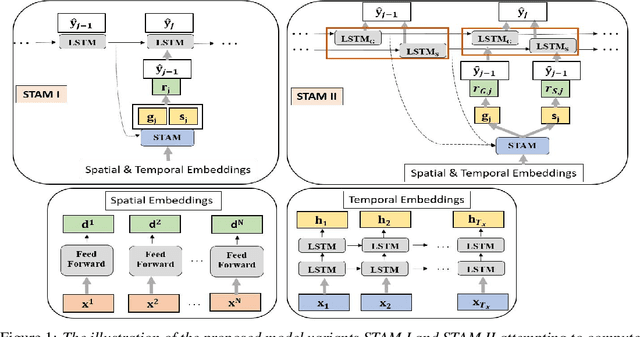

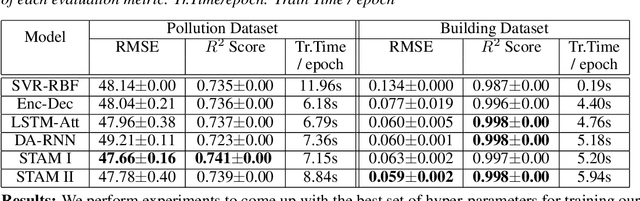

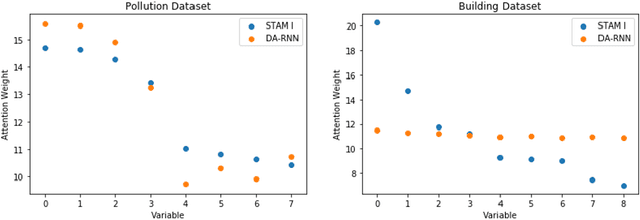

Multivariate time series modeling and prediction problems are abundant in many machine learning application domains. Accurate interpretation of prediction outcomes from a machine learning model that explicitly captures temporal correlations can be a major benefit to domain experts. In this context, temporal attention has been successfully applied to isolate the important time steps of the input time series. However, in multivariate time series problems, spatial interpretation is also critical for understanding the contributions of different variables to the model outputs. We propose a novel deep learning architecture, called the spatiotemporal attention mechanism (STAM), for simultaneous learning of the most important time steps and variables. STAM is a causal (i.e., it depends only on past inputs and does not use future inputs) and scalable (i.e., it scales well with an increase in the number of variables) approach that is comparable to state-of-the-art models in terms of computational tractability. We demonstrate the performance of our models on both a popular public dataset and a domain-specific dataset. When compared with the baseline models, the results show that STAM maintains state-of-the-art prediction accuracy while offering the benefit of accurate spatiotemporal interpretability. We validate the learned attention weights from a domain knowledge perspective for the real-world datasets.
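To make the idea of simultaneous spatial and temporal attention concrete, the following is a minimal sketch in plain Python. It is not the STAM architecture from the paper: the function name `spatiotemporal_attention`, the hand-picked score vectors, and the simple weighted-sum context are all assumptions for illustration. In a trained model the scores would be produced by the network from its hidden states rather than supplied by hand. The sketch only shows how softmax weights over variables and over past time steps can be read off as an interpretation.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def spatiotemporal_attention(window, var_scores, time_scores):
    """Illustrative attention over variables and time steps (hypothetical helper).

    window      : T rows of N values; past observations only, so the
                  computation is causal by construction.
    var_scores  : N unnormalized relevance scores, one per variable (assumed given).
    time_scores : T unnormalized relevance scores, one per time step (assumed given).
    """
    alpha = softmax(var_scores)   # spatial weights, sum to 1 over variables
    beta = softmax(time_scores)   # temporal weights, sum to 1 over time steps
    # Spatial step: collapse each time step to a weighted mix of its variables.
    per_step = [sum(a * x for a, x in zip(alpha, row)) for row in window]
    # Temporal step: collapse the sequence to a weighted mix of time steps.
    context = sum(b * s for b, s in zip(beta, per_step))
    return alpha, beta, context


# Toy window: 4 past time steps, 3 variables (made-up numbers).
window = [
    [0.1, 2.0, 0.3],
    [0.2, 2.5, 0.1],
    [0.0, 3.0, 0.2],
    [0.1, 3.5, 0.4],
]
var_scores = [0.0, 2.0, 0.5]         # hypothetical: variable 1 scored highest
time_scores = [0.0, 0.5, 1.0, 1.5]   # hypothetical: most recent step scored highest

alpha, beta, context = spatiotemporal_attention(window, var_scores, time_scores)
top_variable = max(range(len(alpha)), key=alpha.__getitem__)
top_step = max(range(len(beta)), key=beta.__getitem__)
```

Here `alpha` and `beta` play the role of the interpretable outputs: the index of the largest entry in each indicates which variable and which time step contributed most to `context` under the assumed scores.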
