Tianjiao Zeng

A generative approach for lensless imaging in low-light conditions

Jan 07, 2025

Abstract: Lensless imaging offers a lightweight, compact alternative to traditional lens-based systems, ideal for exploration in space-constrained environments. However, the absence of a focusing lens and the limited lighting in such environments often result in low-light conditions, where insufficient photon capture leaves the measurements corrupted by complex noise. This study presents a robust reconstruction method for high-quality imaging in low-light scenarios that combines two complementary perspectives: model-driven and data-driven. First, we apply a physics-model-driven perspective, reconstructing in the range space of the pseudo-inverse of the measurement model as a first guidance to extract information from the noisy measurements. Then, we integrate a generative-model-based perspective as a second guidance to suppress the residual noise in the initial results. Specifically, a learnable Wiener filter-based module generates an initial noisy reconstruction. Then, for fast and, more importantly, stable generation of the clear image from the noisy version, we implement a modified conditional generative diffusion module. This module converts the raw image into the latent wavelet domain for efficiency and uses modified bidirectional training processes for stabilization. Simulations and real-world experiments demonstrate substantial improvements in overall visual quality, advancing lensless imaging in challenging low-light environments.
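The Wiener-filter step mentioned in the abstract can be illustrated with a minimal classical sketch. This is not the authors' learnable module: it assumes a circular-convolution measurement model, a known point spread function, and a single fixed regularization constant (`noise_to_signal`, a hypothetical parameter name), whereas the paper describes a learned variant followed by a diffusion-based denoiser.

```python
import numpy as np

def wiener_deconvolve(measurement, psf, noise_to_signal=1e-6):
    """Classical Wiener deconvolution in the Fourier domain.

    Assumes measurement = circular_conv(scene, psf) + noise.
    noise_to_signal is a fixed scalar here (an assumption); a learnable
    variant would train this term instead of fixing it.
    """
    H = np.fft.fft2(psf, s=measurement.shape)
    Y = np.fft.fft2(measurement)
    W = np.conj(H) / (np.abs(H) ** 2 + noise_to_signal)
    return np.real(np.fft.ifft2(W * Y))

# Toy example: a rectangle imaged through a random, diffuser-like PSF.
rng = np.random.default_rng(0)
scene = np.zeros((64, 64))
scene[20:40, 25:35] = 1.0
psf = rng.random((64, 64))
psf /= psf.sum()

# Simulate the multiplexed measurement with a small amount of noise.
measurement = np.real(np.fft.ifft2(np.fft.fft2(scene) * np.fft.fft2(psf)))
measurement += 1e-5 * rng.standard_normal(measurement.shape)

estimate = wiener_deconvolve(measurement, psf)
```

In low-light conditions the noise level is much higher than in this toy setup, so the filtered estimate stays visibly noisy; that residual noise is what the second, generative stage in the abstract is meant to suppress.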

Technical Report: Towards Spatial Feature Regularization in Deep-Learning-Based Array-SAR Reconstruction

Dec 22, 2024

Abstract: Array synthetic aperture radar (Array-SAR), also known as tomographic SAR (TomoSAR), has demonstrated significant potential for high-quality 3D mapping, particularly in urban areas. While deep learning (DL) methods have recently shown strengths in reconstruction, most studies rely on pixel-by-pixel reconstruction, neglecting spatial features like building structures, leading to artifacts such as holes and fragmented edges. Spatial feature regularization, effective in traditional methods, remains underexplored in DL-based approaches. Our study integrates spatial feature regularization into DL-based Array-SAR reconstruction, addressing key questions: What spatial features are relevant in urban-area mapping? How can these features be effectively described, modeled, regularized, and incorporated into DL networks? The study comprises five phases: spatial feature description and modeling, regularization, feature-enhanced network design, evaluation, and discussions. Sharp edges and geometric shapes in urban scenes are analyzed as key features. An intra-slice and inter-slice strategy is proposed, using 2D slices as reconstruction units and fusing them into 3D scenes through parallel and serial fusion. Two computational frameworks (iterative reconstruction with enhancement and light reconstruction with enhancement) are designed, incorporating spatial feature modules into DL networks, leading to four specialized reconstruction networks. Using our urban building simulation dataset and two public datasets, six tests evaluate close-point resolution, structural integrity, and robustness in urban scenarios. Results show that spatial feature regularization significantly improves reconstruction accuracy, retrieves more complete building structures, and enhances robustness by reducing noise and outliers.

Multiple Latent Space Mapping for Compressed Dark Image Enhancement

Mar 12, 2024

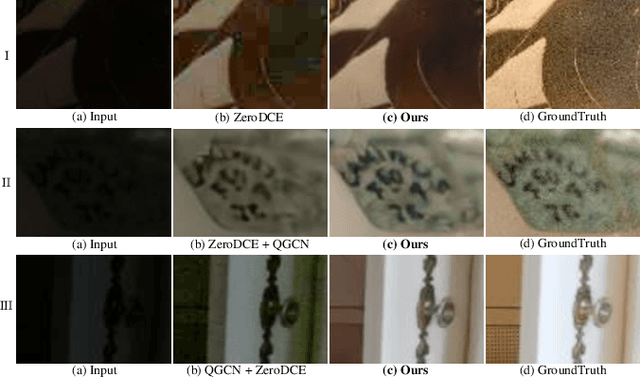

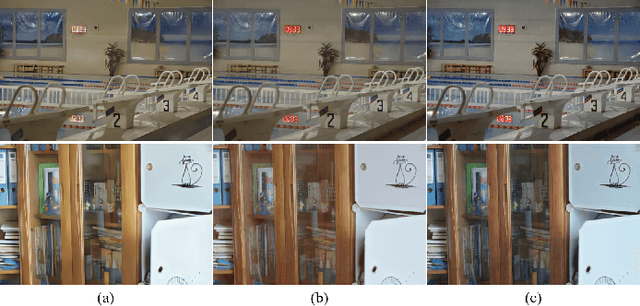

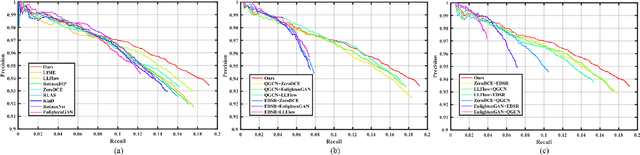

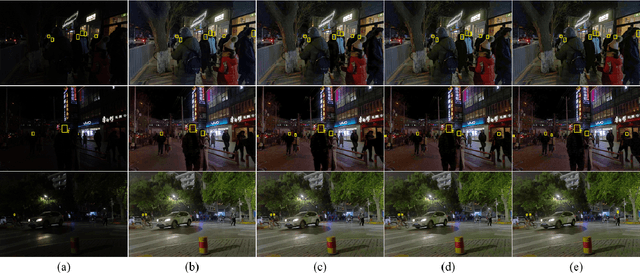

Abstract: Dark image enhancement aims at converting dark images to normal-light images. Existing dark image enhancement methods take uncompressed dark images as inputs and achieve great performance. However, in practice, dark images are often compressed before storage or transmission over the Internet. Current methods perform poorly on compressed dark images: they amplify artifacts hidden in the dark regions, producing visually unpleasant results. Based on this observation, this study aims at enhancing compressed dark images while avoiding the amplification of compression artifacts. Since texture details intertwine with compression artifacts in compressed dark images, detail enhancement and blocking artifact suppression contradict each other in image space. Therefore, we handle the task in latent space. To this end, we propose a novel latent mapping network based on the variational auto-encoder (VAE). Firstly, unlike previous VAE-based methods that use single-resolution features only, we exploit multiple latent spaces with multi-resolution features to reduce detail blur and improve image fidelity. Specifically, we train two multi-level VAEs to project compressed dark images and normal-light images into their respective latent spaces. Secondly, we leverage a latent mapping network to transform features from the compressed dark space to the normal-light space. Specifically, since the degradation models of darkness and compression differ from each other, the latent mapping process is divided into an enlightening branch and a deblocking branch. Comprehensive experiments demonstrate that the proposed method achieves state-of-the-art performance in compressed dark image enhancement.

Shadow-Oriented Tracking Method for Multi-Target Tracking in Video-SAR

Nov 29, 2022

Abstract: This work focuses on multi-target tracking in video synthetic aperture radar (Video-SAR). Specifically, we refer to tracking based on targets' shadows. Current methods have limited accuracy because they fail to fully consider the characteristics of shadows and their surroundings. Shadows are low-scattering and varied, resulting in missed tracking. Surroundings can cause interference, resulting in false tracking. To solve these problems, we propose a shadow-oriented multi-target tracking method (SOTrack). To avoid false tracking, a pre-processing module is proposed to enhance shadows against their surroundings, thus reducing interference. To avoid missed tracking, a detection method based on deep learning is designed to thoroughly learn shadows' features, thus increasing estimation accuracy. Furthermore, a recall module is designed to recall missed shadows. We conduct experiments on measured data. Results demonstrate that, compared with other methods, SOTrack achieves much higher tracking accuracy (18.4%). An ablation study confirms the effectiveness of the proposed modules.

Solving 3D Radar Imaging Inverse Problems with a Multi-cognition Task-oriented Framework

Nov 28, 2022

Abstract: This work focuses on 3D radar imaging inverse problems. Current methods produce undifferentiated results that lose task-dependent information and thus do not meet a task's specific demands well. For example, biased scattering energy may be acceptable for screening imaging but not for scattering diagnosis. To address this issue, we propose a new task-oriented imaging framework. The imaging principle is made task-oriented through an analysis phase that identifies the task's demands. The imaging model is multi-cognition regularized to embed and fulfill these demands. The imaging method is designed to be generalized: couplings between cognitions are decoupled and solved individually with approximation and variable-splitting techniques. Scattering diagnosis, person screening imaging, and parcel screening imaging are given as example tasks. Experiments on data from two systems indicate that the proposed framework outperforms current ones in task-dependent information retrieval.

Near-field SAR Image Restoration with Deep Learning Inverse Technique: A Preliminary Study

Nov 28, 2022

Abstract: Benefiting from a relatively large aperture angle combined with a wide transmitting bandwidth, near-field synthetic aperture radar (SAR) provides a high-resolution image of a target's scattering distribution (hot spots). Meanwhile, the imaging result suffers inevitable degradation from sidelobes, clutter, and noise, hindering the retrieval of target information. To restore the image, current methods make simplified assumptions; for example, that the point spread function (PSF) is spatially consistent or that the target consists of sparse point scatterers. Thus, they achieve limited restoration performance in terms of the target's shape, especially for complex targets. To address these issues, this work conducts a preliminary study on restoration with the recent, promising deep learning inverse technique. We reformulate the degradation model into a spatially variable complex-convolution model that accounts for the near-field SAR system response. Based on this model, a model-based deep learning network is designed to restore the image. A simulated degraded-image dataset built from multiple complex target models is constructed to validate the network. All the images are generated with an electromagnetic simulation tool. Experiments on the dataset demonstrate the network's effectiveness. Compared with current methods, superior performance is achieved in estimating the target's shape and energy.

A Model-data-driven Network Embedding Multidimensional Features for Tomographic SAR Imaging

Nov 28, 2022

Abstract: Deep learning (DL)-based tomographic SAR (tomoSAR) imaging algorithms are gradually being studied. Typically, they use an unfolding network to mimic the iterative calculation of classical compressive sensing (CS)-based methods and process each range-azimuth unit individually. However, only one-dimensional features are effectively utilized in this way, and the correlation between adjacent resolution units is ignored. To address this, we propose a new model-data-driven network that achieves tomoSAR imaging based on multi-dimensional features. Guided by the deep unfolding methodology, a two-dimensional deep unfolding imaging network is constructed. On this basis, we add two 2D processing modules, both convolutional encoder-decoder structures, to effectively enhance the multi-dimensional features of the imaging scene. Meanwhile, to train the proposed multifeature-based imaging network, we construct a tomoSAR simulation dataset consisting entirely of simulated building data. Experiments verify the effectiveness of the model. Compared with the conventional CS-based FISTA method and the DL-based gamma-Net method, the proposed method achieves better completeness while maintaining decent imaging accuracy.
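For context, the CS-based FISTA baseline that unfolding networks mimic can be sketched as below. This is a generic textbook FISTA for an l1-regularized least-squares problem applied to a single resolution unit, not the paper's network; the steering matrix `A`, the sizes, and the value of `lam` are illustrative assumptions.

```python
import numpy as np

def soft_threshold(v, tau):
    """Complex soft-thresholding: shrink magnitudes by tau, keep phase."""
    mag = np.abs(v)
    return v * np.maximum(1.0 - tau / np.maximum(mag, 1e-12), 0.0)

def fista(A, y, lam, n_iter=300):
    """Approximately minimize 0.5 * ||A x - y||^2 + lam * ||x||_1."""
    L = np.linalg.norm(A, 2) ** 2          # Lipschitz constant of the gradient
    x = np.zeros(A.shape[1], dtype=complex)
    z, t = x.copy(), 1.0
    for _ in range(n_iter):
        grad = A.conj().T @ (A @ z - y)    # gradient of the data-fidelity term
        x_new = soft_threshold(z - grad / L, lam / L)
        t_new = (1.0 + np.sqrt(1.0 + 4.0 * t * t)) / 2.0
        z = x_new + ((t - 1.0) / t_new) * (x_new - x)   # momentum step
        x, t = x_new, t_new
    return x

# Toy elevation profile: 3 scatterers among 100 bins, 40 measurements.
rng = np.random.default_rng(1)
m, n, k = 40, 100, 3
A = (rng.standard_normal((m, n)) + 1j * rng.standard_normal((m, n))) / np.sqrt(2 * m)
support = rng.choice(n, size=k, replace=False)
x_true = np.zeros(n, dtype=complex)
x_true[support] = rng.uniform(1.0, 2.0, size=k)
y = A @ x_true

x_hat = fista(A, y, lam=0.05)
recovered = set(np.argsort(np.abs(x_hat))[-k:].tolist())
```

Each loop iteration corresponds to one layer of an unfolded network, where quantities such as the step size and threshold become trainable. Because this solver handles each unit independently, it cannot use the correlation between adjacent units, which is the limitation the abstract sets out to address.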
