Subba R. Digumarthy

Integrative Analysis for COVID-19 Patient Outcome Prediction

Jul 20, 2020

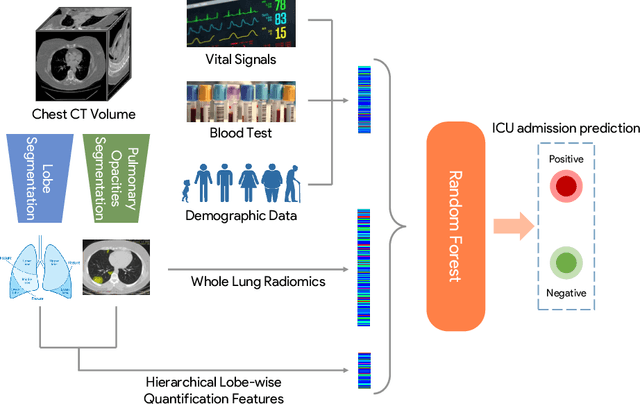

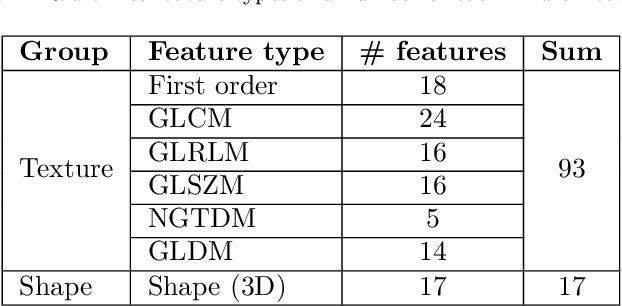

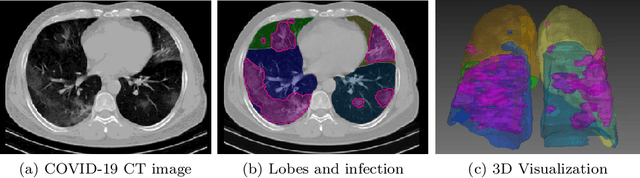

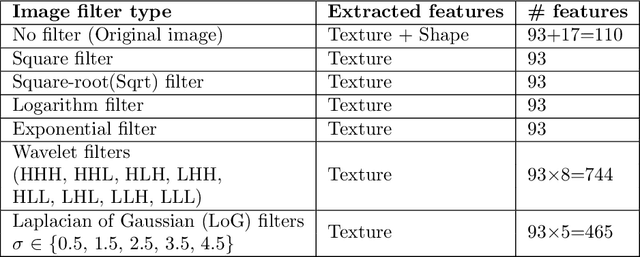

Abstract:While image analysis of chest computed tomography (CT) for COVID-19 diagnosis has been intensively studied, little work has been performed for image-based patient outcome prediction. Management of high-risk patients with early intervention is a key to lower the fatality rate of COVID-19 pneumonia, as a majority of patients recover naturally. Therefore, an accurate prediction of disease progression with baseline imaging at the time of the initial presentation can help in patient management. In lieu of only size and volume information of pulmonary abnormalities and features through deep learning based image segmentation, here we combine radiomics of lung opacities and non-imaging features from demographic data, vital signs, and laboratory findings to predict need for intensive care unit (ICU) admission. To our knowledge, this is the first study that uses holistic information of a patient including both imaging and non-imaging data for outcome prediction. The proposed methods were thoroughly evaluated on datasets separately collected from three hospitals, one in the United States, one in Iran, and another in Italy, with a total 295 patients with reverse transcription polymerase chain reaction (RT-PCR) assay positive COVID-19 pneumonia. Our experimental results demonstrate that adding non-imaging features can significantly improve the performance of prediction to achieve AUC up to 0.884 and sensitivity as high as 96.1%, which can be valuable to provide clinical decision support in managing COVID-19 patients. Our methods may also be applied to other lung diseases including but not limited to community acquired pneumonia.

Quantifying and Leveraging Predictive Uncertainty for Medical Image Assessment

Jul 08, 2020

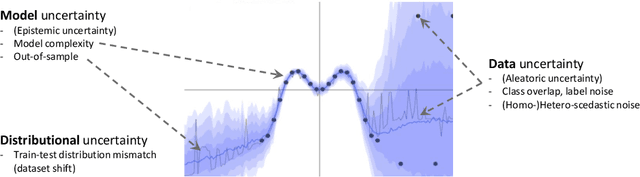

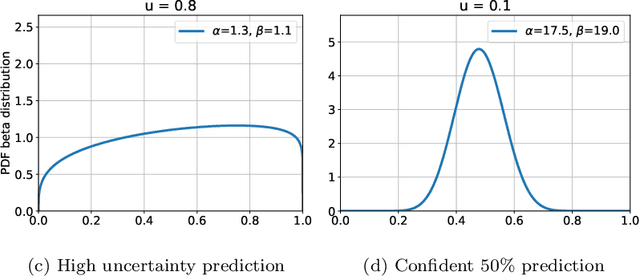

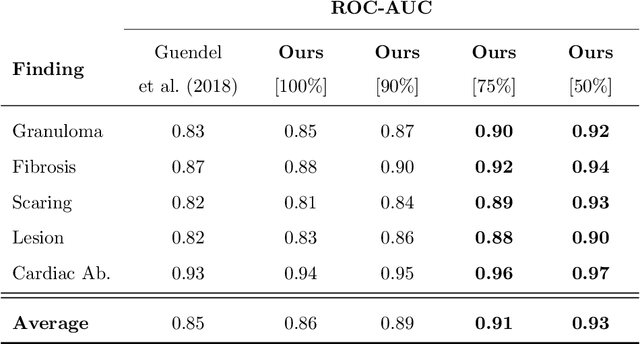

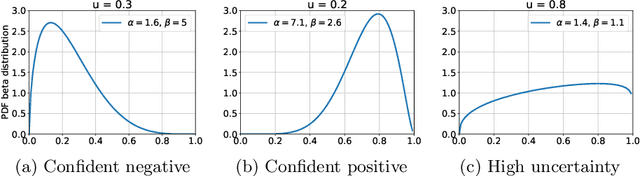

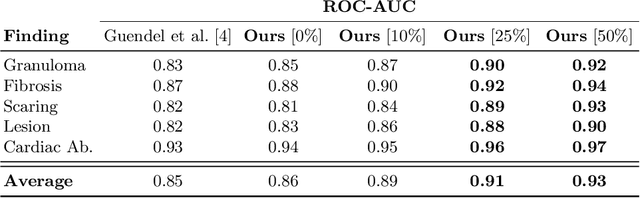

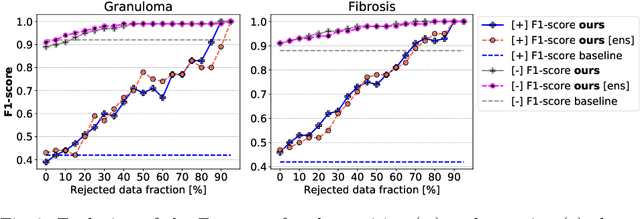

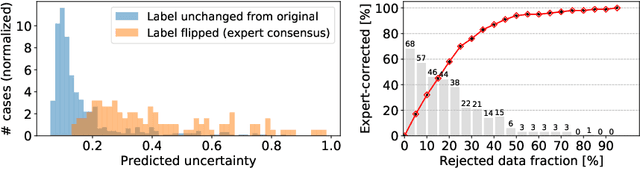

Abstract:The interpretation of medical images is a challenging task, often complicated by the presence of artifacts, occlusions, limited contrast and more. Most notable is the case of chest radiography, where there is a high inter-rater variability in the detection and classification of abnormalities. This is largely due to inconclusive evidence in the data or subjective definitions of disease appearance. An additional example is the classification of anatomical views based on 2D Ultrasound images. Often, the anatomical context captured in a frame is not sufficient to recognize the underlying anatomy. Current machine learning solutions for these problems are typically limited to providing probabilistic predictions, relying on the capacity of underlying models to adapt to limited information and the high degree of label noise. In practice, however, this leads to overconfident systems with poor generalization on unseen data. To account for this, we propose a system that learns not only the probabilistic estimate for classification, but also an explicit uncertainty measure which captures the confidence of the system in the predicted output. We argue that this approach is essential to account for the inherent ambiguity characteristic of medical images from different radiologic exams including computed radiography, ultrasonography and magnetic resonance imaging. In our experiments we demonstrate that sample rejection based on the predicted uncertainty can significantly improve the ROC-AUC for various tasks, e.g., by 8% to 0.91 with an expected rejection rate of under 25% for the classification of different abnormalities in chest radiographs. In addition, we show that using uncertainty-driven bootstrapping to filter the training data, one can achieve a significant increase in robustness and accuracy.

Quantifying and Leveraging Classification Uncertainty for Chest Radiograph Assessment

Jun 18, 2019

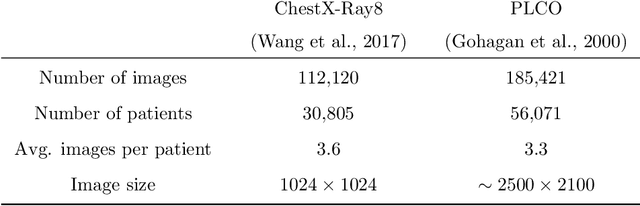

Abstract:The interpretation of chest radiographs is an essential task for the detection of thoracic diseases and abnormalities. However, it is a challenging problem with high inter-rater variability and inherent ambiguity due to inconclusive evidence in the data, limited data quality or subjective definitions of disease appearance. Current deep learning solutions for chest radiograph abnormality classification are typically limited to providing probabilistic predictions, relying on the capacity of learning models to adapt to the high degree of label noise and become robust to the enumerated causal factors. In practice, however, this leads to overconfident systems with poor generalization on unseen data. To account for this, we propose an automatic system that learns not only the probabilistic estimate on the presence of an abnormality, but also an explicit uncertainty measure which captures the confidence of the system in the predicted output. We argue that explicitly learning the classification uncertainty as an orthogonal measure to the predicted output, is essential to account for the inherent variability characteristic of this data. Experiments were conducted on two datasets of chest radiographs of over 85,000 patients. Sample rejection based on the predicted uncertainty can significantly improve the ROC-AUC, e.g., by 8% to 0.91 with an expected rejection rate of under 25%. Eliminating training samples using uncertainty-driven bootstrapping, enables a significant increase in robustness and accuracy. In addition, we present a multi-reader study showing that the predictive uncertainty is indicative of reader errors.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge