Steven Diamond

Differentiable Convex Optimization Layers

Oct 28, 2019

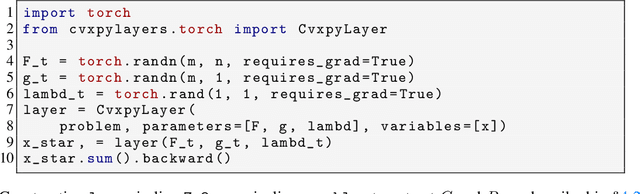

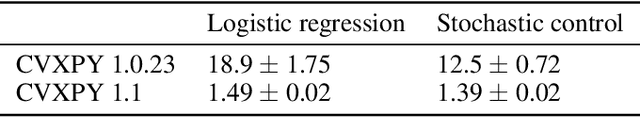

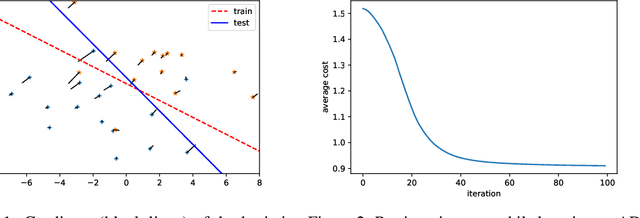

Abstract:Recent work has shown how to embed differentiable optimization problems (that is, problems whose solutions can be backpropagated through) as layers within deep learning architectures. This method provides a useful inductive bias for certain problems, but existing software for differentiable optimization layers is rigid and difficult to apply to new settings. In this paper, we propose an approach to differentiating through disciplined convex programs, a subclass of convex optimization problems used by domain-specific languages (DSLs) for convex optimization. We introduce disciplined parametrized programming, a subset of disciplined convex programming, and we show that every disciplined parametrized program can be represented as the composition of an affine map from parameters to problem data, a solver, and an affine map from the solver's solution to a solution of the original problem (a new form we refer to as affine-solver-affine form). We then demonstrate how to efficiently differentiate through each of these components, allowing for end-to-end analytical differentiation through the entire convex program. We implement our methodology in version 1.1 of CVXPY, a popular Python-embedded DSL for convex optimization, and additionally implement differentiable layers for disciplined convex programs in PyTorch and TensorFlow 2.0. Our implementation significantly lowers the barrier to using convex optimization problems in differentiable programs. We present applications in linear machine learning models and in stochastic control, and we show that our layer is competitive (in execution time) compared to specialized differentiable solvers from past work.

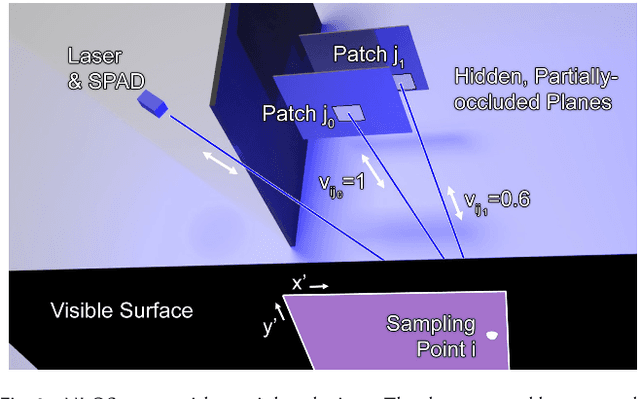

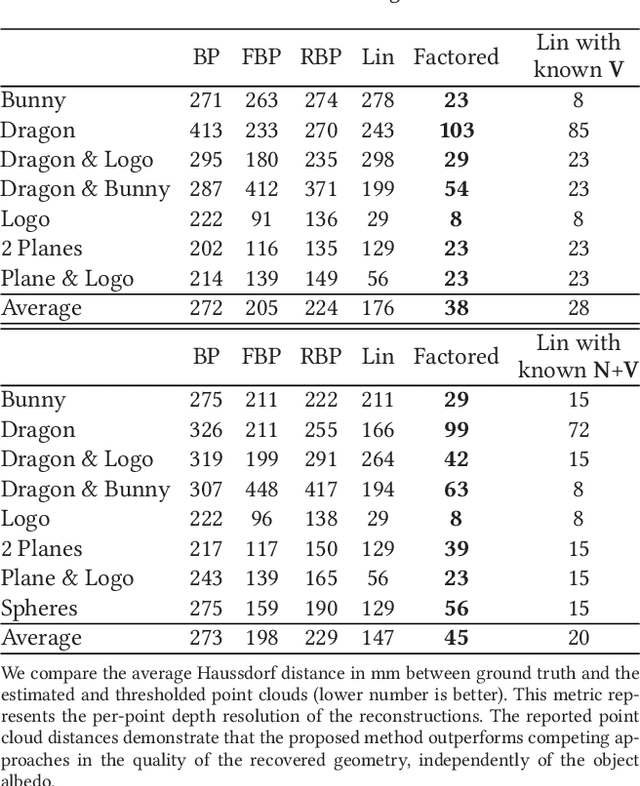

Non-line-of-sight Imaging with Partial Occluders and Surface Normals

Apr 04, 2018

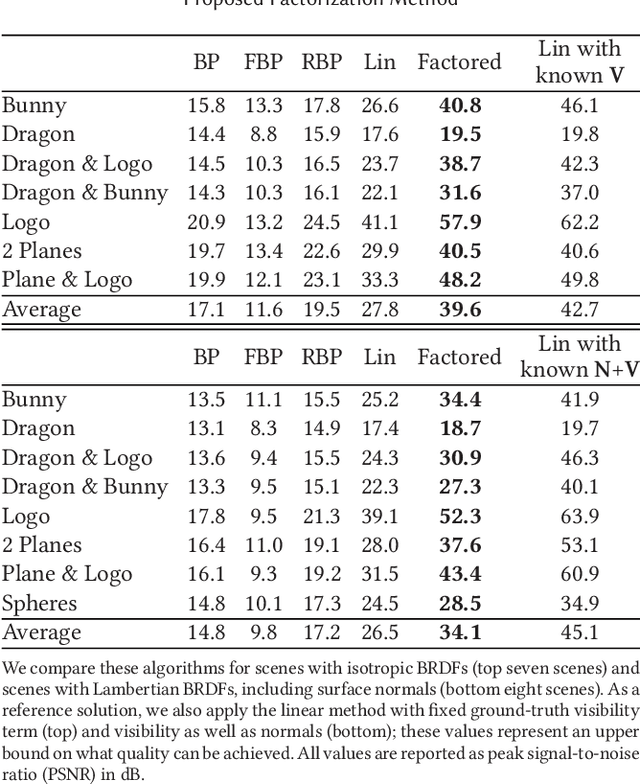

Abstract:Imaging objects obscured by occluders is a significant challenge for many applications. A camera that could "see around corners" could help improve navigation and mapping capabilities of autonomous vehicles or make search and rescue missions more effective. Time-resolved single-photon imaging systems have recently been demonstrated to record optical information of a scene that can lead to an estimation of the shape and reflectance of objects hidden from the line of sight of a camera. However, existing non-line-of-sight (NLOS) reconstruction algorithms have been constrained in the types of light transport effects they model for the hidden scene parts. We introduce a factored NLOS light transport representation that accounts for partial occlusions and surface normals. Based on this model, we develop a factorization approach for inverse time-resolved light transport and demonstrate high-fidelity NLOS reconstructions for challenging scenes both in simulation and with an experimental NLOS imaging system.

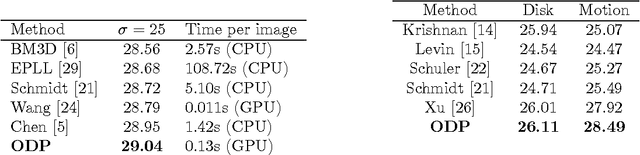

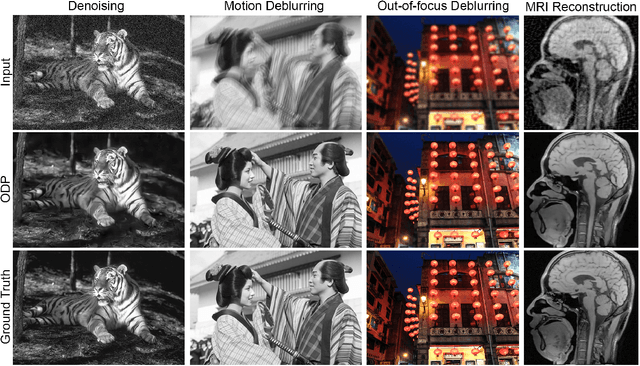

Unrolled Optimization with Deep Priors

May 22, 2017

Abstract:A broad class of problems at the core of computational imaging, sensing, and low-level computer vision reduces to the inverse problem of extracting latent images that follow a prior distribution, from measurements taken under a known physical image formation model. Traditionally, hand-crafted priors along with iterative optimization methods have been used to solve such problems. In this paper we present unrolled optimization with deep priors, a principled framework for infusing knowledge of the image formation into deep networks that solve inverse problems in imaging, inspired by classical iterative methods. We show that instances of the framework outperform the state-of-the-art by a substantial margin for a wide variety of imaging problems, such as denoising, deblurring, and compressed sensing magnetic resonance imaging (MRI). Moreover, we conduct experiments that explain how the framework is best used and why it outperforms previous methods.

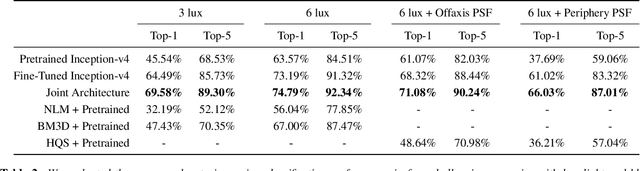

Dirty Pixels: Optimizing Image Classification Architectures for Raw Sensor Data

Jan 23, 2017

Abstract:Real-world sensors suffer from noise, blur, and other imperfections that make high-level computer vision tasks like scene segmentation, tracking, and scene understanding difficult. Making high-level computer vision networks robust is imperative for real-world applications like autonomous driving, robotics, and surveillance. We propose a novel end-to-end differentiable architecture for joint denoising, deblurring, and classification that makes classification robust to realistic noise and blur. The proposed architecture dramatically improves the accuracy of a classification network in low light and other challenging conditions, outperforming alternative approaches such as retraining the network on noisy and blurry images and preprocessing raw sensor inputs with conventional denoising and deblurring algorithms. The architecture learns denoising and deblurring pipelines optimized for classification whose outputs differ markedly from those of state-of-the-art denoising and deblurring methods, preserving fine detail at the cost of more noise and artifacts. Our results suggest that the best low-level image processing for computer vision is different from existing algorithms designed to produce visually pleasing images. The principles used to design the proposed architecture easily extend to other high-level computer vision tasks and image formation models, providing a general framework for integrating low-level and high-level image processing.

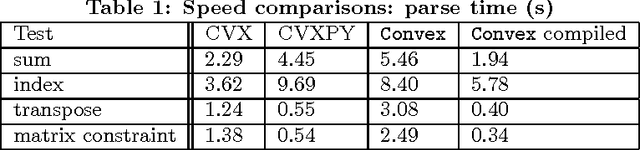

Convex Optimization in Julia

Oct 17, 2014

Abstract:This paper describes Convex, a convex optimization modeling framework in Julia. Convex translates problems from a user-friendly functional language into an abstract syntax tree describing the problem. This concise representation of the global structure of the problem allows Convex to infer whether the problem complies with the rules of disciplined convex programming (DCP), and to pass the problem to a suitable solver. These operations are carried out in Julia using multiple dispatch, which dramatically reduces the time required to verify DCP compliance and to parse a problem into conic form. Convex then automatically chooses an appropriate backend solver to solve the conic form problem.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge