Soohwan Kim

K-HATERS: A Hate Speech Detection Corpus in Korean with Target-Specific Ratings

Oct 24, 2023

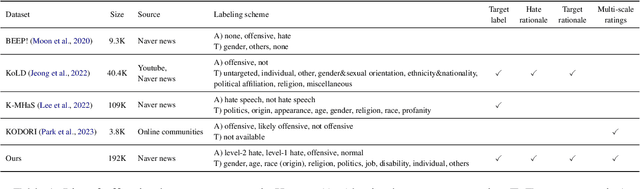

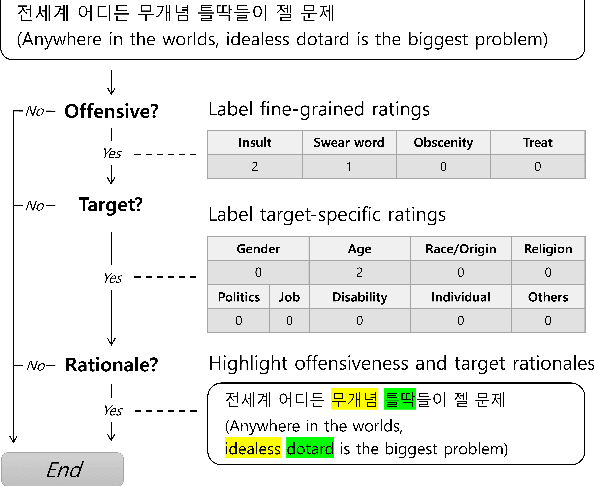

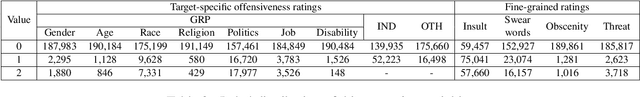

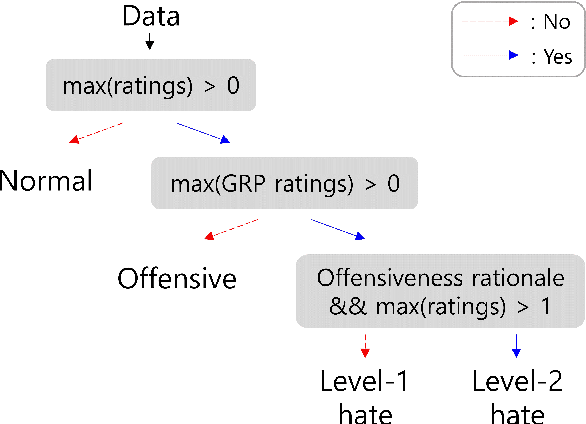

Abstract: Numerous datasets have been proposed to combat the spread of online hate. Despite these efforts, a majority of these resources are English-centric, primarily focusing on overt forms of hate. This research gap calls for developing high-quality corpora in diverse languages that also encapsulate more subtle hate expressions. This study introduces K-HATERS, a new corpus for hate speech detection in Korean, comprising approximately 192K news comments with target-specific offensiveness ratings. This resource is the largest offensive language corpus in Korean and is the first to offer target-specific ratings on a three-point Likert scale, enabling the detection of hate expressions in Korean across varying degrees of offensiveness. We conduct experiments showing the effectiveness of the proposed corpus, including a comparison with existing datasets. Additionally, to address potential noise and bias in human annotations, we explore a novel idea of adopting the Cognitive Reflection Test, which is widely used in social science for assessing an individual's cognitive ability, as a proxy of labeling quality. Findings indicate that annotations from individuals with the lowest test scores tend to yield detection models that make biased predictions toward specific target groups and are less accurate. This study contributes to the NLP research on hate speech detection and resource construction. The code and dataset can be accessed at https://github.com/ssu-humane/K-HATERS.

KoSpeech: Open-Source Toolkit for End-to-End Korean Speech Recognition

Sep 26, 2020

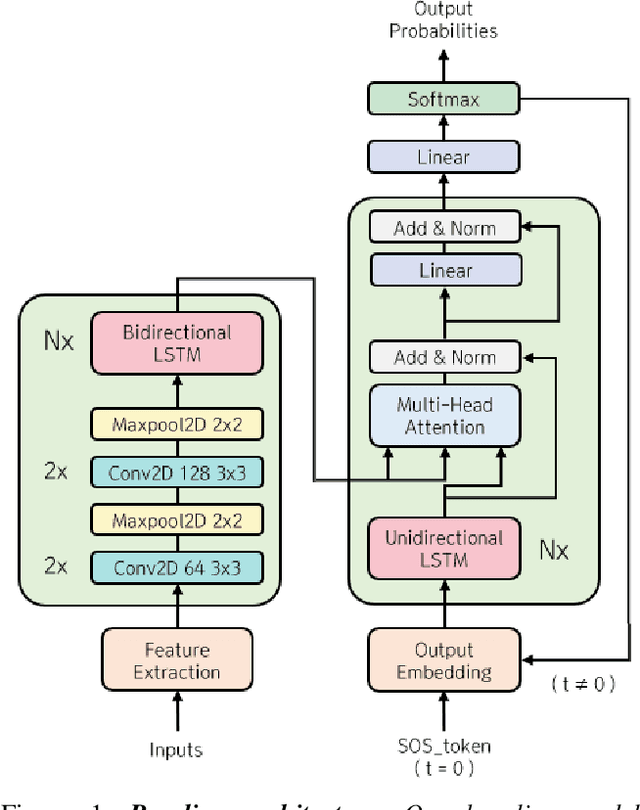

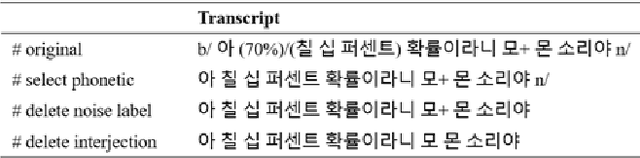

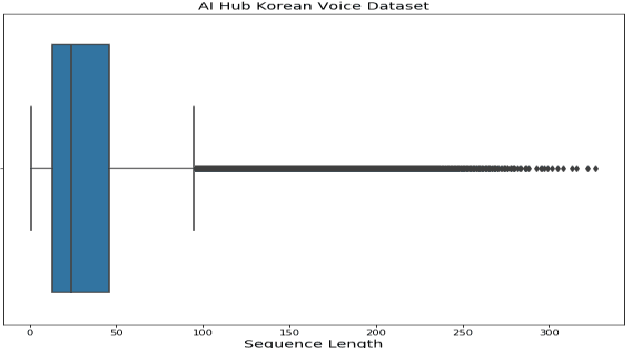

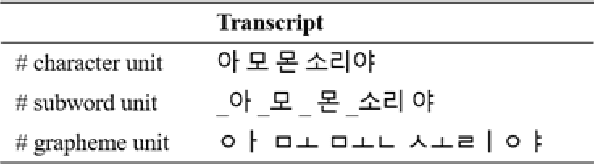

Abstract: We present KoSpeech, an open-source, modular, and extensible end-to-end Korean automatic speech recognition (ASR) toolkit based on the deep learning library PyTorch. Several open-source ASR toolkits have been released, but all of them target non-Korean languages such as English (e.g., ESPnet, Espresso). Although AI Hub released KsponSpeech, a 1,000-hour Korean speech corpus, there is no established preprocessing method or baseline model against which to compare model performance. Therefore, we propose preprocessing methods for the KsponSpeech corpus and a baseline model for benchmarks. Our baseline model is based on the Listen, Attend and Spell (LAS) architecture and allows various training hyperparameters to be customized conveniently. We hope KoSpeech can serve as a guideline for those researching Korean speech recognition. Our baseline model achieved a 10.31% character error rate (CER) on the KsponSpeech corpus using only the acoustic model. Our source code is available here.
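For readers unfamiliar with the metric quoted above, the following is a minimal sketch of how a character error rate is commonly computed: the Levenshtein edit distance between reference and hypothesis transcripts, divided by the reference length. It is not taken from KoSpeech; the function names `edit_distance` and `cer` are hypothetical, and the choice to count every character (including spaces) is an assumption, since the abstract does not say how the corpus text is normalized before scoring.

```python
def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance between two strings, counted in characters."""
    # prev[j] holds the distance between the first i-1 chars of ref
    # and the first j chars of hyp.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]  # distance from ref[:i] to the empty hypothesis
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1]


def cer(ref: str, hyp: str) -> float:
    """Character error rate: edit distance divided by reference length."""
    if not ref:
        raise ValueError("reference transcript must be non-empty")
    return edit_distance(ref, hyp) / len(ref)


if __name__ == "__main__":
    # One substituted syllable out of five reference characters.
    print(cer("안녕하세요", "안녕하세오"))
```

Under this definition, a CER of 10.31% corresponds to roughly one character-level edit per ten reference characters.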

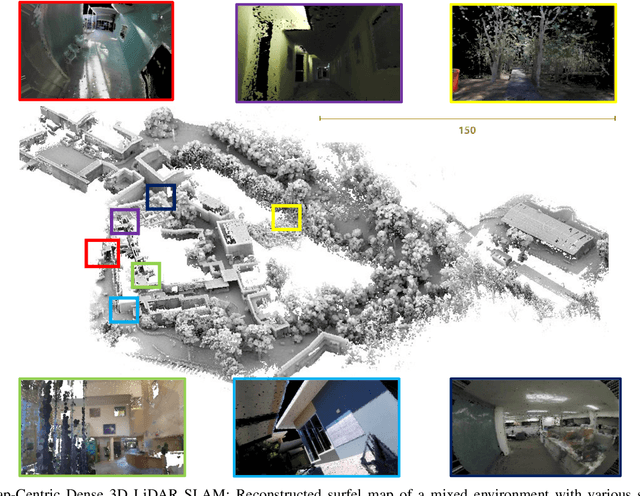

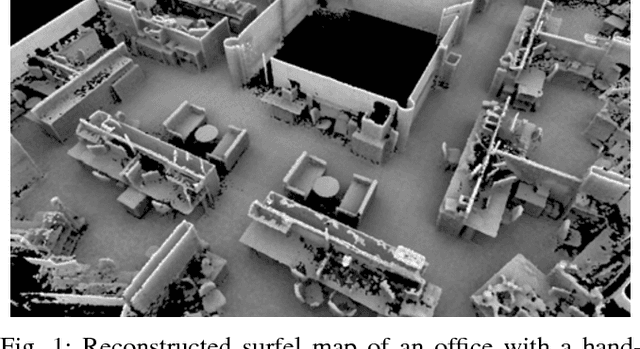

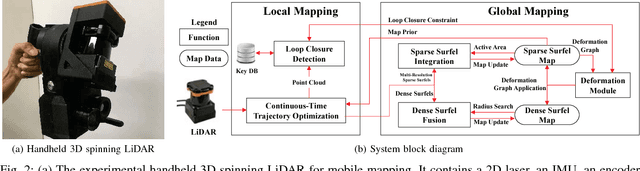

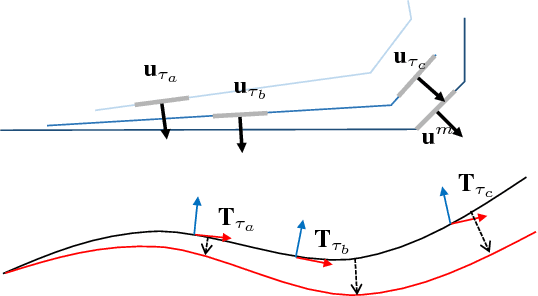

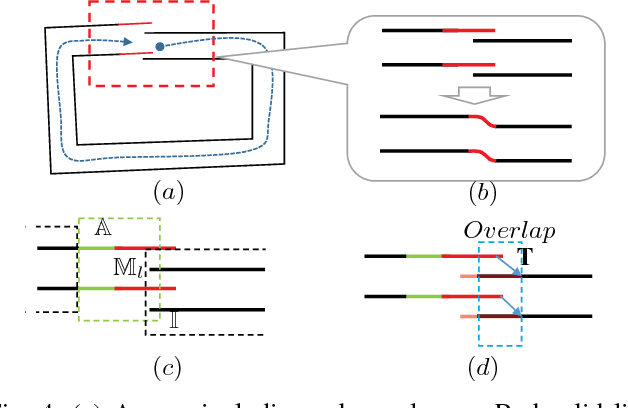

Elasticity Meets Continuous-Time: Map-Centric Dense 3D LiDAR SLAM

Aug 05, 2020

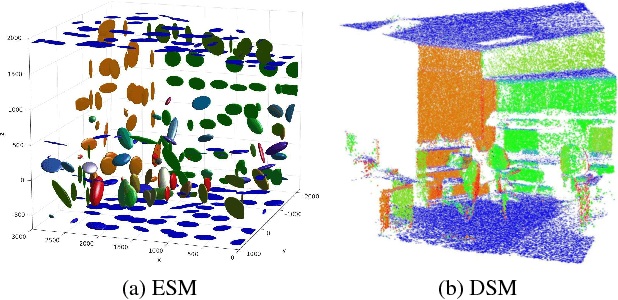

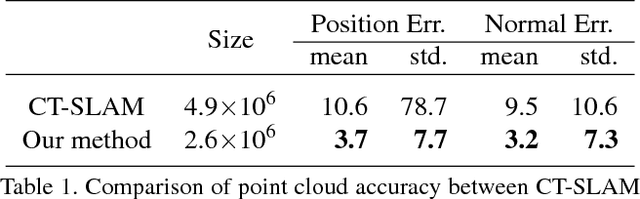

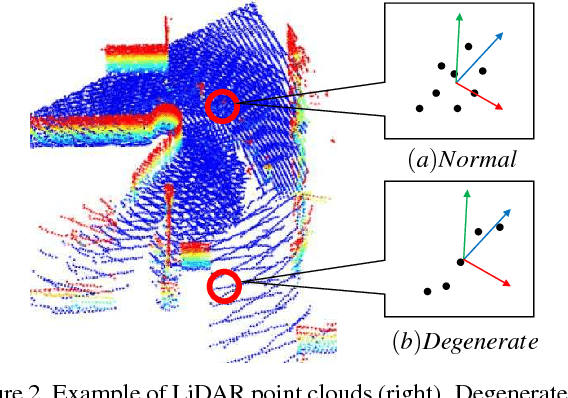

Abstract: Map-centric SLAM utilizes elasticity as a means of loop closure. Compared to trajectory-centric SLAM approaches, this reduces the cost of loop closure while still providing large-scale fusion-based dense maps. In this paper, we present a novel framework for 3D LiDAR-based map-centric SLAM. Having the advantages of a map-centric approach, our method exhibits new features to overcome the shortcomings of existing systems, associated with multi-modal sensor fusion and LiDAR motion distortion. This is accomplished through the use of a local Continuous-Time (CT) trajectory representation. Also, our surface resolution preservative matching algorithm and Wishart-based surfel fusion model enable non-redundant yet dense mapping. Furthermore, we present a robust metric loop closure model to make the approach stable regardless of where the loop closure occurs. Finally, we demonstrate our approach through both simulation and real data experiments using multiple sensor payload configurations and environments to illustrate its utility and robustness.

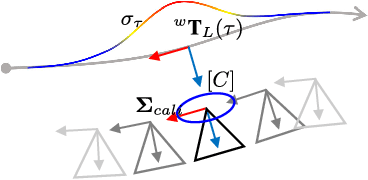

Spatiotemporal Camera-LiDAR Calibration: A Targetless and Structureless Approach

Jan 17, 2020

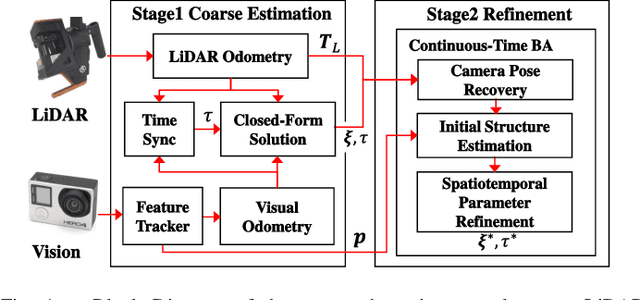

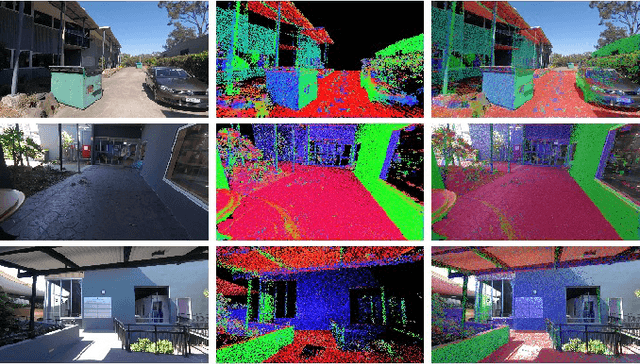

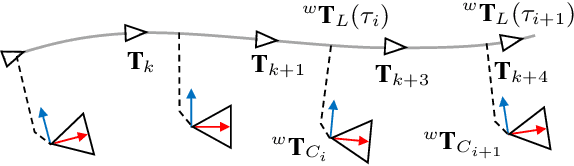

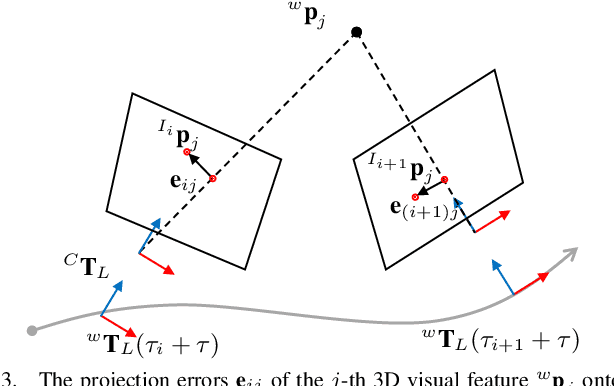

Abstract: The demand for multimodal sensing systems for robotics is growing due to the increase in robustness, reliability and accuracy offered by these systems. These systems also need to be spatially and temporally co-registered to be effective. In this paper, we propose a targetless and structureless spatiotemporal camera-LiDAR calibration method. Our method combines a closed-form solution with a modified structureless bundle adjustment where the coarse-to-fine approach does not require an initial guess on the spatiotemporal parameters. Also, as 3D features (structure) are calculated from triangulation only, there is no need to have a calibration target or to match 2D features with the 3D point cloud, which provides flexibility in the calibration process and sensor configuration. We demonstrate the accuracy and robustness of the proposed method through both simulation and real data experiments using multiple sensor payload configurations mounted to hand-held, aerial and legged robot systems. Also, qualitative results are given in the form of a colorized point cloud visualization.

Robust Photogeometric Localization over Time for Map-Centric Loop Closure

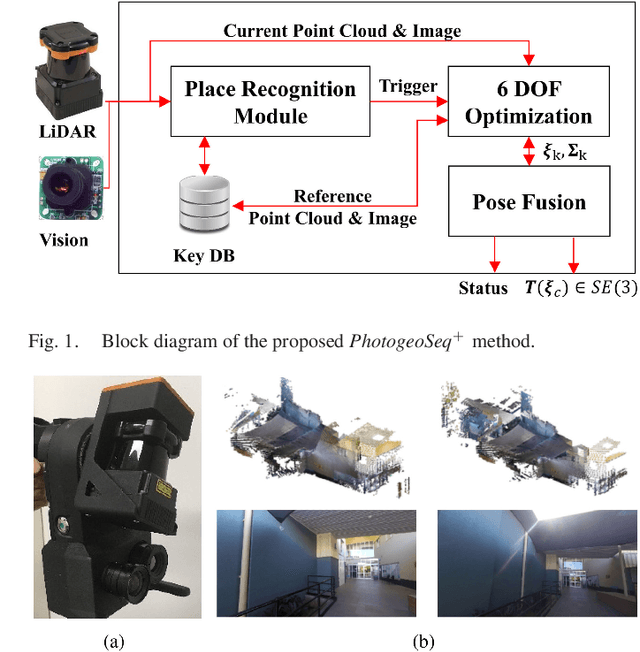

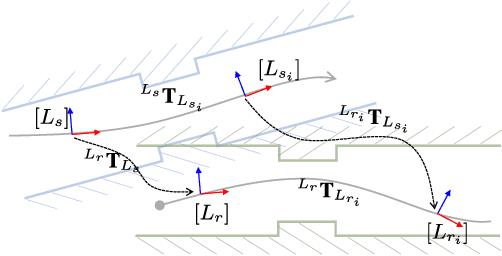

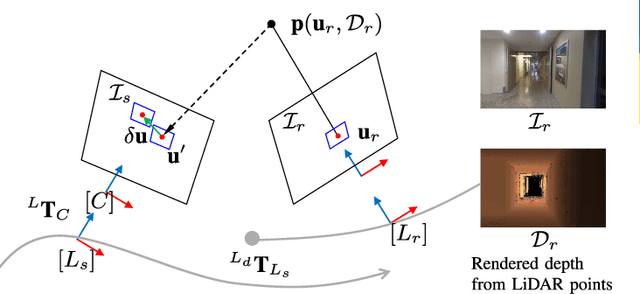

Jan 30, 2019

Abstract: Map-centric SLAM is emerging as an alternative to conventional graph-based SLAM for its accuracy and efficiency in long-term mapping problems. However, in map-centric SLAM, the process of loop closure differs from that of conventional SLAM, and the result of an incorrect loop closure is more destructive and is not reversible. In this paper, we present a tightly coupled photogeometric metric localization for the loop closure problem in map-centric SLAM. In particular, our method combines complementary constraints from LiDAR and camera sensors, and validates loop closure candidates with sequential observations. The proposed method provides a visual evidence-based outlier rejection where failures caused by either place recognition or localization outliers can be effectively removed. We demonstrate that the proposed method is not only more accurate than the conventional global ICP methods but is also robust to incorrect initial pose guesses.

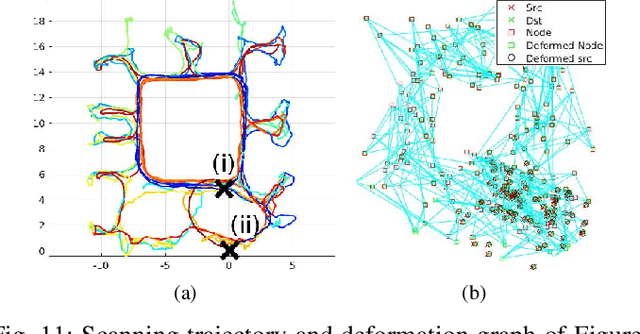

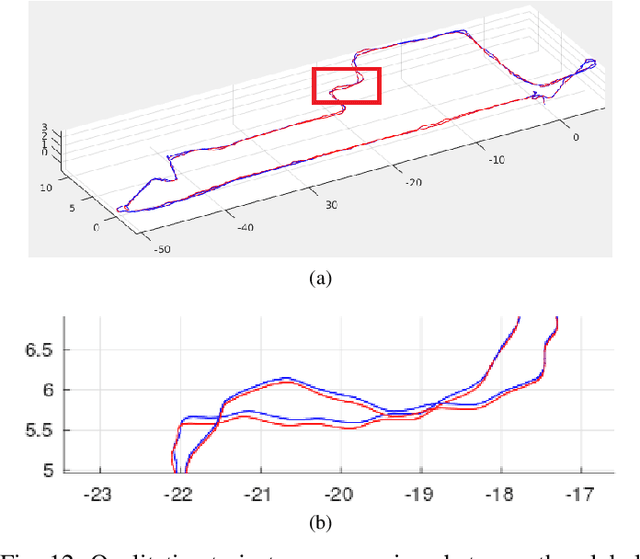

Elastic LiDAR Fusion: Dense Map-Centric Continuous-Time SLAM

Mar 05, 2018

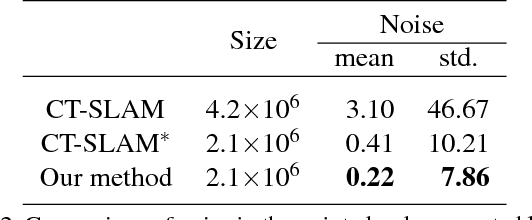

Abstract: The concept of continuous-time trajectory representation has brought increased accuracy and efficiency to multi-modal sensor fusion in modern SLAM. However, regardless of these advantages, its offline property, caused by the requirement of global batch optimization, critically hinders its relevance for real-time and life-long applications. In this paper, we present a dense map-centric SLAM method based on a continuous-time trajectory to cope with this problem. The proposed system locally functions in a similar fashion to conventional Continuous-Time SLAM (CT-SLAM). However, it removes the need for global trajectory optimization by introducing map deformation. The computational complexity of the proposed approach for loop closure does not depend on the operation time, but only on the size of the space it explored before the loop closure. It is therefore more suitable for long-term operation than conventional CT-SLAM. Furthermore, the proposed method reduces uncertainty in the reconstructed dense map by using probabilistic surface element (surfel) fusion. We demonstrate that the proposed method produces globally consistent maps without global batch trajectory optimization, and effectively reduces LiDAR noise by surfel fusion.

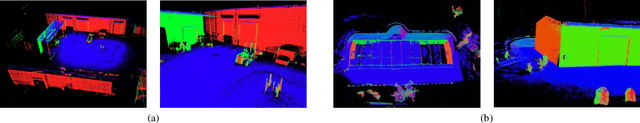

Probabilistic Surfel Fusion for Dense LiDAR Mapping

Sep 17, 2017

Abstract: With the recent development of high-end LiDARs, more and more systems are able to continuously map the environment while moving, producing spatially redundant information. However, none of the previous approaches were able to effectively exploit this redundancy in a dense LiDAR mapping problem. In this paper, we present a new approach for dense LiDAR mapping using probabilistic surfel fusion. The proposed system is capable of reconstructing a high-quality dense surface element (surfel) map from spatially redundant multiple views. This is achieved by the proposed probabilistic surfel fusion along with a geometry-considered data association. The proposed surfel data association method considers surface resolution as well as high measurement uncertainty along the beam direction, which enables the mapping system to control surface resolution without introducing spatial digitization. The proposed fusion method successfully suppresses the map noise level by considering measurement noise caused by laser beam incident angle and depth distance in a Bayesian filtering framework. Experimental results with simulated and real data for the dense surfel mapping prove the ability of the proposed method to accurately find the canonical form of the environment without further post-processing.
