Shuyu Shi

An Adaptive and Fast Convergent Approach to Differentially Private Deep Learning

Dec 19, 2019

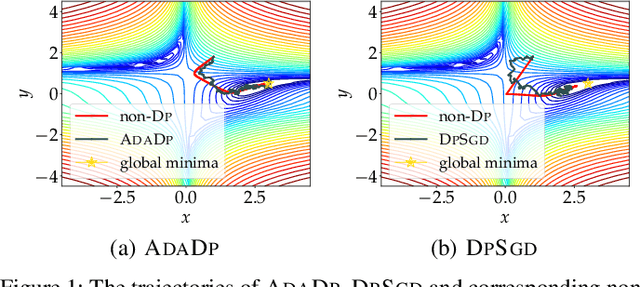

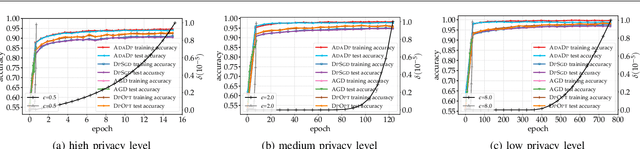

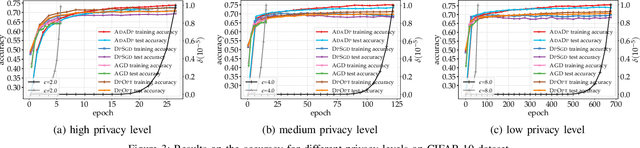

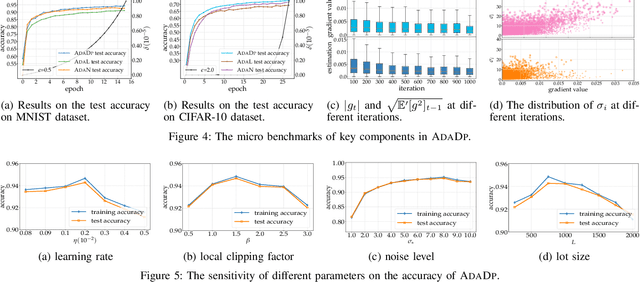

Abstract: With the advent of the era of big data, deep learning has become a prevalent building block in a variety of machine learning and data mining tasks, such as signal processing, network modeling, and traffic analysis. The massive amount of crowdsourced user data plays a crucial role in the success of deep learning models. However, it has been shown that user data may be inferred from trained neural models and thereby exposed to potential adversaries, which raises information security and privacy concerns. To address this issue, recent studies leverage differential privacy to design privacy-preserving deep learning algorithms. Although successful at protecting privacy, differential privacy degrades the performance of neural models. In this paper, we develop ADADP, an adaptive and fast convergent learning algorithm with a provable privacy guarantee. ADADP significantly reduces the privacy cost by improving the convergence speed with an adaptive learning rate, and it mitigates the negative effect of differential privacy on model accuracy by introducing adaptive noise. The performance of ADADP is evaluated on real-world datasets. Experimental results show that it outperforms state-of-the-art differentially private approaches in terms of both privacy cost and model accuracy.
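The abstract does not spell out ADADP's update rule. As a hedged illustration of the two ingredients it combines, the sketch below shows one generic differentially private gradient step in the style of DP-SGD (per-example clipping plus Gaussian noise) followed by an Adagrad-style per-coordinate learning rate. It is not ADADP: the noise scale here is fixed rather than adaptive, and the names `clip_norm`, `noise_multiplier`, `accum`, and `noisy_adagrad_step` are our own assumptions.

```python
import numpy as np


def clip_per_example(grads: np.ndarray, clip_norm: float) -> np.ndarray:
    """Rescale each row (one example's gradient) to L2 norm at most clip_norm."""
    norms = np.linalg.norm(grads, axis=1, keepdims=True)
    return grads * np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))


def noisy_adagrad_step(params, per_example_grads, accum, lr, clip_norm,
                       noise_multiplier, rng, eps=1e-8):
    """Hypothetical step: DP-SGD-style noisy gradient (clip, sum, add Gaussian
    noise, average) followed by an Adagrad-style per-coordinate learning rate."""
    clipped = clip_per_example(per_example_grads, clip_norm)
    batch_size = clipped.shape[0]
    # Gaussian noise with std noise_multiplier * clip_norm on the summed gradient
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=params.shape)
    noisy_grad = (clipped.sum(axis=0) + noise) / batch_size
    # The adaptive rate only sees the already-noised gradient (post-processing)
    accum = accum + noisy_grad ** 2
    new_params = params - lr * noisy_grad / (np.sqrt(accum) + eps)
    return new_params, accum


rng = np.random.default_rng(0)
params, accum = np.zeros(3), np.zeros(3)
grads = rng.normal(size=(8, 3))  # 8 examples, 3 parameters
params, accum = noisy_adagrad_step(params, grads, accum, lr=0.1,
                                   clip_norm=1.0, noise_multiplier=1.1, rng=rng)
print(params)
```

Because the adaptive learning rate only consumes the noisy gradient, it is post-processing and adds no privacy cost for this step; the cumulative privacy cost over training still has to be tracked separately, which is where faster convergence (fewer steps) helps.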

Reviewing and Improving the Gaussian Mechanism for Differential Privacy

Dec 07, 2019

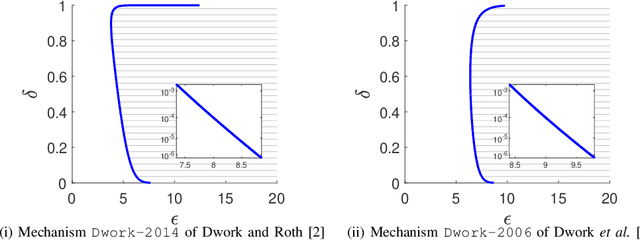

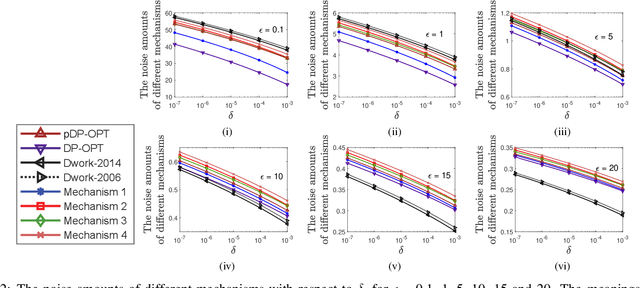

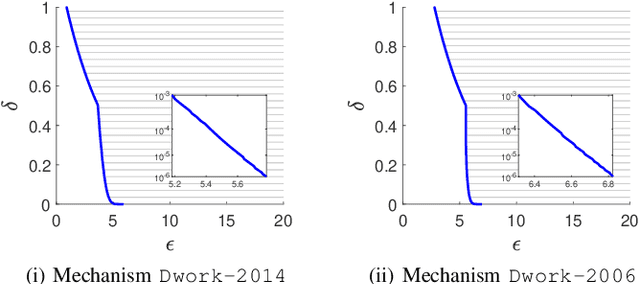

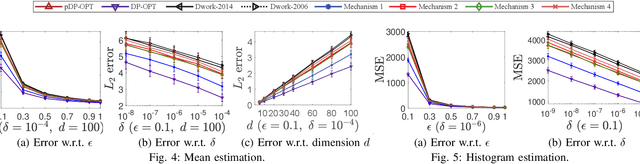

Abstract: Differential privacy provides a rigorous framework to quantify data privacy and has received considerable interest recently. A randomized mechanism satisfying $(\epsilon, \delta)$-differential privacy (DP) roughly means that, except with a small probability $\delta$, altering a record in a dataset cannot change the probability that an output is seen by more than a multiplicative factor $e^{\epsilon}$. A well-known solution to $(\epsilon, \delta)$-DP is the Gaussian mechanism introduced by Dwork et al. [1] in 2006 and improved by Dwork and Roth [2] in 2014, in which Gaussian noise with standard deviation $\sqrt{2\ln \frac{2}{\delta}} \times \frac{\Delta}{\epsilon}$ (in [1]) or $\sqrt{2\ln \frac{1.25}{\delta}} \times \frac{\Delta}{\epsilon}$ (in [2]) is added independently to each dimension of the query result, for a query with $\ell_2$-sensitivity $\Delta$. Although both classical Gaussian mechanisms [1,2] assume $0 < \epsilon \leq 1$, our review finds that many studies in the literature have used them under values of $\epsilon$ and $\delta$ for which the noise amounts of [1,2] do not achieve $(\epsilon,\delta)$-DP. We obtain this result by analyzing the optimal noise amount $\sigma_{DP-OPT}$ for $(\epsilon,\delta)$-DP and identifying values of $\epsilon$ and $\delta$ for which the noise amounts of the classical mechanisms are even less than $\sigma_{DP-OPT}$. Since $\sigma_{DP-OPT}$ has no closed-form expression and must be approximated iteratively, we propose Gaussian mechanisms based on closed-form upper bounds that we derive for $\sigma_{DP-OPT}$. Our mechanisms achieve $(\epsilon,\delta)$-DP for any $\epsilon$, while the classical mechanisms [1,2] do not achieve $(\epsilon,\delta)$-DP for large $\epsilon$ given $\delta$. Moreover, the utilities of our mechanisms improve upon those of [1,2] and are close to that of the optimal yet more computationally expensive Gaussian mechanism.
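The failure of the classical noise amounts at large $\epsilon$ can be checked numerically. The minimal sketch below assumes that $\sigma_{DP-OPT}$ is the smallest $\sigma$ satisfying the exact condition of Balle and Wang (2018), $\Phi\left(\frac{\Delta}{2\sigma} - \frac{\epsilon\sigma}{\Delta}\right) - e^{\epsilon}\Phi\left(-\frac{\Delta}{2\sigma} - \frac{\epsilon\sigma}{\Delta}\right) \leq \delta$, and approximates it by bisection. It is not the paper's closed-form mechanism, and all function names are hypothetical.

```python
import math


def std_normal_cdf(x: float) -> float:
    """Standard normal CDF Phi(x), via erfc for accuracy in the lower tail."""
    return 0.5 * math.erfc(-x / math.sqrt(2.0))


def sigma_dwork_2006(eps: float, delta: float, sens: float) -> float:
    """Classical noise amount sqrt(2 ln(2/delta)) * sens / eps of [1]."""
    return math.sqrt(2.0 * math.log(2.0 / delta)) * sens / eps


def sigma_dwork_roth(eps: float, delta: float, sens: float) -> float:
    """Classical noise amount sqrt(2 ln(1.25/delta)) * sens / eps of [2]."""
    return math.sqrt(2.0 * math.log(1.25 / delta)) * sens / eps


def exact_delta(sigma: float, eps: float, sens: float) -> float:
    """Smallest delta for which N(0, sigma^2) noise gives (eps, delta)-DP,
    using the exact condition of Balle and Wang (2018)."""
    a = sens / (2.0 * sigma)
    b = eps * sigma / sens
    return std_normal_cdf(a - b) - math.exp(eps) * std_normal_cdf(-a - b)


def optimal_sigma(eps: float, delta: float, sens: float, iters: int = 100) -> float:
    """Bisection for the smallest sigma with exact_delta(sigma) <= delta
    (exact_delta decreases as sigma grows)."""
    lo, hi = 0.0, sens
    while exact_delta(hi, eps, sens) > delta:  # grow hi until it is feasible
        lo, hi = hi, 2.0 * hi
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if exact_delta(mid, eps, sens) > delta:
            lo = mid  # infeasible: more noise is needed
        else:
            hi = mid  # feasible: try less noise
    return hi


eps, delta, sens = 10.0, 1e-5, 1.0
for name, sigma in [("[1]", sigma_dwork_2006(eps, delta, sens)),
                    ("[2]", sigma_dwork_roth(eps, delta, sens))]:
    print(name, sigma, "achieves DP:", exact_delta(sigma, eps, sens) <= delta)
print("optimal", optimal_sigma(eps, delta, sens))
```

With $\epsilon = 10$, $\delta = 10^{-5}$ and $\Delta = 1$, the noise amount of [2] yields an exact $\delta$ above $10^{-5}$, so it falls below the optimal $\sigma$, consistent with the review's finding; at small $\epsilon$ such as $0.5$ the same formula is comfortably feasible.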

Privacy-preserving Crowd-guided AI Decision-making in Ethical Dilemmas

Jun 04, 2019

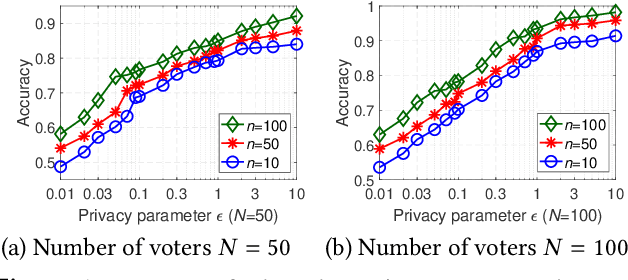

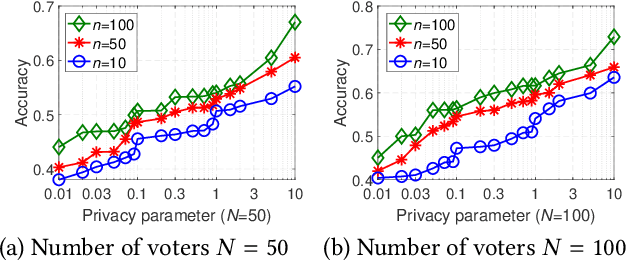

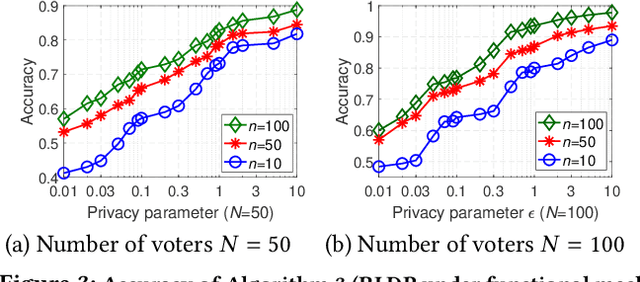

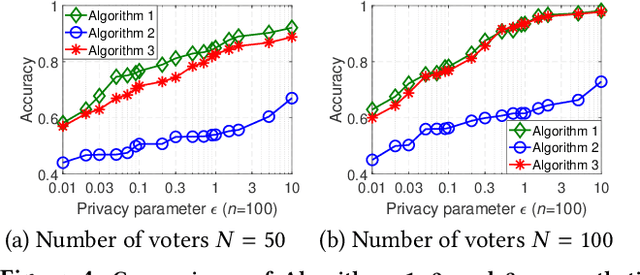

Abstract: With the rapid development of artificial intelligence (AI), ethical issues surrounding AI have attracted increasing attention. In particular, autonomous vehicles may face moral dilemmas in accident scenarios, such as staying the course and hurting pedestrians, or swerving and hurting passengers. To investigate such ethical dilemmas, recent studies have adopted preference aggregation, in which each voter expresses her/his preferences over decisions for possible ethical dilemma scenarios, and a centralized system aggregates these preferences to obtain the winning decision. Although this is a useful methodology for building ethical AI systems, it can potentially violate the privacy of voters, since moral preferences are sensitive information whose disclosure can be exploited by malicious parties. In this paper, we report a first-of-its-kind privacy-preserving crowd-guided AI decision-making approach for ethical dilemmas. We adopt the notion of differential privacy to quantify privacy and consider four granularities of privacy protection by combining voter-/record-level privacy protection with centralized/distributed perturbation, resulting in four approaches: VLCP, RLCP, VLDP, and RLDP. Moreover, we propose different algorithms to achieve these privacy protection granularities while retaining the accuracy of the learned moral preference model. Specifically, VLCP and RLCP are implemented with the data aggregator setting a universal privacy parameter and perturbing the averaged moral preference to protect the privacy of voters' data. VLDP and RLDP are implemented such that each voter perturbs her/his local moral preference with a personalized privacy parameter. Extensive experiments on both synthetic and real data demonstrate that the proposed approach achieves high accuracy of preference aggregation while protecting individual voters' privacy.
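To make the centralized versus distributed distinction concrete, here is a hypothetical sketch using the Laplace mechanism on moral preference vectors. It assumes each voter's preference is a vector in $[0,1]^d$, the number of voters $n$ is public, and neighboring datasets differ in one voter's whole vector (voter-level protection). The function names are illustrative only; the paper's actual preference model and algorithms may differ.

```python
import numpy as np


def central_average(prefs: np.ndarray, epsilon: float, rng) -> np.ndarray:
    """Centralized perturbation: the aggregator averages raw vectors and adds
    Laplace noise once, using one universal privacy parameter epsilon."""
    n, d = prefs.shape
    sensitivity = d / n  # L1 sensitivity of the mean when one voter's vector in [0,1]^d changes
    return prefs.mean(axis=0) + rng.laplace(0.0, sensitivity / epsilon, size=d)


def local_average(prefs: np.ndarray, epsilons, rng) -> np.ndarray:
    """Distributed perturbation: voter i adds Laplace noise with a personalized
    epsilon_i before reporting; the aggregator averages the noisy reports."""
    n, d = prefs.shape
    scales = d / np.asarray(epsilons, dtype=float)  # per-voter L1 sensitivity is d
    noisy = prefs + rng.laplace(0.0, scales[:, None], size=(n, d))
    return noisy.mean(axis=0)


rng = np.random.default_rng(0)
prefs = rng.uniform(0.0, 1.0, size=(1000, 4))  # 1000 voters, 4 preference weights
print("true   ", prefs.mean(axis=0))
print("central", central_average(prefs, 1.0, rng))
print("local  ", local_average(prefs, rng.uniform(0.5, 2.0, size=1000), rng))
```

In the centralized version the noise scale shrinks as $d/(n\epsilon)$, whereas in the distributed version each report carries noise of scale $d/\epsilon_i$ and averaging only reduces the standard deviation by roughly a factor of $\sqrt{n}$, which is why local perturbation usually costs more accuracy for comparable privacy parameters.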