Shiyue Zhao

RiskNet: Interaction-Aware Risk Forecasting for Autonomous Driving in Long-Tail Scenarios

Apr 22, 2025Abstract:Ensuring the safety of autonomous vehicles (AVs) in long-tail scenarios remains a critical challenge, particularly under high uncertainty and complex multi-agent interactions. To address this, we propose RiskNet, an interaction-aware risk forecasting framework, which integrates deterministic risk modeling with probabilistic behavior prediction for comprehensive risk assessment. At its core, RiskNet employs a field-theoretic model that captures interactions among ego vehicle, surrounding agents, and infrastructure via interaction fields and force. This model supports multidimensional risk evaluation across diverse scenarios (highways, intersections, and roundabouts), and shows robustness under high-risk and long-tail settings. To capture the behavioral uncertainty, we incorporate a graph neural network (GNN)-based trajectory prediction module, which learns multi-modal future motion distributions. Coupled with the deterministic risk field, it enables dynamic, probabilistic risk inference across time, enabling proactive safety assessment under uncertainty. Evaluations on the highD, inD, and rounD datasets, spanning lane changes, turns, and complex merges, demonstrate that our method significantly outperforms traditional approaches (e.g., TTC, THW, RSS, NC Field) in terms of accuracy, responsiveness, and directional sensitivity, while maintaining strong generalization across scenarios. This framework supports real-time, scenario-adaptive risk forecasting and demonstrates strong generalization across uncertain driving environments. It offers a unified foundation for safety-critical decision-making in long-tail scenarios.

SACA: A Scenario-Aware Collision Avoidance Framework for Autonomous Vehicles Integrating LLMs-Driven Reasoning

Mar 31, 2025Abstract:Reliable collision avoidance under extreme situations remains a critical challenge for autonomous vehicles. While large language models (LLMs) offer promising reasoning capabilities, their application in safety-critical evasive maneuvers is limited by latency and robustness issues. Even so, LLMs stand out for their ability to weigh emotional, legal, and ethical factors, enabling socially responsible and context-aware collision avoidance. This paper proposes a scenario-aware collision avoidance (SACA) framework for extreme situations by integrating predictive scenario evaluation, data-driven reasoning, and scenario-preview-based deployment to improve collision avoidance decision-making. SACA consists of three key components. First, a predictive scenario analysis module utilizes obstacle reachability analysis and motion intention prediction to construct a comprehensive situational prompt. Second, an online reasoning module refines decision-making by leveraging prior collision avoidance knowledge and fine-tuning with scenario data. Third, an offline evaluation module assesses performance and stores scenarios in a memory bank. Additionally, A precomputed policy method improves deployability by previewing scenarios and retrieving or reasoning policies based on similarity and confidence levels. Real-vehicle tests show that, compared with baseline methods, SACA effectively reduces collision losses in extreme high-risk scenarios and lowers false triggering under complex conditions. Project page: https://sean-shiyuez.github.io/SACA/.

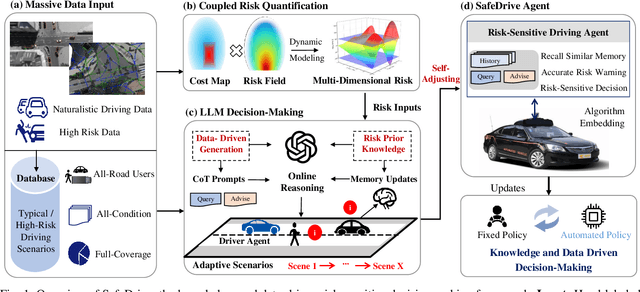

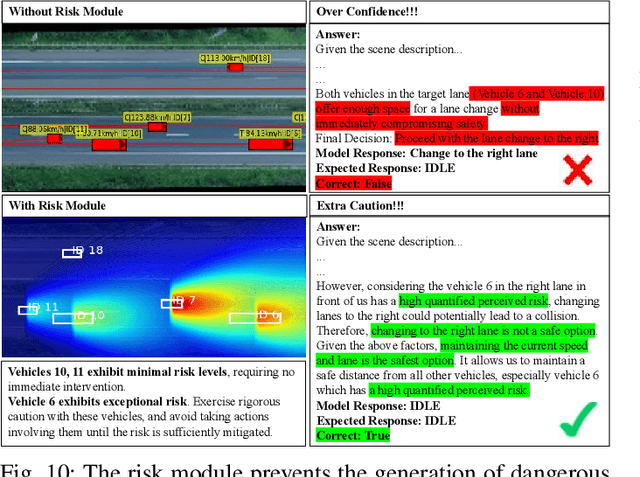

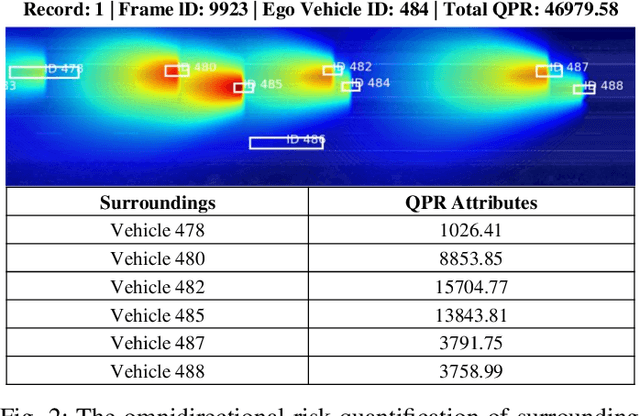

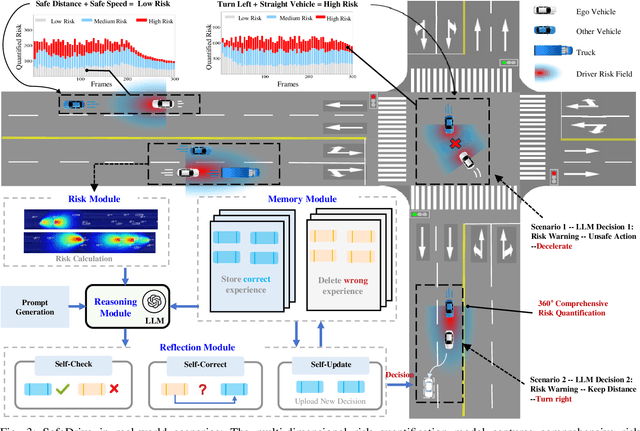

SafeDrive: Knowledge- and Data-Driven Risk-Sensitive Decision-Making for Autonomous Vehicles with Large Language Models

Dec 19, 2024

Abstract:Recent advancements in autonomous vehicles (AVs) use Large Language Models (LLMs) to perform well in normal driving scenarios. However, ensuring safety in dynamic, high-risk environments and managing safety-critical long-tail events remain significant challenges. To address these issues, we propose SafeDrive, a knowledge- and data-driven risk-sensitive decision-making framework to enhance AV safety and adaptability. The proposed framework introduces a modular system comprising: (1) a Risk Module for quantifying multi-factor coupled risks involving driver, vehicle, and road interactions; (2) a Memory Module for storing and retrieving typical scenarios to improve adaptability; (3) a LLM-powered Reasoning Module for context-aware safety decision-making; and (4) a Reflection Module for refining decisions through iterative learning. By integrating knowledge-driven insights with adaptive learning mechanisms, the framework ensures robust decision-making under uncertain conditions. Extensive evaluations on real-world traffic datasets, including highways (HighD), intersections (InD), and roundabouts (RounD), validate the framework's ability to enhance decision-making safety (achieving a 100% safety rate), replicate human-like driving behaviors (with decision alignment exceeding 85%), and adapt effectively to unpredictable scenarios. SafeDrive establishes a novel paradigm for integrating knowledge- and data-driven methods, highlighting significant potential to improve safety and adaptability of autonomous driving in high-risk traffic scenarios. Project Page: https://mezzi33.github.io/SafeDrive/

High-Speed Cornering Control and Real-Vehicle Deployment for Autonomous Electric Vehicles

Nov 18, 2024Abstract:Executing drift maneuvers during high-speed cornering presents significant challenges for autonomous vehicles, yet offers the potential to minimize turning time and enhance driving dynamics. While reinforcement learning (RL) has shown promising results in simulated environments, discrepancies between simulations and real-world conditions have limited its practical deployment. This study introduces an innovative control framework that integrates trajectory optimization with drift maneuvers, aiming to improve the algorithm's adaptability for real-vehicle implementation. We leveraged Bezier-based pre-trajectory optimization to enhance rewards and optimize the controller through Twin Delayed Deep Deterministic Policy Gradient (TD3) in a simulated environment. For real-world deployment, we implement a hybrid RL-MPC fusion mechanism, , where TD3-derived maneuvers serve as primary inputs for a Model Predictive Controller (MPC). This integration enables precise real-time tracking of the optimal trajectory, with MPC providing corrective inputs to bridge the gap between simulation and reality. The efficacy of this method is validated through real-vehicle tests on consumer-grade electric vehicles, focusing on drift U-turns and drift right-angle turns. The control outcomes of these real-vehicle tests are thoroughly documented in the paper, supported by supplementary video evidence (https://youtu.be/5wp67FcpfL8). Notably, this study is the first to deploy and apply an RL-based transient drift cornering algorithm on consumer-grade electric vehicles.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge