Shaojie Qiao

Disentangled Graph Representation Based on Substructure-Aware Graph Optimal Matching Kernel Convolutional Networks

Apr 23, 2025

Abstract: Graphs effectively characterize relational data, driving graph representation learning methods that uncover underlying predictive information. As state-of-the-art approaches, Graph Neural Networks (GNNs) enable end-to-end learning for diverse tasks. Recent disentangled graph representation learning enhances interpretability by decoupling independent factors in graph data. However, existing methods often implicitly and coarsely characterize graph structures, limiting structural pattern analysis within the graph. This paper proposes the Graph Optimal Matching Kernel Convolutional Network (GOMKCN) to address this limitation. We view graphs as node-centric subgraphs, where each subgraph acts as a structural factor encoding position-specific information. This transforms graph prediction into structural pattern recognition. Inspired by CNNs, GOMKCN introduces the Graph Optimal Matching Kernel (GOMK) as a convolutional operator, computing similarities between subgraphs and learnable graph filters. Mathematically, GOMK maps subgraphs and filters into a Hilbert space, representing graphs as point sets. Disentangled representations emerge from projecting subgraphs onto task-optimized filters, which adaptively capture relevant structural patterns via gradient descent. Crucially, GOMK incorporates local correspondences in similarity measurement, resolving the trade-off between differentiability and accuracy in graph kernels. Experiments validate that GOMKCN achieves superior accuracy and interpretability in graph pattern mining and prediction. The framework advances the theoretical foundation for disentangled graph representation learning.
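
As a rough illustration of the optimal-matching idea (not the authors' GOMK), the Python sketch below treats a node-centric 1-hop subgraph as a set of node feature vectors and scores it against a filter, which is also a point set, by the best one-to-one matching of points under a Gaussian base kernel. The RBF base kernel, the 1-hop neighborhood, the brute-force matching, and every function name are assumptions made for this example. The filter is hand-set rather than learned by gradient descent, and the sketch does not address the differentiability issue that GOMK is designed to resolve.

```python
import itertools
import math


def rbf(x, y, gamma=1.0):
    """Gaussian (RBF) base kernel between two feature vectors (assumed choice)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def ego_point_set(adj, feats, center):
    """Node-centric 1-hop subgraph, represented as a set of node feature vectors."""
    nodes = [center] + sorted(adj[center])
    return [feats[v] for v in nodes]


def optimal_matching_similarity(points, filt, gamma=1.0):
    """Sum of base-kernel values under the best one-to-one matching.

    The smaller point set is mapped injectively into the larger one; every
    injective map is enumerated, so this brute force only suits tiny sets.
    """
    small, large = (points, filt) if len(points) <= len(filt) else (filt, points)
    best = 0.0
    for perm in itertools.permutations(range(len(large)), len(small)):
        score = sum(rbf(s, large[j], gamma) for s, j in zip(small, perm))
        best = max(best, score)
    return best


def matching_conv(adj, feats, filters, gamma=1.0):
    """Score every node's ego subgraph against every filter (one output per filter)."""
    return {
        v: [optimal_matching_similarity(ego_point_set(adj, feats, v), f, gamma) for f in filters]
        for v in adj
    }


# Toy path graph 0-1-2 with 1-D features and one hand-set (not learned) filter.
adj = {0: {1}, 1: {0, 2}, 2: {1}}
feats = {0: [0.0], 1: [1.0], 2: [0.0]}
filters = [[[1.0], [0.0]]]
print(matching_conv(adj, feats, filters))  # {0: [2.0], 1: [2.0], 2: [2.0]}
```

Matching points before summing makes the score independent of node ordering: the sets [[0], [1]] and [[1], [0]] score 2.0, whereas pairing them in list order would give only about 0.74.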

Explainable AI Security: Exploring Robustness of Graph Neural Networks to Adversarial Attacks

Jun 20, 2024

Abstract: Graph neural networks (GNNs) have achieved tremendous success, but recent studies have shown that GNNs are vulnerable to adversarial attacks, which significantly hinders their use in safety-critical scenarios. Therefore, the design of robust GNNs has attracted increasing attention. However, existing research has mainly been conducted via experimental trial and error, and a comprehensive understanding of the vulnerability of GNNs is still lacking. To address this limitation, we systematically investigate the adversarial robustness of GNNs by considering graph data patterns, model-specific factors, and the transferability of adversarial examples. Through extensive experiments, we derive a set of principled guidelines for improving the adversarial robustness of GNNs, for example: (i) training graphs with diverse structural patterns, rather than highly regular graphs, are crucial for model robustness, which is consistent with the concept of adversarial training; (ii) large model capacity, given sufficient training data, has a positive effect on model robustness, and only a small percentage of neurons in GNNs are affected by adversarial attacks; (iii) adversarial transfer is not symmetric, and adversarial examples produced by small-capacity models have stronger adversarial transferability. This work illuminates the vulnerabilities of GNNs and opens many promising avenues for designing robust GNNs.
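
To make finding (iii) concrete, the transfer rate from a source model to a target model can be read as the fraction of adversarial examples crafted on the source that also fool the target; asymmetry then means the rate in one direction differs from the rate in the other. The minimal Python sketch below computes this from per-example outcomes. The data layout, the function names, and the toy outcomes are hypothetical and are not results from the paper.

```python
def transfer_rate(fooled):
    """Fraction of adversarial examples, crafted on a source model, that also fool a target."""
    return sum(fooled) / len(fooled) if fooled else 0.0


def transfer_matrix(results):
    """Map each (source, target) pair to its transfer rate.

    results[(source, target)] is a list of booleans, one per adversarial
    example, True when the example crafted on `source` also fools `target`.
    """
    return {pair: transfer_rate(flags) for pair, flags in results.items()}


def asymmetry(matrix, a, b):
    """Positive when examples crafted on `a` transfer to `b` better than the reverse."""
    return matrix[(a, b)] - matrix[(b, a)]


# Made-up outcomes for illustration only; these are not results from the paper.
results = {
    ("small_gnn", "large_gnn"): [True, True, True, False],
    ("large_gnn", "small_gnn"): [True, False, False, False],
}
m = transfer_matrix(results)
print(m[("small_gnn", "large_gnn")], m[("large_gnn", "small_gnn")])  # 0.75 0.25
print(asymmetry(m, "small_gnn", "large_gnn"))  # 0.5
```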

GraphMU: Repairing Robustness of Graph Neural Networks via Machine Unlearning

Jun 19, 2024

Abstract: Graph Neural Networks (GNNs) have demonstrated significant application potential in various fields. However, GNNs are still vulnerable to adversarial attacks. Numerous adversarial defense methods have been proposed for GNNs to address this problem. However, these methods can only serve as a defense before poisoning and cannot repair a poisoned GNN. Therefore, there is an urgent need for a method to repair poisoned GNNs. In this paper, we address this gap by introducing the novel concept of model repair for GNNs. We propose a repair framework, Repairing Robustness of Graph Neural Networks via Machine Unlearning (GraphMU), which aims to fine-tune a poisoned GNN to forget adversarial samples without the need for complete retraining. We also introduce an unlearning validation method to ensure that our approach effectively forgets the specified poisoned data. To evaluate the effectiveness of GraphMU, we explore three fine-tuned subgraph construction scenarios based on the available perturbation information: (i) Known Perturbation Ratios, (ii) Complete Knowledge of Perturbations, and (iii) No Knowledge of Perturbations. Our extensive experiments, conducted across four citation datasets and four adversarial attack scenarios, demonstrate that GraphMU can effectively restore the performance of poisoned GNNs.

Generative Graph Neural Networks for Link Prediction

Dec 31, 2022

Abstract: Inferring missing links or detecting spurious ones based on observed graphs, known as link prediction, is a long-standing challenge in graph data analysis. With the recent advances in deep learning, graph neural networks have been used for link prediction and have achieved state-of-the-art performance. Nevertheless, existing methods developed for this purpose are typically discriminative, computing features of local subgraphs around two neighboring nodes and predicting potential links between them from the perspective of subgraph classification. In this formalism, the selection of enclosing subgraphs and heuristic structural features for subgraph classification significantly affects the performance of the methods. To overcome this limitation, this paper proposes a novel and radically different link prediction algorithm based on the network reconstruction theory, called GraphLP. Instead of sampling positive and negative links and heuristically computing the features of their enclosing subgraphs, GraphLP utilizes the feature learning ability of deep-learning models to automatically extract the structural patterns of graphs for link prediction under the assumption that real-world graphs are not locally isolated. Moreover, GraphLP explores high-order connectivity patterns to utilize the hierarchical organizational structures of graphs for link prediction. Our experimental results on all common benchmark datasets from different applications demonstrate that the proposed method consistently outperforms other state-of-the-art methods. Unlike the discriminative neural network models used for link prediction, GraphLP is generative, which provides a new paradigm for neural-network-based link prediction.

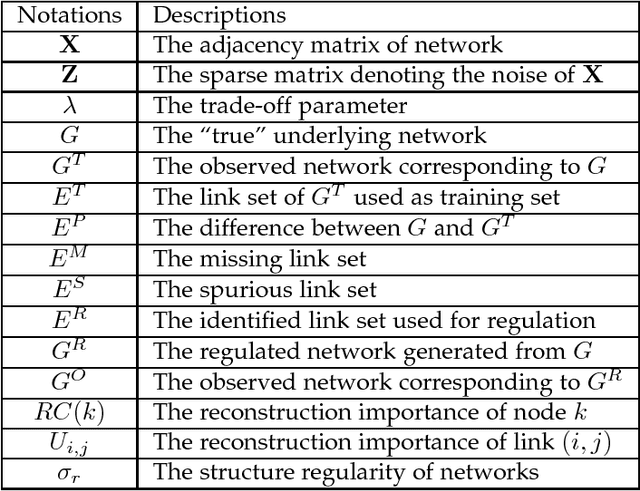

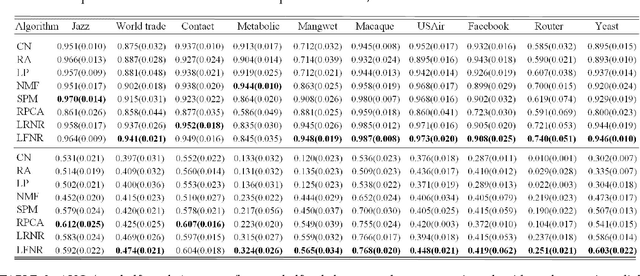

Network Reconstruction and Controlling Based on Structural Regularity Analysis

Aug 29, 2018

Abstract: From the perspective of network analysis, ubiquitous networks comprise regular and irregular components, which makes uncovering the complexity of network structures a fundamental challenge. Exploring the regular information and identifying the roles of microscopic elements in network data can help us recognize the principle of network organization and contribute to network data utilization. However, the intrinsic structural properties of networks remain inadequately explored and theorized. With the realistic assumption that there are consistent features across the local structures of networks, we propose a low-rank-pursuit-based self-representation network model, in which the principle of network organization can be uncovered by a representation matrix. Under this model, the original true networks can be reconstructed from the observed, unreliable network topology. In particular, the proposed model enables us to estimate the extent to which networks are regulable, i.e., to measure the reconstructability of networks. In addition, the model can measure the importance of microscopic network elements, i.e., nodes and links, in terms of network regularity, thereby allowing us to regulate the reconstructability of networks based on them. Extensive experiments on disparate real-world networks demonstrate the effectiveness of the proposed network reconstruction and regulation algorithm. Specifically, the network regularity metric can reflect the reconstructability of networks, and the reconstruction accuracy can be improved by removing irregular network links. Lastly, our approach provides a unique and novel insight into exploring the organization of complex networks.
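
The self-representation idea can be sketched in a few lines: each node's adjacency row is expressed through a coefficient matrix Z so that A ≈ AZ, and AZ serves as the reconstructed network. The paper uses low-rank pursuit; as a simplified stand-in, the Python sketch below imposes a hard rank-k constraint instead, which has a closed-form SVD solution. The rank k, the relative error used as a reconstructability proxy, the per-link residual, and all function names are assumptions for illustration, not the paper's regularity metric.

```python
import numpy as np


def rank_k_self_representation(adj, k):
    """Coefficient matrix Z with adj ≈ adj @ Z and rank(Z) <= k.

    Z = V_k V_k^T (top-k right singular vectors of adj) minimizes
    ||adj - adj @ Z||_F under the rank constraint, because adj @ Z is then
    the best rank-k approximation of adj (Eckart-Young).
    """
    _, _, vt = np.linalg.svd(adj)
    v_k = vt[:k].T
    return v_k @ v_k.T


def reconstruct(adj, k):
    """Reconstructed link-score matrix adj @ Z."""
    return adj @ rank_k_self_representation(adj, k)


def relative_error(adj, k):
    """Illustrative reconstructability proxy: smaller means better explained at rank k."""
    return np.linalg.norm(adj - reconstruct(adj, k)) / np.linalg.norm(adj)


def link_residuals(adj, k):
    """Residual |A_ij - (AZ)_ij| for each observed link (i < j); large values flag poorly explained links."""
    resid = np.abs(adj - reconstruct(adj, k))
    return {(int(i), int(j)): float(resid[i, j]) for i, j in zip(*np.nonzero(np.triu(adj)))}


# Toy graph: two triangles joined by a single bridge link (2, 3).
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
A = np.zeros((6, 6))
for i, j in edges:
    A[i, j] = A[j, i] = 1.0
print(relative_error(A, 2))
print(link_residuals(A, 2))
```

With k equal to the number of nodes, Z is the identity and the reconstruction is exact; lowering k forces the network to be explained by fewer shared patterns, so links that fit those patterns poorly show larger residuals.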
