Seokjun Choi

A Real-world Display Inverse Rendering Dataset

Aug 20, 2025

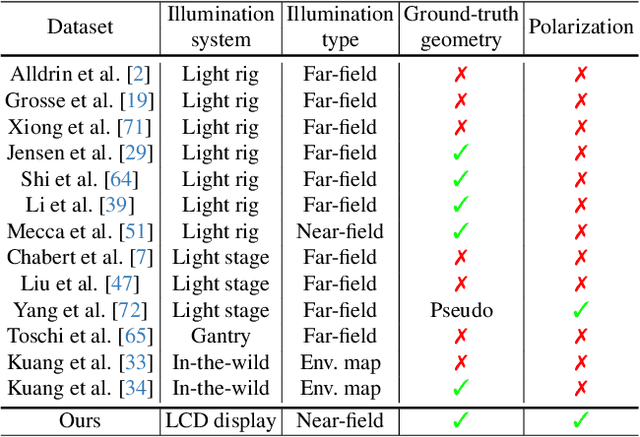

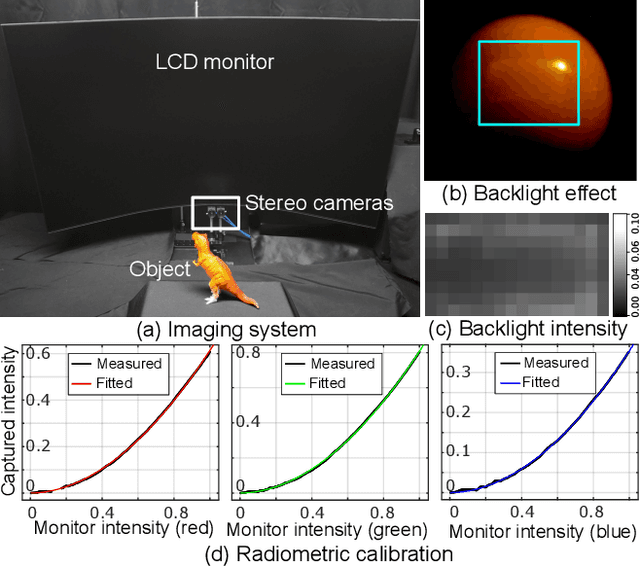

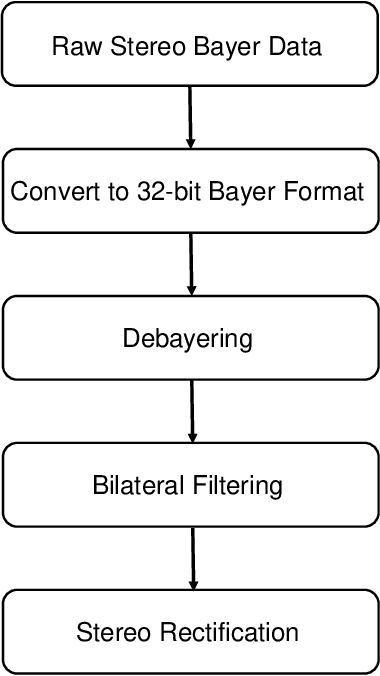

Abstract: Inverse rendering aims to reconstruct geometry and reflectance from captured images. Display-camera imaging systems offer unique advantages for this task: each pixel can easily function as a programmable point light source, and the polarized light emitted by LCD displays facilitates diffuse-specular separation. Despite these benefits, there is currently no public real-world dataset captured using display-camera systems, whereas such datasets exist for other setups such as light stages. This absence hinders the development and evaluation of display-based inverse rendering methods. In this paper, we introduce the first real-world dataset for display-based inverse rendering. To achieve this, we construct and calibrate an imaging system comprising an LCD display and stereo polarization cameras. We then capture a set of objects with diverse geometry and reflectance under one-light-at-a-time (OLAT) display patterns. We also provide high-quality ground-truth geometry. Our dataset enables the synthesis of captured images under arbitrary display patterns and different noise levels. Using this dataset, we evaluate the performance of existing photometric stereo and inverse rendering methods, and provide a simple yet effective baseline for display inverse rendering that outperforms state-of-the-art inverse rendering methods. Code and dataset are available on our project page at https://michaelcsj.github.io/DIR/
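Because light transport is linear in the light-source intensities, an image under an arbitrary display pattern can be approximated as a pattern-weighted sum of the OLAT images, with noise added afterwards. The following is a minimal sketch under stated assumptions: linear (not gamma-encoded) pixel values, images stored as flat lists, and a simple additive Gaussian noise model. The function `synthesize_display_image` and its noise model are hypothetical illustrations, not the dataset's released tooling.

```python
import random


def synthesize_display_image(olat_images, pattern, noise_std=0.0, seed=None):
    """Synthesize an image under a display pattern from OLAT captures.

    Hypothetical helper for illustration only.
    olat_images: one image per display light source; each image is a flat
                 list of linear pixel intensities.
    pattern:     per-light intensities (e.g. in [0, 1]), one per OLAT image.
    noise_std:   std. dev. of additive Gaussian noise (assumed noise model).
    """
    if len(olat_images) != len(pattern):
        raise ValueError("need one pattern weight per OLAT image")
    rng = random.Random(seed)
    out = []
    for p in range(len(olat_images[0])):
        # Linearity of light transport: the image under the pattern is the
        # weighted sum of the one-light-at-a-time images.
        value = sum(w * img[p] for w, img in zip(pattern, olat_images))
        if noise_std > 0:
            value += rng.gauss(0.0, noise_std)
        out.append(value)
    return out
```

For example, two OLAT images `[[1.0, 0.0], [0.5, 2.0]]` under the pattern `[1.0, 0.5]` give `[1.25, 1.0]` without noise. A more realistic sensor model would add signal-dependent (shot) noise rather than purely additive noise.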

Differentiable Mobile Display Photometric Stereo

Feb 07, 2025

Abstract: Display photometric stereo uses a display as a programmable light source to illuminate a scene under diverse illumination conditions. Recently, differentiable display photometric stereo (DDPS) demonstrated improved normal reconstruction accuracy by using learned display patterns. However, DDPS was limited in practicality, requiring a fixed desktop imaging setup with a polarization camera and a desktop-scale monitor. In this paper, we propose a more practical physics-based photometric stereo method, differentiable mobile display photometric stereo (DMDPS), that leverages a mobile phone equipped with a display and a camera. We overcome the limitations of using a mobile device by developing a mobile app and method that simultaneously display patterns and capture high-quality HDR images. Using this technique, we capture real-world 3D-printed objects and learn display patterns via a differentiable learning process. We demonstrate the effectiveness of DMDPS on both a 3D-printed dataset and the first dataset of fallen leaves. The leaf dataset contains reconstructed surface normals and albedos of fallen leaves that may enable future research beyond computer graphics and vision. We believe that DMDPS is a step forward for practical physics-based photometric stereo.
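The abstract does not describe how the app fuses its exposures. As a generic illustration of HDR capture, a common approach merges bracketed images into a radiance map by averaging per-exposure radiance estimates (pixel value divided by exposure time), weighting mid-range values highly and discarding clipped ones. This is a minimal sketch assuming a linear camera response, pixel values normalized to [0, 1], and known exposure times; `merge_hdr` and its thresholds are hypothetical.

```python
def merge_hdr(exposures, times, low=0.02, high=0.98):
    """Merge linear LDR captures of the same view into one HDR radiance map.

    Hypothetical helper for illustration only.
    exposures: list of images (flat lists, values in [0, 1], linear response)
    times:     exposure time of each image, in seconds
    Pixels outside [low, high] count as under-/over-exposed and get no weight.
    """
    hdr = []
    for p in range(len(exposures[0])):
        num, den = 0.0, 0.0
        for img, t in zip(exposures, times):
            v = img[p]
            if low <= v <= high:
                # Hat weight: trust mid-range values most.
                w = 1.0 - abs(2.0 * v - 1.0)
                num += w * v / t  # radiance estimate from this exposure
                den += w
        if den > 0:
            hdr.append(num / den)
        else:
            # Every sample was clipped: fall back to the shortest exposure
            # (a crude choice that suits saturated pixels best).
            i = min(range(len(times)), key=lambda k: times[k])
            hdr.append(exposures[i][p] / times[i])
    return hdr
```

With captures at 0.1 s and 0.4 s, a pixel reading 0.5 in the short exposure and clipped at 1.0 in the long one is reconstructed from the short exposure alone.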

Dual Exposure Stereo for Extended Dynamic Range 3D Imaging

Dec 03, 2024

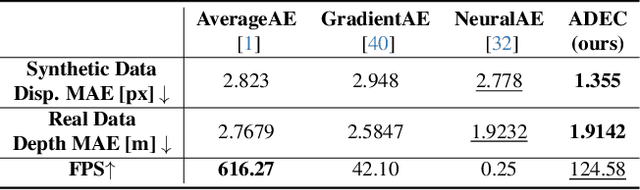

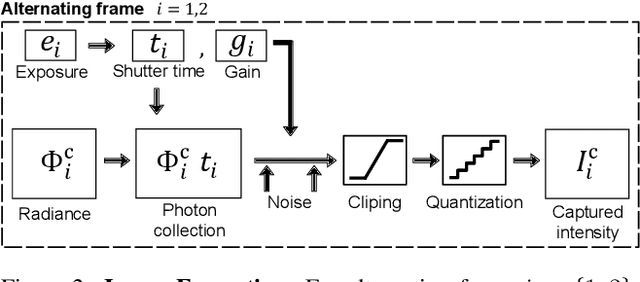

Abstract: Achieving robust stereo 3D imaging under diverse illumination conditions is an important yet challenging task, because the dynamic ranges (DRs) of cameras are significantly smaller than those of real-world scenes. As a result, the accuracy of existing stereo depth estimation methods is often compromised by under- or over-exposed images. Here, we introduce dual-exposure stereo for extended dynamic range 3D imaging. We develop an automatic dual-exposure control method that adjusts the two exposures, diverging them when the scene DR exceeds the camera DR, thereby capturing information over a broader DR. From the captured dual-exposure stereo images, we estimate depth using a motion-aware dual-exposure stereo network. To validate our method, we develop a robot-vision system, collect stereo video datasets, and generate a synthetic dataset. Our method outperforms other exposure control methods.
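The abstract does not give the control law, so the following is only a toy illustration of the stated idea, not the paper's controller. Working in stops (log2 units), two capture windows of width equal to the camera DR, offset by `delta` stops, together cover the camera DR plus `delta`. The rule below therefore diverges the exposures by exactly the excess of scene DR over camera DR, split symmetrically around a center exposure and capped so the two windows never leave a gap. The function name, the symmetric split, and the cap are all assumptions.

```python
def dual_exposure_times(t_center, scene_dr_stops, camera_dr_stops):
    """Toy rule: choose two exposure times that jointly cover the scene DR.

    Hypothetical illustration only; DRs are measured in stops (log2 units).
    """
    # Range (in stops) that a single exposure cannot capture.
    excess = max(0.0, scene_dr_stops - camera_dr_stops)
    # Two windows offset by `delta` stops cover camera_dr + delta stops;
    # cap delta at camera_dr so the windows still touch.
    delta = min(excess, camera_dr_stops)
    t_short = t_center * 2.0 ** (-delta / 2.0)
    t_long = t_center * 2.0 ** (delta / 2.0)
    return t_short, t_long
```

When the scene fits within the camera DR, both exposures stay at `t_center`. A scene exceeding the camera DR by 4 stops yields a 16x ratio between the long and short exposures.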

Differentiable Inverse Rendering with Interpretable Basis BRDFs

Nov 27, 2024

Abstract: Inverse rendering seeks to reconstruct both geometry and spatially varying BRDFs (SVBRDFs) from captured images. To address the inherent ill-posedness of inverse rendering, basis BRDF representations are commonly used, modeling SVBRDFs as spatially varying blends of a set of basis BRDFs. However, existing methods often yield basis BRDFs that lack intuitive separation and have limited scalability to scenes of varying complexity. In this paper, we introduce a differentiable inverse rendering method that produces interpretable basis BRDFs. Our approach models a scene using 2D Gaussians, where the reflectance of each Gaussian is defined by a weighted blend of basis BRDFs. We efficiently render an image from the 2D Gaussians and basis BRDFs using differentiable rasterization and impose a rendering loss with the input images. During this analysis-by-synthesis optimization process of differentiable inverse rendering, we dynamically adjust the number of basis BRDFs to fit the target scene while encouraging sparsity in the basis weights. This ensures that the reflectance of each Gaussian is represented by only a few basis BRDFs. This approach enables the reconstruction of accurate geometry and interpretable basis BRDFs that are spatially separated. Consequently, the resulting scene representation, comprising basis BRDFs and 2D Gaussians, supports physically-based novel-view relighting and intuitive scene editing.
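Evaluating a blended reflectance reduces to a normalized weighted sum of the basis BRDFs. A sparsity term can then penalize weights spread over many bases. The abstract does not specify the basis model or the regularizer. This sketch assumes a hypothetical analytic basis (Lambertian diffuse plus an approximately energy-normalized Blinn-Phong lobe, evaluated only as a function of n·h for brevity) and uses the entropy of the normalized weights as a stand-in sparsity measure.

```python
import math


def blinn_phong_basis(albedo, spec, shininess):
    """Hypothetical basis BRDF: Lambertian plus a normalized Blinn-Phong lobe."""
    def brdf(n_dot_h):
        diffuse = albedo / math.pi
        # (s + 8) / (8 pi) is a common approximate Blinn-Phong normalization.
        specular = spec * (shininess + 8.0) / (8.0 * math.pi) * max(n_dot_h, 0.0) ** shininess
        return diffuse + specular
    return brdf


def blended_brdf(weights, bases, n_dot_h):
    """Reflectance of one primitive as a normalized weighted blend of basis BRDFs."""
    total = sum(weights)
    return sum(w / total * f(n_dot_h) for w, f in zip(weights, bases))


def weight_entropy(weights):
    """Stand-in sparsity measure: 0 when one basis dominates, larger when weights spread out."""
    total = sum(weights)
    probs = [w / total for w in weights if w > 0]
    return -sum(p * math.log(p) for p in probs)
```

Adding `weight_entropy` (scaled by a small coefficient) to a rendering loss would push each primitive toward a few dominant bases, mirroring the goal the abstract describes.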

Differentiable Point-based Inverse Rendering

Dec 05, 2023

Abstract: We present differentiable point-based inverse rendering, DPIR, an analysis-by-synthesis method that processes images captured under diverse illuminations to estimate shape and spatially-varying BRDF. To this end, we adopt point-based rendering, eliminating the multiple samples per ray that volumetric rendering typically requires and thus significantly enhancing the speed of inverse rendering. To realize this idea, we devise a hybrid point-volumetric representation for geometry and a regularized basis-BRDF representation for reflectance. The hybrid geometric representation enables fast rendering through point-based splatting while retaining the geometric details and stability inherent to SDF-based representations. The regularized basis-BRDF mitigates the ill-posedness of inverse rendering stemming from limited light-view angular samples. We also propose an efficient shadow detection method using point-based shadow map rendering. Our extensive evaluations demonstrate that DPIR outperforms prior works in terms of reconstruction accuracy, computational efficiency, and memory footprint. Furthermore, our explicit point-based representation and rendering enable intuitive geometry and reflectance editing. The code will be publicly available.
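The general idea behind shadow maps can be shown on points. Project every point into the light's view and keep the minimum distance per shadow-map pixel. A point is then in shadow if it lies farther from the light than that stored minimum plus a small bias. This is a minimal sketch of classic shadow mapping, not DPIR's actual method. It assumes a point light looking down +z with a pinhole projection, splats each point into a single pixel (real splats cover a footprint), and treats points outside the map as lit. All names are hypothetical.

```python
import math


def project_to_light(point, light_pos, res=64, fov_deg=90.0):
    """Pinhole projection of a 3D point into a point light's shadow map (light looks down +z).

    Returns (u, v, distance) or None if the point is behind the light or off the map.
    """
    x, y, z = (point[i] - light_pos[i] for i in range(3))
    if z <= 0:
        return None
    f = 0.5 * res / math.tan(math.radians(fov_deg) / 2)
    u = int(math.floor(f * x / z + res / 2))
    v = int(math.floor(f * y / z + res / 2))
    if not (0 <= u < res and 0 <= v < res):
        return None
    return u, v, math.sqrt(x * x + y * y + z * z)


def shadow_mask(points, light_pos, res=64, bias=1e-3):
    """Splat points into a min-distance shadow map, then flag points occluded by closer ones."""
    projected = [project_to_light(p, light_pos, res) for p in points]
    shadow_map = {}
    for proj in projected:
        if proj is not None:
            u, v, d = proj
            shadow_map[(u, v)] = min(d, shadow_map.get((u, v), math.inf))
    # Points that do not project onto the map are treated as lit (simplifying assumption).
    return [proj is not None and proj[2] > shadow_map[(proj[0], proj[1])] + bias
            for proj in projected]
```

For a light at the origin, the point `(0, 0, 2)` is flagged as shadowed by `(0, 0, 1)`, while `(0.5, 0, 1)` lands in a different shadow-map pixel and stays lit.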

Dispersed Structured Light for Hyperspectral 3D Imaging

Nov 30, 2023

Abstract: Hyperspectral 3D imaging aims to acquire both depth and spectral information of a scene. However, existing methods are either prohibitively expensive and bulky or compromise on spectral and depth accuracy. In this work, we present Dispersed Structured Light (DSL), a cost-effective and compact method for accurate hyperspectral 3D imaging. DSL modifies a traditional projector-camera system by placing a sub-millimeter-thick diffraction grating film in front of the projector. The grating disperses structured light according to wavelength. To utilize the dispersed structured light, we devise a model for dispersive projection image formation and a per-pixel hyperspectral 3D reconstruction method. We validate DSL by instantiating a compact experimental prototype. DSL achieves a spectral accuracy of 18.8 nm full-width half-maximum (FWHM) and a depth error of 1 mm. We demonstrate that DSL outperforms prior work on practical hyperspectral 3D imaging. DSL promises accurate and practical hyperspectral 3D imaging for diverse application domains, including computer vision and graphics, cultural heritage, geology, and biology.
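The wavelength dependence comes from diffraction. At normal incidence, the grating equation d sin θ_m = m λ (groove spacing d, diffraction order m) assigns each wavelength its own diffraction angle, so each non-zero order of the projected pattern is spread into wavelength-dependent copies. The sketch below evaluates that equation. The groove density used in the example (500 lines/mm) is a hypothetical value, not the DSL prototype's grating.

```python
import math


def diffraction_angle_deg(wavelength_nm, lines_per_mm, order=1):
    """Diffraction angle in degrees at normal incidence, from d * sin(theta) = m * lambda.

    Returns None when the requested order does not propagate (|sin(theta)| > 1).
    """
    pitch_nm = 1e6 / lines_per_mm  # groove spacing d in nanometres
    s = order * wavelength_nm / pitch_nm
    if abs(s) > 1:
        return None
    return math.degrees(math.asin(s))
```

With 500 lines/mm (d = 2000 nm), first-order light at 500 nm leaves at about 14.48 degrees, and longer wavelengths are deflected further. The zeroth order is undeviated for all wavelengths.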

Differentiable Display Photometric Stereo

Jun 28, 2023

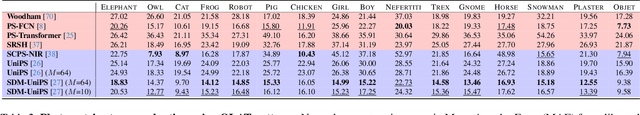

Abstract: Photometric stereo leverages variations in illumination conditions to reconstruct per-pixel surface normals. Display photometric stereo, which employs a conventional monitor as an illumination source, has the potential to overcome limitations often encountered in bulky and difficult-to-use conventional setups. In this paper, we introduce Differentiable Display Photometric Stereo (DDPS), a method designed to achieve high-fidelity normal reconstruction using an off-the-shelf monitor and camera. DDPS addresses a critical yet often neglected challenge in photometric stereo: the optimization of display patterns for enhanced normal reconstruction. We present a differentiable framework that couples basis-illumination image formation with a photometric-stereo reconstruction method. This facilitates the learning of display patterns that lead to high-quality normal reconstruction through automatic differentiation. To address the synthetic-real domain gap inherent in end-to-end optimization, we propose the use of a real-world photometric-stereo training dataset composed of 3D-printed objects. Moreover, to reduce the ill-posed nature of photometric stereo, we exploit the linearly polarized light emitted from the monitor to optically separate diffuse and specular reflections in the captured images. We demonstrate that DDPS allows for learning display patterns optimized for a target configuration and is robust to initialization. We assess DDPS on 3D-printed objects with ground-truth normals and diverse real-world objects, validating that DDPS enables effective photometric-stereo reconstruction.
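The abstract does not specify which photometric-stereo solver DDPS couples with its image formation model. As background, classic Lambertian photometric stereo models each diffuse intensity as I_k = ρ (n · l_k). It recovers g = ρ n per pixel by least squares from three or more lights, then splits g into albedo and unit normal. This is a minimal sketch under stated assumptions: distant (directional) lights, diffuse-only intensities (such as those obtained after polarization-based separation), and no shadowed measurements. The helper names are hypothetical.

```python
import math


def solve3(a, b):
    """Solve the 3x3 linear system a @ x = b with Cramer's rule."""
    def det(m):
        return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
                - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
                + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))
    d = det(a)
    if abs(d) < 1e-12:
        raise ValueError("light directions are degenerate")
    x = []
    for col in range(3):
        m = [row[:] for row in a]
        for r in range(3):
            m[r][col] = b[r]  # replace one column with the right-hand side
        x.append(det(m) / d)
    return x


def lambertian_ps(intensities, light_dirs):
    """Estimate (albedo, unit normal) for one pixel from K >= 3 diffuse intensities.

    Model: I_k = albedo * dot(n, l_k), solved via the normal equations
    (L^T L) g = L^T I with g = albedo * n. Shadowed samples are not handled.
    """
    ata = [[sum(l[i] * l[j] for l in light_dirs) for j in range(3)] for i in range(3)]
    atb = [sum(l[i] * val for l, val in zip(light_dirs, intensities)) for i in range(3)]
    g = solve3(ata, atb)
    albedo = math.sqrt(sum(c * c for c in g))
    return albedo, [c / albedo for c in g]
```

For a surface facing the camera with albedo 0.8, four lights such as `(0, 0, 1)`, `(0.6, 0, 0.8)`, `(0, 0.6, 0.8)`, and `(-0.6, 0, 0.8)` yield intensities `0.8, 0.64, 0.64, 0.64`, from which the sketch recovers the albedo and the normal `(0, 0, 1)`.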
