Seungwoo Yoon

Learned split-spectrum metalens for obstruction-free broadband imaging in the visible

Jan 27, 2026

Abstract: Obstructions such as raindrops, fences, or dust degrade captured images, especially when mechanical cleaning is infeasible. Conventional solutions to obstructions rely on a bulky compound optics array or computational inpainting, which compromise compactness or fidelity. Metalenses composed of subwavelength meta-atoms promise compact imaging, but simultaneous achievement of broadband and obstruction-free imaging remains a challenge, since a metalens that images distant scenes across a broadband spectrum cannot properly defocus near-depth occlusions. Here, we introduce a learned split-spectrum metalens that enables broadband obstruction-free imaging. Our approach divides the spectrum of each RGB channel into pass and stop bands with multi-band spectral filtering and learns the metalens to focus light from far objects through pass bands, while filtering focused near-depth light through stop bands. This optical signal is further enhanced using a neural network. Our learned split-spectrum metalens achieves broadband and obstruction-free imaging with relative PSNR gains of 32.29% and improves object detection and semantic segmentation accuracies with absolute gains of +13.54% mAP, +48.45% IoU, and +20.35% mIoU over a conventional hyperbolic design. This promises robust obstruction-free sensing and vision for space-constrained systems, such as mobile robots, drones, and endoscopes.

Decomposing Complex Visual Comprehension into Atomic Visual Skills for Vision Language Models

May 26, 2025

Abstract: Recent Vision-Language Models (VLMs) have demonstrated impressive multimodal comprehension and reasoning capabilities, yet they often struggle with trivially simple visual tasks. In this work, we focus on the domain of basic 2D Euclidean geometry and systematically categorize the fundamental, indivisible visual perception skills, which we refer to as atomic visual skills. We then introduce the Atomic Visual Skills Dataset (AVSD) for evaluating VLMs on the atomic visual skills. Using AVSD, we benchmark state-of-the-art VLMs and find that they struggle with these tasks, even though the tasks are trivial for adult humans. Our findings highlight the need for purpose-built datasets to train and evaluate VLMs on atomic, rather than composite, visual perception tasks.

Differentiable Mobile Display Photometric Stereo

Feb 07, 2025

Abstract: Display photometric stereo uses a display as a programmable light source to illuminate a scene with diverse illumination conditions. Recently, differentiable display photometric stereo (DDPS) demonstrated improved normal reconstruction accuracy by using learned display patterns. However, DDPS faced limitations in practicality, requiring a fixed desktop imaging setup using a polarization camera and a desktop-scale monitor. In this paper, we propose a more practical physics-based photometric stereo, differentiable mobile display photometric stereo (DMDPS), that leverages a mobile phone consisting of a display and a camera. We overcome the limitations of using a mobile device by developing a mobile app and method that simultaneously displays patterns and captures high-quality HDR images. Using this technique, we capture real-world 3D-printed objects and learn display patterns via a differentiable learning process. We demonstrate the effectiveness of DMDPS on both a 3D printed dataset and a first dataset of fallen leaves. The leaf dataset contains reconstructed surface normals and albedos of fallen leaves that may enable future research beyond computer graphics and vision. We believe that DMDPS takes a step forward for practical physics-based photometric stereo.

Dense Dispersed Structured Light for Hyperspectral 3D Imaging of Dynamic Scenes

Dec 02, 2024

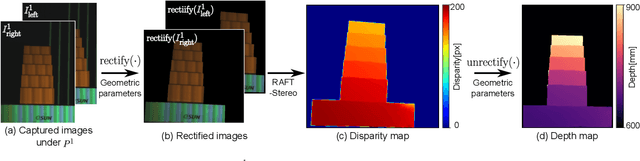

Abstract: Hyperspectral 3D imaging captures both depth maps and hyperspectral images, enabling comprehensive geometric and material analysis. Recent methods achieve high spectral and depth accuracy; however, they either require long acquisition times, often over several minutes, or rely on large, expensive systems, restricting their use to static scenes. We present Dense Dispersed Structured Light (DDSL), an accurate hyperspectral 3D imaging method for dynamic scenes that utilizes stereo RGB cameras and an RGB projector equipped with an affordable diffraction grating film. We design spectrally multiplexed DDSL patterns that significantly reduce the number of required projector patterns, thereby accelerating acquisition speed. Additionally, we formulate an image formation model and a reconstruction method to estimate a hyperspectral image and depth map from captured stereo images. We experimentally demonstrate that DDSL, the first practical and accurate hyperspectral 3D imaging method for dynamic scenes, achieves a spectral resolution of 15.5 nm full width at half maximum (FWHM), a depth error of 4 mm, and a frame rate of 6.6 fps.

Differentiable Display Photometric Stereo

Jun 28, 2023

Abstract: Photometric stereo leverages variations in illumination conditions to reconstruct per-pixel surface normals. The concept of display photometric stereo, which employs a conventional monitor as an illumination source, has the potential to overcome limitations often encountered in bulky and difficult-to-use conventional setups. In this paper, we introduce Differentiable Display Photometric Stereo (DDPS), a method designed to achieve high-fidelity normal reconstruction using an off-the-shelf monitor and camera. DDPS addresses a critical yet often neglected challenge in photometric stereo: the optimization of display patterns for enhanced normal reconstruction. We present a differentiable framework that couples basis-illumination image formation with a photometric-stereo reconstruction method. This facilitates the learning of display patterns that lead to high-quality normal reconstruction through automatic differentiation. Addressing the synthetic-real domain gap inherent in end-to-end optimization, we propose the use of a real-world photometric-stereo training dataset composed of 3D-printed objects. Moreover, to reduce the ill-posed nature of photometric stereo, we exploit the linearly polarized light emitted from the monitor to optically separate diffuse and specular reflections in the captured images. We demonstrate that DDPS allows for learning display patterns optimized for a target configuration and is robust to initialization. We assess DDPS on 3D-printed objects with ground-truth normals and diverse real-world objects, validating that DDPS enables effective photometric-stereo reconstruction.
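For readers unfamiliar with the underlying idea, the following is a minimal sketch of classic Lambertian photometric stereo for a single pixel. It is not the DDPS pipeline: it assumes known distant light directions, a purely diffuse surface, and no shadows, and the names `solve3` and `photometric_stereo_pixel` are hypothetical helpers introduced only for this illustration. Under those assumptions each observed intensity is `albedo * dot(light, normal)`, so the scaled normal follows from a least-squares fit.

```python
import math


def solve3(A, b):
    """Solve the 3x3 linear system A x = b using Cramer's rule."""
    def det(m):
        return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
                - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
                + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

    d = det(A)
    if abs(d) < 1e-12:
        raise ValueError("light directions do not span 3D space")
    x = []
    for k in range(3):
        # Replace column k of A with b, then take the determinant ratio.
        Ak = [[b[i] if j == k else A[i][j] for j in range(3)] for i in range(3)]
        x.append(det(Ak) / d)
    return x


def photometric_stereo_pixel(lights, intensities):
    """Least-squares Lambertian fit for one pixel (illustrative only).

    lights: list of unit 3-vectors pointing toward each light source.
    intensities: observed pixel value under each light.
    Returns (unit normal, albedo).
    """
    # Normal equations (L^T L) g = L^T I, where g = albedo * normal.
    LtL = [[sum(l[i] * l[j] for l in lights) for j in range(3)] for i in range(3)]
    LtI = [sum(l[i] * v for l, v in zip(lights, intensities)) for i in range(3)]
    g = solve3(LtL, LtI)
    albedo = math.sqrt(sum(c * c for c in g))
    if albedo == 0.0:
        raise ValueError("pixel is black under all lights; normal is undefined")
    return [c / albedo for c in g], albedo


# Synthetic check: render a pixel with a known normal and albedo, then recover them.
true_normal = [0.6, 0.0, 0.8]
true_albedo = 0.5
lights = [[0.0, 0.0, 1.0], [0.6, 0.0, 0.8], [0.0, 0.6, 0.8], [-0.6, 0.0, 0.8]]
intensities = [true_albedo * sum(a * b for a, b in zip(l, true_normal)) for l in lights]
normal, albedo = photometric_stereo_pixel(lights, intensities)
```

In this fixed-light formulation the illumination conditions are given. The abstract above concerns the complementary question of which display patterns to show, which this sketch does not address.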
