Sebastijan Dumančić

Revisiting Landmarks: Learning from Previous Plans to Generalize over Problem Instances

Aug 29, 2025

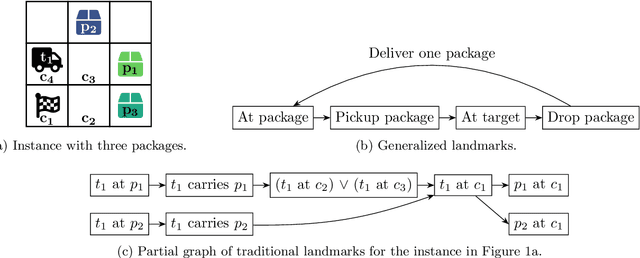

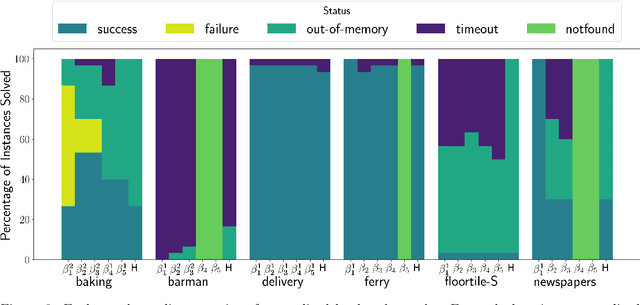

Abstract:We propose a new framework for discovering landmarks that automatically generalize across a domain. These generalized landmarks are learned from a set of solved instances and describe intermediate goals for planning problems where traditional landmark extraction algorithms fall short. Our generalized landmarks extend beyond the predicates of a domain by using state functions that are independent of the objects of a specific problem and apply to all similar objects, thus capturing repetition. Based on these functions, we construct a directed generalized landmark graph that defines the landmark progression, including loop possibilities for repetitive subplans. We show how to use this graph in a heuristic to solve new problem instances of the same domain. Our results show that the generalized landmark graphs learned from a few small instances are also effective for larger instances in the same domain. If a loop that indicates repetition is identified, we see a significant improvement in heuristic performance over the baseline. Generalized landmarks capture domain information that is interpretable and useful to an automated planner. This information can be discovered from a small set of plans for the same domain.

Learning Logical Rules using Minimum Message Length

Aug 08, 2025

Abstract:Unifying probabilistic and logical learning is a key challenge in AI. We introduce a Bayesian inductive logic programming approach that learns minimum message length programs from noisy data. Our approach balances hypothesis complexity and data fit through priors, which explicitly favour more general programs, and a likelihood that favours accurate programs. Our experiments on several domains, including game playing and drug design, show that our method significantly outperforms previous methods, notably those that learn minimum description length programs. Our results also show that our approach is data-efficient and insensitive to example balance, including the ability to learn from exclusively positive examples.

Learning Logic Programs by Discovering Higher-Order Abstractions

Aug 16, 2023Abstract:Discovering novel abstractions is important for human-level AI. We introduce an approach to discover higher-order abstractions, such as map, filter, and fold. We focus on inductive logic programming, which induces logic programs from examples and background knowledge. We introduce the higher-order refactoring problem, where the goal is to compress a logic program by introducing higher-order abstractions. We implement our approach in STEVIE, which formulates the higher-order refactoring problem as a constraint optimisation problem. Our experimental results on multiple domains, including program synthesis and visual reasoning, show that, compared to no refactoring, STEVIE can improve predictive accuracies by 27% and reduce learning times by 47%. We also show that STEVIE can discover abstractions that transfer to different domains

A Divide-Align-Conquer Strategy for Program Synthesis

Jan 08, 2023

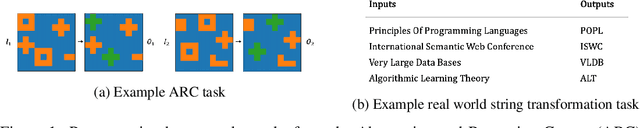

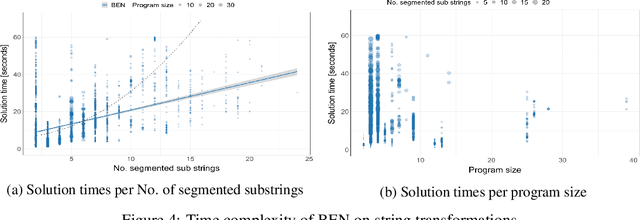

Abstract:A major bottleneck in search-based program synthesis is the exponentially growing search space which makes learning large programs intractable. Humans mitigate this problem by leveraging the compositional nature of the real world: In structured domains, a logical specification can often be decomposed into smaller, complementary solution programs. We show that compositional segmentation can be applied in the programming by examples setting to divide the search for large programs across multiple smaller program synthesis problems. For each example, we search for a decomposition into smaller units which maximizes the reconstruction accuracy in the output under a latent task program. A structural alignment of the constituent parts in the input and output leads to pairwise correspondences used to guide the program synthesis search. In order to align the input/output structures, we make use of the Structure-Mapping Theory (SMT), a formal model of human analogical reasoning which originated in the cognitive sciences. We show that decomposition-driven program synthesis with structural alignment outperforms Inductive Logic Programming (ILP) baselines on string transformation tasks even with minimal knowledge priors. Unlike existing methods, the predictive accuracy of our agent monotonically increases for additional examples and achieves an average time complexity of $\mathcal{O}(m)$ in the number $m$ of partial programs for highly structured domains such as strings. We extend this method to the complex setting of visual reasoning in the Abstraction and Reasoning Corpus (ARC) for which ILP methods were previously infeasible.

From Statistical Relational to Neural Symbolic Artificial Intelligence: a Survey

Aug 25, 2021

Abstract:Neural-symbolic and statistical relational artificial intelligence both integrate frameworks for learning with logical reasoning. This survey identifies several parallels across seven different dimensions between these two fields. These cannot only be used to characterize and position neural-symbolic artificial intelligence approaches but also to identify a number of directions for further research.

Inductive logic programming at 30

Feb 21, 2021

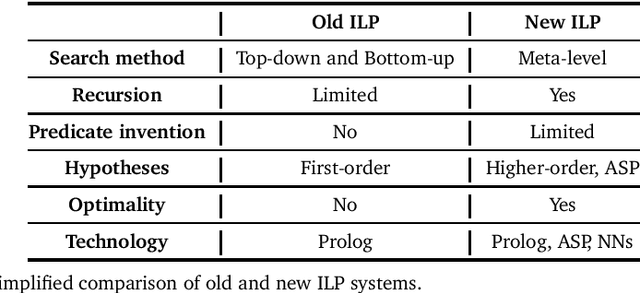

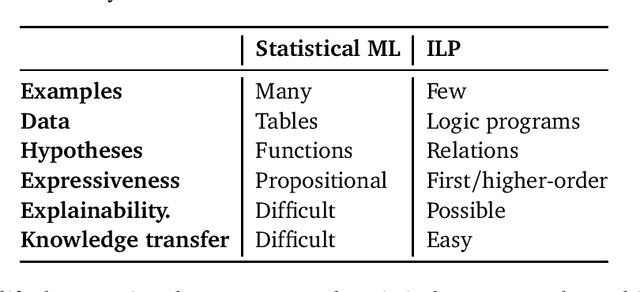

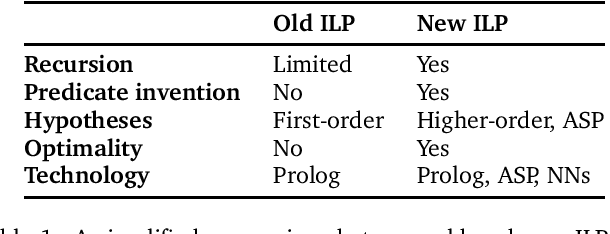

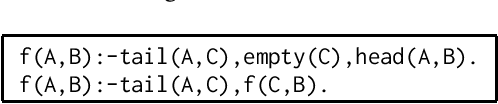

Abstract:Inductive logic programming (ILP) is a form of logic-based machine learning. The goal of ILP is to induce a hypothesis (a logic program) that generalises given training examples and background knowledge. As ILP turns 30, we survey recent work in the field. In this survey, we focus on (i) new meta-level search methods, (ii) techniques for learning recursive programs that generalise from few examples, (iii) new approaches for predicate invention, and (iv) the use of different technologies, notably answer set programming and neural networks. We conclude by discussing some of the current limitations of ILP and discuss directions for future research.

Inductive logic programming at 30: a new introduction

Aug 18, 2020

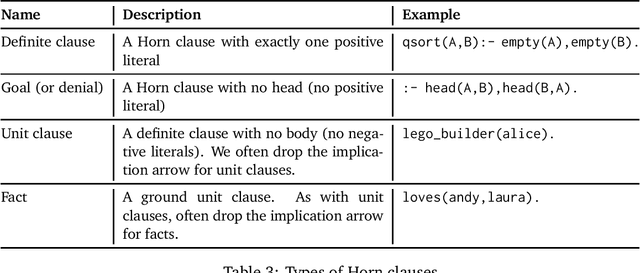

Abstract:Inductive logic programming (ILP) is a form of machine learning. The goal of ILP is to induce a logic program (a set of logical rules) that generalises training examples. As ILP approaches 30, we provide a new introduction to the field. We introduce the necessary logical notation and the main ILP learning settings. We describe the main building blocks of an ILP system. We compare several ILP systems on several dimensions. We detail four systems (Aleph, TILDE, ASPAL, and Metagol). We contrast ILP with other forms of machine learning. Finally, we summarise the current limitations and outline promising directions for future research.

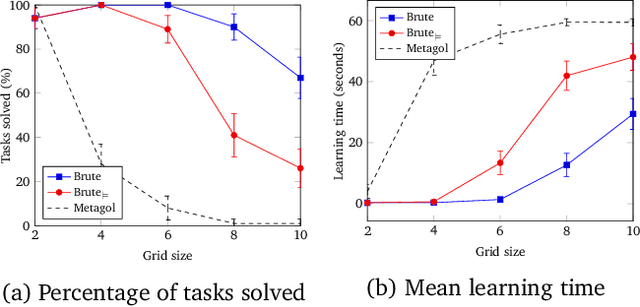

Learning large logic programs by going beyond entailment

Apr 22, 2020

Abstract:A major challenge in inductive logic programming (ILP) is learning large programs. We argue that a key limitation of existing systems is that they use entailment to guide the hypothesis search. This approach is limited because entailment is a binary decision: a hypothesis either entails an example or does not, and there is no intermediate position. To address this limitation, we go beyond entailment and use \emph{example-dependent} loss functions to guide the search, where a hypothesis can partially cover an example. We implement our idea in Brute, a new ILP system which uses best-first search, guided by an example-dependent loss function, to incrementally build programs. Our experiments on three diverse program synthesis domains (robot planning, string transformations, and ASCII art), show that Brute can substantially outperform existing ILP systems, both in terms of predictive accuracies and learning times, and can learn programs 20 times larger than state-of-the-art systems.

From Statistical Relational to Neuro-Symbolic Artificial Intelligence

Mar 24, 2020

Abstract:Neuro-symbolic and statistical relational artificial intelligence both integrate frameworks for learning with logical reasoning. This survey identifies several parallels across seven different dimensions between these two fields. These cannot only be used to characterize and position neuro-symbolic artificial intelligence approaches but also to identify a number of directions for further research.

Turning 30: New Ideas in Inductive Logic Programming

Mar 01, 2020

Abstract:Common criticisms of state-of-the-art machine learning include poor generalisation, a lack of interpretability, and a need for large amounts of training data. We survey recent work in inductive logic programming (ILP), a form of machine learning that induces logic programs from data, which has shown promise at addressing these limitations. We focus on new methods for learning recursive programs that generalise from few examples, a shift from using hand-crafted background knowledge to \emph{learning} background knowledge, and the use of different technologies, notably answer set programming and neural networks. As ILP approaches 30, we also discuss directions for future research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge