Céline Hocquette

An Empirical Comparison of Cost Functions in Inductive Logic Programming

Mar 10, 2025

Abstract:Recent inductive logic programming (ILP) approaches learn optimal hypotheses. An optimal hypothesis minimises a given cost function on the training data. There are many cost functions, such as minimising training error, textual complexity, or the description length of hypotheses. However, selecting an appropriate cost function remains a key question. To address this gap, we extend a constraint-based ILP system to learn optimal hypotheses for seven standard cost functions. We then empirically compare the generalisation error of optimal hypotheses induced under these standard cost functions. Our results on over 20 domains and 1000 tasks, including game playing, program synthesis, and image reasoning, show that, while no cost function consistently outperforms the others, minimising training error or description length has the best overall performance. Notably, our results indicate that minimising the size of hypotheses does not always reduce generalisation error.

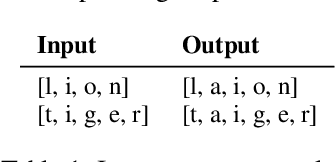

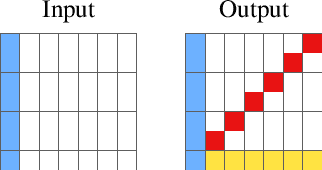

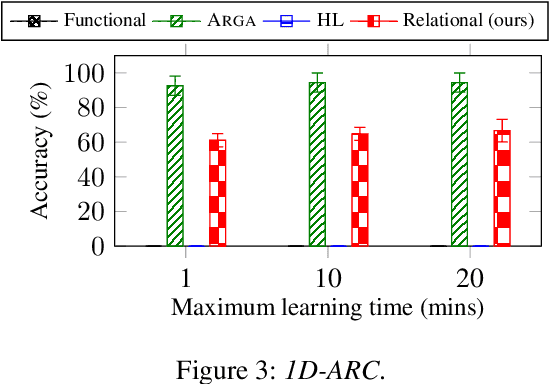
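The cost-function comparison described in the abstract above can be illustrated with a small sketch. It is not the paper's system: the `Hypothesis` record, the three cost functions, and the candidate hypotheses are all hypothetical, and description length is assumed here to be hypothesis size plus the number of misclassified training examples, which is only one common simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """Hypothetical summary of a candidate hypothesis on the training data."""
    name: str
    size: int             # assumed size measure, e.g. number of literals
    false_positives: int  # negative training examples wrongly covered
    false_negatives: int  # positive training examples not covered


def training_error(h: Hypothesis) -> int:
    # Cost = number of misclassified training examples.
    return h.false_positives + h.false_negatives


def hypothesis_size(h: Hypothesis) -> int:
    # Cost = textual size only, ignoring errors.
    return h.size


def description_length(h: Hypothesis) -> int:
    # Assumed simplification: size of the hypothesis plus size of its errors.
    return h.size + h.false_positives + h.false_negatives


def best(hypotheses, cost):
    # An optimal hypothesis minimises the given cost function.
    return min(hypotheses, key=cost)


candidates = [
    Hypothesis("small_but_wrong", size=2, false_positives=3, false_negatives=2),
    Hypothesis("medium", size=5, false_positives=1, false_negatives=0),
    Hypothesis("large_exact", size=12, false_positives=0, false_negatives=0),
]

for cost in (training_error, hypothesis_size, description_length):
    print(cost.__name__, "->", best(candidates, cost).name)
```

On these made-up candidates each cost function selects a different hypothesis, which is why the choice of cost function can affect what is learned and how well it generalises.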

Relational decomposition for program synthesis

Aug 22, 2024

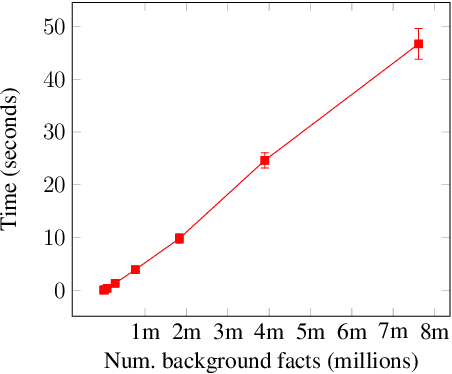

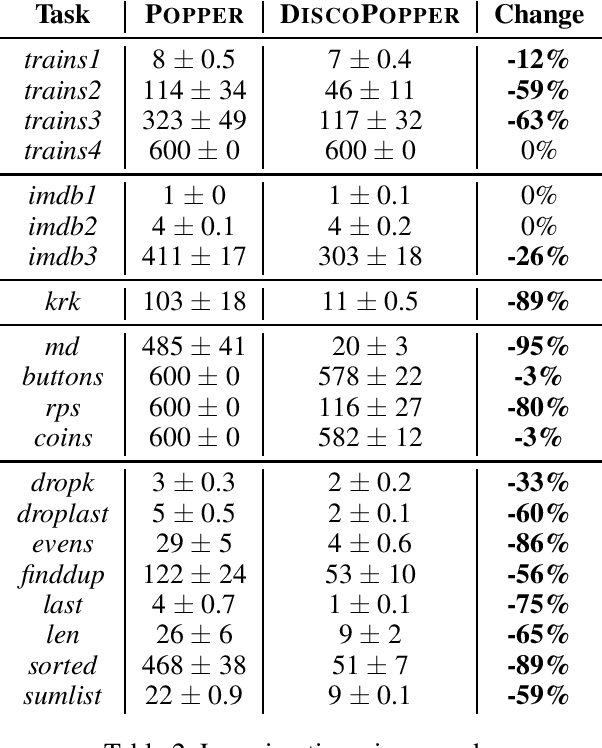

Abstract:We introduce a novel approach to program synthesis that decomposes complex functional tasks into simpler relational synthesis sub-tasks. We demonstrate the effectiveness of our approach using an off-the-shelf inductive logic programming (ILP) system on three challenging datasets. Our results show that (i) a relational representation can outperform a functional one, and (ii) an off-the-shelf ILP system with a relational encoding can outperform domain-specific approaches.

Can humans teach machines to code?

Apr 30, 2024

Abstract:The goal of inductive program synthesis is for a machine to automatically generate a program from user-supplied examples of the desired behaviour of the program. A key underlying assumption is that humans can provide examples of sufficient quality to teach a concept to a machine. However, as far as we are aware, this assumption lacks both empirical and theoretical support. To address this limitation, we explore the question 'Can humans teach machines to code?'. To answer this question, we conduct a study where we ask humans to generate examples for six programming tasks, such as finding the maximum element of a list. We compare the performance of a program synthesis system trained on (i) human-provided examples, (ii) randomly sampled examples, and (iii) expert-provided examples. Our results show that, on most of the tasks, non-expert participants did not provide sufficient examples for a program synthesis system to learn an accurate program. Our results also show that non-experts need to provide more examples than both randomly sampled and expert-provided examples.

Learning logic programs by finding minimal unsatisfiable subprograms

Jan 29, 2024

Abstract:The goal of inductive logic programming (ILP) is to search for a logic program that generalises training examples and background knowledge. We introduce an ILP approach that identifies minimal unsatisfiable subprograms (MUSPs). We show that finding MUSPs allows us to efficiently and soundly prune the search space. Our experiments on multiple domains, including program synthesis and game playing, show that our approach can reduce learning times by 99%.

Learning big logical rules by joining small rules

Jan 29, 2024

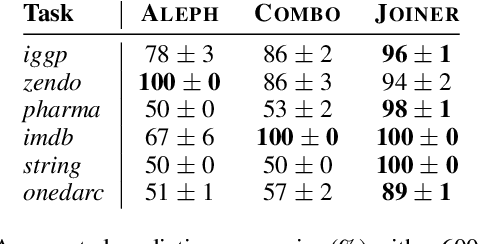

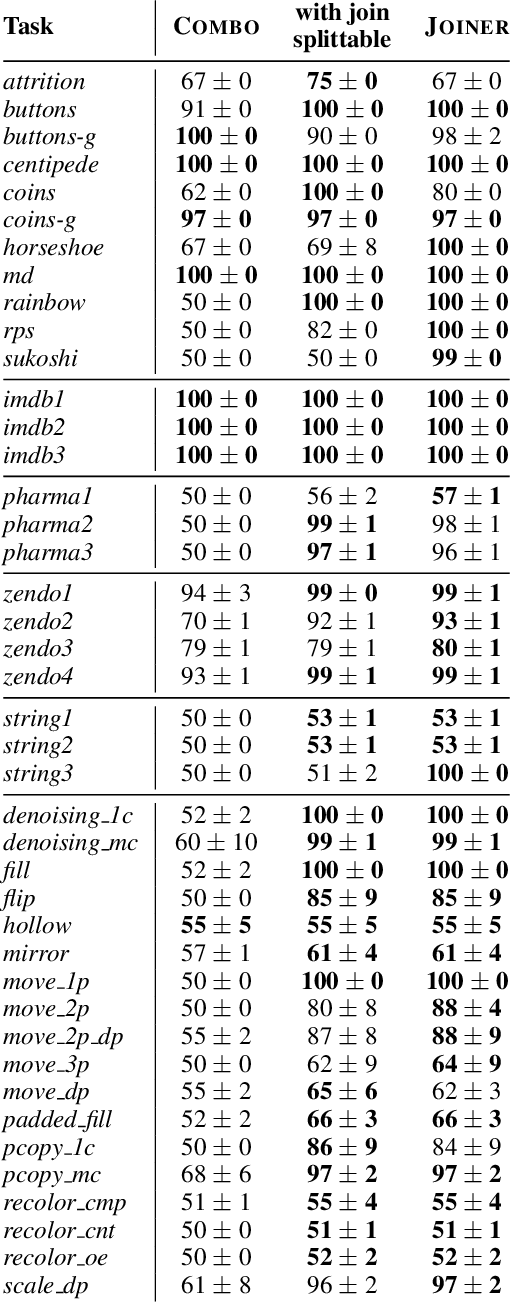

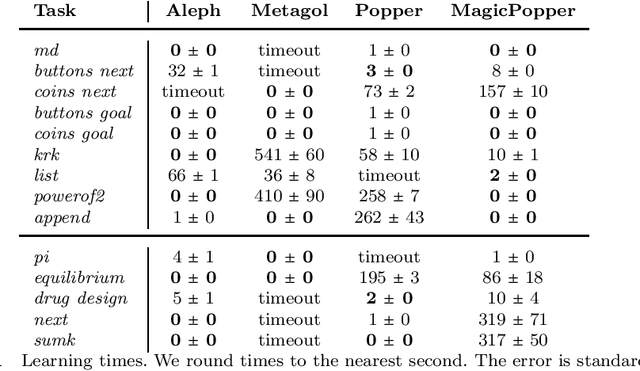

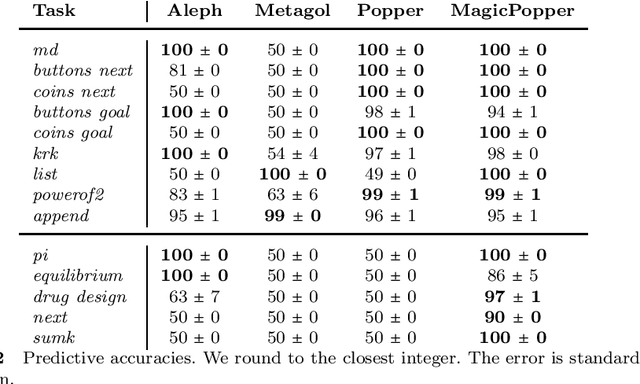

Abstract:A major challenge in inductive logic programming is learning big rules. To address this challenge, we introduce an approach where we join small rules to learn big rules. We implement our approach in a constraint-driven system and use constraint solvers to efficiently join rules. Our experiments on many domains, including game playing and drug design, show that our approach can (i) learn rules with more than 100 literals, and (ii) drastically outperform existing approaches in terms of predictive accuracies.

Learning MDL logic programs from noisy data

Aug 18, 2023

Abstract:Many inductive logic programming approaches struggle to learn programs from noisy data. To overcome this limitation, we introduce an approach that learns minimal description length programs from noisy data, including recursive programs. Our experiments on several domains, including drug design, game playing, and program synthesis, show that our approach can outperform existing approaches in terms of predictive accuracies and scale to moderate amounts of noise.

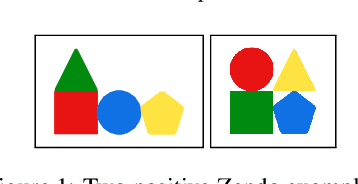

Learning Logic Programs by Discovering Higher-Order Abstractions

Aug 16, 2023

Abstract:Discovering novel abstractions is important for human-level AI. We introduce an approach to discover higher-order abstractions, such as map, filter, and fold. We focus on inductive logic programming, which induces logic programs from examples and background knowledge. We introduce the higher-order refactoring problem, where the goal is to compress a logic program by introducing higher-order abstractions. We implement our approach in STEVIE, which formulates the higher-order refactoring problem as a constraint optimisation problem. Our experimental results on multiple domains, including program synthesis and visual reasoning, show that, compared to no refactoring, STEVIE can improve predictive accuracies by 27% and reduce learning times by 47%. We also show that STEVIE can discover abstractions that transfer to different domains.

Relational program synthesis with numerical reasoning

Oct 04, 2022

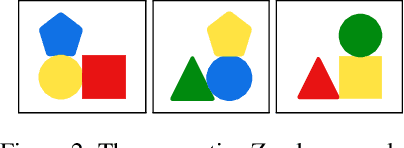

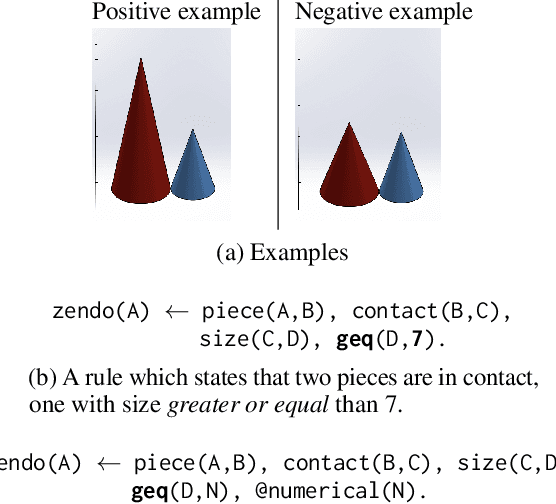

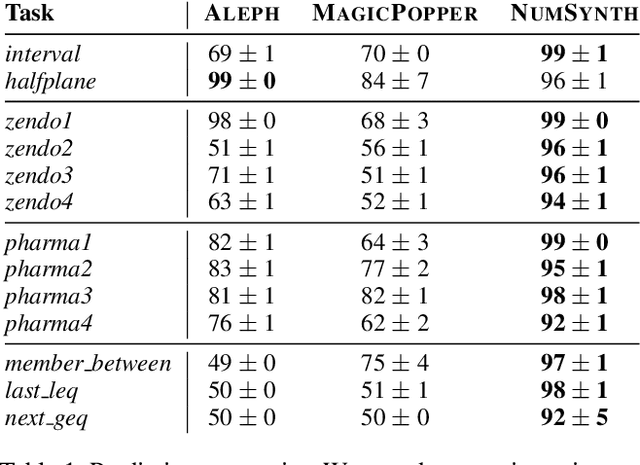

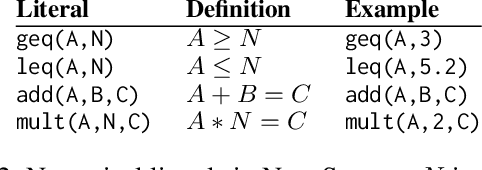

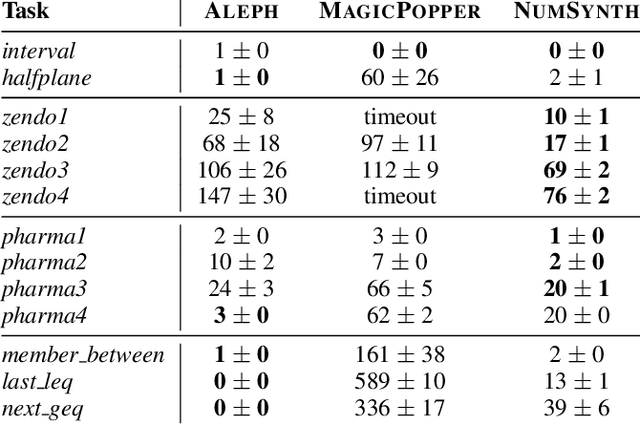

Abstract:Program synthesis approaches struggle to learn programs with numerical values. An especially difficult problem is learning continuous values over multiple examples, such as intervals. To overcome this limitation, we introduce an inductive logic programming approach which combines relational learning with numerical reasoning. Our approach, which we call NUMSYNTH, uses satisfiability modulo theories solvers to efficiently learn programs with numerical values. Our approach can identify numerical values in linear arithmetic fragments, such as real difference logic, and from infinite domains, such as real numbers or integers. Our experiments on four diverse domains, including game playing and program synthesis, show that our approach can (i) learn programs with numerical values from linear arithmetical reasoning, and (ii) outperform existing approaches in terms of predictive accuracies and learning times.

Learning programs with magic values

Aug 05, 2022

Abstract:A magic value in a program is a constant symbol that is essential for the execution of the program but has no clear explanation for its choice. Learning programs with magic values is difficult for existing program synthesis approaches. To overcome this limitation, we introduce an inductive logic programming approach to efficiently learn programs with magic values. Our experiments on diverse domains, including program synthesis, drug design, and game playing, show that our approach can (i) outperform existing approaches in terms of predictive accuracies and learning times, (ii) learn magic values from infinite domains, such as the value of pi, and (iii) scale to domains with millions of constant symbols.

Learning logic programs by discovering where not to search

Feb 20, 2022

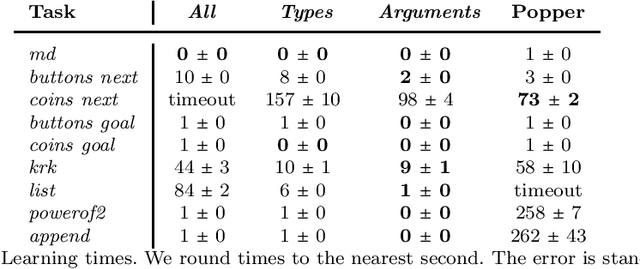

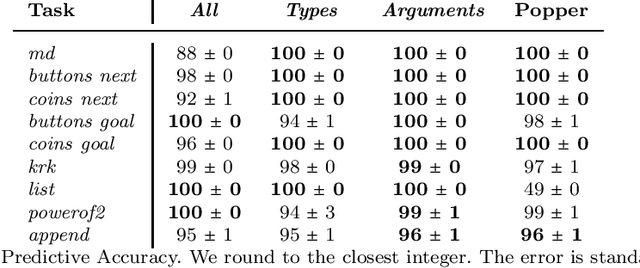

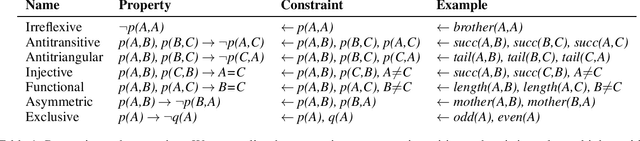

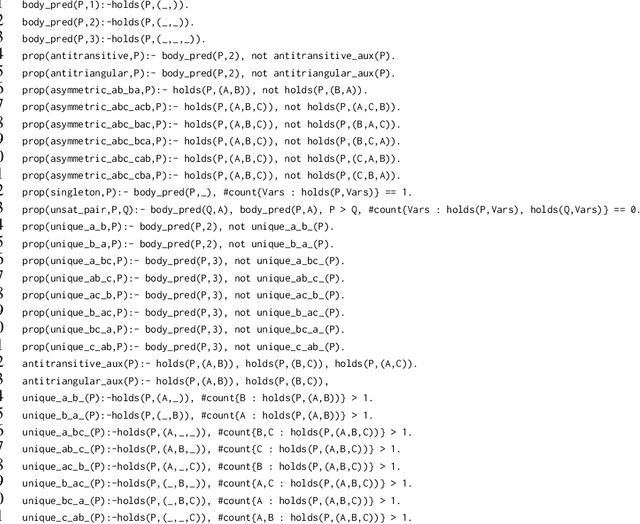

Abstract:The goal of inductive logic programming (ILP) is to search for a hypothesis that generalises training examples and background knowledge (BK). To improve performance, we introduce an approach that, before searching for a hypothesis, first discovers 'where not to search'. We use given BK to discover constraints on hypotheses, such as that a number cannot be both even and odd. We use the constraints to bootstrap a constraint-driven ILP system. Our experiments on multiple domains (including program synthesis and inductive general game playing) show that our approach can substantially reduce learning times.
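The even/odd example in the abstract above can be illustrated with a toy sketch. It is not the paper's method: the function `mutually_exclusive_pairs` and the dictionary encoding of the BK are hypothetical, and treating two predicates as mutually exclusive because they share no constants in a finite BK is an assumption that only makes sense under a closed-world reading of that BK.

```python
from itertools import combinations


def mutually_exclusive_pairs(bk):
    """Return pairs of unary predicates that never hold for the same constant.

    `bk` is a hypothetical encoding of background knowledge: it maps each
    unary predicate name to the set of constants for which it holds.
    """
    return [
        (p, q)
        for p, q in combinations(sorted(bk), 2)
        if bk[p].isdisjoint(bk[q])
    ]


# Made-up background knowledge over the constants 0..5.
bk = {
    "even": {0, 2, 4},
    "odd": {1, 3, 5},
    "positive": {1, 2, 3, 4, 5},
}

for p, q in mutually_exclusive_pairs(bk):
    # Each pair suggests a constraint: no rule body should require both.
    print(f"prune rules containing both {p}(X) and {q}(X)")
```

A learner could use such pairs to skip any candidate rule whose body contains both literals on the same variable, since that rule could never be satisfied.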
