Sasskia Brüers

Event-Based Eye Tracking. AIS 2024 Challenge Survey

Apr 17, 2024

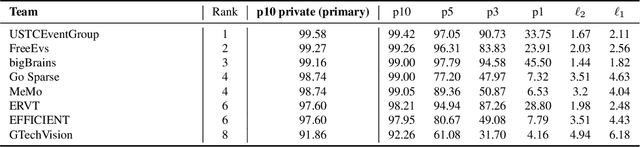

Abstract: This survey reviews the AIS 2024 Event-Based Eye Tracking (EET) Challenge. The challenge task is to process eye movements recorded with event cameras and predict the pupil center of the eye. The challenge emphasizes efficient eye tracking with event cameras, aiming for a good trade-off between task accuracy and efficiency. During the challenge period, 38 participants registered for the Kaggle competition, and 8 teams submitted a challenge factsheet. The novel and diverse methods from the submitted factsheets are reviewed and analyzed in this survey to advance future event-based eye tracking research.

A Lightweight Spatiotemporal Network for Online Eye Tracking with Event Camera

Apr 13, 2024

Abstract: Event-based data are commonly encountered in edge computing environments where efficiency and low latency are critical. To interface with such data and leverage their rich temporal features, we propose a causal spatiotemporal convolutional network. This solution targets efficient implementation on edge-appropriate hardware with limited resources in three ways: 1) it deliberately uses a simple architecture and a small set of operations (convolutions, ReLU activations); 2) it can be configured to perform online inference efficiently by buffering layer outputs; 3) it can achieve more than 90% activation sparsity through regularization during training, enabling very significant efficiency gains on event-based processors. In addition, we propose a general affine augmentation strategy acting directly on the events, which alleviates the problem of dataset scarcity for event-based systems. We apply our model to the AIS 2024 event-based eye tracking challenge, reaching a p10 accuracy of 0.9916 on the Kaggle private test set.
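The idea of online inference via buffered causal convolutions can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes a single scalar feature per time step and a 1-D temporal kernel, and the names `causal_conv_offline` and `StreamingCausalConv` are hypothetical. It only shows that keeping a FIFO buffer of the last K inputs lets a causal convolution be evaluated one time step at a time while matching the offline result.

```python
from collections import deque

import numpy as np


def causal_conv_offline(x, w):
    """Causal 1-D convolution over a full sequence.

    y[t] depends only on x[t-K+1..t]; the past is zero-padded.
    """
    x = np.asarray(x, dtype=float)
    w = np.asarray(w, dtype=float)
    k = len(w)
    padded = np.concatenate([np.zeros(k - 1), x])
    return np.array([padded[t:t + k] @ w for t in range(len(x))])


class StreamingCausalConv:
    """Same convolution, computed one sample at a time (hypothetical helper)."""

    def __init__(self, w):
        self.w = np.asarray(w, dtype=float)
        # FIFO buffer holding the last K inputs, initialised with zeros.
        self.buf = deque([0.0] * len(self.w), maxlen=len(self.w))

    def step(self, x_t):
        # Appending drops the oldest sample automatically (maxlen).
        self.buf.append(float(x_t))
        return float(self.w @ np.array(self.buf))


# Illustrative inputs (arbitrary values, not from the paper).
x = np.array([0.5, -1.0, 2.0, 0.0, 3.0, 1.5])
w = np.array([0.2, -0.5, 1.0])

offline = causal_conv_offline(x, w)
layer = StreamingCausalConv(w)
online = np.array([layer.step(v) for v in x])
```

In a streaming setting each call to `step` costs one kernel-sized dot product, independent of how much history has been seen, which is the property that makes buffered causal layers attractive for low-latency inference.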
