Rong Du

TAGPerson: A Target-Aware Generation Pipeline for Person Re-identification

Dec 28, 2021

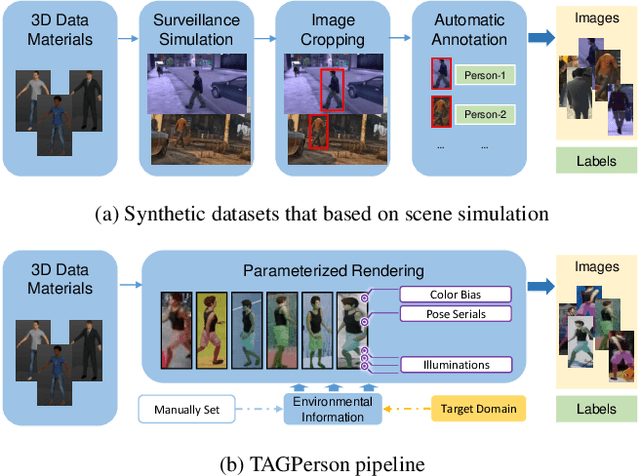

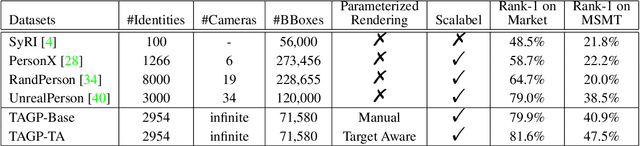

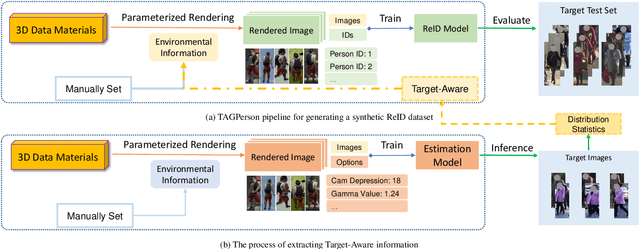

Abstract: Real data for the person re-identification (ReID) task now faces privacy issues, e.g., the banned dataset DukeMTMC-ReID, which makes it much harder to collect real data for ReID. Meanwhile, the labor cost of labeling ReID data remains very high and further hinders ReID research. Many methods therefore generate synthetic images for ReID algorithms as an alternative to real images. However, there is an inevitable domain gap between synthetic and real images. In previous methods, the generation process is based on virtual scenes, and the synthetic training data cannot be adapted automatically to different real target scenes. To handle this problem, we propose a novel Target-Aware Generation pipeline for producing synthetic person images, called TAGPerson. Specifically, it involves a parameterized rendering method whose parameters are controllable and can be adjusted according to target scenes. In TAGPerson, we extract information from target scenes and use it to control the parameterized rendering process, generating target-aware synthetic images that have a smaller gap to the real images in the target domain. In our experiments, our target-aware synthetic images achieve much higher performance than generalized synthetic images on MSMT17, i.e., 47.5% vs. 40.9% rank-1 accuracy. We will release this toolkit for the ReID community to generate synthetic images to any desired specification. Code is available at https://github.com/tagperson/tagperson-blender.

GCN-Based Linkage Prediction for Face Clustering on Imbalanced Datasets: An Empirical Study

Jul 07, 2021

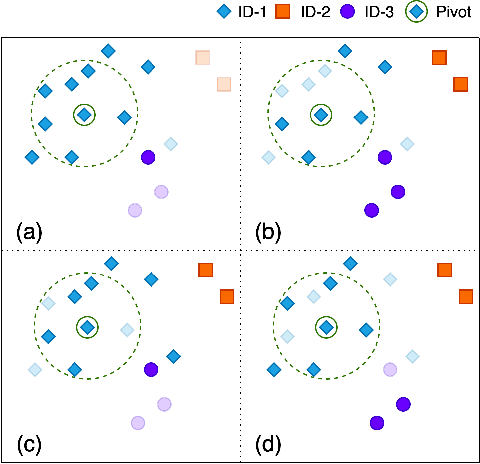

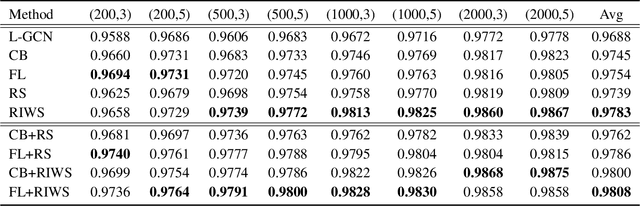

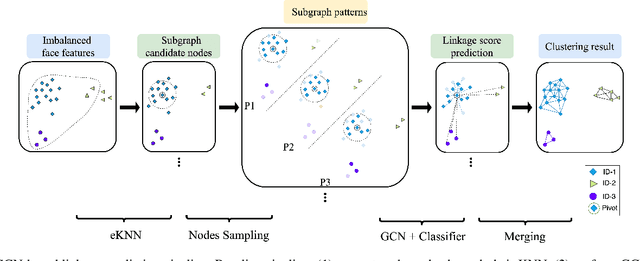

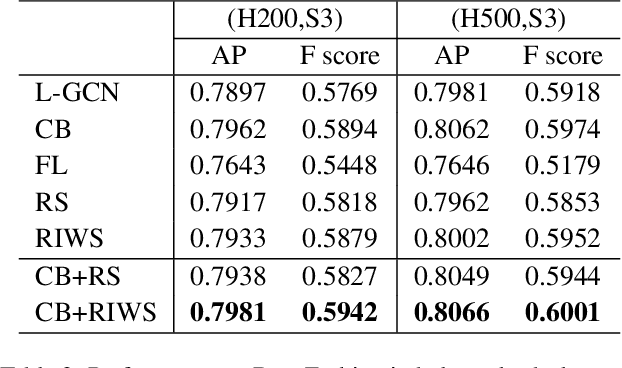

Abstract: In recent years, benefiting from the expressive power of Graph Convolutional Networks (GCNs), significant breakthroughs have been made in face clustering. However, little attention has been paid to GCN-based clustering on imbalanced data. Although the imbalance problem has been extensively studied, the impact of imbalanced data on the GCN-based linkage prediction task is quite different and causes problems in two respects: imbalanced linkage labels and biased graph representations. The problem of imbalanced linkage labels is similar to that in image classification, but the latter is a problem particular to GCN-based clustering via linkage prediction. Significantly biased graph representations in training can cause catastrophic overfitting of a GCN model. To tackle these problems, we evaluate, with extensive experiments on graphs, the feasibility of existing methods for imbalanced image classification, and present a new method that alleviates the imbalanced labels and also augments graph representations using a Reverse-Imbalance Weighted Sampling (RIWS) strategy, followed by insightful analyses and discussions. The code and a series of imbalanced benchmark datasets synthesized from MS-Celeb-1M and DeepFashion are available at https://github.com/espectre/GCNs_on_imbalanced_datasets.

The Internet of Things as a Deep Neural Network

Mar 23, 2020

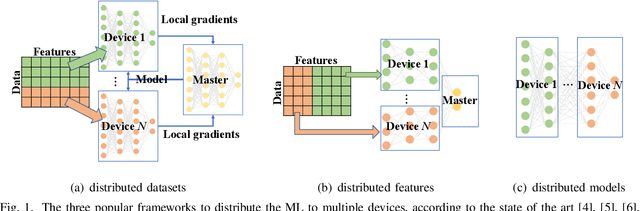

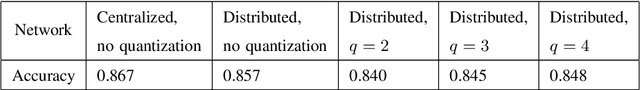

Abstract: An important task in the Internet of Things (IoT) is field monitoring, where multiple IoT nodes take measurements and communicate them to the base station or the cloud for processing, inference, and analysis. This communication becomes costly when the measurements are high-dimensional (e.g., videos or time-series data). IoT networks with limited bandwidth and low-power devices may not be able to support such frequent transmissions at high data rates. To ensure communication efficiency, this article proposes to model the measurement compression at IoT nodes and the inference at the base station or cloud as a deep neural network (DNN). We propose a new framework in which the data transmitted from the nodes are the intermediate outputs of a layer of the DNN. We show how to learn the model parameters of the DNN and study the trade-off between the communication rate and the inference accuracy. The experimental results show that we can save approximately 96% of transmissions with a degradation of only 2.5% in inference accuracy. Our findings have the potential to enable many new IoT data analysis applications that generate large amounts of measurements.
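The split described in this abstract, where a node transmits only the intermediate output of a DNN layer, can be illustrated with a minimal sketch. Everything below is an assumption made for illustration and is not taken from the paper: the layer sizes, the random weights standing in for learned parameters, the ReLU activation, and the helper names `make_layer` and `forward`.

```python
import random


def make_layer(n_in, n_out, rng):
    """Random weights standing in for learned parameters (illustrative only)."""
    return [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]


def forward(weights, x):
    """One dense layer with ReLU: y = max(0, W x)."""
    return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in weights]


rng = random.Random(0)

# Hypothetical sizes: a 64-value measurement compressed to an 8-value code.
INPUT_DIM, CODE_DIM, NUM_CLASSES = 64, 8, 3

node_layer = make_layer(INPUT_DIM, CODE_DIM, rng)        # runs on the IoT node
station_layer = make_layer(CODE_DIM, NUM_CLASSES, rng)   # runs at the base station

measurement = [rng.random() for _ in range(INPUT_DIM)]

# The node sends only this intermediate output, not the raw measurement.
code = forward(node_layer, measurement)

# The base station finishes the forward pass and picks a class.
scores = forward(station_layer, code)
prediction = scores.index(max(scores))

# Fraction of values that no longer need to be transmitted.
saving = 1 - CODE_DIM / INPUT_DIM
print(f"values sent: {len(code)} of {INPUT_DIM}, saving {saving:.1%}")
```

With these assumed sizes, the node sends 8 values instead of 64. In a trained system the width of the transmitted layer would be the knob that trades communication rate against inference accuracy.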
