Richard Tran

The Open Catalyst 2022 Dataset and Challenges for Oxide Electrocatalysis

Jun 17, 2022

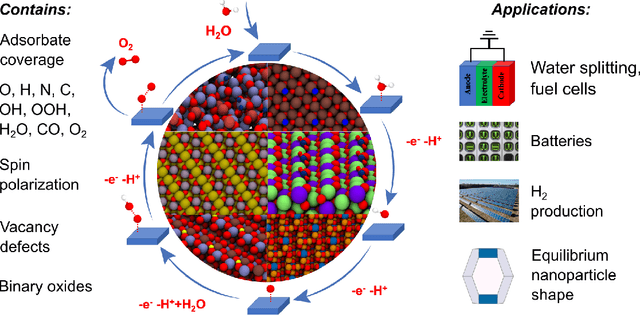

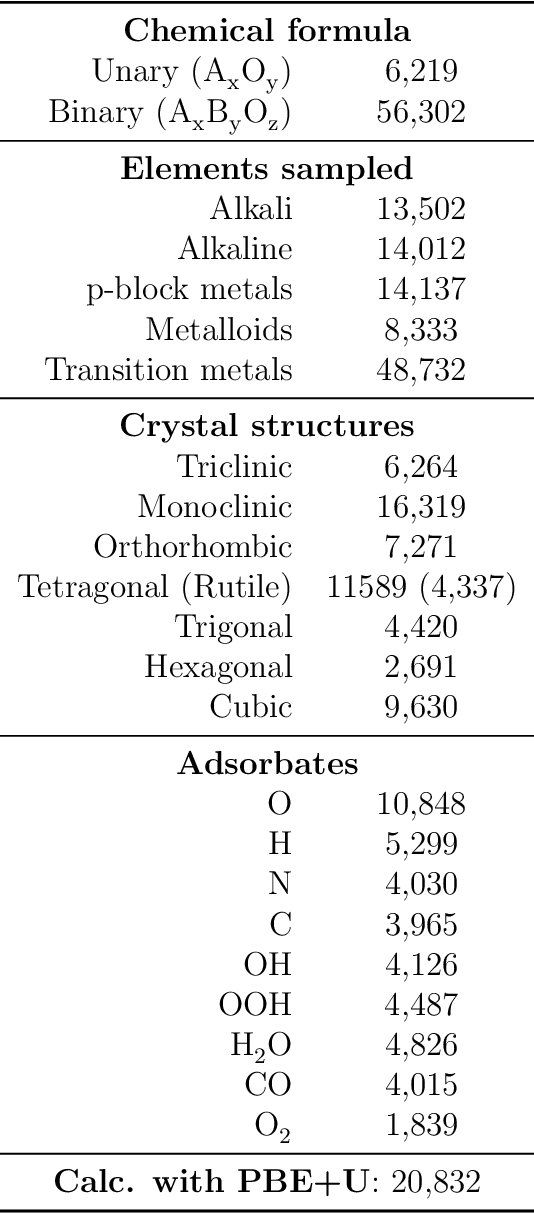

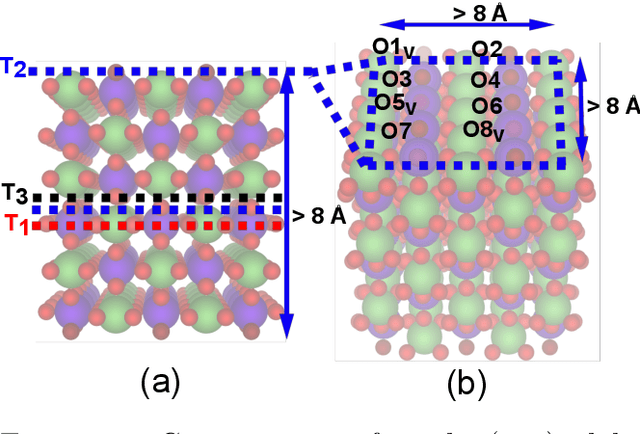

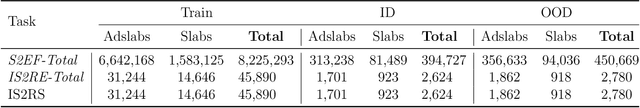

Abstract: Computational catalysis and machine learning communities have made considerable progress in developing machine learning models for catalyst discovery and design. Yet a general machine learning potential that spans the chemical space of catalysis is still out of reach. A significant hurdle is obtaining training data across a wide range of materials. One important class of materials for which data are lacking is oxides, which limits the ability of models to study the Oxygen Evolution Reaction and oxide electrocatalysis more generally. To address this, we developed the Open Catalyst 2022 (OC22) dataset, consisting of 62,521 Density Functional Theory (DFT) relaxations (~9,884,504 single-point calculations) across a range of oxide materials, coverages, and adsorbates (*H, *O, *N, *C, *OOH, *OH, *OH2, *O2, *CO). We define generalized tasks for predicting the total system energy that are applicable across catalysis, establish baseline performance for several graph neural networks (SchNet, DimeNet++, ForceNet, SpinConv, PaiNN, GemNet-dT, GemNet-OC), and provide predefined dataset splits to establish clear benchmarks for future efforts. For all tasks, we study whether combining datasets leads to better results, even if the datasets contain different materials or adsorbates. Specifically, we jointly train models on the Open Catalyst 2020 (OC20) dataset and OC22, or fine-tune pretrained OC20 models on OC22. In the most general task, GemNet-OC sees a ~32% improvement in energy predictions through fine-tuning and a ~9% improvement in force predictions through joint training. Surprisingly, joint training on both OC20 and the much smaller OC22 dataset also improves total energy predictions on OC20 by ~19%. The dataset and baseline models are open-sourced, and a public leaderboard will follow to encourage continued community development on the total energy tasks and data.
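The abstract reports relative improvements in energy and force predictions but does not name the error metric. The sketch below assumes mean absolute error (MAE) over total-system energies and over per-atom force components, a metric commonly reported in Open Catalyst benchmarks, and shows how a percentage improvement such as ~32% follows from a baseline error and an improved error. The function names `energy_mae`, `force_mae`, and `relative_improvement` are hypothetical and do not come from the OC22 codebase.

```python
import numpy as np


def energy_mae(pred_energies, true_energies):
    """MAE of total-system energies, one value per structure (assumed metric)."""
    pred = np.asarray(pred_energies, dtype=float)
    true = np.asarray(true_energies, dtype=float)
    return float(np.mean(np.abs(pred - true)))


def force_mae(pred_forces, true_forces):
    """MAE over every atom and Cartesian component (assumed metric).

    Forces are arrays of shape (n_atoms_total, 3), with the atoms of all
    structures stacked along the first axis.
    """
    pred = np.asarray(pred_forces, dtype=float)
    true = np.asarray(true_forces, dtype=float)
    return float(np.mean(np.abs(pred - true)))


def relative_improvement(baseline_error, new_error):
    """Fractional error reduction versus the baseline (0.32 means 32%)."""
    return (baseline_error - new_error) / baseline_error
```

For example, a hypothetical baseline energy MAE of 0.5 eV reduced to 0.34 eV gives `relative_improvement(0.5, 0.34)` of approximately 0.32, i.e. a ~32% improvement. Reporting a relative rather than absolute change makes results comparable across tasks whose error scales differ.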

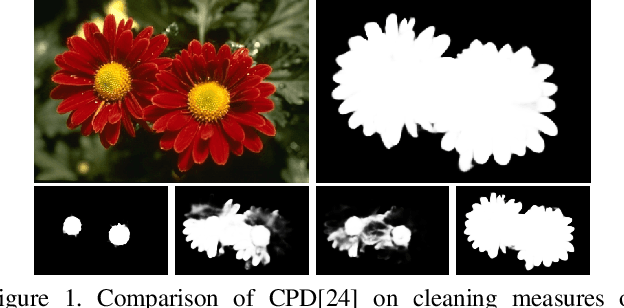

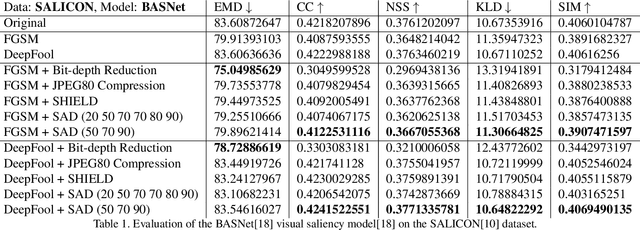

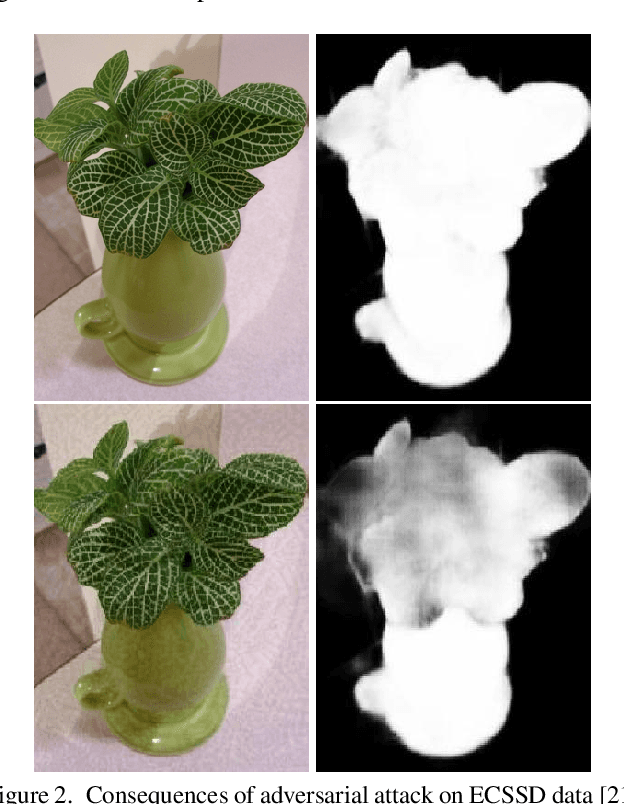

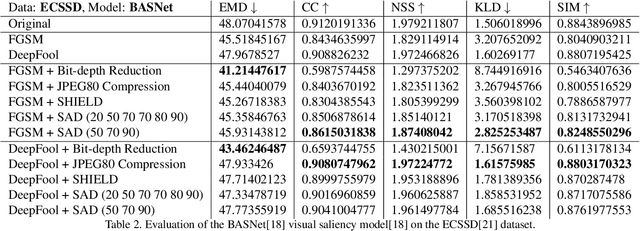

SAD: Saliency-based Defenses Against Adversarial Examples

Mar 10, 2020

Abstract: With the rise in popularity of machine and deep learning models, there is an increased focus on their vulnerability to malicious inputs. These adversarial examples push model predictions away from the original intent of the network and are a growing concern in practical security. To combat these attacks, neural networks can leverage traditional image processing approaches or state-of-the-art defensive models to reduce perturbations in the data. Defenses that apply noise reduction globally are effective against adversarial attacks; however, their lossy processing often distorts important data within the image. In this work, we propose a visual saliency-based approach to cleaning data affected by an adversarial attack. Our model leverages the salient regions of an adversarial image to provide a targeted countermeasure while incurring less loss in the cleaned images than global approaches. We measure the accuracy of our model by evaluating the effectiveness of state-of-the-art saliency methods before attack, under attack, and after application of cleaning methods. We demonstrate the effectiveness of our proposed approach against established adversarial attack methods, in comparison with related defenses, across two saliency datasets. Our targeted approach shows significant improvements across a range of standard statistical and distance saliency metrics compared with both traditional and state-of-the-art approaches.
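The abstract contrasts global denoising with cleaning targeted at salient regions. As a minimal sketch of that idea, and not the authors' model, the code below assumes a grayscale image and a precomputed saliency map in [0, 1] from any saliency method, thresholds the map into a mask, and applies a simple box (mean) filter only inside the mask. The box filter, the `threshold` value, and the names `box_blur` and `saliency_masked_clean` are illustrative assumptions standing in for whatever cleaning operation SAD actually uses.

```python
import numpy as np


def box_blur(image, radius=1):
    """Mean filter over a (2*radius+1) x (2*radius+1) window, edge-padded.

    A stand-in for a lossy global denoiser (assumption, not SAD's filter).
    """
    img = np.asarray(image, dtype=float)
    k = 2 * radius + 1
    padded = np.pad(img, radius, mode="edge")
    out = np.zeros_like(img)
    # Sum every shifted copy of the image that falls inside the window.
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)


def saliency_masked_clean(image, saliency, threshold=0.5, radius=1):
    """Denoise only pixels whose saliency is at or above `threshold`.

    Pixels outside the salient mask are returned unchanged, so the lossy
    filter does not distort the rest of the image.
    """
    img = np.asarray(image, dtype=float)
    mask = np.asarray(saliency, dtype=float) >= threshold
    blurred = box_blur(img, radius)
    return np.where(mask, blurred, img)
```

Restricting the filter with `np.where` keeps the trade-off the abstract describes explicit: perturbations inside the salient region are smoothed, while non-salient pixels keep their original values instead of being blurred globally.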
