Qiuchi Zhao

LXLv2: Enhanced LiDAR Excluded Lean 3D Object Detection with Fusion of 4D Radar and Camera

Feb 20, 2025

Abstract: As the previous state-of-the-art 4D radar-camera fusion-based 3D object detection method, LXL uses predicted image depth distribution maps and radar 3D occupancy grids to assist sampling-based image view transformation. However, its depth prediction lacks accuracy and consistency, and its concatenation-based fusion impedes model robustness. In this work, we propose LXLv2, which introduces modifications that overcome these limitations and improve performance. Specifically, to account for position errors in radar measurements, we devise a one-to-many depth supervision strategy based on radar points, in which the radar cross section (RCS) value is further exploited to adjust the supervision area for object-level depth consistency. Additionally, a channel and spatial attention-based fusion module named CSAFusion is introduced to improve feature adaptiveness. Experimental results on the View-of-Delft and TJ4DRadSet datasets show that LXLv2 outperforms LXL in detection accuracy, inference speed, and robustness, demonstrating the effectiveness of the model.
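
The one-to-many depth supervision idea can be illustrated with a minimal sketch. The abstract does not say how RCS is mapped to the supervision area, so everything below is an assumption: radar points are taken as already projected to pixel coordinates, each point's depth is spread over a square window whose radius grows linearly with non-negative RCS (clamped to a range), and where windows overlap the nearer depth is kept. The names `rcs_to_radius` and `one_to_many_depth_targets` are hypothetical and are not LXLv2's code.

```python
from typing import Dict, List, Tuple

# Hypothetical input: a radar point already projected onto the image plane,
# given as (u, v, depth_m, rcs_dbsm).
RadarPoint = Tuple[int, int, float, float]


def rcs_to_radius(rcs: float, r_min: int = 1, r_max: int = 4, scale: float = 0.2) -> int:
    """Assumed mapping: larger RCS gives a larger window radius, clamped to [r_min, r_max]."""
    r = r_min + int(round(scale * max(rcs, 0.0)))
    return max(r_min, min(r, r_max))


def one_to_many_depth_targets(
    points: List[RadarPoint], width: int, height: int
) -> Dict[Tuple[int, int], float]:
    """Spread each radar depth over a square pixel window around its projection.

    Returns a sparse map {(x, y): depth}. Overlaps keep the nearest depth,
    which is an assumption rather than a detail taken from LXLv2.
    """
    targets: Dict[Tuple[int, int], float] = {}
    for u, v, depth, rcs in points:
        r = rcs_to_radius(rcs)
        # Clip the window to the image bounds.
        for y in range(max(0, v - r), min(height, v + r + 1)):
            for x in range(max(0, u - r), min(width, u + r + 1)):
                if (x, y) not in targets or depth < targets[(x, y)]:
                    targets[(x, y)] = depth
    return targets


# A strong reflector (RCS 10) and a weak one (RCS -5); values are illustrative only.
pts = [(5, 5, 12.0, 10.0), (15, 15, 30.0, -5.0)]
targets = one_to_many_depth_targets(pts, width=20, height=20)
print(len(targets))  # 58
```

With these assumed settings the strong reflector supervises a 7x7 patch (49 pixels) and the weak one only a 3x3 patch (9 pixels), so a single radar point provides depth labels for many pixels instead of one.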

RadarNeXt: Real-Time and Reliable 3D Object Detector Based On 4D mmWave Imaging Radar

Jan 04, 2025

Abstract: 3D object detection is crucial for Autonomous Driving (AD) and Advanced Driver Assistance Systems (ADAS). However, most 3D detectors prioritize detection accuracy and often overlook network inference speed in practical applications. In this paper, we propose RadarNeXt, a real-time and reliable 3D object detector based on 4D mmWave radar point clouds. It leverages re-parameterizable neural networks to capture multi-scale features, reduce memory cost, and accelerate inference. Moreover, to highlight the irregular foreground features of radar point clouds and suppress background clutter, we propose a Multi-path Deformable Foreground Enhancement Network (MDFEN), which preserves detection accuracy while minimizing the loss of speed and the growth in parameter count. Experimental results on the View-of-Delft and TJ4DRadSet datasets validate the performance and efficiency of RadarNeXt: the variant using MDFEN achieves mAPs of 50.48 and 32.30, respectively. Notably, RadarNeXt variants achieve inference speeds of over 67.10 FPS on an RTX A4000 GPU and 28.40 FPS on a Jetson AGX Orin. This research demonstrates that RadarNeXt offers a novel and effective paradigm for 3D perception based on 4D mmWave radar.
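
The abstract credits re-parameterizable networks for lower memory cost and faster inference but does not describe RadarNeXt's actual blocks. The sketch below shows only the generic structural re-parameterization idea (as popularized by RepVGG), reduced to a single-channel 1-D convolution without batch normalization: a training-time block that sums a 3-tap branch, a 1-tap branch, and an identity path is folded into one 3-tap kernel that produces the same output. The function names are hypothetical.

```python
from typing import List


def conv1d_same(x: List[float], k: List[float]) -> List[float]:
    """Single-channel 1-D cross-correlation with zero padding; an odd kernel length keeps the output length."""
    pad = len(k) // 2
    xp = [0.0] * pad + list(x) + [0.0] * pad
    return [sum(k[j] * xp[i + j] for j in range(len(k))) for i in range(len(x))]


def multi_branch(x: List[float], k3: List[float], k1: List[float]) -> List[float]:
    """Training-time form: 3-tap branch + 1-tap branch + identity, summed element-wise."""
    a = conv1d_same(x, k3)
    b = conv1d_same(x, k1)
    return [ai + bi + xi for ai, bi, xi in zip(a, b, x)]


def reparameterize(k3: List[float], k1: List[float]) -> List[float]:
    """Inference-time form: fold the 1-tap kernel and the identity (a centre tap of 1) into the 3-tap kernel."""
    return [k3[0], k3[1] + k1[0] + 1.0, k3[2]]


x = [1.0, -2.0, 3.0, 0.5]
k3, k1 = [0.2, -0.1, 0.4], [0.7]
fused = reparameterize(k3, k1)
train_out = multi_branch(x, k3, k1)
infer_out = conv1d_same(x, fused)
print(max(abs(a - b) for a, b in zip(train_out, infer_out)) < 1e-9)  # True
```

The fused form runs one convolution instead of three branches, which is where the inference-time savings come from. In full networks, each branch's batch normalization is first folded into its kernel and bias before the kernels are summed.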

SMURF: Spatial Multi-Representation Fusion for 3D Object Detection with 4D Imaging Radar

Aug 02, 2023

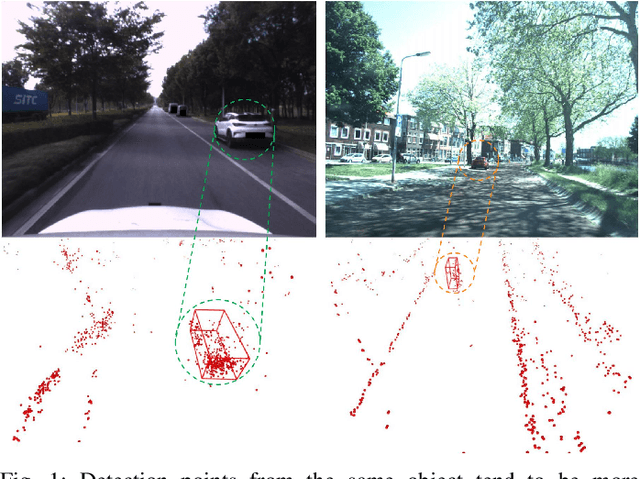

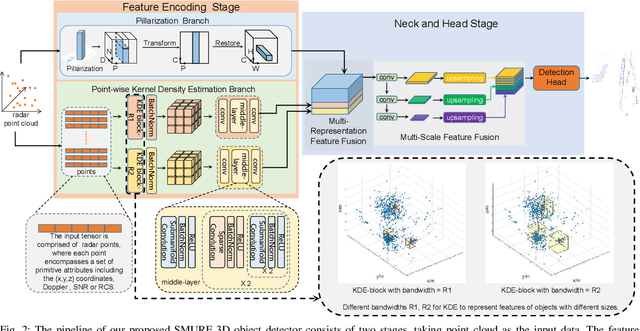

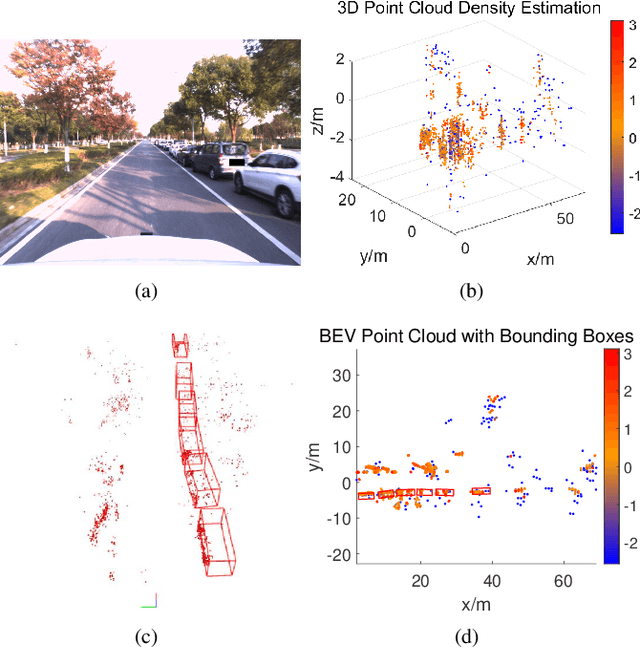

Abstract: The 4D millimeter-wave (mmWave) radar is a promising technology for vehicle sensing due to its cost-effectiveness and operability in adverse weather conditions. However, its adoption has been hindered by the sparsity and noise of radar point cloud data. This paper introduces spatial multi-representation fusion (SMURF), a novel approach to 3D object detection using a single 4D imaging radar. SMURF leverages multiple representations of radar detection points, including pillarization and the density features of a multi-dimensional Gaussian mixture distribution obtained through kernel density estimation (KDE). KDE effectively mitigates measurement inaccuracy caused by the limited angular resolution and multi-path propagation of radar signals, and it also helps alleviate point cloud sparsity by capturing density features. Experimental evaluations on the View-of-Delft (VoD) and TJ4DRadSet datasets demonstrate the effectiveness and generalization ability of SMURF, which outperforms recently proposed 4D imaging radar-based single-representation models. Moreover, while using only 4D imaging radar, SMURF achieves performance comparable to the state-of-the-art 4D imaging radar and camera fusion-based method, with an increase of 1.22% in bird's-eye-view mean average precision on the TJ4DRadSet dataset and 1.32% in 3D mean average precision over the entire annotated area of the VoD dataset. The method also addresses the challenge of real-time detection, with an inference time of no more than 0.05 seconds for most scans on both datasets. This research highlights the benefits of 4D mmWave radar and provides a strong benchmark for subsequent work on 3D object detection with 4D imaging radar.
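
The KDE density feature can be illustrated with a minimal sketch that is not SMURF's implementation. It evaluates a Gaussian kernel density estimate with a diagonal bandwidth at every radar point, so points in dense clusters receive higher values than isolated (often noisy) returns. The abstract does not state which point attributes serve as KDE dimensions or how the bandwidth is chosen, so the 3-D coordinates, the unit bandwidth, and the name `kde_density` are assumptions.

```python
import math
from typing import List, Sequence


def kde_density(points: Sequence[Sequence[float]], bandwidth: Sequence[float]) -> List[float]:
    """Gaussian KDE with a diagonal bandwidth h, evaluated at each input point.

    density(p) = 1/N * sum_q prod_d N(p_d - q_d; 0, h_d^2)
    """
    n = len(points)
    # Normalisation of a product of 1-D Gaussians: prod_d sqrt(2*pi) * h_d.
    norm = 1.0
    for h in bandwidth:
        norm *= math.sqrt(2.0 * math.pi) * h
    densities = []
    for p in points:
        total = 0.0
        for q in points:
            sq = sum(((a - b) / h) ** 2 for a, b, h in zip(p, q, bandwidth))
            total += math.exp(-0.5 * sq)
        densities.append(total / (n * norm))
    return densities


# Three nearby returns and one isolated return (x, y, z in metres; hypothetical values).
pts = [(0.0, 0.0, 0.0), (0.2, 0.0, 0.0), (0.0, 0.2, 0.0), (10.0, 10.0, 0.0)]
dens = kde_density(pts, bandwidth=(1.0, 1.0, 1.0))
print(dens[3] < dens[0])  # True
```

One simple way to use these values is as an extra per-point channel alongside pillar features; the O(N^2) double loop is kept for clarity and would be vectorized or restricted to local neighbourhoods in practice.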