Pilar Bachiller

Guessing human intentions to avoid dangerous situations in caregiving robots

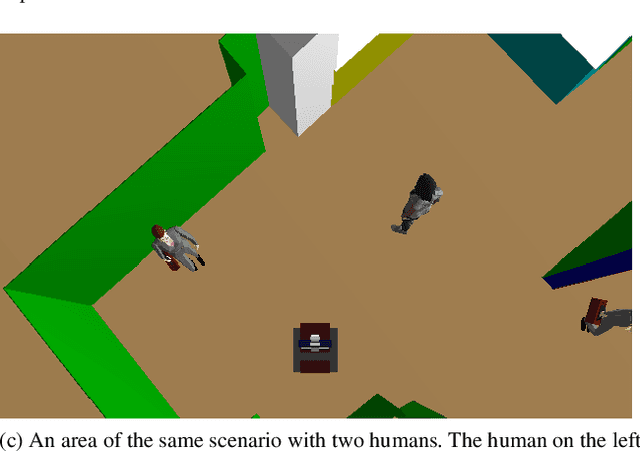

Mar 26, 2024

Abstract: For robots to interact socially, they must interpret human intentions and anticipate their potential outcomes accurately. This is particularly important for social robots designed for human care, which may face potentially dangerous situations for people, such as unseen obstacles in their way, that should be avoided. This paper explores the Artificial Theory of Mind (ATM) approach to inferring and interpreting human intentions. We propose an algorithm that detects risky situations for humans, selecting a robot action that removes the danger in real time. We use the simulation-based approach to ATM and adopt the 'like-me' policy to assign intentions and actions to people. Using this strategy, the robot can detect and act with a high rate of success under time-constrained situations. The algorithm has been implemented as part of an existing robotics cognitive architecture and tested in simulation scenarios. Three experiments have been conducted to test the implementation's robustness, precision and real-time response, including a simulated scenario, a human-in-the-loop hybrid configuration and a real-world scenario.

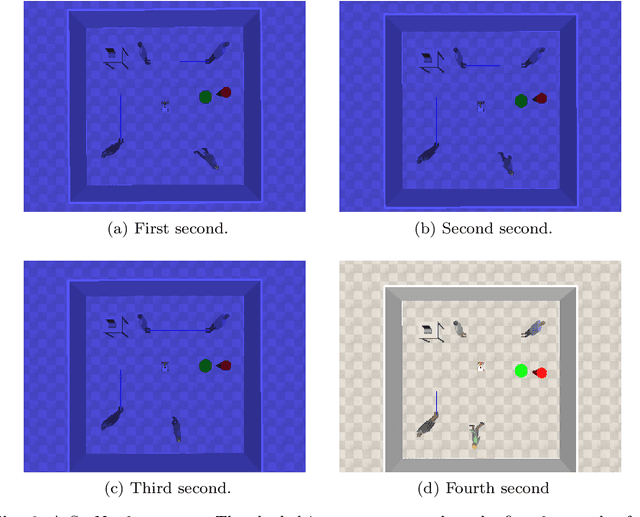

SocNavGym: A Reinforcement Learning Gym for Social Navigation

Apr 27, 2023

Abstract: It is essential for autonomous robots to be socially compliant while navigating in human-populated environments. Machine Learning and, especially, Deep Reinforcement Learning (DRL) have recently gained considerable traction in the field of Social Navigation. This can be partially attributed to the resulting policies not being bound by human limitations in terms of code complexity or the number of variables that are handled. Unfortunately, the lack of safety guarantees and the large data requirements of DRL algorithms make learning in the real world unfeasible. To bridge this gap, simulation environments are frequently used. We propose SocNavGym, an advanced simulation environment for social navigation that can generate a wide variety of social navigation scenarios and facilitates the development of intelligent social agents. SocNavGym is lightweight, fast, easy to use, and can be effortlessly configured to generate different types of social navigation scenarios. It can also be configured to work with different hand-crafted and data-driven social reward signals and to yield a variety of evaluation metrics to benchmark agents' performance. Further, we also provide a case study where a Dueling-DQN agent is trained to learn social-navigation policies using SocNavGym. The results provide evidence that SocNavGym can be used to train an agent from scratch to navigate in simple as well as complex social scenarios. Our experiments also show that the agents trained using the data-driven reward function display more advanced social compliance in comparison to the heuristic-based reward function.
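The abstract above mentions a Dueling-DQN agent. As a minimal illustration of what "dueling" refers to, the sketch below combines a state value and per-action advantages using the commonly used mean-subtracted aggregation. It is not taken from SocNavGym or from the paper's code; the function names `dueling_q_values` and `greedy_action` are hypothetical, and the number of actions is an arbitrary assumption.

```python
def dueling_q_values(state_value, advantages):
    """Combine V(s) with per-action advantages A(s, a).

    Mean-subtracted aggregation (an assumption about the variant used):
        Q(s, a) = V(s) + A(s, a) - mean_a A(s, a)
    """
    if not advantages:
        raise ValueError("advantages must contain at least one action")
    mean_advantage = sum(advantages) / len(advantages)
    return [state_value + a - mean_advantage for a in advantages]


def greedy_action(q_values):
    """Return the index of the action with the highest Q-value."""
    return max(range(len(q_values)), key=lambda i: q_values[i])


if __name__ == "__main__":
    # Hypothetical outputs of the value and advantage streams for one state.
    q = dueling_q_values(2.0, [1.0, 0.0, -1.0])
    print(q, greedy_action(q))
```

Subtracting the mean advantage keeps the value and advantage streams identifiable: adding a constant to every advantage leaves the resulting Q-values unchanged.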

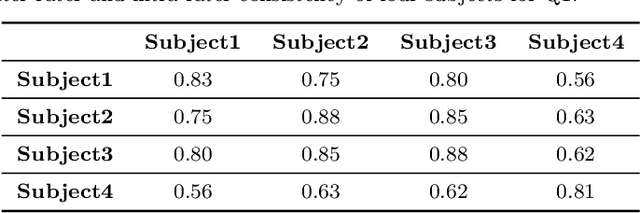

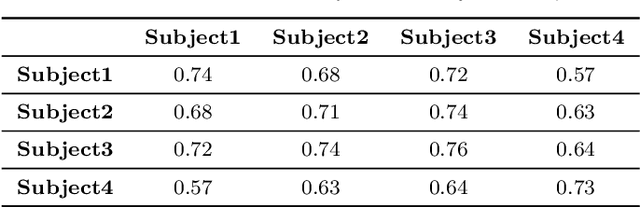

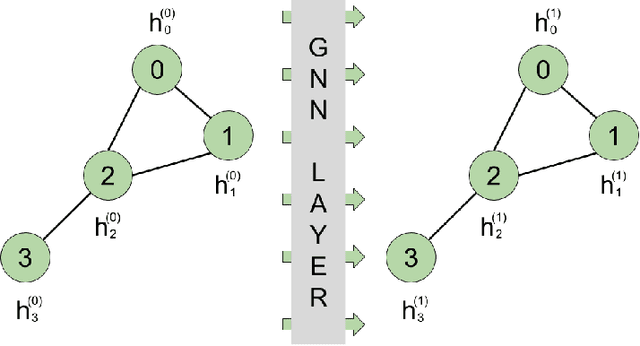

Multi-person 3D pose estimation from unlabelled data

Dec 16, 2022

Abstract: Its numerous applications make multi-human 3D pose estimation a remarkably impactful area of research. Nevertheless, assuming a multiple-view system composed of several regular RGB cameras, 3D multi-pose estimation presents several challenges. First of all, each person must be uniquely identified in the different views to separate the 2D information provided by the cameras. Secondly, the 3D pose estimation process from the multi-view 2D information of each person must be robust against noise and potential occlusions in the scenario. In this work, we address these two challenges with the help of deep learning. Specifically, we present a model based on Graph Neural Networks capable of predicting the cross-view correspondence of the people in the scenario along with a Multilayer Perceptron that takes the 2D points to yield the 3D poses of each person. These two models are trained in a self-supervised manner, thus avoiding the need for large datasets with 3D annotations.

A Graph Neural Network to Model User Comfort in Robot Navigation

Feb 17, 2021

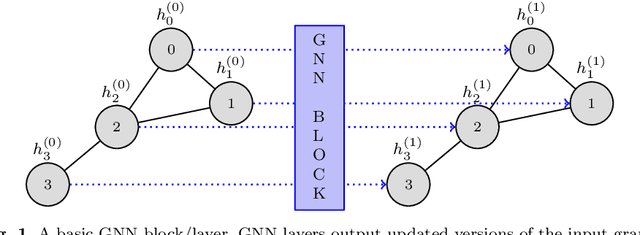

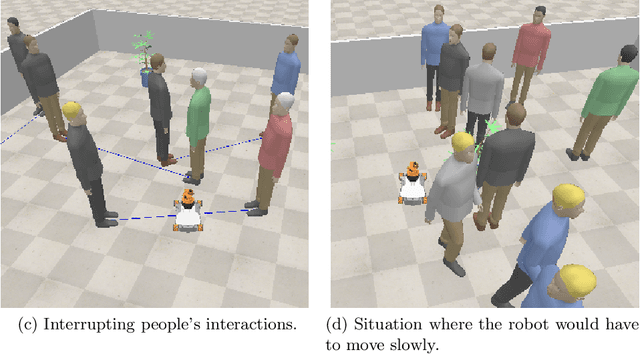

Abstract: Autonomous navigation is a key skill for assistive and service robots. To be successful, robots have to minimise the disruption caused to humans while moving. This implies predicting how people will move and complying with social conventions. Avoiding disrupting personal spaces, people's paths and interactions are examples of these social conventions. This paper leverages Graph Neural Networks to model robot disruption considering the movement of the humans and the robot so that the model built can be used by path planning algorithms. Along with the model, this paper presents an evolution of the dataset SocNav1 which considers the movement of the robot and the humans, and an updated scenario-to-graph transformation which is tested using different Graph Neural Network blocks. The model trained achieves close-to-human performance in the dataset. In addition to its accuracy, the main advantage of the approach is its scalability in terms of the number of social factors that can be considered in comparison with handcrafted models.

Generation of Human-aware Navigation Maps using Graph Neural Networks

Nov 10, 2020

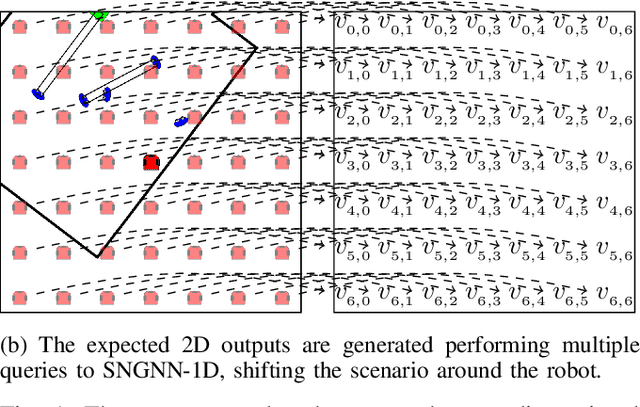

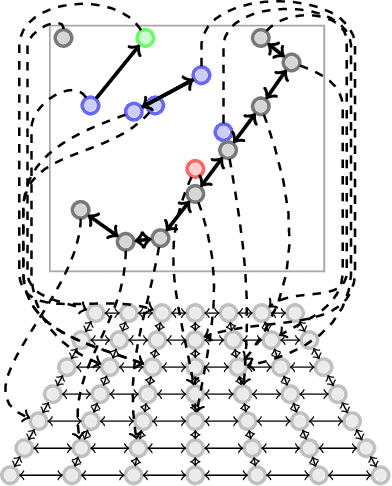

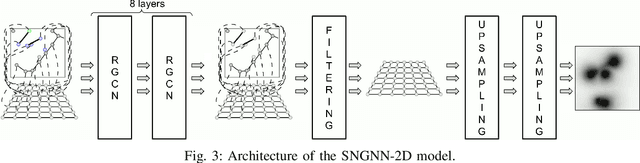

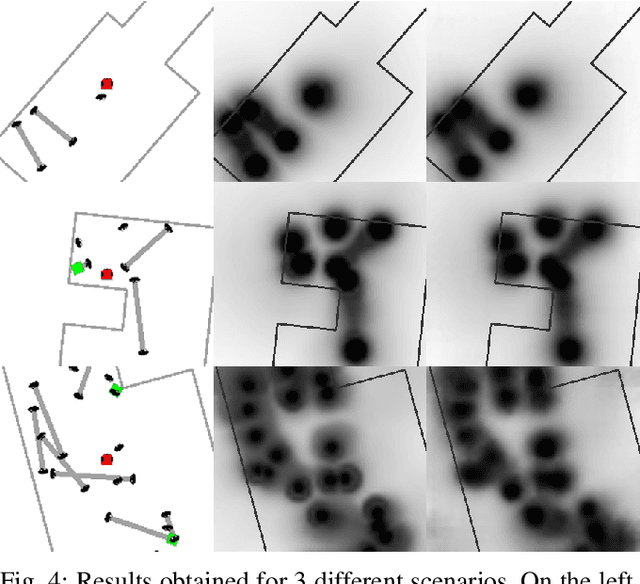

Abstract: Minimising the discomfort caused by robots when navigating in social situations is crucial for them to be accepted. The paper presents a machine learning-based framework that bootstraps existing one-dimensional datasets to generate a cost map dataset and a model combining Graph Neural Network and Convolutional Neural Network layers to produce cost maps for human-aware navigation in real time. The proposed framework is evaluated against the original one-dimensional dataset and in simulated navigation tasks. The results outperform similar state-of-the-art methods considering the accuracy on the dataset and the navigation metrics used. The applications of the proposed framework are not limited to human-aware navigation; it could be applied to other fields where map generation is needed.

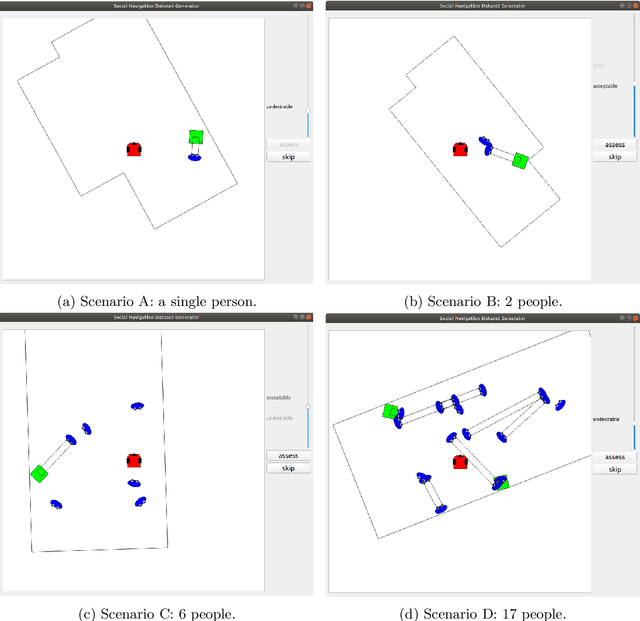

A Toolkit to Generate Social Navigation Datasets

Sep 11, 2020

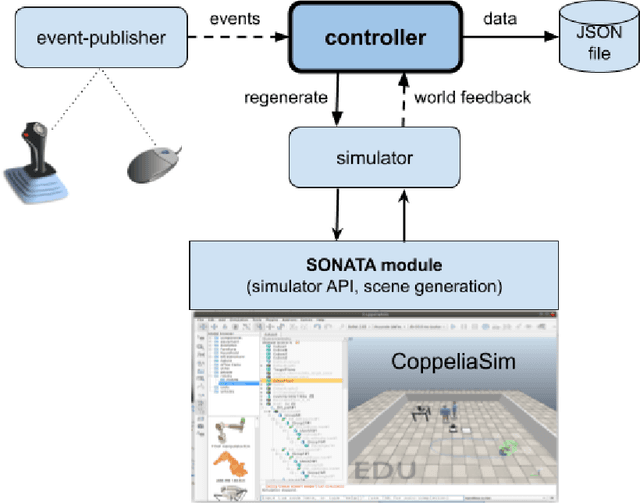

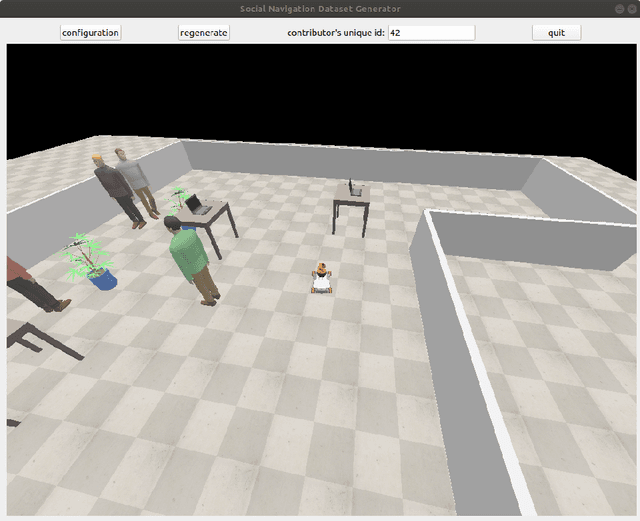

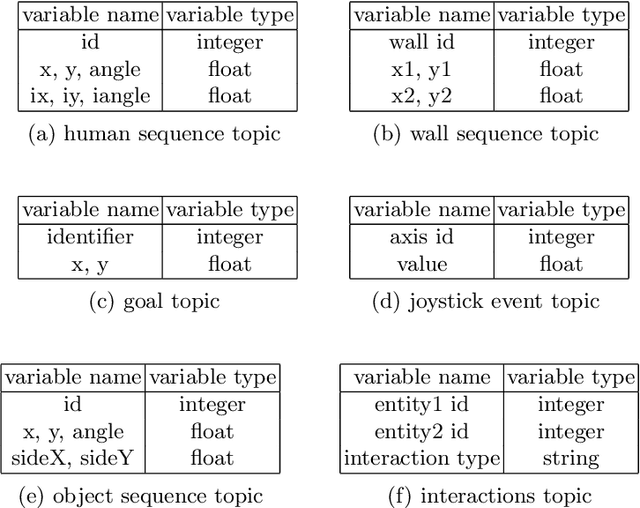

Abstract: Social navigation datasets are necessary to assess social navigation algorithms and train machine learning algorithms. Most of the currently available datasets target pedestrians' movements as a pattern to be replicated by robots. It can be argued that one of the main reasons for this to happen is that compiling datasets where real robots are manually controlled, as they would be expected to behave when moving, is a very resource-intensive task. Another aspect that is often missing in datasets is symbolic information that could be relevant, such as human activities, relationships or interactions. Unfortunately, the available datasets targeting robots and supporting symbolic information are restricted to static scenes. This paper argues that simulation can be used to gather social navigation data in an effective and cost-efficient way and presents a toolkit for this purpose. A use case studying the application of graph neural networks to create learned control policies using supervised learning is presented as an example of how it can be used.

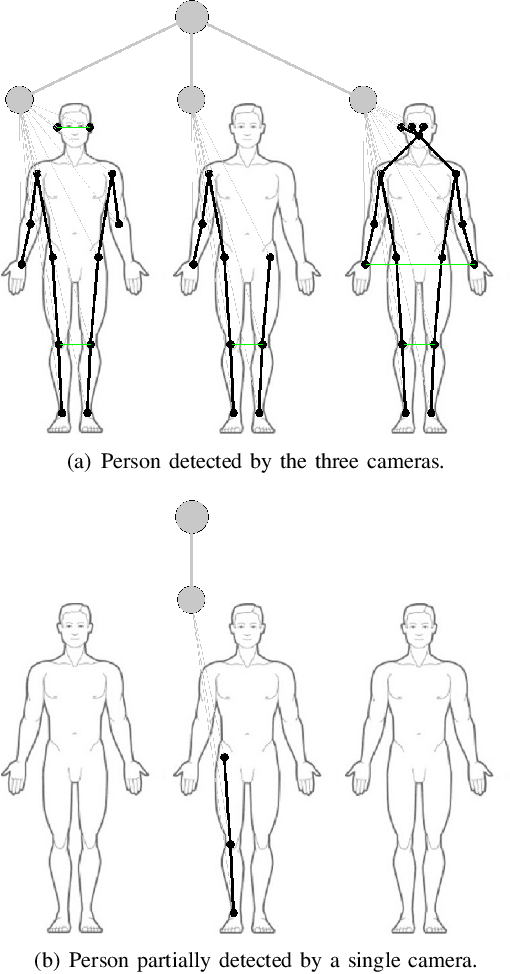

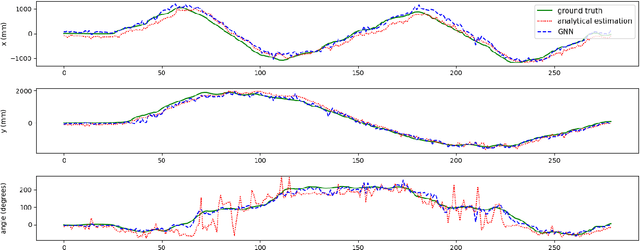

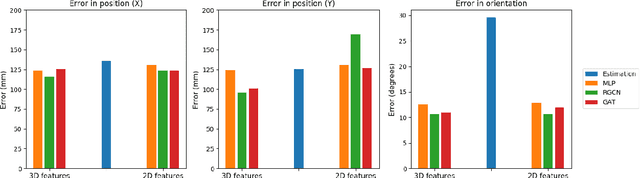

Multi-camera Torso Pose Estimation using Graph Neural Networks

Jul 28, 2020

Abstract: Estimating the location and orientation of humans is an essential skill for service and assistive robots. To achieve a reliable estimation in a wide area such as an apartment, multiple RGBD cameras are frequently used. Firstly, these setups are relatively expensive. Secondly, they seldom perform an effective data fusion using the multiple camera sources at an early stage of the processing pipeline. Occlusions and partial views make this second point very relevant in these scenarios. The proposal presented in this paper makes use of graph neural networks to merge the information acquired from multiple camera sources, achieving a mean absolute error below 125 mm for the location and 10 degrees for the orientation using low-resolution RGB images. The experiments, conducted in an apartment with three cameras, benchmarked two different graph neural network implementations and a third architecture based on fully connected layers. The software used has been released as open-source in a public repository (https://github.com/vangiel/WheresTheFellow).
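The abstract above reports mean absolute errors for location (in mm) and orientation (in degrees). The sketch below shows one way such metrics could be computed. It is not the evaluation code from the linked repository. The pose layout `(x_mm, y_mm, orientation_deg)`, the per-coordinate averaging of the location error, and the function names are all assumptions made for illustration. The orientation error wraps around so that 350 and 10 degrees are 20 degrees apart.

```python
def angular_difference_deg(a, b):
    """Smallest absolute difference between two angles in degrees, in [0, 180]."""
    diff = (a - b) % 360.0
    return min(diff, 360.0 - diff)


def mean_absolute_errors(predicted, ground_truth):
    """Return (location MAE in mm, orientation MAE in degrees).

    Each pose is a hypothetical tuple (x_mm, y_mm, orientation_deg).
    The location error is averaged over the x and y coordinates,
    which is an assumption rather than the paper's stated definition.
    """
    if not predicted or len(predicted) != len(ground_truth):
        raise ValueError("inputs must be non-empty and of equal length")
    location_errors = []
    orientation_errors = []
    for (px, py, pa), (gx, gy, ga) in zip(predicted, ground_truth):
        location_errors.append(abs(px - gx))
        location_errors.append(abs(py - gy))
        orientation_errors.append(angular_difference_deg(pa, ga))
    location_mae = sum(location_errors) / len(location_errors)
    orientation_mae = sum(orientation_errors) / len(orientation_errors)
    return location_mae, orientation_mae


if __name__ == "__main__":
    # Made-up poses, used only to exercise the functions.
    predicted = [(100.0, 200.0, 350.0)]
    ground_truth = [(150.0, 180.0, 10.0)]
    print(mean_absolute_errors(predicted, ground_truth))
```

A plain absolute difference on the raw angles would report 340 degrees for the example pose, which is why the wrap-around step matters for orientation metrics.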

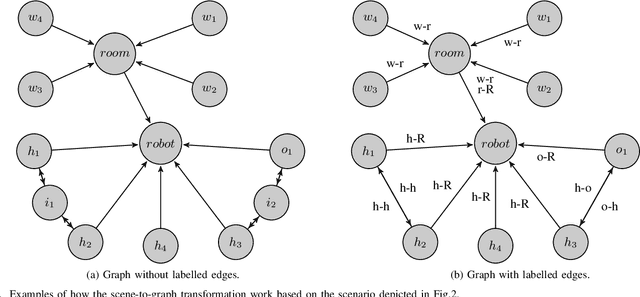

Graph Neural Networks for Human-aware Social Navigation

Sep 19, 2019

Abstract: Autonomous navigation is a key skill for assistive and service robots. To be successful, robots have to navigate without passing through the personal spaces of the people surrounding them. Complying with social rules such as not getting in the middle of human-to-human and human-to-object interactions is also important. This paper suggests using Graph Neural Networks to model how inconvenient the presence of a robot would be in a particular scenario according to learned human conventions so that it can be used by path planning algorithms. To do so, we propose two ways of modelling social interactions using graphs and benchmark them with different Graph Neural Networks using the SocNav1 dataset. We achieve close-to-human performance in the dataset and argue that, in addition to promising results, the main advantage of the approach is its scalability in terms of the number of social factors that can be considered and easily embedded in code, in comparison with model-based approaches. The code used to train and test the resulting graph neural network is available in a public repository.

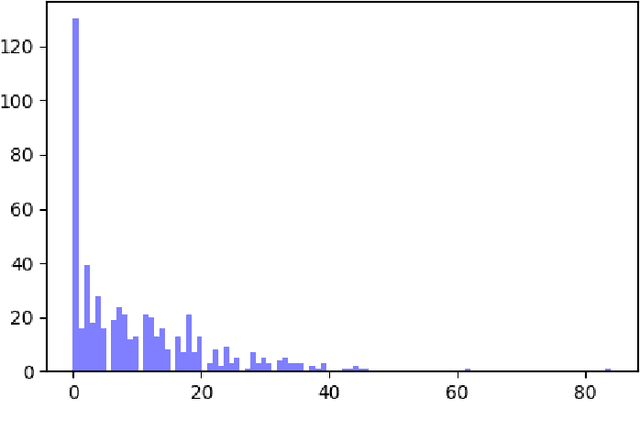

SocNav1: A Dataset to Benchmark and Learn Social Navigation Conventions

Sep 06, 2019

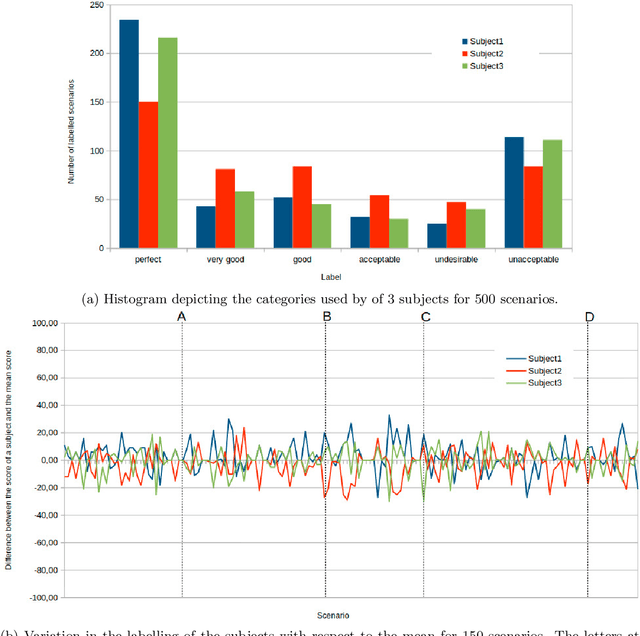

Abstract: Adapting to social conventions is an unavoidable requirement for the acceptance of assistive and social robots. While the scientific community broadly accepts that assistive robots and social robot companions are unlikely to have widespread use in the near future, their presence in health-care and other medium-sized institutions is becoming a reality. These robots will have a beneficial impact in industry and other fields such as health care. The growing number of research contributions to social navigation is also indicative of the importance of the topic. To foster the future prevalence of these robots, they must be useful, but also socially accepted. The first step to be able to actively ask for collaboration or permission is to estimate whether the robot would make people feel uncomfortable otherwise, and that is precisely the goal of algorithms evaluating social navigation compliance. Some approaches provide analytic models, whereas others use machine learning techniques such as neural networks. This data report presents and describes SocNav1, a dataset for social navigation conventions. The aims of SocNav1 are two-fold: a) enabling comparison of the algorithms that robots use to assess the convenience of their presence in a particular position when navigating; b) providing a sufficient amount of data so that modern machine learning algorithms such as deep neural networks can be used. Because of the structured nature of the data, SocNav1 is particularly well-suited to be used to benchmark non-Euclidean machine learning algorithms such as Graph Neural Networks (see [1]). The dataset has been made available in a public repository.
