Paul Grouchy

FlowCLAS: Enhancing Normalizing Flow Via Contrastive Learning For Anomaly Segmentation

Nov 29, 2024

Abstract:Anomaly segmentation is a valuable computer vision task for safety-critical applications that need to be aware of unexpected events. Current state-of-the-art (SOTA) scene-level anomaly segmentation approaches rely on diverse inlier class labels during training, limiting their ability to leverage vast unlabeled datasets and pre-trained vision encoders. These methods may underperform in domains with reduced color diversity and limited object classes. Conversely, existing unsupervised methods struggle with anomaly segmentation with the diverse scenes of less restricted domains. To address these challenges, we introduce FlowCLAS, a novel self-supervised framework that utilizes vision foundation models to extract rich features and employs a normalizing flow network to learn their density distribution. We enhance the model's discriminative power by incorporating Outlier Exposure and contrastive learning in the latent space. FlowCLAS significantly outperforms all existing methods on the ALLO anomaly segmentation benchmark for space robotics and demonstrates competitive results on multiple road anomaly segmentation benchmarks for autonomous driving, including Fishyscapes Lost&Found and Road Anomaly. These results highlight FlowCLAS's effectiveness in addressing the unique challenges of space anomaly segmentation while retaining SOTA performance in the autonomous driving domain without reliance on inlier segmentation labels.

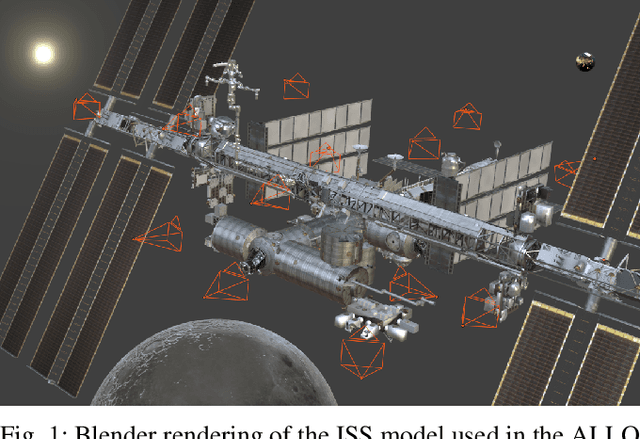

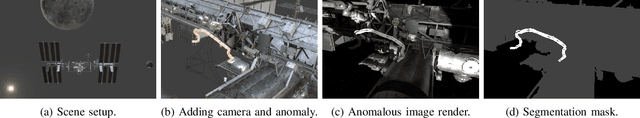

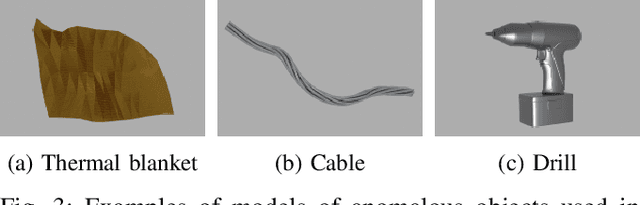

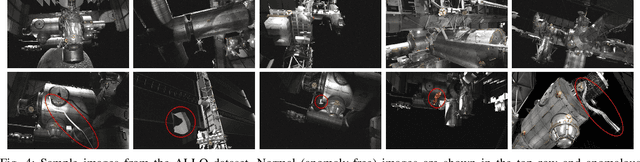

ALLO: A Photorealistic Dataset and Data Generation Pipeline for Anomaly Detection During Robotic Proximity Operations in Lunar Orbit

Sep 30, 2024

Abstract:NASA's forthcoming Lunar Gateway space station, which will be uncrewed most of the time, will need to operate with an unprecedented level of autonomy. Enhancing autonomy on the Gateway presents several unique challenges, one of which is to equip the Canadarm3, the Gateway's external robotic system, with the capability to perform worksite monitoring. Monitoring will involve using the arm's inspection cameras to detect any anomalies within the operating environment, a task complicated by the widely-varying lighting conditions in space. In this paper, we introduce the visual anomaly detection and localization task for space applications and establish a benchmark with our novel synthetic dataset called ALLO (for Anomaly Localization in Lunar Orbit). We develop a complete data generation pipeline to create ALLO, which we use to evaluate the performance of state-of-the-art visual anomaly detection algorithms. Given the low tolerance for risk during space operations and the lack of relevant data, we emphasize the need for novel, robust, and accurate anomaly detection methods to handle the challenging visual conditions found in lunar orbit and beyond.

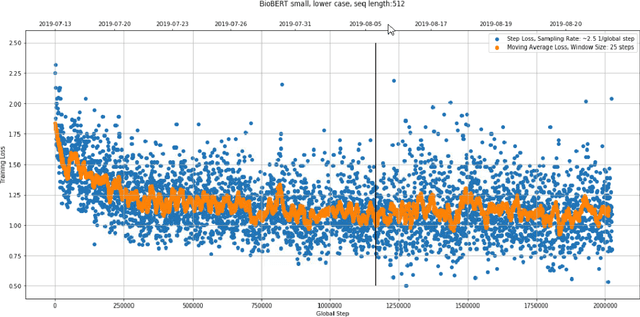

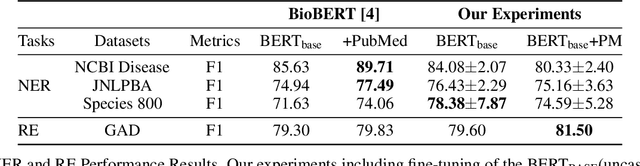

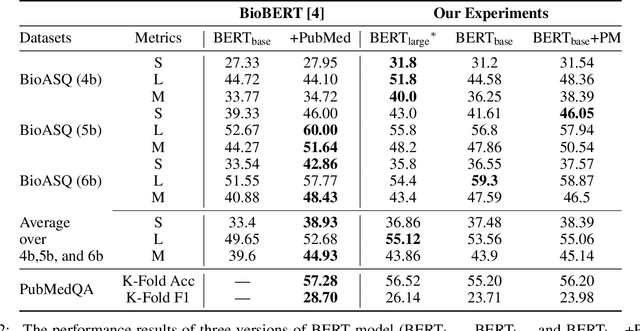

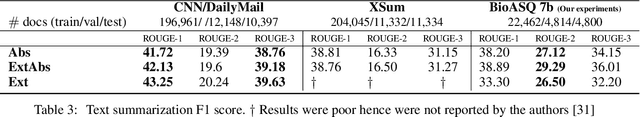

An Experimental Evaluation of Transformer-based Language Models in the Biomedical Domain

Dec 31, 2020

Abstract:With the growing amount of text in health data, there have been rapid advances in large pre-trained models that can be applied to a wide variety of biomedical tasks with minimal task-specific modifications. Emphasizing the cost of these models, which renders technical replication challenging, this paper summarizes experiments conducted in replicating BioBERT and further pre-training and careful fine-tuning in the biomedical domain. We also investigate the effectiveness of domain-specific and domain-agnostic pre-trained models across downstream biomedical NLP tasks. Our finding confirms that pre-trained models can be impactful in some downstream NLP tasks (QA and NER) in the biomedical domain; however, this improvement may not justify the high cost of domain-specific pre-training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge