Chang Won Lee

FlowCLAS: Enhancing Normalizing Flow Via Contrastive Learning For Anomaly Segmentation

Nov 29, 2024

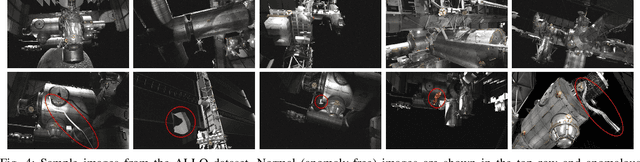

Abstract: Anomaly segmentation is a valuable computer vision task for safety-critical applications that need to be aware of unexpected events. Current state-of-the-art (SOTA) scene-level anomaly segmentation approaches rely on diverse inlier class labels during training, limiting their ability to leverage vast unlabeled datasets and pre-trained vision encoders. These methods may underperform in domains with reduced color diversity and limited object classes. Conversely, existing unsupervised methods struggle to segment anomalies in the diverse scenes of less restricted domains. To address these challenges, we introduce FlowCLAS, a novel self-supervised framework that utilizes vision foundation models to extract rich features and employs a normalizing flow network to learn their density distribution. We enhance the model's discriminative power by incorporating Outlier Exposure and contrastive learning in the latent space. FlowCLAS significantly outperforms all existing methods on the ALLO anomaly segmentation benchmark for space robotics and demonstrates competitive results on multiple road anomaly segmentation benchmarks for autonomous driving, including Fishyscapes Lost&Found and Road Anomaly. These results highlight FlowCLAS's effectiveness in addressing the unique challenges of space anomaly segmentation while retaining SOTA performance in the autonomous driving domain without reliance on inlier segmentation labels.
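To illustrate the general idea of scoring anomalies with a normalizing flow, here is a minimal sketch of the change-of-variables log-likelihood for a single element-wise affine flow with a standard normal base distribution. It is not the FlowCLAS architecture, which the abstract does not specify; the function names `affine_flow_log_prob` and `anomaly_score` and the parameters `shift` and `log_scale` are hypothetical, chosen only for this example.

```python
import numpy as np


def affine_flow_log_prob(x, shift, log_scale):
    """Log-density of x under z = (x - shift) * exp(-log_scale), z ~ N(0, I).

    Illustrative only: a real flow stacks many learned invertible layers.
    """
    x = np.asarray(x, dtype=float)
    # Forward pass of the flow: map features into the latent space.
    z = (x - shift) * np.exp(-log_scale)
    # Log-density of the standard normal base distribution, summed over dims.
    log_base = -0.5 * (z ** 2 + np.log(2.0 * np.pi)).sum(axis=-1)
    # Log |det dz/dx| of the element-wise affine map.
    log_det = -np.sum(log_scale)
    return log_base + log_det


def anomaly_score(x, shift, log_scale):
    """Negative log-likelihood: higher means less likely under the model."""
    return -affine_flow_log_prob(x, shift, log_scale)


if __name__ == "__main__":
    features = np.array([[0.0, 0.0], [5.0, 5.0]])
    scores = anomaly_score(features, shift=np.zeros(2), log_scale=np.zeros(2))
    print(scores)  # the second feature vector receives the higher score
```

In a segmentation setting, a score like this would be computed per spatial location of the feature map, so that low-likelihood regions stand out as candidate anomalies.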

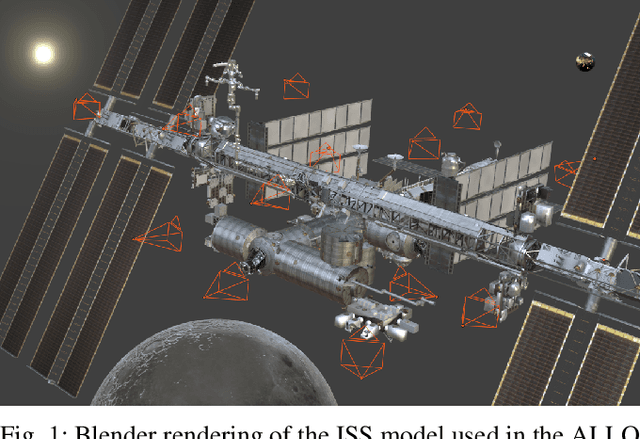

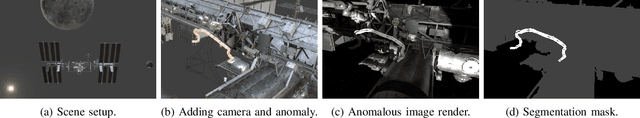

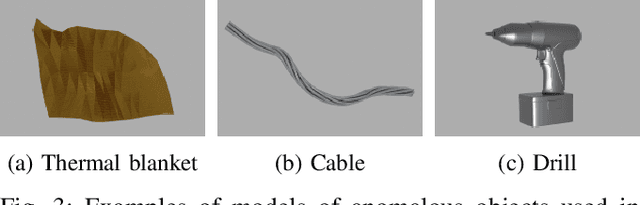

ALLO: A Photorealistic Dataset and Data Generation Pipeline for Anomaly Detection During Robotic Proximity Operations in Lunar Orbit

Sep 30, 2024

Abstract: NASA's forthcoming Lunar Gateway space station, which will be uncrewed most of the time, will need to operate with an unprecedented level of autonomy. Enhancing autonomy on the Gateway presents several unique challenges, one of which is to equip the Canadarm3, the Gateway's external robotic system, with the capability to perform worksite monitoring. Monitoring will involve using the arm's inspection cameras to detect any anomalies within the operating environment, a task complicated by the widely varying lighting conditions in space. In this paper, we introduce the visual anomaly detection and localization task for space applications and establish a benchmark with our novel synthetic dataset called ALLO (for Anomaly Localization in Lunar Orbit). We develop a complete data generation pipeline to create ALLO, which we use to evaluate the performance of state-of-the-art visual anomaly detection algorithms. Given the low tolerance for risk during space operations and the lack of relevant data, we emphasize the need for novel, robust, and accurate anomaly detection methods to handle the challenging visual conditions found in lunar orbit and beyond.

UncertaintyTrack: Exploiting Detection and Localization Uncertainty in Multi-Object Tracking

Feb 19, 2024

Abstract: Multi-object tracking (MOT) methods have seen a significant boost in performance recently, due to strong interest from the research community and steadily improving object detection methods. The majority of tracking methods follow the tracking-by-detection (TBD) paradigm, blindly trusting the incoming detections with no sense of their associated localization uncertainty. This lack of uncertainty awareness poses a problem in safety-critical tasks such as autonomous driving, where passengers could be put at risk due to erroneous detections that have propagated to downstream tasks, including MOT. While there are existing works in probabilistic object detection that predict the localization uncertainty around the boxes, no work in 2D MOT for autonomous driving has studied whether these estimates are meaningful enough to be leveraged effectively in object tracking. We introduce UncertaintyTrack, a collection of extensions that can be applied to multiple TBD trackers to account for localization uncertainty estimates from probabilistic object detectors. Experiments on the Berkeley Deep Drive MOT dataset show that the combination of our method and informative uncertainty estimates reduces the number of ID switches by around 19% and improves mMOTA by 2-3%. The source code is available at https://github.com/TRAILab/UncertaintyTrack

ProPanDL: A Modular Architecture for Uncertainty-Aware Panoptic Segmentation

Apr 17, 2023

Abstract: We introduce ProPanDL, a family of networks capable of uncertainty-aware panoptic segmentation. Unlike existing segmentation methods, ProPanDL is capable of estimating full probability distributions for both the semantic and spatial aspects of panoptic segmentation. We implement and evaluate ProPanDL variants capable of estimating both parametric (Variance Network) and parameter-free (SampleNet) distributions quantifying pixel-wise spatial uncertainty. We couple these approaches with two methods (Temperature Scaling and Evidential Deep Learning) for semantic uncertainty estimation. To evaluate the uncertainty-aware panoptic segmentation task, we address limitations with existing approaches by proposing new metrics that enable separate evaluation of spatial and semantic uncertainty. We additionally propose the use of the energy score, a proper scoring rule, for more robust evaluation of spatial output distributions. Using these metrics, we conduct an extensive evaluation of ProPanDL variants. Our results demonstrate that ProPanDL is capable of estimating well-calibrated and meaningful output distributions while still retaining strong performance on the base panoptic segmentation task.
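For readers unfamiliar with the energy score mentioned above, the following is a minimal sketch of its standard sample-based estimate, ES(P, y) = E‖X − y‖ − ½ E‖X − X′‖, where X and X′ are independent draws from the predicted distribution P and y is the observed target. The function name `energy_score` and the array shapes are assumptions made for this example; the abstract does not describe how ProPanDL computes the score in practice.

```python
import numpy as np


def energy_score(samples, target):
    """Sample-based energy score estimate (lower is better).

    samples: array of shape (m, d), m draws from the predicted distribution.
    target:  array of shape (d,), the observed ground-truth value.
    """
    samples = np.asarray(samples, dtype=float)
    target = np.asarray(target, dtype=float)
    # First term: mean distance between each sample and the observation.
    term_obs = np.linalg.norm(samples - target, axis=1).mean()
    # Second term: mean pairwise distance between samples (all m*m pairs).
    diffs = samples[:, None, :] - samples[None, :, :]
    term_spread = np.linalg.norm(diffs, axis=2).mean()
    return term_obs - 0.5 * term_spread


if __name__ == "__main__":
    draws = np.array([[0.0, 0.0], [2.0, 0.0]])
    print(energy_score(draws, np.array([1.0, 0.0])))  # 0.5
```

Because the score works directly on samples, it applies equally to parametric and parameter-free predicted distributions, which is one plausible reason for preferring it when comparing the two kinds of output.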
