Patrick Zheng

Tendon-Actuated Concentric Tube Endonasal Robot (TACTER)

Apr 28, 2025

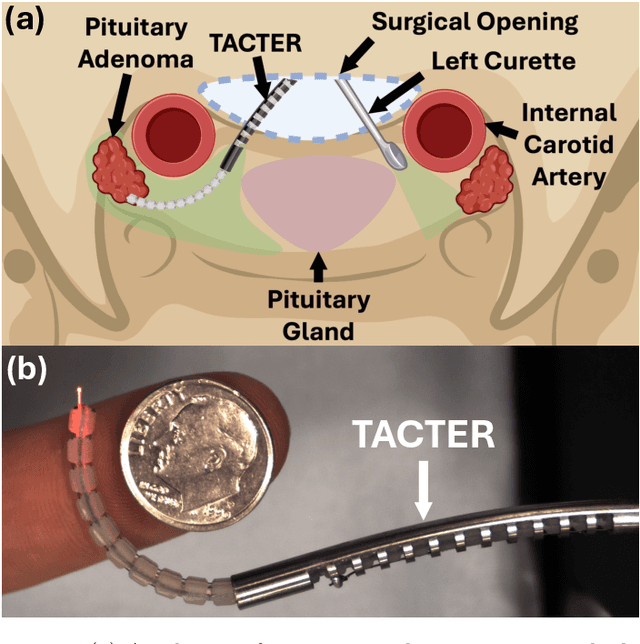

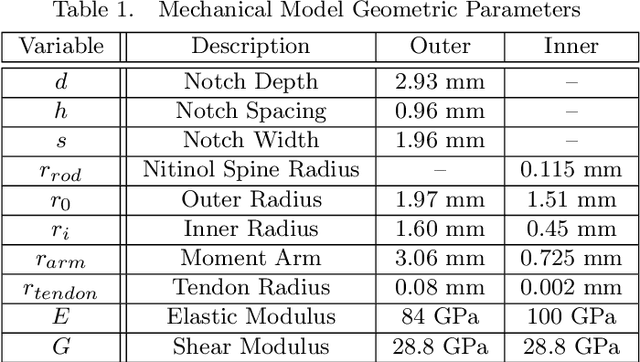

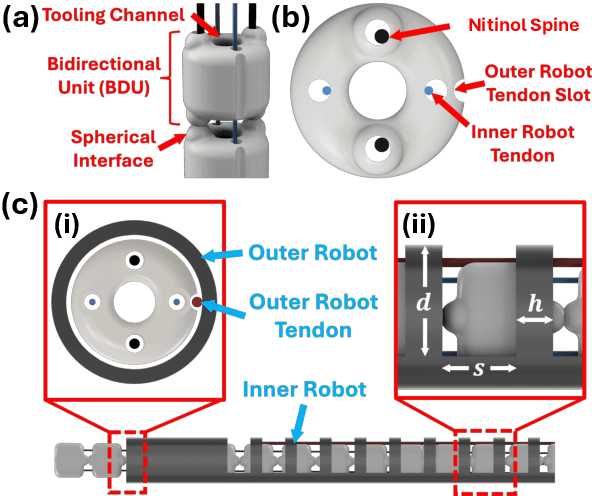

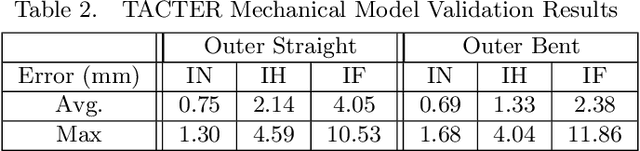

Abstract: Endoscopic endonasal approaches (EEA) have become more prevalent for minimally invasive skull base and sinus surgeries. However, rigid scopes and tools significantly decrease the surgeon's ability to operate in tight anatomical spaces and avoid critical structures such as the internal carotid artery and cranial nerves. This paper proposes a novel tendon-actuated concentric tube endonasal robot (TACTER) design in which two tendon-actuated robots are arranged concentrically, resulting in an outer and an inner robot that can bend independently. The outer robot is a unidirectionally asymmetric notch (UAN) nickel-titanium robot, and the inner robot is a 3D-printed bidirectional robot with a nickel-titanium bending member. In addition, the inner robot can translate axially within the outer robot, allowing the tool to traverse through structures while bending, thereby executing follow-the-leader motion. A Cosserat-rod-based mechanical model is proposed that takes the tendon tensions of both tendon-actuated robots and the relative translation between the robots as inputs and predicts the TACTER tip position for varying input parameters. The model is validated with experiments, and a human cadaver experiment is presented to demonstrate maneuverability from the nostril to the sphenoid sinus. This work presents the first tendon-actuated concentric tube (TACT) dexterous robotic tool capable of performing follow-the-leader motion within natural nasal orifices to cover workspaces typically required for a successful EEA.

SNAP: Stopping Catastrophic Forgetting in Hebbian Learning with Sigmoidal Neuronal Adaptive Plasticity

Oct 20, 2024

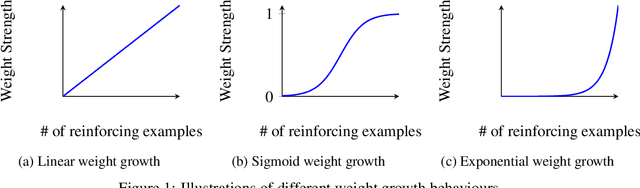

Abstract: Artificial Neural Networks (ANNs) suffer from catastrophic forgetting, where learning new tasks causes old tasks to be forgotten. Existing Machine Learning (ML) algorithms, including those using Stochastic Gradient Descent (SGD) and Hebbian Learning, typically update their weights linearly with experience, i.e., independently of their current strength. This contrasts with biological neurons, which are very plastic at intermediate strengths but consolidate with Long-Term Potentiation (LTP) once they reach a certain strength. We hypothesize that this mechanism might help mitigate catastrophic forgetting. We introduce Sigmoidal Neuronal Adaptive Plasticity (SNAP), an artificial approximation to Long-Term Potentiation for ANNs in which the weights follow a sigmoidal growth behaviour, allowing them to consolidate and stabilize when they reach sufficiently large or small values. We then compare SNAP to linear weight growth and exponential weight growth and find that SNAP completely prevents the forgetting of previous tasks for Hebbian Learning but not for SGD-based learning.
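The contrast between linear and strength-dependent weight updates can be illustrated with a minimal sketch. This is not the paper's implementation: the function names, the learning rate, the restriction of weights to the interval (0, 1), and the logistic plasticity factor `w * (1 - w)` are all assumptions chosen for illustration. The factor is largest at intermediate strength and shrinks toward zero as a weight approaches either extreme, which is one simple way to obtain sigmoidal growth.

```python
def linear_update(w, hebb, lr=0.1):
    """Linear rule: the step size does not depend on the current weight."""
    return w + lr * hebb


def sigmoidal_update(w, hebb, lr=0.1):
    """Illustrative strength-dependent rule (an assumption, not the paper's code).

    The factor w * (1 - w) peaks at w = 0.5 and vanishes near 0 and 1,
    so weights near either extreme barely move.
    """
    return w + lr * hebb * w * (1.0 - w)


def apply_steps(update, w, hebb, steps):
    """Apply the same Hebbian signal repeatedly and return the final weight."""
    for _ in range(steps):
        w = update(w, hebb)
    return w


if __name__ == "__main__":
    # A conflicting signal (hebb = -1) stands in for interference from a new task.
    for start in (0.5, 0.99):
        lin = apply_steps(linear_update, start, -1.0, steps=3)
        sig = apply_steps(sigmoidal_update, start, -1.0, steps=3)
        print(f"start={start}: linear -> {lin:.3f}, sigmoidal -> {sig:.3f}")
```

Under these assumptions, a weight that starts near 1 changes far less under the sigmoidal rule than under the linear rule when it receives the same conflicting signal, while a weight at intermediate strength remains comparatively plastic.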

Technical Design Review of Duke Robotics Club's Oogway: An AUV for RoboSub 2024

Oct 13, 2024

Abstract: The Duke Robotics Club is proud to present our robot for the 2024 RoboSub Competition: Oogway. Now in its second year, Oogway has been dramatically upgraded in both its capabilities and reliability. Oogway was built on the principle of independent, well-integrated, and reliable subsystems. Individual components and subsystems were designed and tested separately. Oogway's most advanced capabilities are a result of the tight integration between these subsystems. Examples include a re-envisioned controls system, an entirely new electrical stack, advanced sonar integration, additional cameras and system monitoring, a new marker dropper, and a watertight capsule mechanism. These additions enabled Oogway to prequalify for RoboSub 2024.
