Parham M. Kebria

Leveraging Optimal Transport for Enhanced Offline Reinforcement Learning in Surgical Robotic Environments

Oct 13, 2023

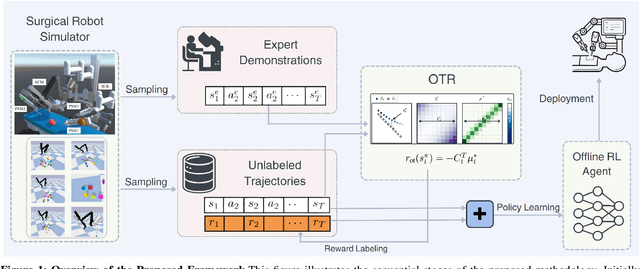

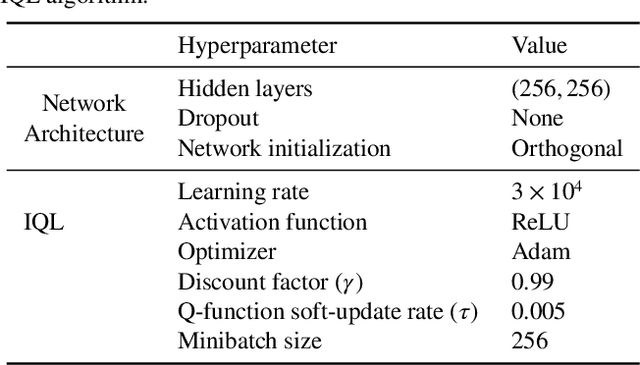

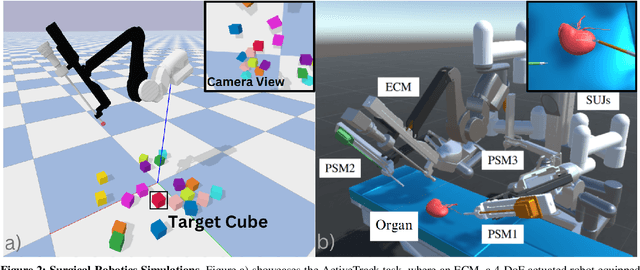

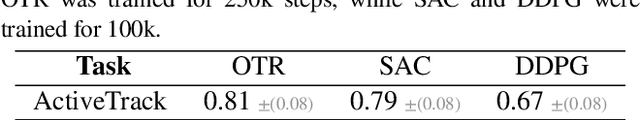

Abstract: Most Reinforcement Learning (RL) methods are traditionally studied in an active learning setting, where agents directly interact with their environments, observe action outcomes, and learn through trial and error. However, allowing partially trained agents to interact with real physical systems poses significant challenges, including high costs, safety risks, and the need for constant supervision. Offline RL addresses these cost and safety concerns by leveraging existing datasets, reducing the need for resource-intensive real-time interaction. Nevertheless, a substantial challenge remains: these datasets must be meticulously annotated with rewards. In this paper, we introduce Optimal Transport Reward (OTR) labelling, an algorithm that assigns rewards to offline trajectories using a small number of high-quality expert demonstrations. The core principle of OTR is to use Optimal Transport (OT) to compute an optimal alignment between an unlabeled trajectory from the dataset and an expert demonstration. This alignment yields a similarity measure that can be interpreted as a reward signal, which an offline RL algorithm can then use to learn a policy. This approach removes the need for handcrafted rewards, making it possible to harness large datasets for policy learning. Using the SurRoL simulation platform, which is tailored for surgical robot learning, we generate datasets and use them to train policies with OTR. By demonstrating the efficacy of OTR in a new domain, we highlight its versatility and its potential to accelerate RL deployment across a wide range of fields.
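
A minimal sketch of OT-based reward labelling, assuming uniform weights over timesteps, a squared Euclidean cost between observations, and entropy-regularized OT solved with log-domain Sinkhorn iterations. The names `sinkhorn_plan` and `ot_rewards` and the value of `epsilon` are hypothetical; this is not the paper's implementation. Each step i of the unlabeled trajectory receives the reward r_i = -Σ_j C_ij P_ij, i.e. minus the transport cost it pays to be matched to the expert demonstration.

```python
import numpy as np

def _logsumexp(x, axis):
    """Numerically stable log(sum(exp(x))) along one axis."""
    m = np.max(x, axis=axis, keepdims=True)
    return np.squeeze(m, axis=axis) + np.log(np.sum(np.exp(x - m), axis=axis))

def sinkhorn_plan(cost, epsilon=0.01, n_iters=500):
    """Entropic OT plan between uniform marginals via log-domain Sinkhorn (assumed solver)."""
    n, m = cost.shape
    log_a = np.full(n, -np.log(n))  # uniform mass on each trajectory step
    log_b = np.full(m, -np.log(m))  # uniform mass on each expert step
    f, g = np.zeros(n), np.zeros(m)  # dual potentials
    for _ in range(n_iters):
        # Alternately rescale rows and columns so the plan matches each marginal.
        f = epsilon * (log_a - _logsumexp((g[None, :] - cost) / epsilon, axis=1))
        g = epsilon * (log_b - _logsumexp((f[:, None] - cost) / epsilon, axis=0))
    return np.exp((f[:, None] + g[None, :] - cost) / epsilon)

def ot_rewards(trajectory, expert, epsilon=0.01):
    """Hypothetical OTR-style labelling: r_i = -sum_j C_ij * P_ij for each step i."""
    diff = trajectory[:, None, :] - expert[None, :, :]
    cost = np.sum(diff ** 2, axis=-1)  # squared Euclidean cost (assumption)
    plan = sinkhorn_plan(cost, epsilon)
    return -np.sum(cost * plan, axis=1)
```

Steps that stay close to the expert's states receive rewards near zero, while steps far from every expert state are penalized. The entropic term ε smooths the alignment and keeps it cheap to compute; the exact cost function, ε, and any reward rescaling used in the paper are not specified in this abstract.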

A Review of Machine Learning-based Security in Cloud Computing

Sep 10, 2023

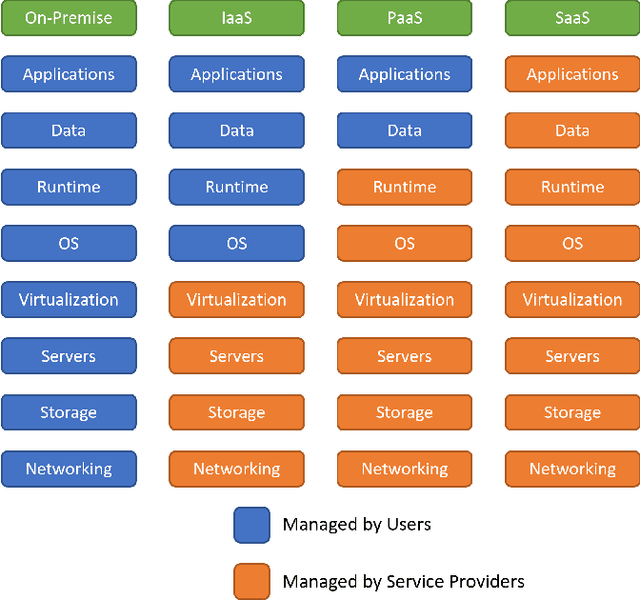

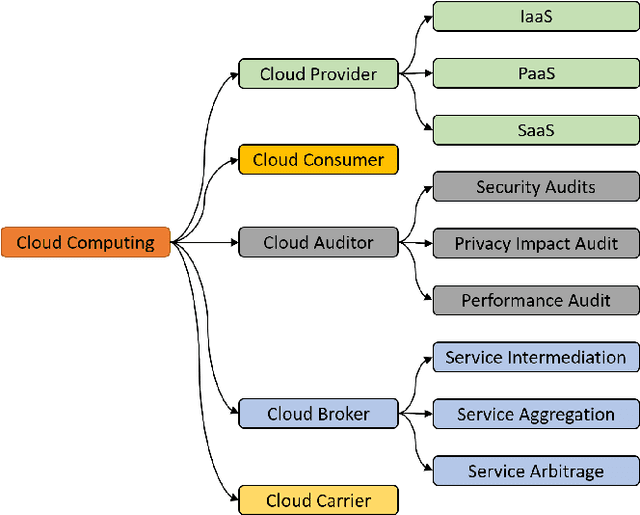

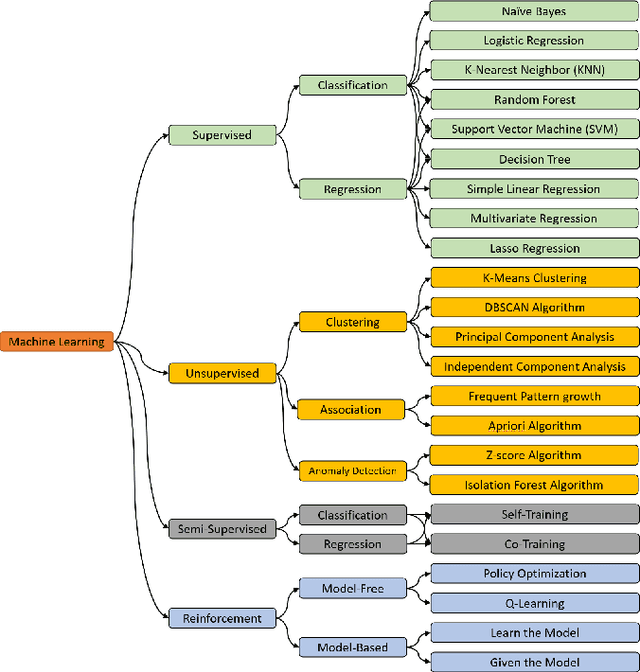

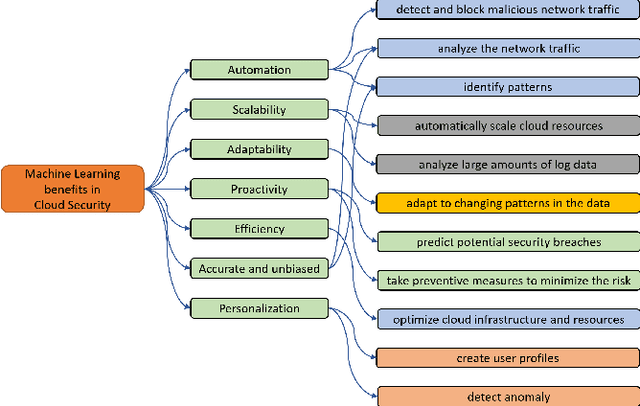

Abstract: Cloud Computing (CC) is revolutionizing the way IT resources are delivered to users, allowing them to access and manage their systems with greater cost-effectiveness and simpler infrastructure. However, the growth of CC brings a host of security risks, including threats to availability, integrity, and confidentiality. To address these challenges, Cloud Service Providers (CSPs) increasingly use Machine Learning (ML) to reduce the need for human intervention in identifying and resolving security issues. Because it can analyze vast amounts of data and make highly accurate predictions, ML can transform the way CSPs approach security. In this paper, we explore recent research on ML-based security in Cloud Computing. We examine the features and effectiveness of a range of ML algorithms, highlighting their strengths and potential limitations. Our goal is to provide a comprehensive overview of the current state of ML in cloud security and to outline the possibilities this emerging field offers.

A Review on Robot Manipulation Methods in Human-Robot Interactions

Sep 09, 2023

Abstract: Robot manipulation is an important part of human-robot interaction technology. However, traditional pre-programmed methods can only accomplish simple, repetitive tasks. To enable effective communication between robots and humans, and to predict and adapt to uncertain environments, this paper reviews recent autonomous and adaptive learning algorithms for robotic manipulation. It covers typical applications and challenges of human-robot interaction, the fundamental tasks of robot manipulation, and one of the most widely used formulations of robot manipulation, the Markov Decision Process (MDP). Recent research on robot manipulation is mainly based on Reinforcement Learning and Imitation Learning. This review highlights the importance of Deep Reinforcement Learning in enabling robots to complete complex manipulation tasks in disturbed and unfamiliar environments. Imitation Learning, in turn, makes it possible to avoid reward function design and to achieve a simple, stable, supervised learning process. This paper reviews and compares the main features and popular algorithms of both Reinforcement Learning and Imitation Learning.
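
To make the MDP formulation concrete, here is a minimal value-iteration sketch that solves a small finite MDP with Bellman optimality backups. The reach-and-grasp MDP below (states, actions, probabilities, rewards) is entirely hypothetical and chosen only for illustration; it is not taken from the review.

```python
def value_iteration(states, actions, transition, reward, gamma=0.9, tol=1e-8):
    """Solve a finite MDP by repeated Bellman optimality backups.

    transition[s][a] is a list of (probability, next_state) pairs;
    reward[s][a] is the expected immediate reward for taking a in s.
    """
    def q_value(V, s, a):
        return reward[s][a] + gamma * sum(p * V[s2] for p, s2 in transition[s][a])

    V = {s: 0.0 for s in states}
    while True:
        delta = 0.0
        for s in states:
            best = max(q_value(V, s, a) for a in actions)
            delta = max(delta, abs(best - V[s]))
            V[s] = best  # in-place (Gauss-Seidel) update
        if delta < tol:
            break
    # Greedy policy with respect to the converged value function.
    policy = {s: max(actions, key=lambda a: q_value(V, s, a)) for s in states}
    return V, policy

# Hypothetical toy reach-and-grasp MDP (illustrative only, not from the paper).
STATES = ["far", "near", "grasped"]
ACTIONS = ["approach", "grasp"]
TRANSITION = {
    "far": {"approach": [(1.0, "near")], "grasp": [(1.0, "far")]},
    "near": {"approach": [(1.0, "near")], "grasp": [(0.8, "grasped"), (0.2, "near")]},
    "grasped": {"approach": [(1.0, "grasped")], "grasp": [(1.0, "grasped")]},
}
REWARD = {
    "far": {"approach": 0.0, "grasp": 0.0},
    "near": {"approach": 0.0, "grasp": 0.8},  # expected reward: 0.8 success chance x 1.0
    "grasped": {"approach": 0.0, "grasp": 0.0},
}

V, policy = value_iteration(STATES, ACTIONS, TRANSITION, REWARD)
```

The resulting policy approaches from "far" and grasps from "near". Deep RL methods replace the explicit tables with learned function approximators, since real manipulation tasks have continuous, high-dimensional states.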

A Survey of Imitation Learning: Algorithms, Recent Developments, and Challenges

Sep 05, 2023

Abstract: In recent years, the development of robotics and artificial intelligence (AI) systems has been nothing short of remarkable. As these systems continue to evolve, they are being used in increasingly complex and unstructured environments, such as autonomous driving, aerial robotics, and natural language processing. As a consequence, programming their behaviors manually or defining them through reward functions (as in reinforcement learning, RL) has become exceedingly difficult. Such environments require a high degree of flexibility and adaptability, making it challenging to specify an optimal set of rules or reward signals that accounts for all possible situations. In these environments, learning from an expert's behavior through imitation is often more appealing. This is where imitation learning (IL) comes into play: a process in which desired behavior is learned by imitating an expert whose behavior is provided through demonstrations. This paper introduces IL and gives an overview of its underlying assumptions and approaches. It also describes recent advances and emerging areas of research in the field in detail. Additionally, the paper discusses how researchers have addressed common challenges associated with IL and suggests directions for future research. Overall, the goal of the paper is to provide a comprehensive guide to the growing field of IL in robotics and AI.
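
Behavior cloning, the simplest form of IL, treats demonstrations as a supervised dataset of (state, action) pairs. The sketch below assumes a linear policy, continuous actions, and noise-free demonstrations; the function name `fit_linear_bc_policy` is hypothetical, and practical systems typically use neural networks instead of a linear map.

```python
import numpy as np

def fit_linear_bc_policy(expert_states, expert_actions):
    """Behavior cloning: regress expert actions on states with least squares.

    expert_states: (N, state_dim); expert_actions: (N, action_dim).
    Returns a policy mapping (M, state_dim) states to (M, action_dim) actions.
    """
    def features(s):
        return np.hstack([s, np.ones((s.shape[0], 1))])  # append a bias term

    W, *_ = np.linalg.lstsq(features(expert_states), expert_actions, rcond=None)

    def policy(states):
        return features(states) @ W

    return policy
```

No reward function appears anywhere: the expert's actions act as labels, which is why IL can sidestep reward design. The trade-off is that the cloned policy is only reliable near states the expert actually visited.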

A Comprehensive Study on Torchvision Pre-trained Models for Fine-grained Inter-species Classification

Oct 14, 2021

Abstract: This study explores the pre-trained models offered in the Torchvision package of the PyTorch library and investigates their effectiveness on fine-grained image classification. Transfer Learning is an effective way to achieve very good performance when training data are scarce. In many real-world situations, it is not possible to collect enough data to train a deep neural network efficiently. Transfer Learning models are pre-trained on a large dataset and can perform well on smaller datasets with significantly lower training time. The Torchvision package offers many models for applying Transfer Learning to smaller datasets, so researchers may need guidance in selecting a good one. We investigate Torchvision pre-trained models on four datasets: 10 Monkey Species, 225 Bird Species, Fruits 360, and Oxford 102 Flowers. These datasets differ in image resolution, number of classes, and achievable accuracy. We also compare each model's usual fully-connected layer with the Spinal fully-connected layer to investigate the effectiveness of SpinalNet. The Spinal fully-connected layer gives better performance in most situations. For a fair comparison, we apply the same augmentation to all models on a given dataset. This paper may help future Computer Vision researchers choose a suitable Transfer Learning model.
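
A minimal NumPy forward pass illustrating the data flow of a Spinal fully-connected head, as we understand it from the SpinalNet design: the backbone's feature vector is split into two halves, each hidden layer receives one half (alternating) plus the previous hidden layer's output, and all hidden outputs are concatenated before the classifier. The class name `SpinalHead`, the four spinal layers, the layer width, the ReLU activations, and the even feature count are assumptions; weights are random and untrained, so this shows structure only.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

class SpinalHead:
    """Hypothetical Spinal FC head: forward pass only, random untrained weights."""

    def __init__(self, in_features, width, num_classes, n_layers=4, seed=0):
        rng = np.random.default_rng(seed)
        self.half = in_features // 2  # assumes an even feature count
        # Layer 1 sees only half the input; later layers also see the previous output.
        dims_in = [self.half] + [self.half + width] * (n_layers - 1)
        self.layers = [(rng.normal(0, 0.1, (d, width)), np.zeros(width)) for d in dims_in]
        self.out = (rng.normal(0, 0.1, (n_layers * width, num_classes)), np.zeros(num_classes))

    def __call__(self, x):
        halves = [x[:, :self.half], x[:, self.half:2 * self.half]]
        outputs, prev = [], None
        for i, (W, b) in enumerate(self.layers):
            chunk = halves[i % 2]  # alternate between first and second half
            inp = chunk if prev is None else np.hstack([chunk, prev])
            prev = relu(inp @ W + b)
            outputs.append(prev)
        W_out, b_out = self.out
        return np.hstack(outputs) @ W_out + b_out  # class logits
```

In a transfer-learning setup, a head like this would replace the pre-trained model's usual final fully-connected layer, taking the backbone's pooled features as input.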

* Accepted

An Uncertainty-aware Transfer Learning-based Framework for Covid-19 Diagnosis

Jul 26, 2020

Abstract: The early and reliable detection of COVID-19 infected patients is essential to prevent and limit its outbreak. PCR tests for COVID-19 detection are unavailable in many countries, and there are genuine concerns about their reliability and performance. Motivated by these shortcomings, this paper proposes a deep uncertainty-aware transfer learning framework for COVID-19 detection using medical images. Four popular convolutional neural networks (CNNs), VGG16, ResNet50, DenseNet121, and InceptionResNetV2, are first applied to extract deep features from chest X-ray and computed tomography (CT) images. The extracted features are then processed by different machine learning and statistical modelling techniques to identify COVID-19 cases. We also calculate and report the epistemic uncertainty of the classification results to identify regions where the trained models are not confident about their decisions (the out-of-distribution problem). Comprehensive simulation results on X-ray and CT image datasets indicate that linear support vector machine and neural network models achieve the best results as measured by accuracy, sensitivity, specificity, and AUC. We also find that predictive uncertainty estimates are much higher for CT images than for X-ray images.
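
One common way to estimate epistemic uncertainty, assuming several stochastic forward passes (for example MC dropout or an ensemble) produce class probabilities for each image, is to split the predictive entropy into an expected-entropy term and a mutual-information term; the latter is the epistemic part. The function name `predictive_uncertainty` is hypothetical, and the abstract does not state which estimator the paper uses.

```python
import numpy as np

def predictive_uncertainty(prob_samples, eps=1e-12):
    """prob_samples: (T, N, C) class probabilities from T passes or ensemble members.

    Returns (total, epistemic), each of shape (N,).
    """
    mean = prob_samples.mean(axis=0)
    total = -np.sum(mean * np.log(mean + eps), axis=-1)  # predictive entropy
    # Average entropy of the individual predictions (the aleatoric part).
    expected = -np.sum(prob_samples * np.log(prob_samples + eps), axis=-1).mean(axis=0)
    epistemic = total - expected  # mutual information between prediction and model
    return total, epistemic
```

When all passes agree, the epistemic term is near zero even if each prediction is uncertain; when confident passes disagree, it grows toward log C, flagging inputs the models have not learned to handle.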