Nicolas Talabot

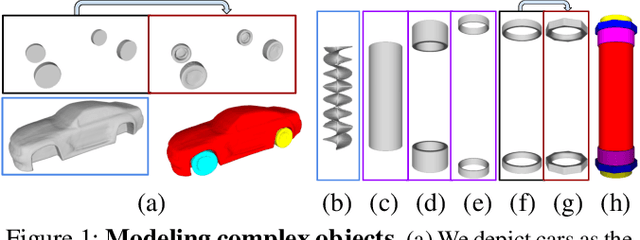

PartSDF: Part-Based Implicit Neural Representation for Composite 3D Shape Parametrization and Optimization

Feb 18, 2025

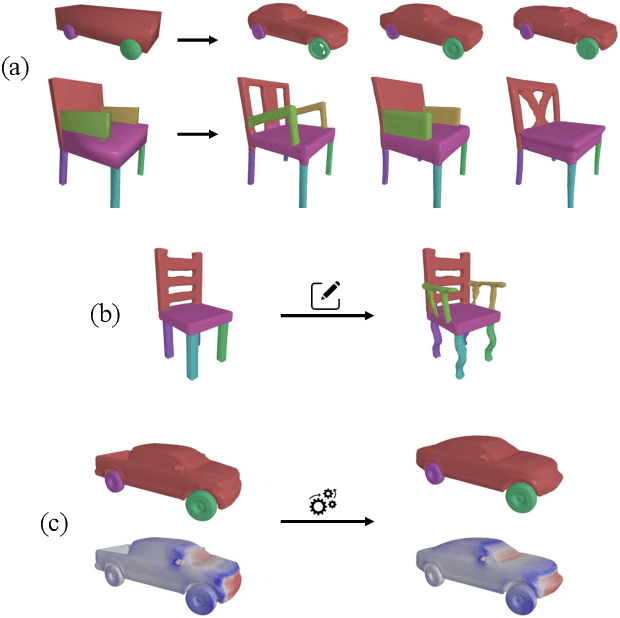

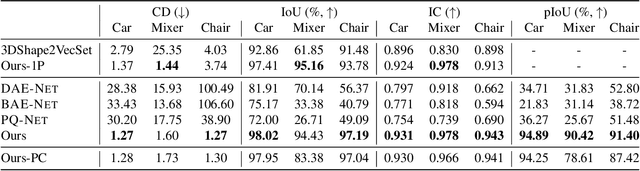

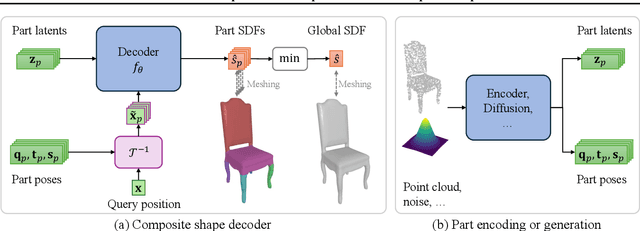

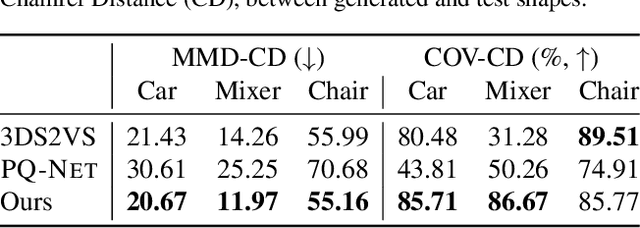

Abstract:Accurate 3D shape representation is essential in engineering applications such as design, optimization, and simulation. In practice, engineering workflows require structured, part-aware representations, as objects are inherently designed as assemblies of distinct components. However, most existing methods either model shapes holistically or decompose them without predefined part structures, limiting their applicability in real-world design tasks. We propose PartSDF, a supervised implicit representation framework that explicitly models composite shapes with independent, controllable parts while maintaining shape consistency. Despite its simple single-decoder architecture, PartSDF outperforms both supervised and unsupervised baselines in reconstruction and generation tasks. We further demonstrate its effectiveness as a structured shape prior for engineering applications, enabling precise control over individual components while preserving overall coherence. Code available at https://github.com/cvlab-epfl/PartSDF.

DiscoNeRF: Class-Agnostic Object Field for 3D Object Discovery

Aug 19, 2024

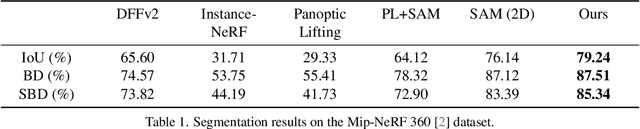

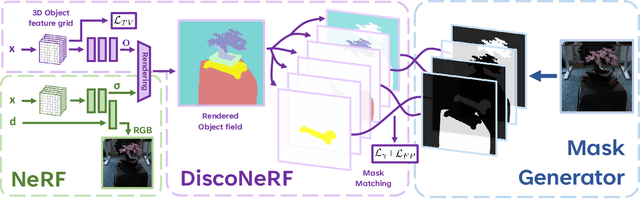

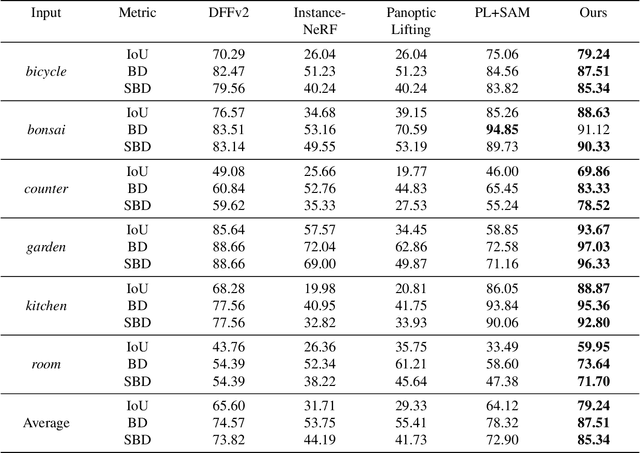

Abstract:Neural Radiance Fields (NeRFs) have become a powerful tool for modeling 3D scenes from multiple images. However, NeRFs remain difficult to segment into semantically meaningful regions. Previous approaches to 3D segmentation of NeRFs either require user interaction to isolate a single object, or they rely on 2D semantic masks with a limited number of classes for supervision. As a consequence, they generalize poorly to class-agnostic masks automatically generated in real scenes. This is attributable to the ambiguity arising from zero-shot segmentation, yielding inconsistent masks across views. In contrast, we propose a method that is robust to inconsistent segmentations and successfully decomposes the scene into a set of objects of any class. By introducing a limited number of competing object slots against which masks are matched, a meaningful object representation emerges that best explains the 2D supervision and minimizes an additional regularization term. Our experiments demonstrate the ability of our method to generate 3D panoptic segmentations on complex scenes, and extract high-quality 3D assets from NeRFs that can then be used in virtual 3D environments.

Neural Surface Detection for Unsigned Distance Fields

Jul 25, 2024

Abstract:Extracting surfaces from Signed Distance Fields (SDFs) can be accomplished using traditional algorithms, such as Marching Cubes. However, since they rely on sign flips across the surface, these algorithms cannot be used directly on Unsigned Distance Fields (UDFs). In this work, we introduce a deep-learning approach to taking a UDF and turning it locally into an SDF, so that it can be effectively triangulated using existing algorithms. We show that it achieves better accuracy in surface detection than existing methods. Furthermore it generalizes well to unseen shapes and datasets, while being parallelizable. We also demonstrate the flexibily of the method by using it in conjunction with DualMeshUDF, a state of the art dual meshing method that can operate on UDFs, improving its results and removing the need to tune its parameters.

Enforcing Topological Interaction between Implicit Surfaces via Uniform Sampling

Jul 16, 2023

Abstract:Objects interact with each other in various ways, including containment, contact, or maintaining fixed distances. Ensuring these topological interactions is crucial for accurate modeling in many scenarios. In this paper, we propose a novel method to refine 3D object representations, ensuring that their surfaces adhere to a topological prior. Our key observation is that the object interaction can be observed via a stochastic approximation method: the statistic of signed distances between a large number of random points to the object surfaces reflect the interaction between them. Thus, the object interaction can be indirectly manipulated by using choosing a set of points as anchors to refine the object surfaces. In particular, we show that our method can be used to enforce two objects to have a specific contact ratio while having no surface intersection. The conducted experiments show that our proposed method enables accurate 3D reconstruction of human hearts, ensuring proper topological connectivity between components. Further, we show that our proposed method can be used to simulate various ways a hand can interact with an arbitrary object.

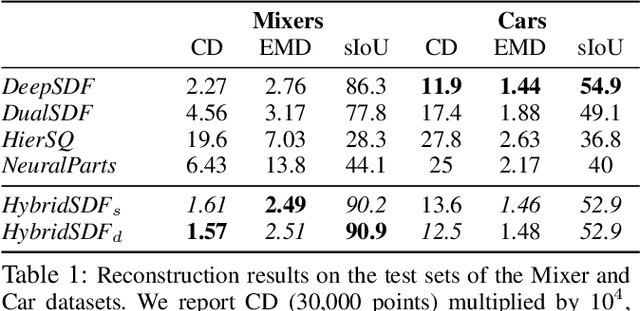

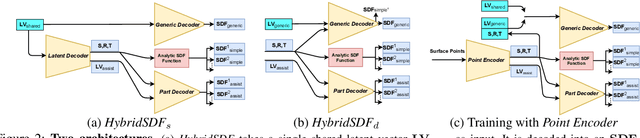

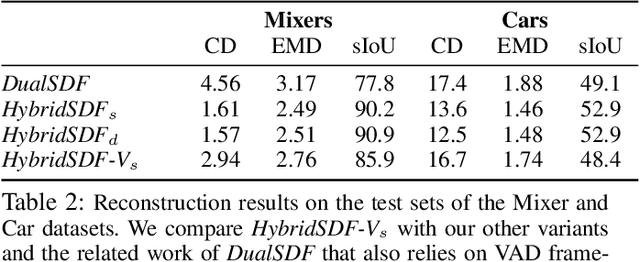

HybridSDF: Combining Free Form Shapes and Geometric Primitives for effective Shape Manipulation

Sep 24, 2021

Abstract:CAD modeling typically involves the use of simple geometric primitives whereas recent advances in deep-learning based 3D surface modeling have opened new shape design avenues. Unfortunately, these advances have not yet been accepted by the CAD community because they cannot be integrated into engineering workflows. To remedy this, we propose a novel approach to effectively combining geometric primitives and free-form surfaces represented by implicit surfaces for accurate modeling that preserves interpretability, enforces consistency, and enables easy manipulation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge