Natalia Criado

Moral Uncertainty and the Problem of Fanaticism

Dec 18, 2023Abstract:While there is universal agreement that agents ought to act ethically, there is no agreement as to what constitutes ethical behaviour. To address this problem, recent philosophical approaches to `moral uncertainty' propose aggregation of multiple ethical theories to guide agent behaviour. However, one of the foundational proposals for aggregation - Maximising Expected Choiceworthiness (MEC) - has been criticised as being vulnerable to fanaticism; the problem of an ethical theory dominating agent behaviour despite low credence (confidence) in said theory. Fanaticism thus undermines the `democratic' motivation for accommodating multiple ethical perspectives. The problem of fanaticism has not yet been mathematically defined. Representing moral uncertainty as an instance of social welfare aggregation, this paper contributes to the field of moral uncertainty by 1) formalising the problem of fanaticism as a property of social welfare functionals and 2) providing non-fanatical alternatives to MEC, i.e. Highest k-trimmed Mean and Highest Median.

Collaborative filtering to capture AI user's preferences as norms

Aug 10, 2023Abstract:Customising AI technologies to each user's preferences is fundamental to them functioning well. Unfortunately, current methods require too much user involvement and fail to capture their true preferences. In fact, to avoid the nuisance of manually setting preferences, users usually accept the default settings even if these do not conform to their true preferences. Norms can be useful to regulate behaviour and ensure it adheres to user preferences but, while the literature has thoroughly studied norms, most proposals take a formal perspective. Indeed, while there has been some research on constructing norms to capture a user's privacy preferences, these methods rely on domain knowledge which, in the case of AI technologies, is difficult to obtain and maintain. We argue that a new perspective is required when constructing norms, which is to exploit the large amount of preference information readily available from whole systems of users. Inspired by recommender systems, we believe that collaborative filtering can offer a suitable approach to identifying a user's norm preferences without excessive user involvement.

Predicting Privacy Preferences for Smart Devices as Norms

Feb 21, 2023Abstract:Smart devices, such as smart speakers, are becoming ubiquitous, and users expect these devices to act in accordance with their preferences. In particular, since these devices gather and manage personal data, users expect them to adhere to their privacy preferences. However, the current approach of gathering these preferences consists in asking the users directly, which usually triggers automatic responses failing to capture their true preferences. In response, in this paper we present a collaborative filtering approach to predict user preferences as norms. These preference predictions can be readily adopted or can serve to assist users in determining their own preferences. Using a dataset of privacy preferences of smart assistant users, we test the accuracy of our predictions.

Discovering and Interpreting Conceptual Biases in Online Communities

Oct 27, 2020

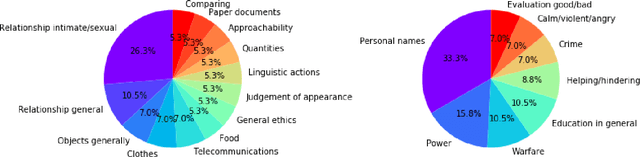

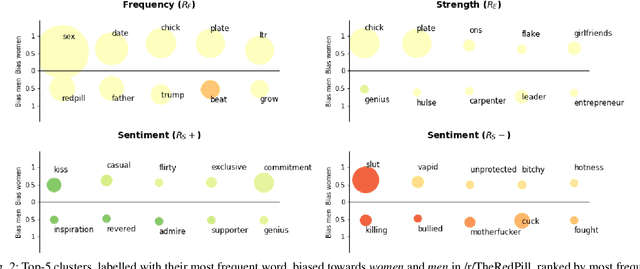

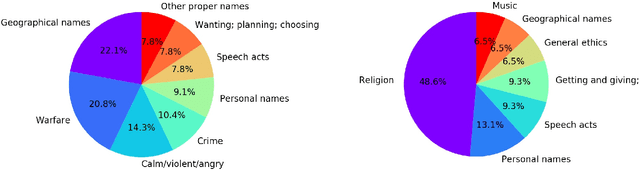

Abstract:Language carries implicit human biases, functioning both as a reflection and a perpetuation of stereotypes that people carry with them. Recently, ML-based NLP methods such as word embeddings have been shown to learn such language biases with striking accuracy. This capability of word embeddings has been successfully exploited as a tool to quantify and study human biases. However, previous studies only consider a predefined set of conceptual biases to attest (e.g., whether gender is more or less associated with particular jobs), or just discover biased words without helping to understand their meaning at the conceptual level. As such, these approaches are either unable to find conceptual biases that have not been defined in advance, or the biases they find are difficult to interpret and study. This makes existing approaches unsuitable to discover and interpret biases in online communities, as such communities may carry different biases than those in mainstream culture. This paper proposes a general, data-driven approach to automatically discover and help interpret conceptual biases encoded in word embeddings. We apply this approach to study the conceptual biases present in the language used in online communities and experimentally show the validity and stability of our method.

Discovering and Categorising Language Biases in Reddit

Aug 13, 2020

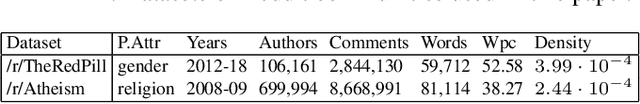

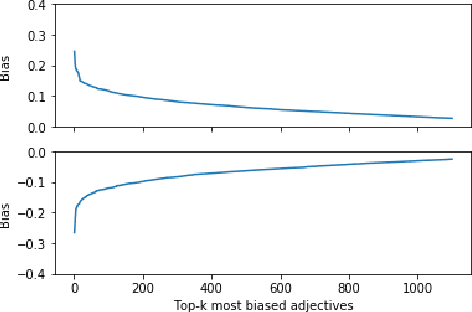

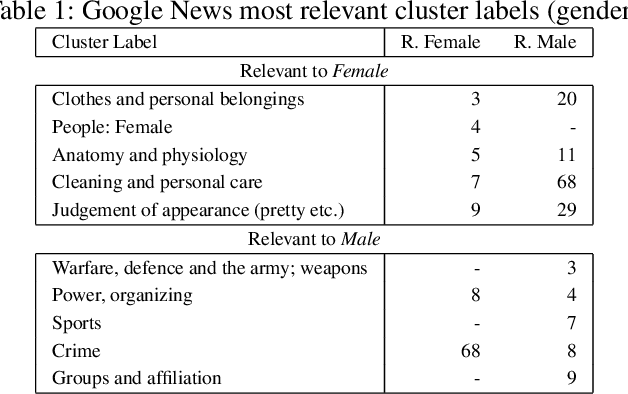

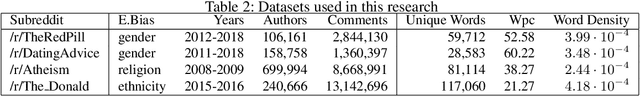

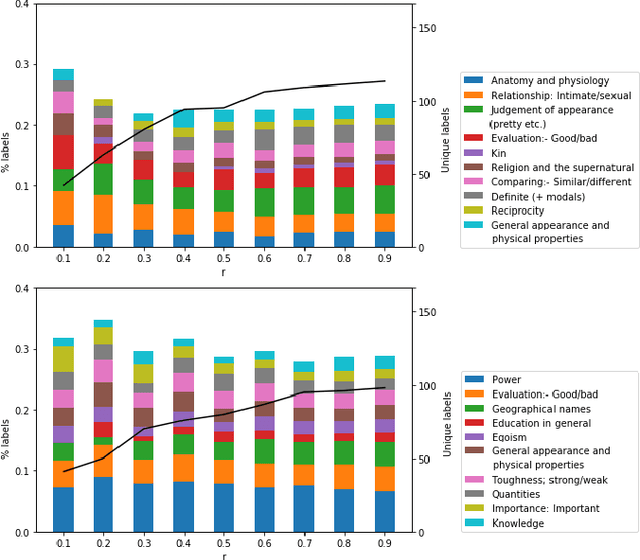

Abstract:We present a data-driven approach using word embeddings to discover and categorise language biases on the discussion platform Reddit. As spaces for isolated user communities, platforms such as Reddit are increasingly connected to issues of racism, sexism and other forms of discrimination. Hence, there is a need to monitor the language of these groups. One of the most promising AI approaches to trace linguistic biases in large textual datasets involves word embeddings, which transform text into high-dimensional dense vectors and capture semantic relations between words. Yet, previous studies require predefined sets of potential biases to study, e.g., whether gender is more or less associated with particular types of jobs. This makes these approaches unfit to deal with smaller and community-centric datasets such as those on Reddit, which contain smaller vocabularies and slang, as well as biases that may be particular to that community. This paper proposes a data-driven approach to automatically discover language biases encoded in the vocabulary of online discourse communities on Reddit. In our approach, protected attributes are connected to evaluative words found in the data, which are then categorised through a semantic analysis system. We verify the effectiveness of our method by comparing the biases we discover in the Google News dataset with those found in previous literature. We then successfully discover gender bias, religion bias, and ethnic bias in different Reddit communities. We conclude by discussing potential application scenarios and limitations of this data-driven bias discovery method.

* Author's copy of the paper accepted at the International AAAI Conference on Web and Social Media (ICWSM 2021)

Bias and Discrimination in AI: a cross-disciplinary perspective

Aug 11, 2020Abstract:With the widespread and pervasive use of Artificial Intelligence (AI) for automated decision-making systems, AI bias is becoming more apparent and problematic. One of its negative consequences is discrimination: the unfair, or unequal treatment of individuals based on certain characteristics. However, the relationship between bias and discrimination is not always clear. In this paper, we survey relevant literature about bias and discrimination in AI from an interdisciplinary perspective that embeds technical, legal, social and ethical dimensions. We show that finding solutions to bias and discrimination in AI requires robust cross-disciplinary collaborations.

A Normative approach to Attest Digital Discrimination

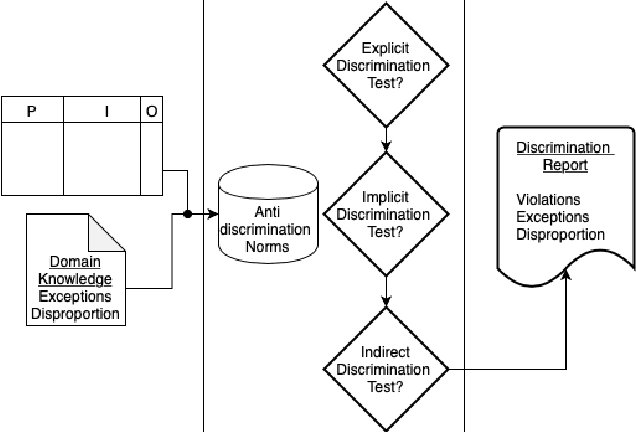

Aug 03, 2020

Abstract:Digital discrimination is a form of discrimination whereby users are automatically treated unfairly, unethically or just differently based on their personal data by a machine learning (ML) system. Examples of digital discrimination include low-income neighbourhood's targeted with high-interest loans or low credit scores, and women being undervalued by 21% in online marketing. Recently, different techniques and tools have been proposed to detect biases that may lead to digital discrimination. These tools often require technical expertise to be executed and for their results to be interpreted. To allow non-technical users to benefit from ML, simpler notions and concepts to represent and reason about digital discrimination are needed. In this paper, we use norms as an abstraction to represent different situations that may lead to digital discrimination. In particular, we formalise non-discrimination norms in the context of ML systems and propose an algorithm to check whether ML systems violate these norms.

A model to support collective reasoning: Formalization, analysis and computational assessment

Jul 14, 2020

Abstract:Inspired by e-participation systems, in this paper we propose a new model to represent human debates and methods to obtain collective conclusions from them. This model overcomes drawbacks of existing approaches by allowing users to introduce new pieces of information into the discussion, to relate them to existing pieces, and also to express their opinion on the pieces proposed by other users. In addition, our model does not assume that users' opinions are rational in order to extract information from it, an assumption that significantly limits current approaches. Instead, we define a weaker notion of rationality that characterises coherent opinions, and we consider different scenarios based on the coherence of individual opinions and the level of consensus that users have on the debate structure. Considering these two factors, we analyse the outcomes of different opinion aggregation functions that compute a collective decision based on the individual opinions and the debate structure. In particular, we demonstrate that aggregated opinions can be coherent even if there is a lack of consensus and individual opinions are not coherent. We conclude our analysis with a computational evaluation demonstrating that collective opinions can be computed efficiently for real-sized debates.

Attesting Biases and Discrimination using Language Semantics

Sep 10, 2019Abstract:AI agents are increasingly deployed and used to make automated decisions that affect our lives on a daily basis. It is imperative to ensure that these systems embed ethical principles and respect human values. We focus on how we can attest to whether AI agents treat users fairly without discriminating against particular individuals or groups through biases in language. In particular, we discuss human unconscious biases, how they are embedded in language, and how AI systems inherit those biases by learning from and processing human language. Then, we outline a roadmap for future research to better understand and attest problematic AI biases derived from language.

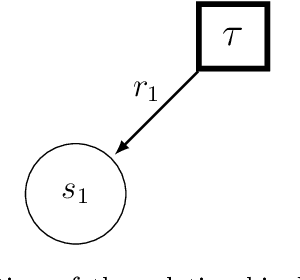

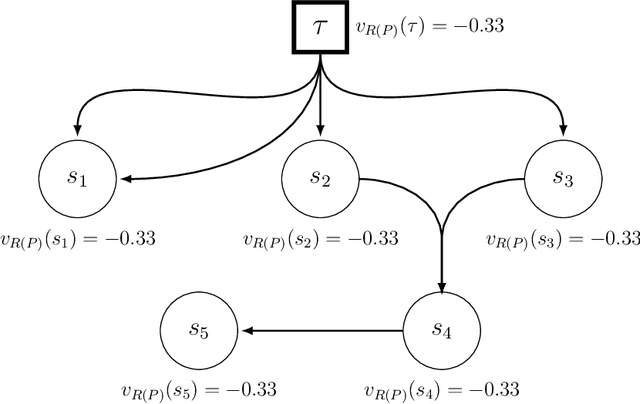

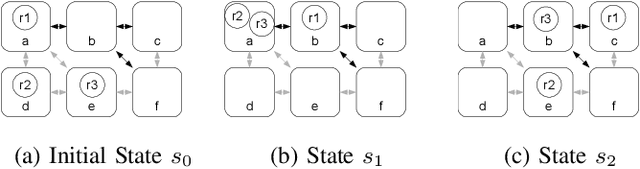

Norm Monitoring under Partial Action Observability

Apr 21, 2016

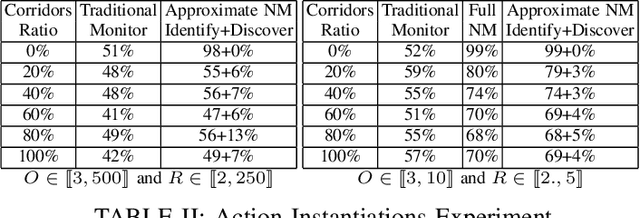

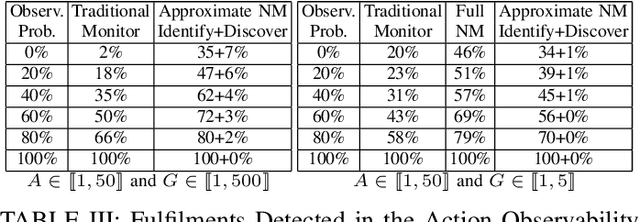

Abstract:In the context of using norms for controlling multi-agent systems, a vitally important question that has not yet been addressed in the literature is the development of mechanisms for monitoring norm compliance under partial action observability. This paper proposes the reconstruction of unobserved actions to tackle this problem. In particular, we formalise the problem of reconstructing unobserved actions, and propose an information model and algorithms for monitoring norms under partial action observability using two different processes for reconstructing unobserved actions. Our evaluation shows that reconstructing unobserved actions increases significantly the number of norm violations and fulfilments detected.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge