Michael Wan

SCIZOR: A Self-Supervised Approach to Data Curation for Large-Scale Imitation Learning

May 28, 2025Abstract:Imitation learning advances robot capabilities by enabling the acquisition of diverse behaviors from human demonstrations. However, large-scale datasets used for policy training often introduce substantial variability in quality, which can negatively impact performance. As a result, automatically curating datasets by filtering low-quality samples to improve quality becomes essential. Existing robotic curation approaches rely on costly manual annotations and perform curation at a coarse granularity, such as the dataset or trajectory level, failing to account for the quality of individual state-action pairs. To address this, we introduce SCIZOR, a self-supervised data curation framework that filters out low-quality state-action pairs to improve the performance of imitation learning policies. SCIZOR targets two complementary sources of low-quality data: suboptimal data, which hinders learning with undesirable actions, and redundant data, which dilutes training with repetitive patterns. SCIZOR leverages a self-supervised task progress predictor for suboptimal data to remove samples lacking task progression, and a deduplication module operating on joint state-action representation for samples with redundant patterns. Empirically, we show that SCIZOR enables imitation learning policies to achieve higher performance with less data, yielding an average improvement of 15.4% across multiple benchmarks. More information is available at: https://ut-austin-rpl.github.io/SCIZOR/

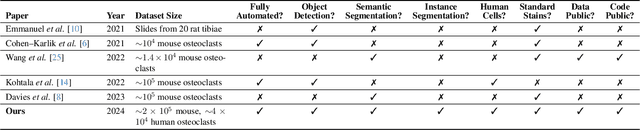

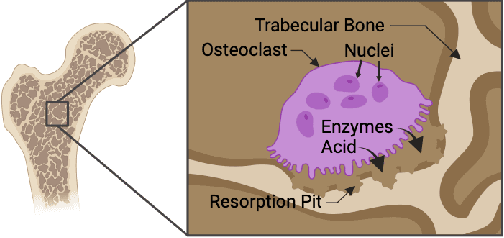

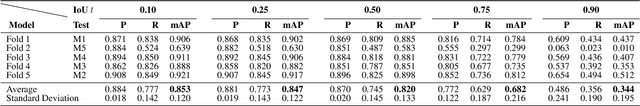

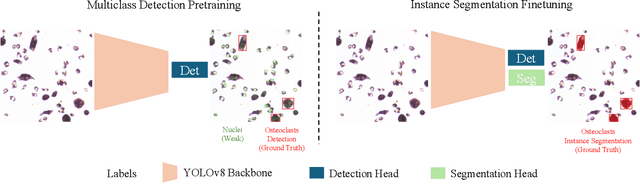

NOISe: Nuclei-Aware Osteoclast Instance Segmentation for Mouse-to-Human Domain Transfer

Apr 15, 2024

Abstract:Osteoclast cell image analysis plays a key role in osteoporosis research, but it typically involves extensive manual image processing and hand annotations by a trained expert. In the last few years, a handful of machine learning approaches for osteoclast image analysis have been developed, but none have addressed the full instance segmentation task required to produce the same output as that of the human expert led process. Furthermore, none of the prior, fully automated algorithms have publicly available code, pretrained models, or annotated datasets, inhibiting reproduction and extension of their work. We present a new dataset with ~2*10^5 expert annotated mouse osteoclast masks, together with a deep learning instance segmentation method which works for both in vitro mouse osteoclast cells on plastic tissue culture plates and human osteoclast cells on bone chips. To our knowledge, this is the first work to automate the full osteoclast instance segmentation task. Our method achieves a performance of 0.82 mAP_0.5 (mean average precision at intersection-over-union threshold of 0.5) in cross validation for mouse osteoclasts. We present a novel nuclei-aware osteoclast instance segmentation training strategy (NOISe) based on the unique biology of osteoclasts, to improve the model's generalizability and boost the mAP_0.5 from 0.60 to 0.82 on human osteoclasts. We publish our annotated mouse osteoclast image dataset, instance segmentation models, and code at github.com/michaelwwan/noise to enable reproducibility and to provide a public tool to accelerate osteoporosis research.

WB LUTs: Contrastive Learning for White Balancing Lookup Tables

Apr 15, 2024

Abstract:Automatic white balancing (AWB), one of the first steps in an integrated signal processing (ISP) pipeline, aims to correct the color cast induced by the scene illuminant. An incorrect white balance (WB) setting or AWB failure can lead to an undesired blue or red tint in the rendered sRGB image. To address this, recent methods pose the post-capture WB correction problem as an image-to-image translation task and train deep neural networks to learn the necessary color adjustments at a lower resolution. These low resolution outputs are post-processed to generate high resolution WB corrected images, forming a bottleneck in the end-to-end run time. In this paper we present a 3D Lookup Table (LUT) based WB correction model called WB LUTs that can generate high resolution outputs in real time. We introduce a contrastive learning framework with a novel hard sample mining strategy, which improves the WB correction quality of baseline 3D LUTs by 25.5%. Experimental results demonstrate that the proposed WB LUTs perform competitively against state-of-the-art models on two benchmark datasets while being 300 times faster using 12.7 times less memory. Our model and code are available at https://github.com/skrmanne/3DLUT_sRGB_WB.

Subtle Signals: Video-based Detection of Infant Non-nutritive Sucking as a Neurodevelopmental Cue

Oct 24, 2023Abstract:Non-nutritive sucking (NNS), which refers to the act of sucking on a pacifier, finger, or similar object without nutrient intake, plays a crucial role in assessing healthy early development. In the case of preterm infants, NNS behavior is a key component in determining their readiness for feeding. In older infants, the characteristics of NNS behavior offer valuable insights into neural and motor development. Additionally, NNS activity has been proposed as a potential safeguard against sudden infant death syndrome (SIDS). However, the clinical application of NNS assessment is currently hindered by labor-intensive and subjective finger-in-mouth evaluations. Consequently, researchers often resort to expensive pressure transducers for objective NNS signal measurement. To enhance the accessibility and reliability of NNS signal monitoring for both clinicians and researchers, we introduce a vision-based algorithm designed for non-contact detection of NNS activity using baby monitor footage in natural settings. Our approach involves a comprehensive exploration of optical flow and temporal convolutional networks, enabling the detection and amplification of subtle infant-sucking signals. We successfully classify short video clips of uniform length into NNS and non-NNS periods. Furthermore, we investigate manual and learning-based techniques to piece together local classification results, facilitating the segmentation of longer mixed-activity videos into NNS and non-NNS segments of varying duration. Our research introduces two novel datasets of annotated infant videos, including one sourced from our clinical study featuring 19 infant subjects and 183 hours of overnight baby monitor footage.

Automatic Infant Respiration Estimation from Video: A Deep Flow-based Algorithm and a Novel Public Benchmark

Jul 24, 2023Abstract:Respiration is a critical vital sign for infants, and continuous respiratory monitoring is particularly important for newborns. However, neonates are sensitive and contact-based sensors present challenges in comfort, hygiene, and skin health, especially for preterm babies. As a step toward fully automatic, continuous, and contactless respiratory monitoring, we develop a deep-learning method for estimating respiratory rate and waveform from plain video footage in natural settings. Our automated infant respiration flow-based network (AIRFlowNet) combines video-extracted optical flow input and spatiotemporal convolutional processing tuned to the infant domain. We support our model with the first public annotated infant respiration dataset with 125 videos (AIR-125), drawn from eight infant subjects, set varied pose, lighting, and camera conditions. We include manual respiration annotations and optimize AIRFlowNet training on them using a novel spectral bandpass loss function. When trained and tested on the AIR-125 infant data, our method significantly outperforms other state-of-the-art methods in respiratory rate estimation, achieving a mean absolute error of $\sim$2.9 breaths per minute, compared to $\sim$4.7--6.2 for other public models designed for adult subjects and more uniform environments.

A Video-based End-to-end Pipeline for Non-nutritive Sucking Action Recognition and Segmentation in Young Infants

Mar 29, 2023Abstract:We present an end-to-end computer vision pipeline to detect non-nutritive sucking (NNS) -- an infant sucking pattern with no nutrition delivered -- as a potential biomarker for developmental delays, using off-the-shelf baby monitor video footage. One barrier to clinical (or algorithmic) assessment of NNS stems from its sparsity, requiring experts to wade through hours of footage to find minutes of relevant activity. Our NNS activity segmentation algorithm solves this problem by identifying periods of NNS with high certainty -- up to 94.0\% average precision and 84.9\% average recall across 30 heterogeneous 60 s clips, drawn from our manually annotated NNS clinical in-crib dataset of 183 hours of overnight baby monitor footage from 19 infants. Our method is based on an underlying NNS action recognition algorithm, which uses spatiotemporal deep learning networks and infant-specific pose estimation, achieving 94.9\% accuracy in binary classification of 960 2.5 s balanced NNS vs. non-NNS clips. Tested on our second, independent, and public NNS in-the-wild dataset, NNS recognition classification reaches 92.3\% accuracy, and NNS segmentation achieves 90.8\% precision and 84.2\% recall.

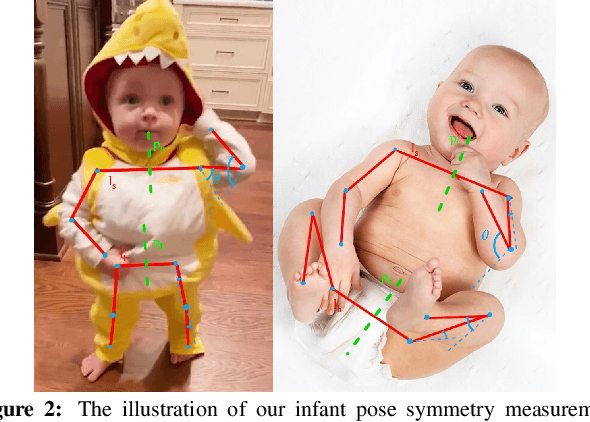

Automatic Assessment of Infant Face and Upper-Body Symmetry as Early Signs of Torticollis

Nov 07, 2022Abstract:We apply computer vision pose estimation techniques developed expressly for the data-scarce infant domain to the study of torticollis, a common condition in infants for which early identification and treatment is critical. Specifically, we use a combination of facial landmark and body joint estimation techniques designed for infants to estimate a range of geometric measures pertaining to face and upper body symmetry, drawn from an array of sources in the physical therapy and ophthalmology research literature in torticollis. We gauge performance with a range of metrics and show that the estimates of most these geometric measures are successful, yielding strong to very strong Spearman's $\rho$ correlation with ground truth values. Furthermore, we show that these estimates, derived from pose estimation neural networks designed for the infant domain, cleanly outperform estimates derived from more widely known networks designed for the adult domain

Computer Vision to the Rescue: Infant Postural Symmetry Estimation from Incongruent Annotations

Jul 19, 2022

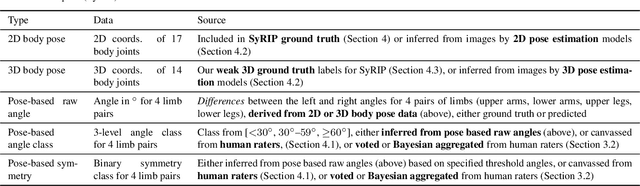

Abstract:Bilateral postural symmetry plays a key role as a potential risk marker for autism spectrum disorder (ASD) and as a symptom of congenital muscular torticollis (CMT) in infants, but current methods of assessing symmetry require laborious clinical expert assessments. In this paper, we develop a computer vision based infant symmetry assessment system, leveraging 3D human pose estimation for infants. Evaluation and calibration of our system against ground truth assessments is complicated by our findings from a survey of human ratings of angle and symmetry, that such ratings exhibit low inter-rater reliability. To rectify this, we develop a Bayesian estimator of the ground truth derived from a probabilistic graphical model of fallible human raters. We show that the 3D infant pose estimation model can achieve 68% area under the receiver operating characteristic curve performance in predicting the Bayesian aggregate labels, compared to only 61% from a 2D infant pose estimation model and 60% from a 3D adult pose estimation model, highlighting the importance of 3D poses and infant domain knowledge in assessing infant body symmetry. Our survey analysis also suggests that human ratings are susceptible to higher levels of bias and inconsistency, and hence our final 3D pose-based symmetry assessment system is calibrated but not directly supervised by Bayesian aggregate human ratings, yielding higher levels of consistency and lower levels of inter-limb assessment bias.

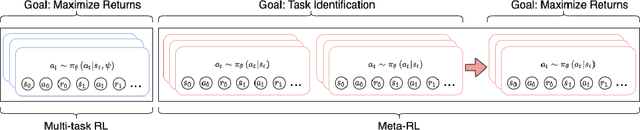

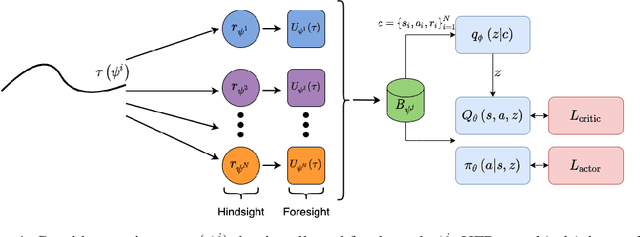

Hindsight Foresight Relabeling for Meta-Reinforcement Learning

Sep 18, 2021

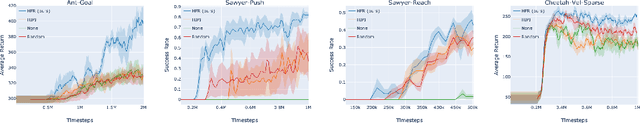

Abstract:Meta-reinforcement learning (meta-RL) algorithms allow for agents to learn new behaviors from small amounts of experience, mitigating the sample inefficiency problem in RL. However, while meta-RL agents can adapt quickly to new tasks at test time after experiencing only a few trajectories, the meta-training process is still sample-inefficient. Prior works have found that in the multi-task RL setting, relabeling past transitions and thus sharing experience among tasks can improve sample efficiency and asymptotic performance. We apply this idea to the meta-RL setting and devise a new relabeling method called Hindsight Foresight Relabeling (HFR). We construct a relabeling distribution using the combination of "hindsight", which is used to relabel trajectories using reward functions from the training task distribution, and "foresight", which takes the relabeled trajectories and computes the utility of each trajectory for each task. HFR is easy to implement and readily compatible with existing meta-RL algorithms. We find that HFR improves performance when compared to other relabeling methods on a variety of meta-RL tasks.

Mutual Information Based Knowledge Transfer Under State-Action Dimension Mismatch

Jun 12, 2020

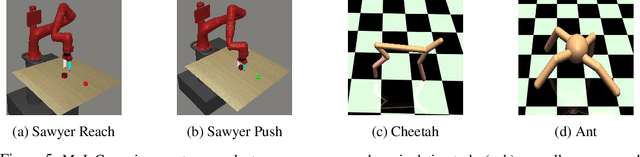

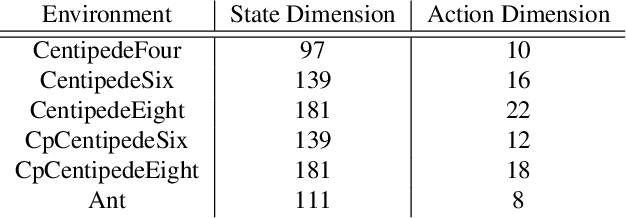

Abstract:Deep reinforcement learning (RL) algorithms have achieved great success on a wide variety of sequential decision-making tasks. However, many of these algorithms suffer from high sample complexity when learning from scratch using environmental rewards, due to issues such as credit-assignment and high-variance gradients, among others. Transfer learning, in which knowledge gained on a source task is applied to more efficiently learn a different but related target task, is a promising approach to improve the sample complexity in RL. Prior work has considered using pre-trained teacher policies to enhance the learning of the student policy, albeit with the constraint that the teacher and the student MDPs share the state-space or the action-space. In this paper, we propose a new framework for transfer learning where the teacher and the student can have arbitrarily different state- and action-spaces. To handle this mismatch, we produce embeddings which can systematically extract knowledge from the teacher policy and value networks, and blend it into the student networks. To train the embeddings, we use a task-aligned loss and show that the representations could be enriched further by adding a mutual information loss. Using a set of challenging simulated robotic locomotion tasks involving many-legged centipedes, we demonstrate successful transfer learning in situations when the teacher and student have different state- and action-spaces.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge