Michael Ebner

Predicting building types and functions at transnational scale

Sep 15, 2024

Abstract:Building-specific knowledge such as building type and function information is important for numerous energy applications. However, comprehensive datasets containing this information for individual households are missing in many regions of Europe. For the first time, we investigate whether it is feasible to predict building types and functional classes at a European scale based on only open GIS datasets available across countries. We train a graph neural network (GNN) classifier on a large-scale graph dataset consisting of OpenStreetMap (OSM) buildings across the EU, Norway, Switzerland, and the UK. To efficiently perform training using the large-scale graph, we utilize localized subgraphs. A graph transformer model achieves a high Cohen's kappa coefficient of 0.754 when classifying buildings into 9 classes, and a very high Cohen's kappa coefficient of 0.844 when classifying buildings into the residential and non-residential classes. The experimental results imply three core novel contributions to literature. Firstly, we show that building classification across multiple countries is possible using a multi-source dataset consisting of information about 2D building shape, land use, degree of urbanization, and countries as input, and OSM tags as ground truth. Secondly, our results indicate that GNN models that consider contextual information about building neighborhoods improve predictive performance compared to models that only consider individual buildings and ignore the neighborhood. Thirdly, we show that training with GNNs on localized subgraphs instead of standard GNNs improves performance for the task of building classification.

Synthetic white balancing for intra-operative hyperspectral imaging

Jul 24, 2023Abstract:Hyperspectral imaging shows promise for surgical applications to non-invasively provide spatially-resolved, spectral information. For calibration purposes, a white reference image of a highly-reflective Lambertian surface should be obtained under the same imaging conditions. Standard white references are not sterilizable, and so are unsuitable for surgical environments. We demonstrate the necessity for in situ white references and address this by proposing a novel, sterile, synthetic reference construction algorithm. The use of references obtained at different distances and lighting conditions to the subject were examined. Spectral and color reconstructions were compared with standard measurements qualitatively and quantitatively, using $\Delta E$ and normalised RMSE respectively. The algorithm forms a composite image from a video of a standard sterile ruler, whose imperfect reflectivity is compensated for. The reference is modelled as the product of independent spatial and spectral components, and a scalar factor accounting for gain, exposure, and light intensity. Evaluation of synthetic references against ideal but non-sterile references is performed using the same metrics alongside pixel-by-pixel errors. Finally, intraoperative integration is assessed though cadaveric experiments. Improper white balancing leads to increases in all quantitative and qualitative errors. Synthetic references achieve median pixel-by-pixel errors lower than 6.5% and produce similar reconstructions and errors to an ideal reference. The algorithm integrated well into surgical workflow, achieving median pixel-by-pixel errors of 4.77%, while maintaining good spectral and color reconstruction.

Hyperspectral Image Segmentation: A Preliminary Study on the Oral and Dental Spectral Image Database (ODSI-DB)

Mar 14, 2023Abstract:Visual discrimination of clinical tissue types remains challenging, with traditional RGB imaging providing limited contrast for such tasks. Hyperspectral imaging (HSI) is a promising technology providing rich spectral information that can extend far beyond three-channel RGB imaging. Moreover, recently developed snapshot HSI cameras enable real-time imaging with significant potential for clinical applications. Despite this, the investigation into the relative performance of HSI over RGB imaging for semantic segmentation purposes has been limited, particularly in the context of medical imaging. Here we compare the performance of state-of-the-art deep learning image segmentation methods when trained on hyperspectral images, RGB images, hyperspectral pixels (minus spatial context), and RGB pixels (disregarding spatial context). To achieve this, we employ the recently released Oral and Dental Spectral Image Database (ODSI-DB), which consists of 215 manually segmented dental reflectance spectral images with 35 different classes across 30 human subjects. The recent development of snapshot HSI cameras has made real-time clinical HSI a distinct possibility, though successful application requires a comprehensive understanding of the additional information HSI offers. Our work highlights the relative importance of spectral resolution, spectral range, and spatial information to both guide the development of HSI cameras and inform future clinical HSI applications.

Motion Correction and Volumetric Reconstruction for Fetal Functional Magnetic Resonance Imaging Data

Feb 11, 2022

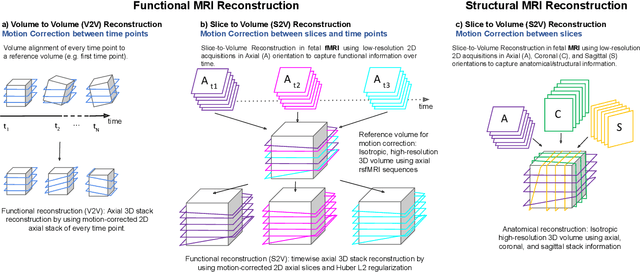

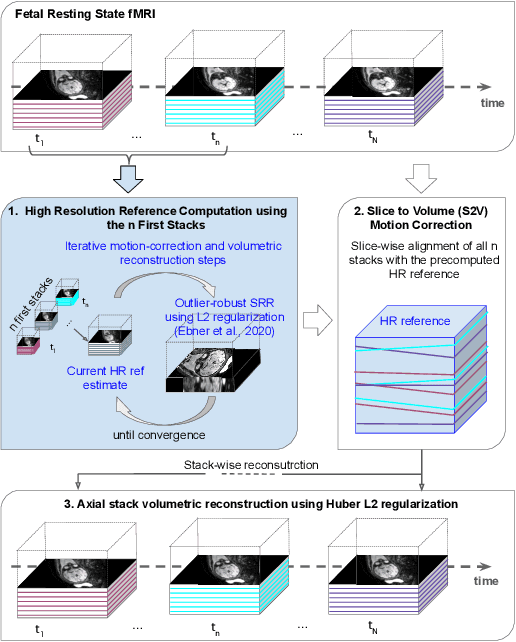

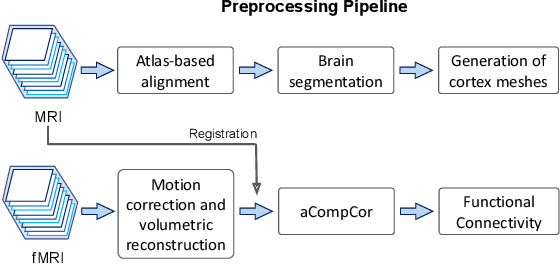

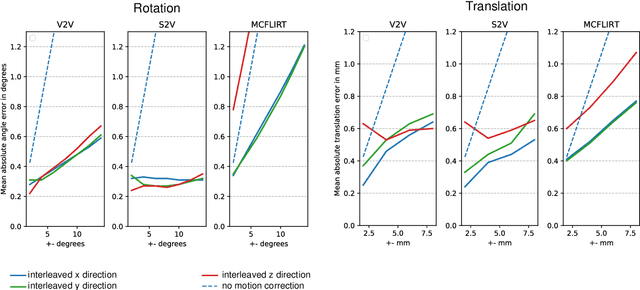

Abstract:Motion correction is an essential preprocessing step in functional Magnetic Resonance Imaging (fMRI) of the fetal brain with the aim to remove artifacts caused by fetal movement and maternal breathing and consequently to suppress erroneous signal correlations. Current motion correction approaches for fetal fMRI choose a single 3D volume from a specific acquisition timepoint with least motion artefacts as reference volume, and perform interpolation for the reconstruction of the motion corrected time series. The results can suffer, if no low-motion frame is available, and if reconstruction does not exploit any assumptions about the continuity of the fMRI signal. Here, we propose a novel framework, which estimates a high-resolution reference volume by using outlier-robust motion correction, and by utilizing Huber L2 regularization for intra-stack volumetric reconstruction of the motion-corrected fetal brain fMRI. We performed an extensive parameter study to investigate the effectiveness of motion estimation and present in this work benchmark metrics to quantify the effect of motion correction and regularised volumetric reconstruction approaches on functional connectivity computations. We demonstrate the proposed framework's ability to improve functional connectivity estimates, reproducibility and signal interpretability, which is clinically highly desirable for the establishment of prognostic noninvasive imaging biomarkers. The motion correction and volumetric reconstruction framework is made available as an open-source package of NiftyMIC.

Deep Learning Approach for Hyperspectral Image Demosaicking, Spectral Correction and High-resolution RGB Reconstruction

Sep 03, 2021

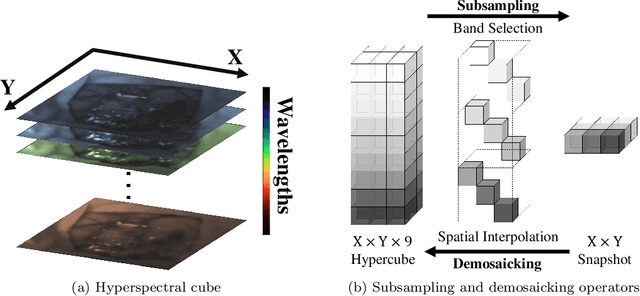

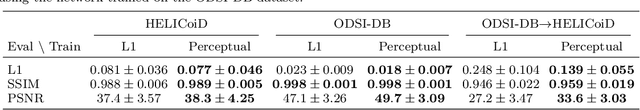

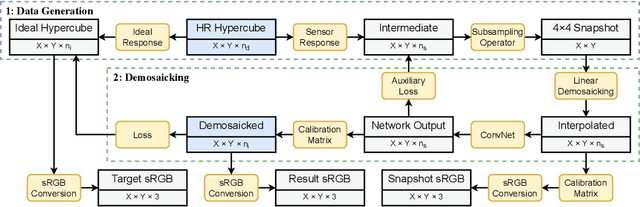

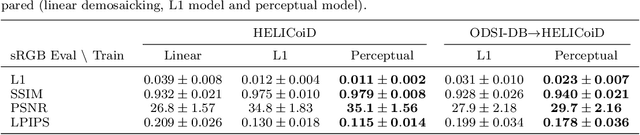

Abstract:Hyperspectral imaging is one of the most promising techniques for intraoperative tissue characterisation. Snapshot mosaic cameras, which can capture hyperspectral data in a single exposure, have the potential to make a real-time hyperspectral imaging system for surgical decision-making possible. However, optimal exploitation of the captured data requires solving an ill-posed demosaicking problem and applying additional spectral corrections to recover spatial and spectral information of the image. In this work, we propose a deep learning-based image demosaicking algorithm for snapshot hyperspectral images using supervised learning methods. Due to the lack of publicly available medical images acquired with snapshot mosaic cameras, a synthetic image generation approach is proposed to simulate snapshot images from existing medical image datasets captured by high-resolution, but slow, hyperspectral imaging devices. Image reconstruction is achieved using convolutional neural networks for hyperspectral image super-resolution, followed by cross-talk and leakage correction using a sensor-specific calibration matrix. The resulting demosaicked images are evaluated both quantitatively and qualitatively, showing clear improvements in image quality compared to a baseline demosaicking method using linear interpolation. Moreover, the fast processing time of~45\,ms of our algorithm to obtain super-resolved RGB or oxygenation saturation maps per image frame for a state-of-the-art snapshot mosaic camera demonstrates the potential for its seamless integration into real-time surgical hyperspectral imaging applications.

Distributionally Robust Segmentation of Abnormal Fetal Brain 3D MRI

Aug 09, 2021

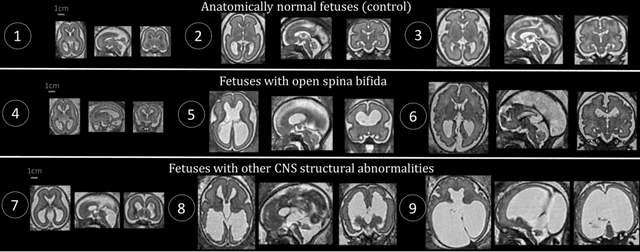

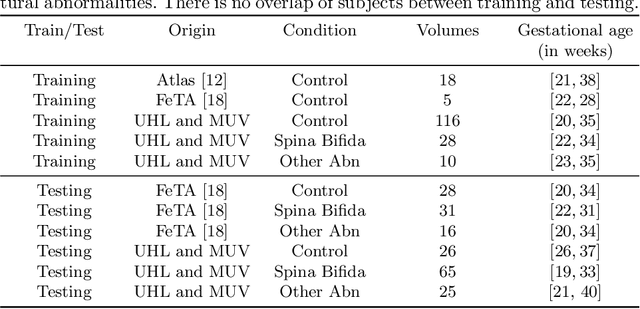

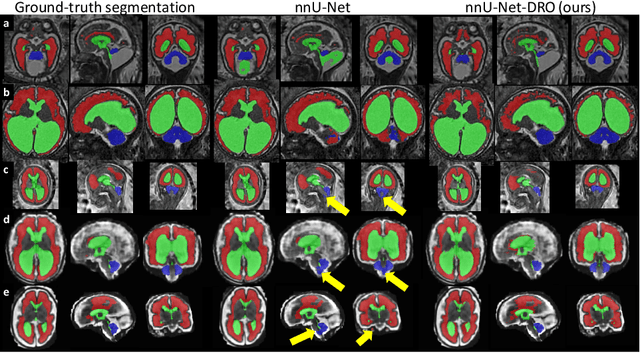

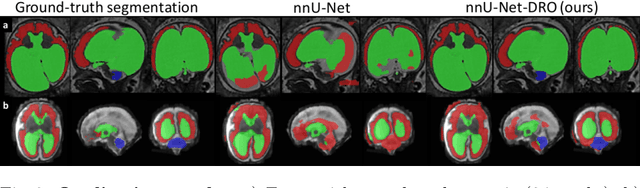

Abstract:The performance of deep neural networks typically increases with the number of training images. However, not all images have the same importance towards improved performance and robustness. In fetal brain MRI, abnormalities exacerbate the variability of the developing brain anatomy compared to non-pathological cases. A small number of abnormal cases, as is typically available in clinical datasets used for training, are unlikely to fairly represent the rich variability of abnormal developing brains. This leads machine learning systems trained by maximizing the average performance to be biased toward non-pathological cases. This problem was recently referred to as hidden stratification. To be suited for clinical use, automatic segmentation methods need to reliably achieve high-quality segmentation outcomes also for pathological cases. In this paper, we show that the state-of-the-art deep learning pipeline nnU-Net has difficulties to generalize to unseen abnormal cases. To mitigate this problem, we propose to train a deep neural network to minimize a percentile of the distribution of per-volume loss over the dataset. We show that this can be achieved by using Distributionally Robust Optimization (DRO). DRO automatically reweights the training samples with lower performance, encouraging nnU-Net to perform more consistently on all cases. We validated our approach using a dataset of 368 fetal brain T2w MRIs, including 124 MRIs of open spina bifida cases and 51 MRIs of cases with other severe abnormalities of brain development.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge