Matthew K. Hong

Personagram: Bridging Personas and Product Design for Creative Ideation with Multimodal LLMs

Feb 05, 2026

Abstract: Product designers often begin their design process with handcrafted personas. While personas are intended to ground design decisions in consumer preferences, they often fall short in practice by remaining abstract, expensive to produce, and difficult to translate into actionable design features. As a result, personas risk serving as static reference points rather than tools that actively shape design outcomes. To address these challenges, we built Personagram, an interactive system powered by multimodal large language models (MLLMs) that helps designers explore detailed census-based personas, extract product features inferred from persona attributes, and recombine them for specific customer segments. In a study with 12 professional designers, we show that Personagram facilitates more actionable ideation workflows by structuring multimodal thinking from persona attributes to product design features, achieving higher engagement with personas, perceived transparency, and satisfaction compared to a chat-based baseline. We discuss implications of integrating AI-generated personas into product design workflows.

Inkspire: Supporting Design Exploration with Generative AI through Analogical Sketching

Jan 30, 2025

Abstract: With recent advancements in the capabilities of Text-to-Image (T2I) AI models, product designers have begun experimenting with them in their work. However, T2I models struggle to interpret abstract language, and the current user experience of T2I tools can induce design fixation rather than a more iterative, exploratory process. To address these challenges, we developed Inkspire, a sketch-driven tool that supports designers in prototyping product design concepts with analogical inspirations and a complete sketch-to-design-to-sketch feedback loop. To inform the design of Inkspire, we conducted an exchange session with designers and distilled design goals for improving T2I interactions. In a within-subjects study comparing Inkspire to ControlNet, we found that Inkspire gave designers more inspiration and supported broader exploration of design ideas. It also improved aspects of the co-creative process by helping designers grasp the current state of the AI and guide it toward novel design intentions.

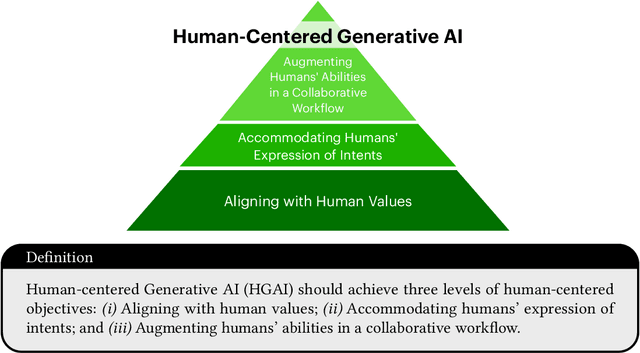

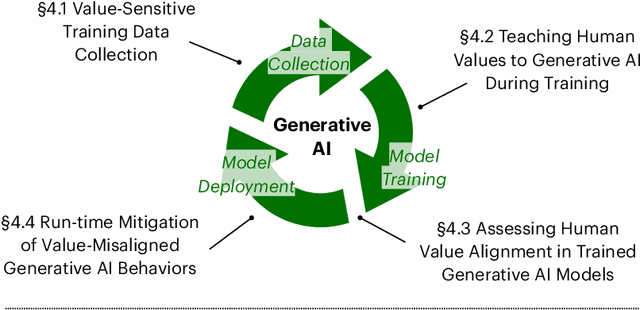

Next Steps for Human-Centered Generative AI: A Technical Perspective

Jun 27, 2023

Abstract: Through iterative, cross-disciplinary discussions, we define and propose next steps for Human-centered Generative AI (HGAI) from a technical perspective. We contribute a roadmap that lays out future directions of Generative AI spanning three levels: aligning with human values; accommodating humans' expression of intents; and augmenting humans' abilities in a collaborative workflow. This roadmap is intended to draw interdisciplinary research teams to a comprehensive list of emergent ideas in HGAI, helping them identify topics of interest while maintaining a coherent big picture of the future work landscape.